Official statement

Other statements from this video

- Should you mark up loyalty programs to improve your rich results?

- Why is Google dropping 7 structured data types, and what should you do now?

- Should you keep structured data if Google stops displaying some of it?

- 4:56 Why won't Google commit to the future of AI Overviews?

- 6:24 Why doesn't Google index all your pages, and how can you anticipate it?

- 8:48 Can you stop Google from ranking you for certain keywords?

- 9:56 How long does Google really take to recognize SEO changes?

- 12:00 How does Google really discover your site's URLs?

- 12:00 Do you really need to count the exact number of URLs on your site?

- 15:15 Do you really need to submit your sitemap every day?

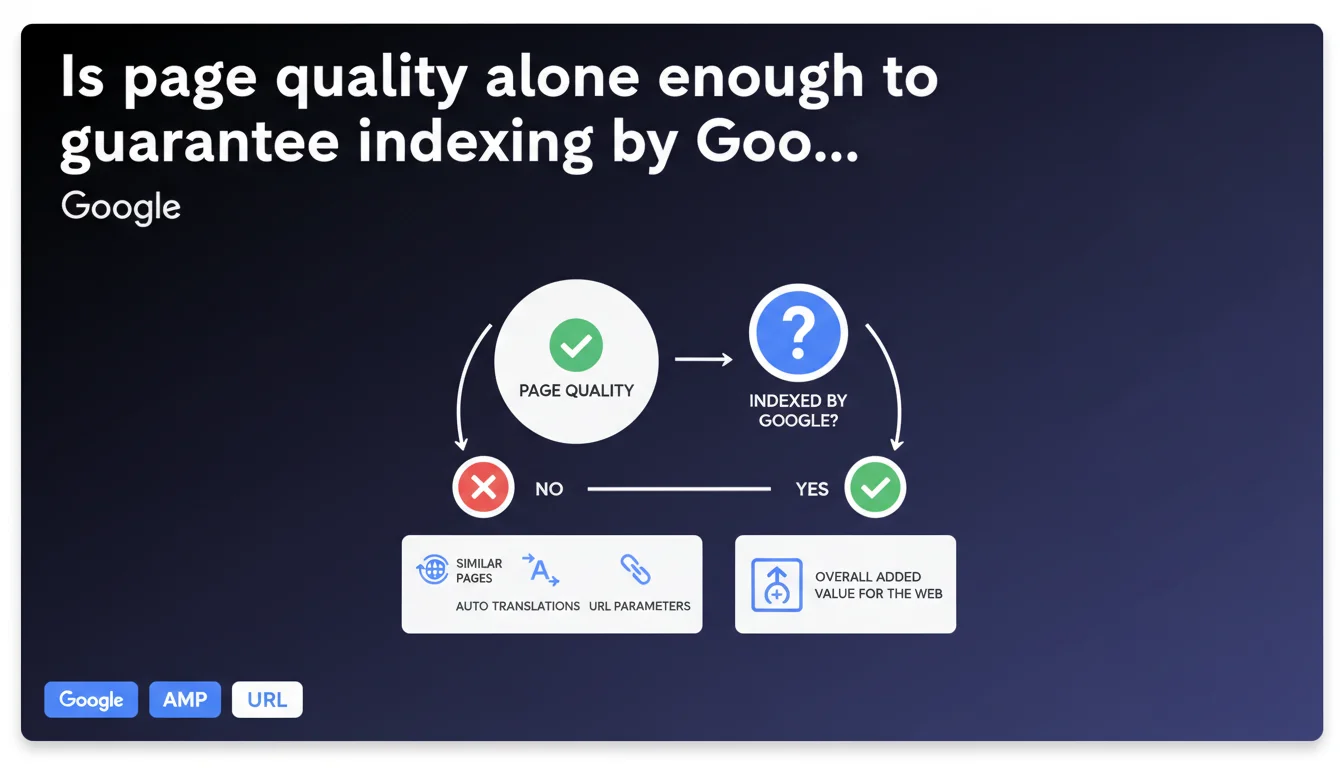

Google states that page quality is not the sole indexing criterion. A technically flawless, well-written page can still go unindexed if it brings no unique value to the web. Automatic translations, duplicate content, and near-identical URL variants are the first casualties. Indexing depends on a balance between a page's individual quality and its usefulness to the index as a whole.

What you need to understand

What does Google mean by "overall added value for the web"?

Google doesn't evaluate a page in isolation. It analyzes what the page contributes compared with what already exists in the index. A page can be well written, well structured, and fast, but if it repeats what ten other pages already say, its indexing is no longer a given.

Concretely, if your e-commerce site generates thousands of product pages with nearly identical descriptions, or if you automatically translate content without local adaptation, you're entering the risk zone. Google doesn't want to index 50 versions of the same product page with different URL parameters.
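The Python sketch below shows how quickly parameter variants pile up. The shop URL and the three parameters (`sort`, `per_page`, `view`) are hypothetical. It shows that three presentation-only options already give a single listing 4 × 3 × 3 = 36 distinct URLs. That count comes before session IDs or tracking tags, which can add an unbounded number more.

```python
from itertools import product
from urllib.parse import urlencode

# Hypothetical presentation-only parameters: none of them changes which
# products the listing shows, only how they are ordered or displayed.
SORTS = [None, "price_asc", "price_desc", "newest"]
PER_PAGE = [None, "24", "48"]
VIEWS = [None, "grid", "list"]


def listing_variants(base_url: str) -> list[str]:
    """Enumerate every crawlable URL a single listing can expose."""
    urls = []
    for sort, per_page, view in product(SORTS, PER_PAGE, VIEWS):
        # None means "parameter absent", i.e. the site default.
        params = {
            name: value
            for name, value in (("sort", sort), ("per_page", per_page), ("view", view))
            if value is not None
        }
        urls.append(f"{base_url}?{urlencode(params)}" if params else base_url)
    return urls


variants = listing_variants("https://shop.example/mugs")
print(len(variants))  # → 36 URLs for one page of content
```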

Why can a "good" page be ignored?

Because indexing is costly. Every indexed URL consumes storage, crawl capacity, and processing power. Google therefore makes trade-offs: a unique page of average quality will often be preferred over an excellent page that is the tenth of its kind.

Automatically translated content that hasn't been reworked, pages with redundant URL parameters, and syndicated content without added value all weigh in the balance. Intrinsic quality does not compensate for a lack of differentiation.

What signals does Google use to measure this "unique value"?

Here, Google remains vague. Plausible candidates include semantic similarity analysis, duplication patterns, and user behavior, but there is no way to know for certain which algorithms make the final call. What is clear: quality alone is no longer enough to clear the indexing threshold.

- Indexing relies on a balance between quality and uniqueness

- A technically perfect page can remain unindexed if it duplicates existing content

- Automatic translations, parameterized URLs, and content variations are hit first

- Google prioritizes overall index efficiency, not individual page performance

SEO Expert opinion

Is this statement consistent with field observations?

Yes, and it is a welcome confirmation. For years, we have seen clean, well-built sites struggle to get all of their URLs indexed. Tight crawl budgets, orphaned pages, and URLs stuck in Search Console as "Discovered – currently not indexed" all make more sense through this lens.

But, and this is crucial, Google provides no numerical indicator for this "overall added value." We are flying blind: there is no official metric that tells you whether a page is perceived as sufficiently unique. [To verify] by testing, observing, and comparing indexation rates across page types.

What nuances should be added to this statement?

First, this rule doesn't apply uniformly to every type of site. A news outlet publishing 50 articles a day on different topics won't face the same constraints as an e-commerce site with 10,000 nearly identical product pages.

Second, be careful not to confuse "not indexed" with "poorly ranked." A page can be indexed but invisible at position 150, or conversely excellent but outside the index. These are two distinct issues, even if Google sometimes mixes them in its communications.

In what cases can this rule work in your favor?

If you produce genuinely differentiated content (original angles, exclusive data, field expertise), this statement protects you. By Google's own logic, it should arbitrate in your favor against competitors who recycle generic content.

It's also good news for sites that invest in editorial depth rather than volume. Better 100 solid, unique pages than 1,000 automatically generated ones. Google tells you this openly.

Practical impact and recommendations

What should you concretely do to maximize your indexation chances?

First priority: audit internal duplication. Identify pages that are too similar, then merge them or consolidate them with canonical tags. Don't let Google arbitrate for you; act first.
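One way to start that audit is to compare pages by how many word 3-grams (shingles) they share, measured with Jaccard similarity. The Python sketch below does this under stated assumptions. It is a generic near-duplicate heuristic, not the method Google uses, and the 0.5 threshold, sample URLs, and texts are all hypothetical. Two caveats for real pages:

- Extract only the main content first, because template text shared by every page inflates the scores.
- On large sites, replace the pairwise loop with MinHash or a similar technique, since comparing every pair grows quadratically.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set:
    """Set of k-word sequences (shingles) after lowercasing and tokenizing."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Share of shingles two pages have in common (1.0 = identical sets)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.5) -> list:
    """Return (url_a, url_b, score) pairs whose similarity reaches the threshold."""
    sigs = {url: shingles(text) for url, text in pages.items()}
    pairs = []
    for (url_a, sig_a), (url_b, sig_b) in combinations(sigs.items(), 2):
        score = jaccard(sig_a, sig_b)
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    return sorted(pairs, key=lambda pair: pair[2], reverse=True)


# Hypothetical pages: two product variants differing by one word, one guide.
pages = {
    "/product/blue-mug": "Ceramic mug, 350 ml, dishwasher safe. Colour: blue. Ships in 48 hours.",
    "/product/red-mug": "Ceramic mug, 350 ml, dishwasher safe. Colour: red. Ships in 48 hours.",
    "/guide/mug-care": "How to keep glazed ceramic mugs from cracking: avoid thermal shock.",
}
print(near_duplicates(pages))
# → [('/product/blue-mug', '/product/red-mug', 0.54)]
```

Flagged pairs are candidates for merging or for a canonical tag. The guide page shares no shingles with the product pages and is left alone.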

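Next, review your parameterized URLs. If you generate endless variations for technical reasons (filters, sort orders, session IDs), mark them with canonical or noindex directives so they don't compete in the index. Keep in mind that Google still has to crawl a URL to see either directive. To actually stop wasting crawl budget on pages with no differentiated value, stop linking internally to purely technical variants or block them in robots.txt.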
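Below is a minimal Python sketch of that clean-up. It assumes you have a list of crawled URLs (for example from a crawler or server logs) and have already decided which parameters never change page content. The parameter list, domain, and helper names are hypothetical. The sketch groups variants under one canonical URL and builds the `<link rel="canonical">` element each variant could carry. Whether a given parameter is safe to drop is a per-site decision: here `color` is kept because it changes which products appear.

```python
import html
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical: parameters assumed NOT to change page content on this site.
# Audit your own parameters before reusing a list like this.
NON_CONTENT_PARAMS = {
    "sort", "order", "view", "per_page", "sessionid",
    "utm_source", "utm_medium", "utm_campaign",
}


def canonical_url(url: str) -> str:
    """Drop non-content parameters and sort the rest so variants collapse."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in NON_CONTENT_PARAMS
    )
    # The fragment (#...) is never sent to the server, so it is dropped too.
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


def group_variants(urls: list[str]) -> dict[str, list[str]]:
    """Map each canonical URL to the crawled variants that collapse onto it."""
    groups: dict[str, list[str]] = {}
    for url in urls:
        groups.setdefault(canonical_url(url), []).append(url)
    return groups


def canonical_link_tag(url: str) -> str:
    """HTML <link rel="canonical"> element pointing to the consolidated URL."""
    return f'<link rel="canonical" href="{html.escape(canonical_url(url), quote=True)}">'


crawled = [
    "https://shop.example/shoes",
    "https://shop.example/shoes?sort=price&sessionid=abc123",
    "https://shop.example/shoes?view=grid",
    "https://shop.example/shoes?color=red&sort=newest",
]
for canonical, variants in group_variants(crawled).items():
    print(canonical, len(variants))
# → https://shop.example/shoes 3
# → https://shop.example/shoes?color=red 1
```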

For translations, stop publishing unsupervised machine translation. A human translation, even a partial one, signals a real localization effort to Google. That is what separates an indexable page from a linguistic duplicate.

What mistakes must be absolutely avoided?

Don't multiply "weak" pages hoping to cast a wide net. Google is explicit: quantity without uniqueness works against you. It is better to deindex low-value pages deliberately than to leave them eating into your crawl budget.

Also avoid confusing technical quality with editorial value. A fast, properly marked-up, mobile-friendly page is still pointless if it repeats what 20 other pages already say. A solid technical stack does not make up for a lack of differentiation.

- Audit and merge duplicate internal content

- Clean up parameterized URLs with canonical or noindex

- Manually rework automatic translations

- Prioritize editorial depth over page multiplication

- Monitor indexation rates by page type in Search Console

- Deliberately deindex low-value pages

How do you steer this strategy over time?

Track your indexation rates by site segment. If your product pages plateau at 60% indexation while your blog articles reach 95%, that points strongly to duplication or weak differentiation.
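Here is a small Python sketch of that tracking. It assumes you already have one row per URL with an indexed or not-indexed flag, for example compiled from Search Console's page indexing report or URL Inspection results; no particular export format is assumed. Two choices in it are hypothetical and should be adapted to your own URL structure: segmenting by the first path component, and the 20-point gap used to flag a lagging segment. The rows are illustrative, not real data.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def segment_of(url: str) -> str:
    """Hypothetical rule: the first path segment (/product/, /blog/...) is the page type."""
    path = urlsplit(url).path.strip("/")
    return path.split("/")[0] if path else "(home)"


def indexation_rates(rows) -> dict[str, float]:
    """rows: iterable of (url, is_indexed) pairs; returns the indexed share per segment."""
    totals: dict[str, int] = defaultdict(int)
    indexed: dict[str, int] = defaultdict(int)
    for url, is_indexed in rows:
        segment = segment_of(url)
        totals[segment] += 1
        indexed[segment] += int(bool(is_indexed))
    return {segment: indexed[segment] / totals[segment] for segment in totals}


def lagging_segments(rates: dict[str, float], gap: float = 0.2) -> list[str]:
    """Segments whose rate trails the best segment by more than `gap` (arbitrary cutoff)."""
    best = max(rates.values())
    return sorted(segment for segment, rate in rates.items() if rate < best - gap)


rows = [
    ("https://shop.example/product/a", True),
    ("https://shop.example/product/b", False),
    ("https://shop.example/product/c", True),
    ("https://shop.example/product/d", False),
    ("https://shop.example/blog/x", True),
    ("https://shop.example/blog/y", True),
]
rates = indexation_rates(rows)
print(rates)                    # → {'product': 0.5, 'blog': 1.0}
print(lagging_segments(rates))  # → ['product']
```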

Test editorial changes on a sample. Enrich 50 product pages with unique content, wait a few weeks, then compare their indexation rate with that of similar pages you left untouched. It's the only way to measure the real impact of "unique value" in your context.
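If the enriched pages are compared with a similar set of untouched pages, a two-proportion z-test is one standard way to check whether the gap is larger than chance. Below is a minimal Python sketch. Its counts are illustrative, not real data: 41 of 50 enriched pages indexed versus 30 of 50 control pages. Two cautions apply:

- With samples this small, the normal approximation is rough.
- A significant result does not rule out other causes, such as crawl changes or internal linking.

Treat the output as one input, not proof.

```python
from math import erf, sqrt


def compare_indexation(indexed_test: int, n_test: int,
                       indexed_control: int, n_control: int):
    """Two-proportion z-test: difference in indexation rate, z statistic, two-sided p-value."""
    p_test = indexed_test / n_test
    p_control = indexed_control / n_control
    pooled = (indexed_test + indexed_control) / (n_test + n_control)
    se = sqrt(pooled * (1 - pooled) * (1 / n_test + 1 / n_control))
    diff = p_test - p_control
    if se == 0:  # both groups fully indexed (or fully unindexed): nothing to test
        return diff, 0.0, 1.0
    z = diff / se
    # Standard normal CDF via erf; two-sided p-value = 2 * (1 - Phi(|z|)).
    phi = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return diff, z, 2 * (1 - phi)


# Illustrative numbers only: 50 enriched pages vs 50 untouched pages of the same type.
diff, z, p = compare_indexation(41, 50, 30, 50)
print(f"difference={diff:.2f}  z={z:.2f}  p={p:.3f}")
```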

❓ Frequently Asked Questions

Can a very high-quality page really go unindexed?

How can I tell whether Google perceives my pages as unique enough?

Are automatic translations systematically excluded from the index?

Should you deliberately deindex low-value pages?

Is crawl budget the only factor limiting indexing?

Other SEO insights extracted from this same Google Search Central video · published on 26/06/2025
