Official statement

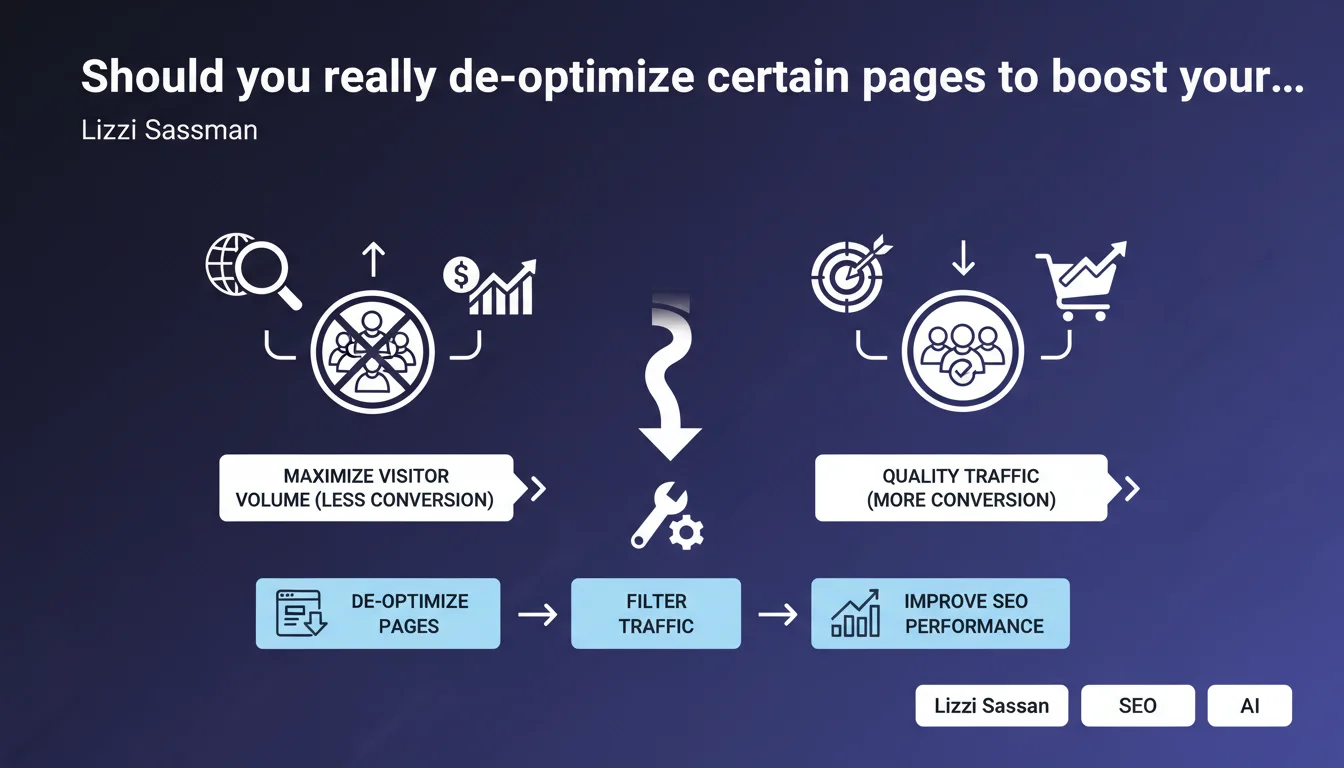

Google states that it may be necessary to intentionally de-optimize certain pages to avoid attracting unqualified traffic. The goal: prioritize conversion over raw visitor volume. A strategic shift that challenges the obsession with traffic metrics in our dashboards.

What you need to understand

What exactly does Google mean by "de-optimize"?

The phrasing may sound counterintuitive, but it refers to a deliberate process of restricting SEO targeting. In concrete terms, it involves reviewing a page's semantic architecture so it ranks solely on high-conversion-potential queries.

This can involve removing generic keywords, tightening the lexical field, or even modifying title tags to exclude overly broad search intents. The idea is to consciously sacrifice high-volume traffic in favor of visitors better aligned with the page's business objective.

Why is Google now communicating about this concept?

Because the SEO industry remains obsessed with vanity metrics — sessions, impressions, organic traffic growth. Yet Google benefits when websites deliver a coherent user experience, where visitors actually find what they're looking for.

Unqualified traffic leads to pogo-sticking and high bounce rates, both of which degrade behavioral signals. By encouraging SEOs to better qualify their audiences, Google indirectly improves the overall quality of its index.

In what cases does this principle apply in practice?

Typically on sites whose end goal is a transaction or a lead: e-commerce, SaaS, lead-generation sites. A premium product page that attracts traffic from "free" or "cheap" searches gains nothing from those visits and may actually lower the overall conversion rate.

The same logic applies to informational content with a conversion funnel behind it: 1,000 visitors converting at 5% yield 50 conversions, whereas 10,000 visitors converting at 0.2% yield only 20.
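The arithmetic behind this comparison can be checked in a few lines. This is only an illustrative sketch: the function name `conversions` is hypothetical, and the figures are the ones used in the example above.

```python
def conversions(visitors: int, conversion_rate: float) -> float:
    """Expected number of conversions for a given traffic volume and rate."""
    return visitors * conversion_rate


# Figures taken from the example in the text.
qualified = conversions(1_000, 0.05)       # tightly targeted page
unqualified = conversions(10_000, 0.002)   # broadly targeted page

print(f"Qualified traffic:   {qualified:.0f} conversions")
print(f"Unqualified traffic: {unqualified:.0f} conversions")
```

With ten times fewer visitors, the tightly targeted page still produces more conversions.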

- Traffic volume is no longer a relevant KPI in itself — traffic quality is what matters

- De-optimization means tightening semantic targeting to exclude misaligned intents

- Google values this approach because it improves behavioral signals and user experience

- This strategy applies primarily to transactional sites or those with high conversion stakes

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Absolutely. Over the past 2-3 years, we've seen sites intentionally lose rankings on generic queries to perform better on their core target. Google's algorithms — particularly the Helpful Content updates — increasingly prioritize contextual relevance over simple lexical matching.

Let's be honest: many SEOs still measure success by raw traffic. But more mature clients understand that 10,000 visitors who don't convert are worthless. This official statement validates a practice that the best practitioners have already been applying for some time.

What are the limitations of this approach?

The main risk: over-restricting targeting and missing opportunities. Some seemingly distant queries can generate unexpected conversions — you need reliable analytics data before making that call.

Another issue: this logic assumes you already have traffic to filter. For a newly launched or early-stage site, de-optimizing prematurely can slow the acquisition of trust signals and natural backlinks. Timing is crucial.

How do you balance volume versus quality in an overall SEO strategy?

By segmenting your site by business objective. Some pages can legitimately target volume — top-of-funnel informational content, for example — while others must be ultra-targeted to maximize conversion.

The mistake would be applying the same optimization logic everywhere. A blog can remain generalist to attract cold audiences, while your product or service pages must be surgically precise in their semantic targeting.

Practical impact and recommendations

How do you identify pages that need de-optimization?

Start by cross-referencing your Search Console and Analytics data. Find pages generating lots of impressions and clicks but anemic conversion rates or minimal engagement.

Next, analyze which queries are driving this unqualified traffic. If you sell premium training and 40% of your traffic comes from "free training," you have a semantic misalignment problem.
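As a rough sketch, the share of clicks coming from misaligned queries can be estimated from a query export. Everything here is an assumption for illustration: the sample rows, the `MISALIGNED_TERMS` set and the `misaligned_share` helper are hypothetical, and a real export would need to be loaded from your own Search Console data.

```python
# Hypothetical query export for one page: (query, clicks) rows.
rows = [
    ("free training", 400),
    ("premium training course", 250),
    ("training certification price", 200),
    ("free online training pdf", 150),
]

# Assumed terms that signal an intent misaligned with a paid offer.
MISALIGNED_TERMS = {"free", "cheap"}


def misaligned_share(rows, terms):
    """Return the fraction of clicks from queries containing any flagged term."""
    total = sum(clicks for _, clicks in rows)
    if total == 0:
        return 0.0
    flagged = sum(
        clicks
        for query, clicks in rows
        if terms & set(query.lower().split())  # whole-word match only
    )
    return flagged / total


share = misaligned_share(rows, MISALIGNED_TERMS)
print(f"{share:.0%} of clicks come from misaligned queries")
```

Matching on whole words is a deliberate choice, so that a query such as "freelance training" is not flagged by the term "free".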

- List high-traffic pages in Search Console, then check their engagement in Analytics (time on page, pages per session)

- Identify in Analytics pages with bounce rate >70% and conversion rate <1%

- Review the queries behind each page in the Search Console "Performance" report to spot misaligned intents

- Check whether another page on your site is better suited to these unqualified queries
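The checklist above can be sketched as a simple filter over merged Search Console and Analytics data. The `PageStats` structure, the sample URLs and the `min_clicks` threshold are assumptions made for this example. Only the 70% bounce rate and 1% conversion rate thresholds come from the list.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    clicks: int             # organic clicks, from Search Console
    bounce_rate: float      # from Analytics, as a fraction (0.0 to 1.0)
    conversion_rate: float  # from Analytics, as a fraction (0.0 to 1.0)


def deoptimization_candidates(pages, min_clicks=500, max_bounce=0.70,
                              min_conversion=0.01):
    """Return pages with substantial traffic, high bounce rate, low conversion."""
    return [
        page for page in pages
        if page.clicks >= min_clicks          # assumed "high traffic" cutoff
        and page.bounce_rate > max_bounce
        and page.conversion_rate < min_conversion
    ]


# Hypothetical merged data, for illustration only.
pages = [
    PageStats("/premium-training", 4200, 0.82, 0.004),
    PageStats("/enterprise-plan", 900, 0.45, 0.035),
    PageStats("/blog/what-is-seo", 300, 0.78, 0.002),
]

candidates = deoptimization_candidates(pages)
for page in candidates:
    print(page.url)
```

A page flagged this way is only a candidate: its source queries still need to be reviewed by hand before any change is made.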

What concrete actions tighten a page's targeting?

First step: rework your title tag and meta description to exclude generic terms. If your title contains "free" when your offer is paid, you mechanically attract unsuitable traffic.
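A title audit of this kind can be sketched as follows. The `GENERIC_TERMS` tuple and the `generic_terms_in_title` helper are hypothetical, and the list of terms to flag depends entirely on your own offer.

```python
import re

# Assumed terms that would attract an audience misaligned with a paid offer.
GENERIC_TERMS = ("free", "cheap")


def generic_terms_in_title(title, terms=GENERIC_TERMS):
    """Return the flagged terms that appear as whole words in a title."""
    words = set(re.findall(r"[a-z0-9]+", title.lower()))
    return [term for term in terms if term in words]


# Hypothetical titles, for illustration only.
print(generic_terms_in_title("Free SEO Training | Acme Academy"))
print(generic_terms_in_title("Premium SEO Training for Professionals"))
```

The same check can be run against meta descriptions by passing them in place of the title.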

Then adjust the lexical field of your content. Remove sections answering informational intents if your page is transactional. Instead reinforce specialization signals — pricing, technical specifications, detailed comparisons.

Final lever: use a robots meta tag (noindex) to deindex pages that attract traffic with no business value, or a canonical tag to consolidate them into a better-suited page. Radical, but sometimes necessary.
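For reference, the two tags involved look like this. The sketch below simply builds them as strings; the helper names and the example URL are hypothetical. Note that a canonical tag is treated by search engines as a hint rather than a directive, so consolidation is not guaranteed.

```python
from html import escape


def noindex_tag() -> str:
    """Robots meta tag asking search engines not to index the page."""
    return '<meta name="robots" content="noindex">'


def canonical_tag(target_url: str) -> str:
    """Canonical link tag pointing to the preferred version of the page."""
    return f'<link rel="canonical" href="{escape(target_url, quote=True)}">'


# Hypothetical target URL, for illustration only.
print(noindex_tag())
print(canonical_tag("https://example.com/premium-training"))
```

Both tags belong in the `<head>` of the page being deindexed or consolidated.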

- Rewrite titles to eliminate overly generic or misleading terms

- Tighten the semantic field of content by removing off-topic digressions

- Add precise qualifiers ("premium," "professional," "enterprise") to filter your audience

- Use Schema.org structured data to clearly signal the offer type (paid, free, etc.)

- Consider deindexing high-volume pages with no business return
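As one possible illustration of the structured data point above, a paid offer can be described with Schema.org `Product` and `Offer` markup in JSON-LD. The helper name, product name and price below are hypothetical, and whether this markup actually filters out misaligned visitors is an assumption the source does not confirm.

```python
import json


def product_offer_jsonld(name: str, price: float, currency: str) -> str:
    """Build a JSON-LD string describing a paid product offer."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",     # price as a decimal string
            "priceCurrency": currency,   # ISO 4217 currency code
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical product, for illustration only.
markup = product_offer_jsonld("Premium SEO Training", 499, "EUR")
print(markup)
```

The resulting string would be placed inside a `<script type="application/ld+json">` element on the page.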

What risks should you monitor during implementation?

The main danger: losing rankings on queries that actually do convert, because you misinterpreted data or over-restricted targeting. Always test on a sample of pages before scaling.
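A test on a sample of pages can be evaluated with a simple before/after comparison, sketched below. The figures, the function names and the decision rule in `safe_to_scale` are assumptions for illustration. A real evaluation should also account for seasonality and compare against pages left unchanged.

```python
def conversion_rate(conversions: int, sessions: int) -> float:
    """Conversions divided by sessions, or 0.0 when there is no traffic."""
    return conversions / sessions if sessions else 0.0


def compare_periods(before: dict, after: dict) -> dict:
    """Summarize how traffic and conversions changed on the test pages."""
    return {
        "sessions_change": after["sessions"] - before["sessions"],
        "conversions_change": after["conversions"] - before["conversions"],
        "rate_before": conversion_rate(before["conversions"], before["sessions"]),
        "rate_after": conversion_rate(after["conversions"], after["sessions"]),
    }


def safe_to_scale(result: dict) -> bool:
    """Assumed rule: roll out only if absolute conversions did not drop."""
    return result["conversions_change"] >= 0


# Hypothetical totals for the sample pages, before and after de-optimization.
before = {"sessions": 10_000, "conversions": 20}
after = {"sessions": 4_000, "conversions": 22}

result = compare_periods(before, after)
print(result)
print("Safe to scale:", safe_to_scale(result))
```

Judging the test on absolute conversions rather than on conversion rate alone guards against the case where the rate improves only because traffic collapsed.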

Another trap: creating gaps in your internal linking. If you de-optimize a page that served as a hub, ensure you properly redistribute link juice and navigation paths.

Strategic de-optimization marks a turning point in SEO maturity: we shift from a volume-based logic to a traffic ROI logic. This requires fine-grained analytics mastery, a deep understanding of search intents, and the ability to weigh short-term against long-term goals.

This type of optimization requires specialized expertise and rigorous analytics monitoring to avoid costly mistakes. If you lack internal resources or experience with this kind of trade-off, partnering with a specialized SEO agency can provide you with an outside perspective and advanced analytical tools to safely navigate this strategic transition.

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 03/11/2025
