Official statement

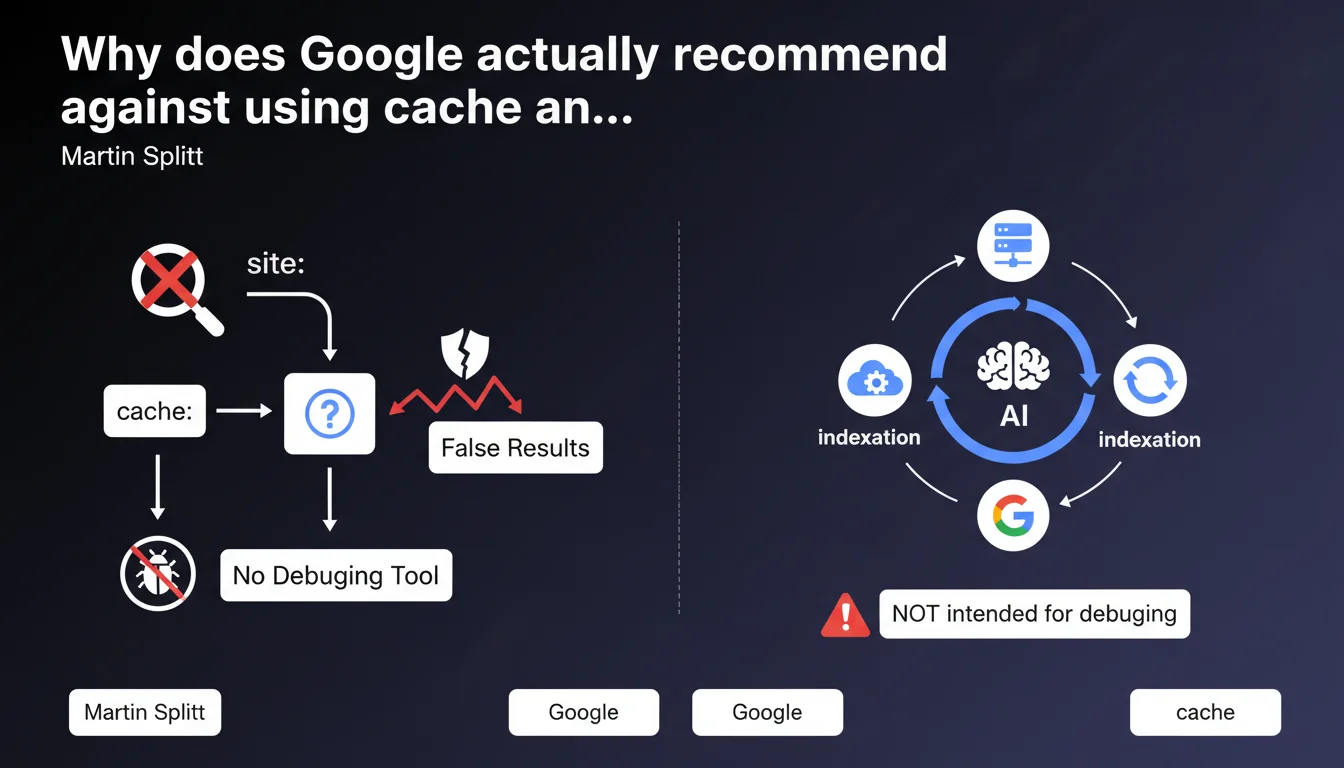

Google states that cache and the site: operator are not designed to diagnose indexation problems and can provide misleading results. Martin Splitt recommends using official tools like Search Console instead to get reliable data on actual indexation status.

What you need to understand

Why do these tools give misleading results?

The Google cache and site: operator do not reflect the current state of the main index. Cache displays an archived version that may be days or even weeks old and doesn't necessarily correspond to the version used for ranking.

The site: operator queries a secondary index optimized for speed, not accuracy. It can show deindexed pages or miss pages that are actually present in the ranking index. Google has confirmed this multiple times: the operator returns an approximation, not a complete list.

What are the concrete risks of using these methods?

A site can appear indexed via site: when it is not actually in the main index. Conversely, pages missing from site: results can rank perfectly well. This confusion leads to wrong decisions: deliberately deindexing healthy pages, unjustified panic, and diagnoses that are completely off base.

Technical teams waste valuable time chasing problems that don't exist while overlooking the ones that do.

What tools should you use instead?

Google Search Console remains the reference for checking indexation. The Page indexing report (formerly called Coverage), the URL inspection tool, and server logs cross-referenced with GSC provide a reliable picture. Period.

- Cache and site: query secondary indexes, not the ranking index

- These tools can display deindexed pages or miss indexed pages

- Search Console provides official data on actual indexation status

- Server logs + GSC offer the most reliable diagnosis

- Using cache/site: leads to incorrect SEO decisions

SEO Expert opinion

Does this recommendation contradict established field practices?

Let's be honest: the majority of SEOs still use site: as a quick first check. But just because it's common doesn't mean it's reliable. Field observations confirm that site: regularly misses pages or displays phantom ones.

The real problem? Clients and decision-makers continue relying on these approximate datasets to make budget decisions. Result: indexation strategies built on sand. [To verify] Google doesn't explicitly communicate the cache refresh delay or the exact precision of site:, making it difficult to estimate acceptable error margins.

In what cases are these tools still useful despite everything?

For a rough overview of indexed volume or quick detection of massive problems (say, 90% of the site disappearing overnight), site: can serve as an early warning. But that's it. Never stop there.

Cache retains value for comparing page versions over time or checking if Google has crawled a recent change. But again, URL inspection in GSC does this better and with more detail (HTML rendering, blocked resources, etc.).

What alternatives actually offer more reliability?

Server logs cross-referenced with Search Console are the gold standard for diagnosis. You see exactly which URLs Googlebot requests, when, and which HTTP status your server actually returned. No approximation, no secondary index.
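As a rough illustration of what reading the logs involves, here is a minimal Python sketch that pulls Googlebot requests out of an access log and tallies the HTTP statuses returned per path. It assumes the common "combined" log format used by Apache and nginx; the function names and the sample lines are made up for the example. It also matches on the user-agent string only, which can be spoofed, so a real audit would additionally verify the requesting IP (for example with a reverse DNS lookup).

```python
import re
from collections import Counter

# Assumption: logs are in the "combined" format (Apache/nginx default style).
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Yield (time, path, status) for requests whose user agent claims to be Googlebot."""
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match and "Googlebot" in match.group("agent"):
            yield match.group("time"), match.group("path"), int(match.group("status"))


def status_by_path(lines):
    """Map each crawled path to a Counter of the HTTP statuses Googlebot received."""
    summary = {}
    for _, path, status in googlebot_hits(lines):
        summary.setdefault(path, Counter())[status] += 1
    return summary


# Illustrative log lines, invented for this example.
SAMPLE_LOG = [
    '66.249.66.1 - - [07/Dec/2023:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [07/Dec/2023:10:05:00 +0000] "GET /old-page HTTP/1.1" 404 312 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [07/Dec/2023:10:06:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

if __name__ == "__main__":
    for path, statuses in status_by_path(SAMPLE_LOG).items():
        print(path, dict(statuses))
```

In practice you would pass an open log file instead of `SAMPLE_LOG`. Paths that return 404 or 5xx to Googlebot are the first ones to compare against what Search Console reports.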

Tools like Screaming Frog combined with GSC API data enable precise indexation audits. And that's where it gets tricky for many teams: this approach requires solid technical setup and expert data interpretation.

Practical impact and recommendations

What should you do concretely right now?

Stop selling indexation audits based on site:. It's that simple. Train your teams to use Search Console as the single reference source, with server logs as validation.

If a client or manager asks you "how many pages are indexed", never answer with site:. Get the figure from GSC: the Page indexing report (formerly the Coverage section), indexed pages count (formerly the Valid tab). That's the only metric that matters.

What mistakes must you absolutely avoid?

Don't panic if a page doesn't appear in site: while it ranks perfectly. Don't deindex an entire section because the cached copy is three weeks old. Never base any strategic decision on these tools. Ever.

Another classic trap: comparing site: result numbers between two dates to measure indexation progress. These figures fluctuate randomly and mean nothing.

How do you verify that your indexation audit is reliable?

Systematically cross-check three sources: Search Console (pages reported as indexed), server logs (actual Googlebot crawl), and analytics (actual organic traffic). If these three sources align, you have a solid diagnosis.
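The cross-check itself comes down to set comparisons. The sketch below assumes you have already exported three URL lists (indexed URLs from Search Console, URLs requested by Googlebot in your logs, and organic landing pages from analytics); the function name, the bucket names, and the sample URLs are hypothetical.

```python
def cross_check(gsc_indexed, googlebot_crawled, organic_landing):
    """Compare three URL collections and return the disagreements worth investigating."""
    gsc_indexed = set(gsc_indexed)
    googlebot_crawled = set(googlebot_crawled)
    organic_landing = set(organic_landing)
    return {
        # All three sources agree: indexed, crawled, and receiving organic visits.
        "consistent": gsc_indexed & googlebot_crawled & organic_landing,
        # Reported as indexed but absent from the log window under review.
        "indexed_but_not_crawled": gsc_indexed - googlebot_crawled,
        # Googlebot fetched the URL but Search Console does not list it as indexed.
        "crawled_but_not_indexed": googlebot_crawled - gsc_indexed,
        # Organic visits land on a URL that Search Console does not list as indexed.
        "traffic_but_not_indexed": organic_landing - gsc_indexed,
    }


# Illustrative inputs, invented for this example.
report = cross_check(
    gsc_indexed=["/", "/pricing", "/blog/a"],
    googlebot_crawled=["/", "/pricing", "/old-page"],
    organic_landing=["/", "/pricing"],
)

if __name__ == "__main__":
    for bucket, urls in report.items():
        print(bucket, sorted(urls))
```

Any non-empty bucket other than `consistent` is a lead to investigate, not a verdict: a URL can be missing from the logs simply because the log window is shorter than Googlebot's recrawl interval.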

Automate GSC data retrieval via API to track actual indexation evolution over time. Manual dashboards based on site: are a waste of time.
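One way to automate this is the URL Inspection method of the Search Console API. The sketch below uses only the Python standard library and assumes you already hold an OAuth 2.0 access token authorized for the property; obtaining that token is out of scope here. The helper names and the sample response are illustrative, and the response fields read below are a subset of what the API returns. Requests are subject to per-property quotas, so this suits tracking a sample of key URLs rather than a whole site.

```python
import json
import urllib.request

INSPECT_ENDPOINT = "https://searchconsole.googleapis.com/v1/urlInspection/index:inspect"


def build_inspection_request(site_url, page_url, access_token):
    """Build the POST request that asks Search Console to report on one URL."""
    body = json.dumps({"inspectionUrl": page_url, "siteUrl": site_url}).encode("utf-8")
    return urllib.request.Request(
        INSPECT_ENDPOINT,
        data=body,
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def summarize_index_status(response):
    """Keep only the index-status fields useful for tracking over time."""
    status = response.get("inspectionResult", {}).get("indexStatusResult", {})
    return {
        "verdict": status.get("verdict"),
        "coverage_state": status.get("coverageState"),
        "last_crawl_time": status.get("lastCrawlTime"),
        "google_canonical": status.get("googleCanonical"),
        "user_canonical": status.get("userCanonical"),
    }


def inspect_url(site_url, page_url, access_token):
    """Send the request and return the summarized status (performs a network call)."""
    request = build_inspection_request(site_url, page_url, access_token)
    with urllib.request.urlopen(request) as reply:
        return summarize_index_status(json.load(reply))


# Hand-written response in the API's shape, for illustration only.
SAMPLE_RESPONSE = {
    "inspectionResult": {
        "indexStatusResult": {
            "verdict": "PASS",
            "coverageState": "Submitted and indexed",
            "lastCrawlTime": "2023-12-01T08:15:00Z",
            "googleCanonical": "https://example.com/pricing",
            "userCanonical": "https://example.com/pricing",
        }
    }
}

if __name__ == "__main__":
    print(summarize_index_status(SAMPLE_RESPONSE))
```

Storing the summarized result for each URL with the date of the call gives you a history of actual indexation status, and a mismatch between `google_canonical` and `user_canonical` flags pages where Google indexed a different URL than the one you declared.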

- Use Search Console exclusively to check indexation status

- Cross-reference GSC with server logs for precise diagnosis

- Never make strategic decisions based on site: or cache

- Train teams and clients on correct GSC data interpretation

- Automate indexation tracking via Search Console API

- Use URL inspection to test specific pages

- Clearly document in audits why site: isn't used

❓ Frequently Asked Questions

Is the site: operator completely useless, then?

Why does Google maintain these tools if they are imprecise?

Can Search Console also show inaccurate data?

How do you explain to a client that their site is indexed if site: shows nothing?

Are server logs essential for diagnosing indexation?

🎥 From the same video 5

Other SEO insights extracted from this same Google Search Central video · published on 07/12/2023
