Official statement

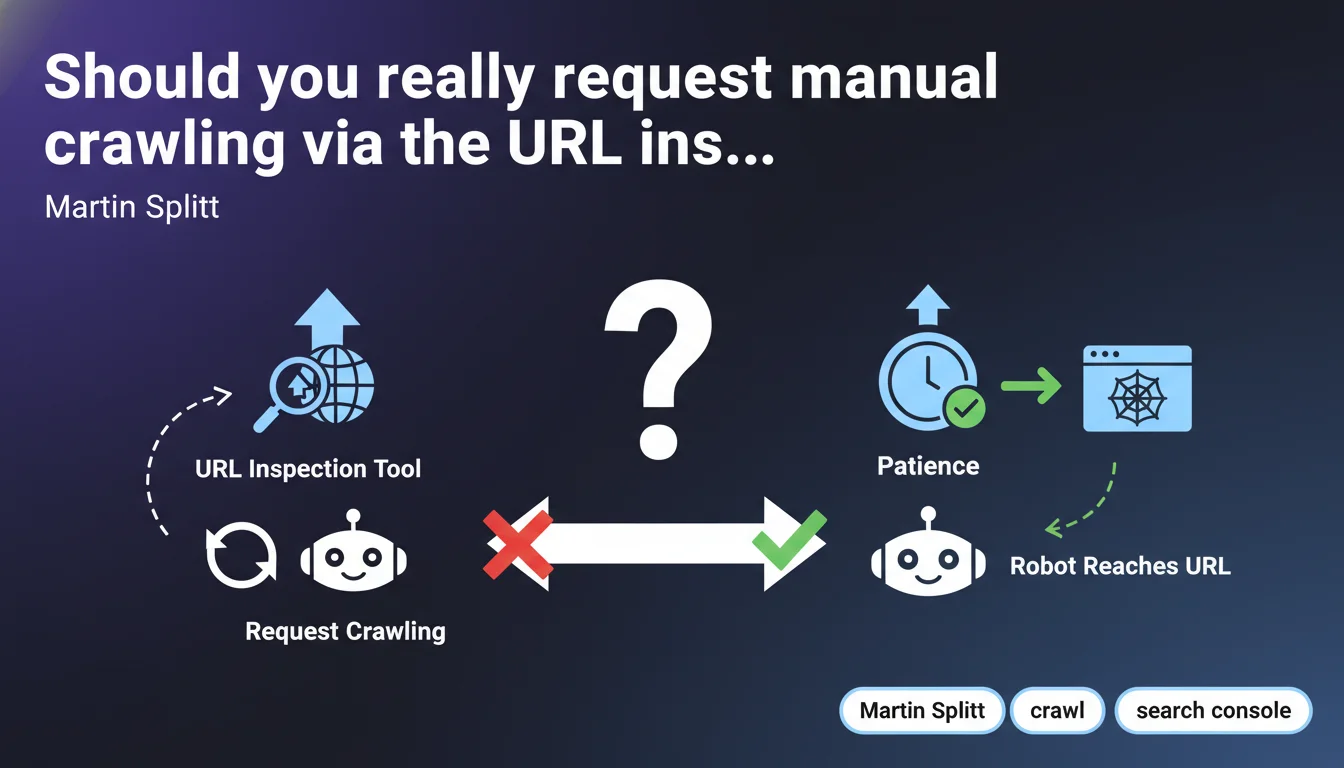

Google confirms that you can request crawling of a URL that hasn't been crawled yet via the URL Inspection tool in Search Console. But Google's Martin Splitt tempers this: often, it's simply a matter of patience before Googlebot reaches the page on its own. The underlying message? Don't overuse this feature.

What you need to understand

When should you use the URL inspection tool to request crawling?

The URL inspection tool in Search Console allows you to manually submit a page to Google for crawling and indexing. Splitt clarifies that this is relevant for pages that have not yet been discovered by the crawler.

The important nuance: if the page is already indexed or already queued for crawling, requesting a new crawl probably won't change anything. Google has its own crawl priorities, and this feature doesn't fully bypass its scheduling.

Why does Google insist on patience?

Splitt explicitly reminds us that "sometimes, it's simply a matter of patience". Translation: Googlebot will eventually arrive, even without manual intervention. This statement reflects Google's desire to regulate the use of this tool.

If every webmaster submitted every new URL as a matter of routine, it would add load on Google's side and potentially noise to crawl priorities. Hence a message meant to temper enthusiasm.

What is the crawler's prioritization logic?

Google crawls pages according to several criteria: site popularity, update frequency, depth in the site structure, internal linking quality, presence in the XML sitemap. An isolated URL with no incoming links will naturally have low priority.

Requesting manual crawling can speed up the process for a strategic page, but it doesn't replace a well-managed crawl budget and coherent architecture.

- The inspection tool is useful for new undiscovered pages, not for forcing systematic re-crawling

- Google crawls according to its own priorities — the manual request is not a guarantee of immediate indexing

- Patience is often the best strategy if internal linking and the sitemap are correct

- Overusing this tool might hurt how Google perceives the site's quality (suspected by some SEOs, not confirmed by Google)

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, generally. In practice, well-linked pages that are listed in the sitemap get crawled naturally within days or weeks, depending on the domain's authority. Requesting a crawl via the inspection tool can speed this up by a few hours to a few days, but not dramatically.

Let's be honest: on a high-authority site, a new URL is crawled within hours without intervention. On a weak site, even a manual request can take several days before actual processing.

What nuances should be added to this advice?

Splitt doesn't clarify one crucial point: requesting crawling doesn't guarantee indexing. Google can very well crawl the page and decide not to index it for various reasons (duplicate content, low quality, canonicalization, unintentional noindex, etc.).

Another blind spot: what counts as reasonable usage? Google imposes a daily quota (roughly 10-15 requests, according to field reports) but does not publish this threshold. [To verify]: could overusing this feature trigger a negative signal? Nothing is confirmed, but some SEOs suspect a negative side effect.

In what cases does this approach not work?

If the page is blocked by robots.txt, orphaned (no internal or external links), carries a noindex directive, or returns 5xx server errors, requesting a crawl won't help. The tool inspects the page and reports these issues, but it obviously doesn't fix them.
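For the robots.txt case, Python's standard `urllib.robotparser` module offers a quick pre-check of whether a URL is disallowed for Googlebot. This is a minimal sketch, assuming you paste in your own robots.txt content; the rules and `example.com` URLs below are hypothetical. This parser only does simple prefix matching and does not implement every extension Google supports (such as `*` and `$` wildcards inside paths), so the URL Inspection tool remains the authoritative answer.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt; in practice, fetch https://<your-site>/robots.txt
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: Googlebot
Disallow: /drafts/
"""


def is_blocked_for_googlebot(robots_txt: str, url: str) -> bool:
    """Return True if the given robots.txt disallows Googlebot from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # load rules from text instead of fetching
    return not parser.can_fetch("Googlebot", url)


for url in ("https://example.com/drafts/new-page",
            "https://example.com/private/report",
            "https://example.com/blog/new-page"):
    print(url, "blocked" if is_blocked_for_googlebot(ROBOTS_TXT, url) else "allowed")
```

Because a Googlebot-specific group exists in this example, Googlebot follows only that group and ignores the `*` group, which is why `/private/` stays crawlable for it while `/drafts/` is blocked.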

Similarly, for a penalized site or one with very low crawl budget, this feature is a band-aid on a broken leg. The real work involves improving overall architecture and content quality.

Practical impact and recommendations

What should you do concretely before requesting crawling?

Before clicking "Request indexing," verify that the page is technically accessible: no noindex, no robots.txt blocking, correct canonical tags, acceptable server response time. The inspection tool will tell you about these issues, but it's worth fixing them beforehand.
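Part of this checklist can be scripted. The sketch below uses only the Python standard library to look for a `noindex` directive (in a robots `meta` tag or an `X-Robots-Tag` header), a canonical tag pointing to another URL, and a non-2xx status code. It assumes you have already fetched the page's HTML, headers and status yourself; the function names and the trailing-slash comparison are hypothetical simplifications, and relative canonical URLs are not resolved. Response time is not checked here.

```python
from html.parser import HTMLParser
from typing import Dict, List, Optional


class IndexabilityParser(HTMLParser):
    """Collects robots meta directives and the canonical URL from an HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.robots_directives: List[str] = []
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        # HTMLParser already lowercases tag and attribute names.
        attributes = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attributes.get("name", "").lower() in ("robots", "googlebot"):
            self.robots_directives += [
                d.strip().lower() for d in attributes.get("content", "").split(",")
            ]
        elif tag == "link" and "canonical" in attributes.get("rel", "").lower().split():
            self.canonical = attributes.get("href")


def preflight_issues(url: str, html: str, headers: Dict[str, str], status: int) -> List[str]:
    """Return blocking issues to fix before requesting indexing (hypothetical helper)."""
    issues = []
    if not 200 <= status < 300:
        issues.append(f"HTTP status {status}")
    parser = IndexabilityParser()
    parser.feed(html)
    # Header names are case-insensitive, so normalise them before lookup.
    x_robots = {k.lower(): v for k, v in headers.items()}.get("x-robots-tag", "").lower()
    if "noindex" in parser.robots_directives or "noindex" in x_robots:
        issues.append("noindex directive")
    # Naive comparison: ignores trailing slashes only, no URL normalisation.
    if parser.canonical and parser.canonical.rstrip("/") != url.rstrip("/"):
        issues.append(f"canonical points elsewhere: {parser.canonical}")
    return issues


page = ('<html><head><meta name="robots" content="noindex, follow">'
        '<link rel="canonical" href="https://example.com/other-page"></head></html>')
print(preflight_issues("https://example.com/new-page", page, {}, 200))
```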

Also make sure the page is linked from at least one other indexed page on the site. An orphaned URL, even when manually submitted, remains fragile and risks not being re-crawled regularly.
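To spot orphaned URLs before submitting them, you can compare an internal link graph against the list of pages you know are indexed. This is a minimal sketch, assuming you already have both as Python data (for example from your own site crawl and a Search Console export); the `links` and `indexed` structures and the paths below are hypothetical.

```python
from typing import Dict, List, Set


def find_orphans(links: Dict[str, Set[str]], indexed: Set[str]) -> List[str]:
    """Return pages that receive no internal link from any indexed page.

    links maps each crawled page to the set of internal pages it links to.
    """
    all_pages = set(links)
    for targets in links.values():
        all_pages |= targets
    linked_from_indexed: Set[str] = set()
    for source, targets in links.items():
        if source in indexed:
            linked_from_indexed |= targets - {source}  # a self-link doesn't count
    return sorted(all_pages - linked_from_indexed)


site_links = {
    "/": {"/blog/", "/products/"},
    "/blog/": {"/", "/blog/post-1"},
    "/products/": {"/"},
    "/blog/post-1": {"/blog/"},
    "/landing/new-offer": {"/products/"},  # links out, but nothing links in
}
print(find_orphans(site_links, indexed={"/", "/blog/", "/products/", "/blog/post-1"}))
```

Only links from indexed pages are counted, since a link from a page Google hasn't indexed is unlikely to help discovery.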

What mistakes should you avoid with this tool?

Don't spam the tool with every minor publication. Reserve it for strategic pages that need to be indexed quickly (product launch, news article, fix for an important page). For everything else, let natural crawling happen.

Also avoid submitting low-quality or duplicate pages in the hope of forcing their indexing. It doesn't work, and it may even reinforce the impression that your site produces mediocre content.

How do you verify that the crawling strategy is working?

Monitor the Page indexing report (formerly Index Coverage) in Search Console. If many of the pages you submitted manually remain "Crawled - currently not indexed" despite repeated requests, it's a red flag: the problem is most likely qualitative rather than technical.
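If you keep a list of the URLs you submitted manually, you can cross-check it against an export of the Page indexing report to quantify this red flag. This sketch assumes the export has already been loaded into a `url -> status` dictionary and that the status label matches the wording in your Search Console interface (adjust the constant if it differs, for instance in another interface language). The URLs and the `"Indexed"` label below are hypothetical.

```python
from typing import Dict, List, Tuple

# Assumed label; check the exact wording in your own Search Console export.
CRAWLED_NOT_INDEXED = "Crawled - currently not indexed"


def crawled_not_indexed(submitted: List[str], statuses: Dict[str, str]) -> Tuple[List[str], float]:
    """Return submitted URLs stuck in 'crawled, not indexed' and their share of all submissions."""
    flagged = [url for url in submitted if statuses.get(url) == CRAWLED_NOT_INDEXED]
    share = len(flagged) / len(submitted) if submitted else 0.0
    return flagged, share


submitted_urls = ["/a", "/b", "/c", "/d"]
report = {"/a": CRAWLED_NOT_INDEXED, "/b": "Indexed", "/c": CRAWLED_NOT_INDEXED}
flagged, share = crawled_not_indexed(submitted_urls, report)
print(f"{len(flagged)} of {len(submitted_urls)} submitted URLs crawled but not indexed ({share:.0%})")
```

What counts as "a large number" remains a judgment call; neither Google nor this article gives a threshold.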

Also analyze your server logs to see whether Googlebot actually visits the pages you submit, and how often. If the bot rarely returns, that suggests crawl budget is poorly distributed, a structural problem the inspection tool won't solve.
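A first pass at this log analysis takes only a few lines. The sketch below assumes the common Apache/Nginx "combined" log format and counts requests whose user agent contains `Googlebot` for each submitted path; the sample log lines and paths are hypothetical. User agents can be spoofed, so Google recommends confirming real Googlebot traffic with a reverse DNS lookup (or against Google's published IP ranges), which this sketch deliberately leaves out.

```python
import re
from typing import Dict, Iterable, Set

# Apache/Nginx "combined" log format; adapt the pattern to your server's format.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines: Iterable[str], submitted: Set[str]) -> Dict[str, int]:
    """Count Googlebot requests (by user-agent string only) for each submitted path."""
    hits = {path: 0 for path in submitted}
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        if match.group("path") in hits:
            hits[match.group("path")] += 1
    return hits


BOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [12/Jul/2023:10:00:00 +0000] "GET /new-page HTTP/1.1" 200 5120 "-" "{BOT}"',
    f'66.249.66.1 - - [13/Jul/2023:09:30:00 +0000] "GET /new-page HTTP/1.1" 200 5120 "-" "{BOT}"',
    '203.0.113.7 - - [13/Jul/2023:11:00:00 +0000] "GET /other-page HTTP/1.1" 200 4096 "-" "Mozilla/5.0"',
]
print(googlebot_hits(sample_log, {"/new-page", "/other-page"}))
```

A submitted path that stays at zero over several weeks is the kind of signal the paragraph above describes.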

- Check for absence of technical blocks (robots.txt, noindex, canonical) before any request

- Ensure the page is linked from at least one other indexed page

- Reserve manual requests for strategic or urgent pages

- Stay within the unofficial daily quota (roughly 10-15 requests, per field reports) to avoid a potential negative signal

- Analyze server logs to confirm that Googlebot actually visits submitted URLs

- Monitor the coverage report to detect pages crawled but not indexed
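The last checklist items can be combined into a simple daily routine: keep only strategic URLs that passed the technical checks, rank them, and stop at a self-imposed cap. This sketch assumes hypothetical `strategic`, `passed_checks` and `priority` fields that you would fill in yourself; the cap of 10 is an arbitrary value taken from the low end of the unofficial 10-15 range mentioned above, not a figure published by Google.

```python
from dataclasses import dataclass
from typing import List

# Self-imposed cap: Google does not publish the actual daily quota.
DAILY_CAP = 10


@dataclass
class Candidate:
    url: str
    strategic: bool      # e.g. product launch, news article, fix on an important page
    passed_checks: bool  # no noindex, no robots.txt block, 2xx status, linked internally
    priority: int = 0    # hypothetical urgency score: higher means submit first


def pick_for_manual_request(candidates: List[Candidate], cap: int = DAILY_CAP) -> List[str]:
    """Select today's URLs for 'Request indexing'; everything else is left to natural crawling."""
    eligible = [c for c in candidates if c.strategic and c.passed_checks]
    eligible.sort(key=lambda c: c.priority, reverse=True)
    return [c.url for c in eligible[:cap]]


queue = [
    Candidate("/launch/new-product", strategic=True, passed_checks=True, priority=5),
    Candidate("/blog/minor-update", strategic=False, passed_checks=True, priority=9),
    Candidate("/news/announcement", strategic=True, passed_checks=True, priority=3),
    Candidate("/draft/teaser", strategic=True, passed_checks=False, priority=10),
]
print(pick_for_manual_request(queue, cap=2))
```

Filtering before ranking means a high priority score never gets a page with unresolved technical issues into the queue.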

❓ Frequently Asked Questions

How long should you wait before requesting a manual crawl?

Can you submit several URLs per day via the inspection tool?

Does requesting a crawl guarantee that the page will be indexed?

Should you resubmit a page after updating its content?

Does the inspection tool improve the site's overall crawl budget?

Other SEO insights extracted from this same Google Search Central video · published on 07/12/2023
