Official statement

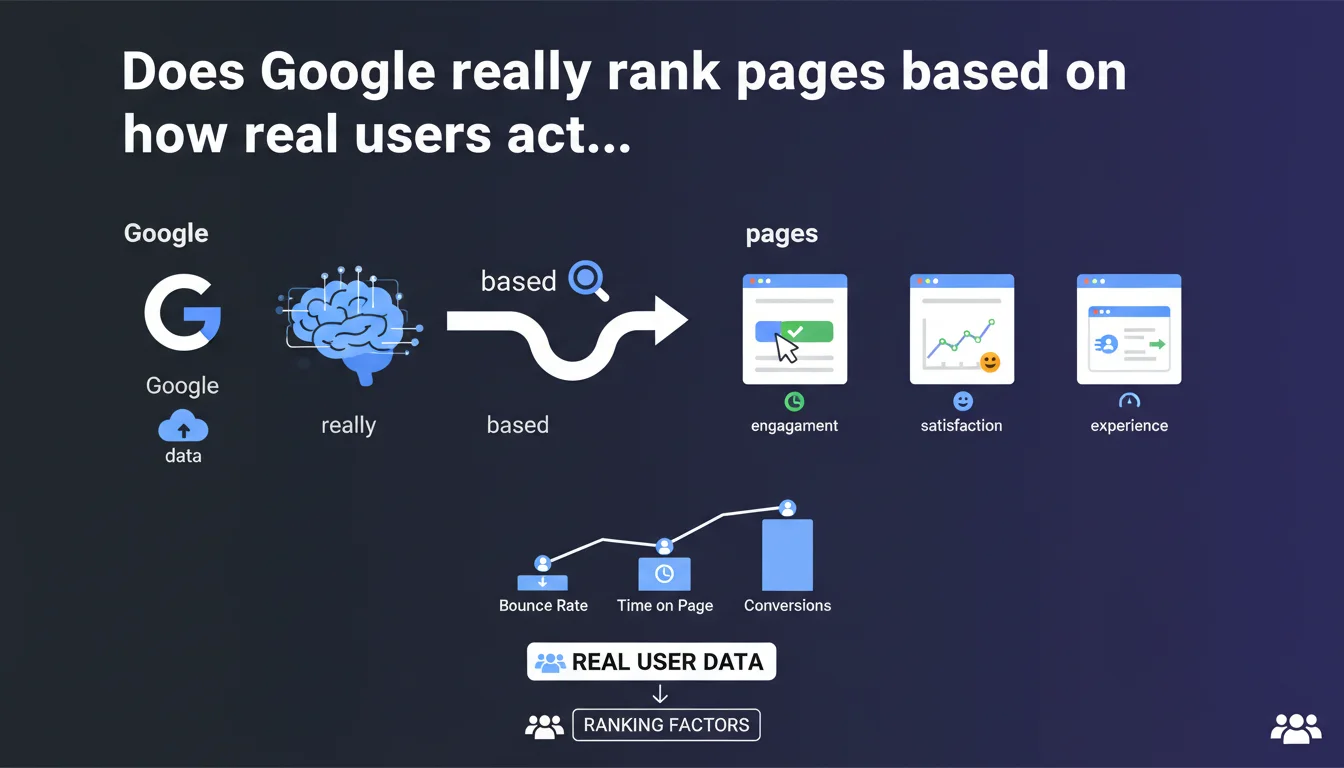

Google confirms that performance metrics (especially Core Web Vitals) come from real users navigating your site, not laboratory tests. This distinction has direct consequences for how you should audit and optimize your performance — and for the reliability of the tools you use daily.

What you need to understand

What's the actual difference between synthetic data and real user data?

Synthetic data comes from tools like Lighthouse, PageSpeed Insights in lab mode, or WebPageTest. These measure performance in a controlled environment, with a specific device and connection.

Real user data (or RUM for Real User Monitoring) comes from the Chrome User Experience Report (CrUX). Google collects these metrics from the Chrome browsers of millions of real users, with their varied connections, diverse devices, and installed extensions.

Why does this distinction directly impact your SEO strategy?

Because Google relies exclusively on CrUX data to evaluate page experience in its ranking algorithm. A site can post a Lighthouse score of 95/100 and still fail Core Web Vitals in real conditions if its users are predominantly on 3G connections or low-end devices.

Your optimizations must therefore target performance as perceived by your real visitors, not just laboratory benchmarks. This is a paradigm shift for many practitioners who still focus too heavily on synthetic tests.

How does Google collect this real user data?

Through the Chrome User Experience Report, which aggregates browsing data from Chrome users who've opted into syncing and usage statistics. Metrics are aggregated at the origin level (scheme plus host, for example https://www.example.com) and, for pages with enough visits, at the URL level as well.

The data is public and accessible via PageSpeed Insights (Field Data tab), the CrUX API, BigQuery, or Search Console in the Core Web Vitals report. But be careful — not all sites have enough CrUX data to be evaluated.

- Core Web Vitals (LCP, INP, CLS) come exclusively from real data, not lab tests

- Google uses the 75th percentile of page loads: at least 75% of visits must meet the "good" threshold on each metric for your URL to be considered "good"

- A site with low Chrome traffic may not have CrUX data — so it won't be evaluated on page experience

- Data is aggregated over 28 rolling days, not in real time

- Synthetic tests remain useful for diagnostics, but never replace real data for ranking
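To make the 75th-percentile rule concrete, here is a minimal sketch that classifies a list of measurements against the published Core Web Vitals boundaries (LCP 2.5 s / 4 s, INP 200 ms / 500 ms, CLS 0.1 / 0.25). The function names, the nearest-rank percentile method, and the sample values are assumptions made for illustration; CrUX computes its own percentiles from aggregated histograms, so treat this as an approximation of the idea rather than a reproduction of Google's pipeline.

```python
import math

# (good, poor) boundaries: LCP and INP in milliseconds, CLS unitless.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def percentile(values, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def classify(metric, samples):
    """Return (p75, bucket) for one metric, using the boundaries above."""
    good, poor = THRESHOLDS[metric]
    p75 = percentile(samples, 75)
    if p75 <= good:
        return p75, "good"
    if p75 <= poor:
        return p75, "needs improvement"
    return p75, "poor"


# Made-up LCP samples in milliseconds, one per page load.
lcp_samples = [1200, 1500, 1800, 2100, 2400, 2600, 3900, 5200]
lcp_result = classify("LCP", lcp_samples)  # (2600, "needs improvement")
```

In this sample, five of eight page loads are under 2.5 s and the median is 2250 ms, yet the 75th percentile lands at 2600 ms, so the URL would not count as "good".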

SEO Expert opinion

Is this statement consistent with what we actually observe in the field?

Absolutely — and that's precisely where many practitioners still get it wrong. I regularly see SEO audits based solely on Lighthouse or GTmetrix, with recommendations completely disconnected from the client's actual traffic reality.

The problem: a site can score 90+ in the lab and stay stuck in the "needs improvement" or "poor" buckets in CrUX because the real audience mostly browses on unstable mobile 4G or budget Android devices. A single lab configuration cannot reflect the diversity of real user contexts.

What nuances should we add to this claim?

First, not all sites have CrUX data. Google requires a minimum volume of Chrome visits for an origin or URL to appear in the report. Low-traffic sites, or those whose audience uses Chrome minimally, can therefore escape evaluation — which isn't necessarily a disadvantage.

Second, data is aggregated over 28 days. An optimization deployed today will show up in CrUX only gradually, as older page loads drop out of the window, so the full effect can take up to a month to appear. [To verify]: Google has never precisely detailed the latency between deployment and visible impact in CrUX reports.
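The lag can be reasoned about with a toy model. The sketch below assumes a deliberately simplified situation that will not match any real site: identical traffic every day, every page load before the deployment takes exactly `before_ms`, and every page load after it takes exactly `after_ms`. The function names and the pooling of all page loads in the trailing 28 days are assumptions for illustration, not a description of how CrUX is computed or published.

```python
import math

WINDOW_DAYS = 28


def p75(values):
    """Nearest-rank 75th percentile."""
    ordered = sorted(values)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def rolling_p75_after_deploy(before_ms, after_ms, days_since_deploy, loads_per_day=100):
    """p75 over the trailing 28 days, pooling every page load in the window."""
    new_days = min(max(days_since_deploy, 0), WINDOW_DAYS)
    old_days = WINDOW_DAYS - new_days
    window = [before_ms] * (old_days * loads_per_day)
    window += [after_ms] * (new_days * loads_per_day)
    return p75(window)


def first_day_good(before_ms, after_ms, good_threshold_ms=2500):
    """First day after deployment on which the rolling p75 meets the threshold, or None."""
    for day in range(WINDOW_DAYS + 1):
        if rolling_p75_after_deploy(before_ms, after_ms, day) <= good_threshold_ms:
            return day
    return None


# LCP drops from 4000 ms to 2000 ms on deployment day.
days_needed = first_day_good(4000, 2000)  # 21
```

Under these assumptions the window needs 21 of its 28 days to be post-deployment before the 75th percentile flips, which is why an improvement can look invisible for weeks and then appear suddenly. Real distributions are spread out, so the actual curve is smoother.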

In what cases does this rule not fully apply?

Sites with very low Chrome traffic, corporate intranets, or audiences that predominantly use alternative browsers (Safari, Firefox) don't generate enough CrUX data. In those cases, Google may rely on other signals — or simply not evaluate page experience as a ranking factor.

Another exception: newly created pages don't yet have CrUX history. During the first weeks, Google can't evaluate them on this criterion. That doesn't mean they won't rank — just that this factor isn't yet in play.

Practical impact and recommendations

What should you concretely do to optimize for real data?

First step: check your current CrUX data. Use PageSpeed Insights (Field Data tab), Search Console (Core Web Vitals report), or query the CrUX API directly. Identify which URLs or URL groups are struggling.
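For the API route, a minimal sketch using only the Python standard library could look like the following. It assumes you have a Google API key with the Chrome UX Report API enabled; the helper names are hypothetical, and the sample response is hand-written to mirror the general shape of a record (check the current API reference before relying on specific fields). The API answers with HTTP 404 when it has no data for the requested origin, which the sketch maps to `None`.

```python
import json
import urllib.error
import urllib.request

CRUX_ENDPOINT = "https://chromeuserexperience.googleapis.com/v1/records:queryRecord"


def build_query(origin, form_factor=None):
    """Request body for an origin-level lookup, optionally limited to one form factor."""
    body = {"origin": origin}
    if form_factor:
        body["formFactor"] = form_factor  # "PHONE", "DESKTOP" or "TABLET"
    return body


def fetch_record(api_key, query):
    """POST the query; return parsed JSON, or None when CrUX has no data (HTTP 404)."""
    request = urllib.request.Request(
        f"{CRUX_ENDPOINT}?key={api_key}",
        data=json.dumps(query).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return json.load(response)
    except urllib.error.HTTPError as error:
        if error.code == 404:
            return None
        raise


def extract_p75(response):
    """Map each metric that reports percentiles to its p75 as a float."""
    metrics = response.get("record", {}).get("metrics", {})
    result = {}
    for name, data in metrics.items():
        value = data.get("percentiles", {}).get("p75")
        if value is not None:
            result[name] = float(value)  # CLS arrives as a string such as "0.05"
    return result


# Hand-written sample, not real data.
sample_response = {
    "record": {
        "key": {"origin": "https://example.com", "formFactor": "PHONE"},
        "metrics": {
            "largest_contentful_paint": {"percentiles": {"p75": 2300}},
            "cumulative_layout_shift": {"percentiles": {"p75": "0.05"}},
        },
    }
}
sample_p75 = extract_p75(sample_response)
```

In practice you would call `fetch_record(api_key, build_query("https://example.com", "PHONE"))` and pass the result to `extract_p75` when it is not `None`.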

Next, segment your data by device type (mobile vs desktop) and by effective connection type (4G, 3G, 2G). CrUX BigQuery allows this granularity. You'll often discover your performance issues are concentrated on mobile 3G/4G, not desktop broadband.
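The value of segmenting is easy to see on a small example. The sketch below groups page loads by device and connection and computes the 75th-percentile LCP per segment. The records are made up, and the tuple layout and function names are assumptions for illustration; the same grouping logic applies whether the rows come from a CrUX export or from your own RUM collection.

```python
import math
from collections import defaultdict


def p75(values):
    """Nearest-rank 75th percentile."""
    ordered = sorted(values)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def p75_by_segment(page_loads):
    """Group (device, connection, lcp_ms) tuples and return the p75 LCP per segment."""
    segments = defaultdict(list)
    for device, connection, lcp_ms in page_loads:
        segments[(device, connection)].append(lcp_ms)
    return {segment: p75(values) for segment, values in segments.items()}


# Made-up page loads: (device, effective connection type, LCP in ms).
page_loads = [
    ("desktop", "4G", 1100), ("desktop", "4G", 1300),
    ("desktop", "4G", 1600), ("desktop", "4G", 1900),
    ("phone", "4G", 1800), ("phone", "4G", 2200),
    ("phone", "4G", 2700), ("phone", "4G", 3100),
    ("phone", "3G", 3500), ("phone", "3G", 4200),
    ("phone", "3G", 4800), ("phone", "3G", 6000),
]

overall_p75 = p75([lcp for _, _, lcp in page_loads])  # 3500
segment_p75 = p75_by_segment(page_loads)
# desktop/4G: 1600, phone/4G: 2700, phone/3G: 4800
```

Here the overall figure of 3500 ms hides three very different situations: desktop is comfortably "good", phones on 4G are borderline, and phones on 3G are in "poor" territory. That tells you where to spend the optimization effort.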

What mistakes should you absolutely avoid?

Never rely solely on Lighthouse scores to validate an optimization. It's a diagnostic tool, not a ranking indicator. A perfect lab score guarantees nothing if your real users continue experiencing catastrophic load times.

Another classic trap: optimizing for the 50th percentile instead of the 75th. Google evaluates your URLs on the 75th percentile — meaning 75% of your visits must meet the thresholds. Aiming just for the median (50th percentile) isn't enough.

How can you verify your site is being evaluated on real data?

Two simple checks. First, log into Search Console and check the Core Web Vitals report. If you see data, Google is collecting CrUX from your domain.

Second, test a representative URL on PageSpeed Insights. If the "Field Data" (real-world data) tab shows metrics, you're being evaluated. Otherwise, you'll only see "Lab Data" — and in that case, page experience probably isn't weighing on your ranking.

- Prioritize analysis of real CrUX data rather than lab tests to identify performance issues

- Segment your audits by device type and connection type — issues are rarely uniform

- Target the 75th percentile, not the median, for your Core Web Vitals optimizations

- Monitor your metric evolution over 28 rolling days, not in real time

- Use synthetic tests (Lighthouse, WebPageTest) only to diagnose root causes, never to validate final results

- If your site lacks CrUX data, focus first on growing Chrome traffic before optimizing blind

❓ Frequently Asked Questions

Is CrUX data available for every website?

How long does it take for an optimization to show up in CrUX data?

Why are my Lighthouse scores excellent while my Core Web Vitals remain mediocre?

Does Google use the 50th or the 75th percentile to evaluate Core Web Vitals?

Can you view your competitors' CrUX data?

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 29/08/2023
