Official statement

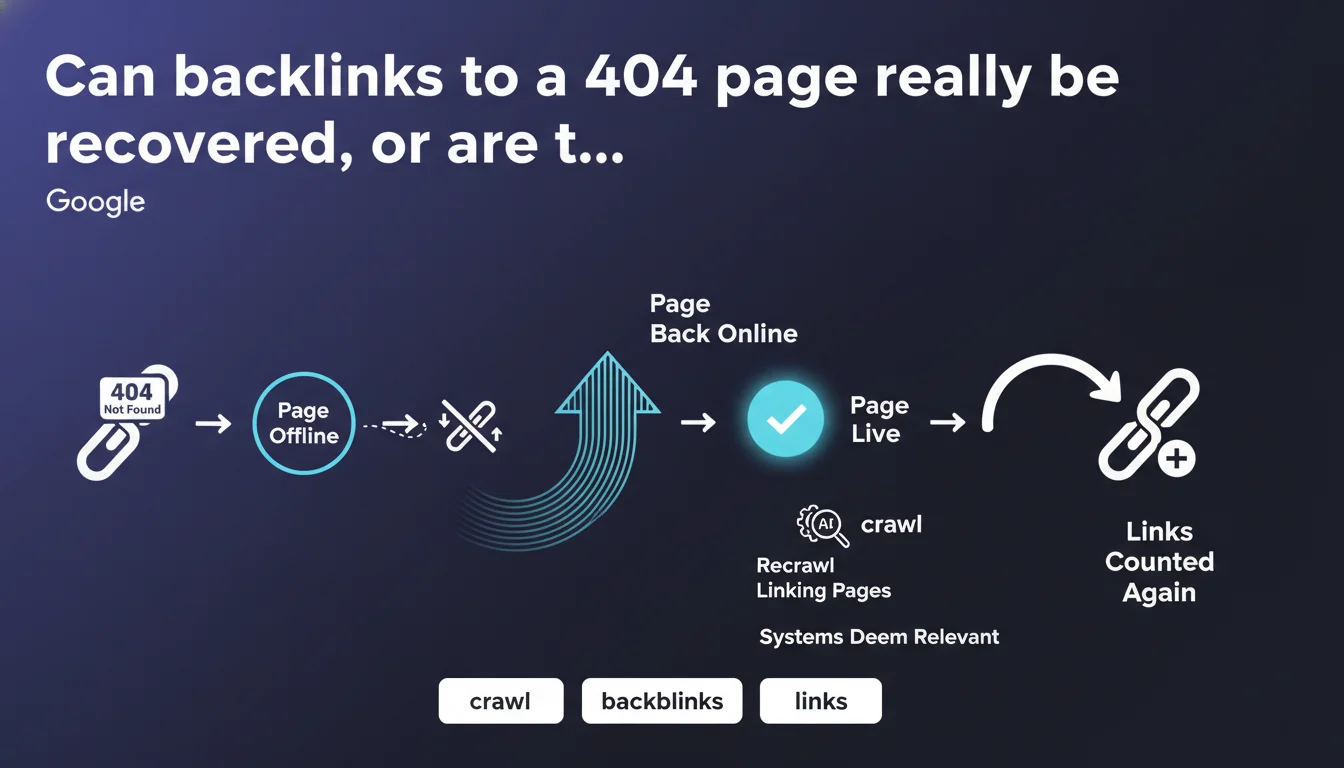

Google automatically recounts links pointing to a page that comes back online after returning a 404. The source pages must first be recrawled and the links re-evaluated for relevance by Google's algorithms, so the recovery is not immediate. A resurrected page can thus recover its link equity, provided that the backlinks are still deemed relevant.

What you need to understand

Does Google really recover all links after a 404 page is resurrected?

The promise is clear: a page that comes back online after a period in 404 recovers its backlinks. No lasting penalty, no permanent marking in the index.

But there's a catch in the wording. Google specifies two conditions: the source pages must be recrawled, and the links must be deemed still relevant by the systems. This second point introduces a subjective and opaque variable — nothing guarantees that an old link will still be considered valid by the algorithms.

Why is this statement strategically important for SEO practitioners?

Because it dispels a persistent fear in the industry: permanently losing the link equity accumulated by a URL that has disappeared. Many SEOs avoid restoring dead pages because they assume the PageRank is irrecoverable.

Google asserts here that this fear is unfounded. The engine does not blacklist a URL for its 404 history — at least, not permanently. It's a strong signal for strategies to recover archived content or revive abandoned sections.

What's the actual timeframe before links are recounted?

Google doesn't say. And that's where it gets tricky.

The recrawling of source pages depends on the crawl budget allocated to each referring site, how often Googlebot visits it, and how deep the page sits in the site architecture. For links from infrequently crawled sites, the delay can stretch to several weeks or even months.

- Links don't return instantly — you have to wait for the source pages to be recrawled.

- The notion of link "relevance" remains fuzzy: Google doesn't specify which criteria trigger a devaluation.

- No guaranteed timeline — everything depends on crawl budget and index refresh speed.

- A resurrected URL is treated no differently from a new URL: its links go through the same algorithmic evaluation as any others.

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. On sites with high crawl budget, we do observe progressive ranking recovery after bringing a 404 page back online. Rankings go up over days to weeks, suggesting that backlinks are indeed being reintegrated.

But on more modest sites or deep pages, the effect is much slower and sometimes incomplete. [To verify]: some practitioners report cases where a resurrected page never recovers its initial traffic level, even with an intact backlink profile. Is this due to algorithmic devaluation of old links, excessive recrawl delay, or other factors (content freshness, UX signals)? It is hard to determine without a case-by-case audit.

What nuances should be added to Google's statement?

The notion of link "relevance" is a black box. Google doesn't say whether a link from 5 years ago, from an outdated editorial context, will still be counted. It also doesn't specify whether the anchor text or the semantic context of the source page factors into this evaluation.

Another point: recrawling source pages is not a priority process. If a referring page is rarely visited by Googlebot, the link can remain "dormant" for weeks. This is where reality diverges from the theoretical ideal: recovery is not automatic, and it depends on factors outside your control.

In what cases does this rule not apply fully?

If the page returned a 404 for several months, or even years, some backlinks may have been removed by the source webmasters, or the referring pages themselves may have disappeared. In that case, there is simply nothing left to recover.

Another limitation: if you resurrect a page with radically different content, Google may consider that the old links are no longer relevant and ignore them. Let's be honest: an "iPhone 6 Comparison" page transformed into a "Foldable Smartphone Guide" has little in common with what its original backlinks were pointing to.

Practical impact and recommendations

What should you do concretely to maximize backlink recovery?

First priority: speed up recrawling. Use the URL Inspection tool in Search Console to request indexing of the resurrected URL. An often forgotten step is to do the same for key referring pages identified via Ahrefs, Majestic, or other backlink tools. Note that the tool only works on properties you have verified, so this applies only to referring pages on sites you control. For third-party pages, you have to wait for Googlebot's normal recrawl.

Second lever: ensure that the restored content is consistent with the context of the backlinks. If the old links pointed to a technical guide, don't come back with lightweight commercial content, because Google might then judge the old links less relevant.

What mistakes should you avoid when bringing a 404 page back online?

Don't set up a 301 redirect immediately after resurrecting a page. If you bring it back online, let it live. Otherwise, you negate the entire benefit of backlink recovery: the 301 redirect transfers equity, but it doesn't allow the original page to rank again.
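One way to confirm that the restored URL answers directly, rather than through a leftover redirect, is to request it without following redirects. The sketch below is only an illustration: the function names `classify_response` and `check_restored_url` are hypothetical, and it assumes the server answers `HEAD` requests the same way as `GET`, which is not always the case.

```python
import http.client
from urllib.parse import urlsplit


def classify_response(status, location=None):
    """Translate an HTTP status into a short verdict for a restored page."""
    if status == 200:
        return "live"
    if status in (301, 302, 307, 308):
        return f"redirects to {location}" if location else "redirects"
    if status in (404, 410):
        return "still gone"
    return f"unexpected status {status}"


def check_restored_url(url, timeout=10):
    """Send one HEAD request; http.client never follows redirects on its own."""
    parts = urlsplit(url)
    conn_cls = (
        http.client.HTTPSConnection
        if parts.scheme == "https"
        else http.client.HTTPConnection
    )
    conn = conn_cls(parts.netloc, timeout=timeout)
    try:
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        conn.request("HEAD", path)
        response = conn.getresponse()
        return classify_response(response.status, response.getheader("Location"))
    finally:
        conn.close()
```

Calling `check_restored_url` on the resurrected address should return `"live"`. Any `"redirects to ..."` verdict means an old rule is still in place and the page itself cannot rank.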

Another trap: restoring a page without checking backlink quality. If 80% of the links come from spammy domains or from pages that no longer exist, the operation is pointless. Audit before resurrecting.
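Part of that audit can be scripted. The following is a rough sketch, assuming you have already downloaded the HTML of each referring page listed in your backlink export; the helper names (`still_links_to`, `surviving_share`) and the URL normalization rules are assumptions made for illustration, not a standard. It only checks whether a link is still present and says nothing about how Google rates it.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class _LinkCollector(HTMLParser):
    """Collects the href of every <a> tag, resolved against the page URL."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(urljoin(self.base_url, href))


def _normalize(url):
    """Compare on host + path only: ignore scheme, 'www.' and trailing slash."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    return host + parts.path.rstrip("/")


def still_links_to(page_html, page_url, target_url):
    """True if the referring page still contains a link to the target URL."""
    collector = _LinkCollector(page_url)
    collector.feed(page_html)
    target = _normalize(target_url)
    return any(_normalize(link) == target for link in collector.links)


def surviving_share(pages, target_url):
    """pages maps referring URL -> HTML, or None when the page is gone."""
    if not pages:
        return 0.0
    alive = sum(
        1
        for url, html in pages.items()
        if html is not None and still_links_to(html, url, target_url)
    )
    return alive / len(pages)
```

A low share suggests that most of the equity you hoped to recover has already disappeared at the source.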

How do you verify that links are being recounted?

Monitor the evolution of the number of active backlinks in Search Console and your third-party tools. Compare before/after over a 4 to 6-week window — recrawling source pages takes time.

Also track SERP positions for queries historically associated with the page. If rankings climb progressively, that's a sign backlinks are being counted. If nothing moves after several weeks, it's probably because the links are deemed irrelevant — or the source pages haven't been recrawled.
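The ranking side of this monitoring can be reduced to a weekly average. Here is a minimal sketch, assuming you export (date, position) pairs for one query from your rank tracker; `weekly_positions` and `is_recovering` are hypothetical helpers, and "lower position number in the latest week than in the first" is only a crude signal, not proof that links are being counted.

```python
from collections import defaultdict
from statistics import mean


def weekly_positions(samples, restored_on):
    """Average SERP position per week since restoration (week 0 = first 7 days).

    samples: iterable of (datetime.date, position) pairs.
    Samples dated before the restoration are ignored.
    """
    buckets = defaultdict(list)
    for day, position in samples:
        if day >= restored_on:
            buckets[(day - restored_on).days // 7].append(position)
    return {week: mean(positions) for week, positions in sorted(buckets.items())}


def is_recovering(weekly):
    """True when the last observed week ranks better than the first one."""
    if len(weekly) < 2:
        return False
    weeks = sorted(weekly)
    return weekly[weeks[-1]] < weekly[weeks[0]]
```

Running this over the 4 to 6 weeks after restoration gives one number per week to compare, which is easier to read than daily fluctuations.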

- Submit the resurrected URL in Search Console to accelerate recrawl

- Identify the source pages of major backlinks and request reindexing for those on sites you control

- Ensure restored content is consistent with the context of old backlinks

- Avoid setting up a 301 redirect immediately after bringing the page back online — let the page live

- Audit backlink quality before deciding to resurrect a dead page

- Track the evolution of active backlinks and rankings over 4 to 6 weeks

❓ Frequently Asked Questions

How long does it take for backlinks to be recounted after a 404 page is brought back online?

If a page returned a 404 for several months, are its backlinks permanently lost?

Can you force Google to recrawl the source pages of backlinks more quickly?

Does Google devalue old backlinks deemed irrelevant after a page is resurrected?

Should you restore the original content exactly as it was, or can you modify it after bringing it back online?

Source: Google Search Central video, published on 29/12/2022.
