Official statement

Other statements from this video 5 ▾

- □ Google utilise-t-il les scores d'autorité et de spam des outils SEO dans son algorithme ?

- □ Les scores d'outils SEO tiers ont-ils vraiment une utilité pour optimiser votre positionnement ?

- □ Pourquoi devriez-vous vous méfier des scores SEO proposés par les outils d'audit ?

- □ Faut-il ignorer les scores Lighthouse pour optimiser son référencement ?

- □ Les scores transparents sont-ils vraiment la clé pour détecter vos problèmes d'UX ?

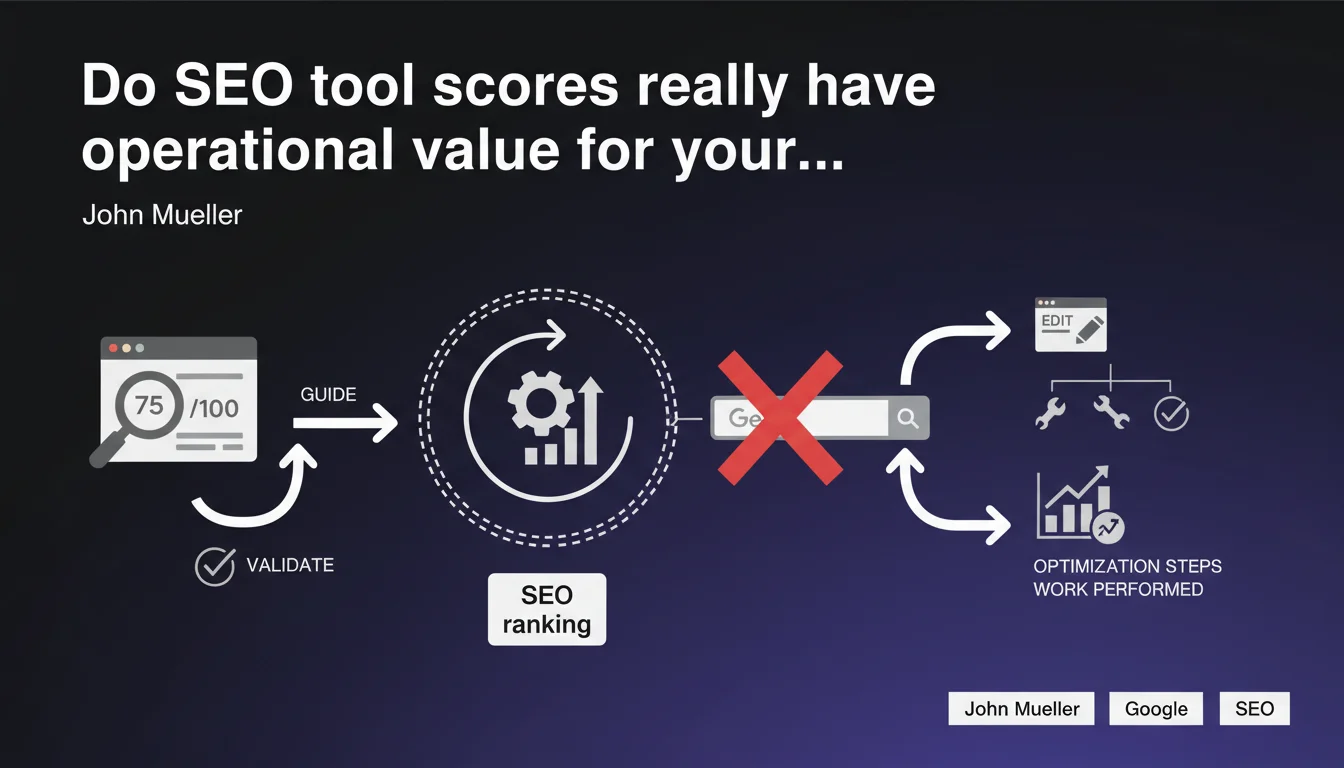

John Mueller confirms that SEO tool scores (Semrush, Ahrefs, Lighthouse, etc.) are not used by Google for ranking purposes, but they remain relevant for guiding optimizations and validating the work done on a website. Their value depends on the methodology behind the score and the ability to translate them into concrete actions.

What you need to understand

What does Mueller's statement really mean?

Mueller draws a clear distinction: Google does not integrate any third-party tool scores into its ranking algorithm. No PageSpeed Insights score, no Domain Authority, no global SEO score from audit platforms. These are external representations of criteria that Google measures in its own way.

On the other hand, he validates their operational usefulness. These scores can serve as a compass to prioritize projects and as a validation metric to measure the impact of optimizations. The key is to understand what they actually measure.

Why does Google emphasize this distinction?

Because many clients and decision-makers obsess over scores that have no direct correlation with SERP performance. A site can have a Lighthouse score of 95 and stagnate on page 3, while a competitor at 60 dominates page 1.

Google wants to discourage fixation on vanity metrics and refocus attention on what matters: content relevance, real user experience, topical authority. Scores are only imperfect proxies for these dimensions.

In what context do these scores remain relevant?

They are useful for detecting technical issues (loading times, crawl errors, structure problems) and for comparing a site's evolution before/after intervention. They provide a standardized framework that facilitates communication with non-technical teams.

But beware: a score never replaces a thorough qualitative analysis. You must always dig deeper behind the number to identify the levers that will have a real impact on organic visibility.

- Third-party tool scores are not ranking signals used by Google

- They remain valid for guiding optimization priorities and measuring progress

- Their relevance depends on the underlying methodology and the ability to translate them into actions

- Never confuse high scores with real SEO performance in SERPs

- Favor thorough qualitative analysis over obsession with a global figure

SEO Expert opinion

Is this position consistent with field observations?

Absolutely. We regularly see sites with flawless technical scores struggle to rank, while others that are technically average perform well thanks to their topical authority and content quality. Scores often capture measurable criteria (speed, HTML structure, HTTPS) but miss the qualitative dimensions that carry weight.

That said, a catastrophic score generally signals real problems that need to be addressed. The danger is believing that a 90+ score guarantees success. It's only part of the equation.

What nuances should be added regarding score usage?

Not all scores are equal. The PageSpeed Insights performance score is computed by Lighthouse from lab metrics, some of which (LCP, CLS) are also Core Web Vitals. Core Web Vitals measured on real users do feed into Google's ranking systems, but the overall 0-100 score is not used as-is by Google. Moz's Domain Authority and Ahrefs' Domain Rating model perceived authority, but with proprietary methodologies that don't necessarily reflect Google's view.
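To make the gap between an overall score and its underlying metrics concrete, here is a minimal sketch of how a Lighthouse-style performance score aggregates per-metric scores. It assumes the metric weights of Lighthouse 10 and takes the per-metric scores (0 to 1) as given; real Lighthouse derives those from scoring curves that are not reproduced here. The function name and sample page are hypothetical.

```python
# Metric weights for the Lighthouse 10 performance category.
# Assumption: other Lighthouse versions weight metrics differently.
LIGHTHOUSE_10_WEIGHTS = {
    "first-contentful-paint": 0.10,
    "speed-index": 0.10,
    "largest-contentful-paint": 0.25,
    "total-blocking-time": 0.30,
    "cumulative-layout-shift": 0.25,
}


def performance_score(metric_scores: dict[str, float]) -> int:
    """Weighted average of per-metric scores (each 0..1), scaled to 0..100."""
    weighted = sum(
        weight * metric_scores[audit_id]
        for audit_id, weight in LIGHTHOUSE_10_WEIGHTS.items()
    )
    return round(weighted * 100)


# Hypothetical page: every metric is perfect except a weak LCP.
slow_lcp_page = {
    "first-contentful-paint": 1.0,
    "speed-index": 1.0,
    "largest-contentful-paint": 0.4,
    "total-blocking-time": 1.0,
    "cumulative-layout-shift": 1.0,
}

print(performance_score(slow_lcp_page))
```

In this sketch the page still scores 85 overall despite a weak LCP, which is why the aggregate number alone says little about the individual signals behind it.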

You must therefore contextualize each score: understand its methodology, its limitations, and use it as one indicator among many. Never make it your sole KPI for an SEO strategy.

When do these scores become misleading?

When they are read without competitive context. A score of 75 can be excellent in a low-competition sector, but insufficient against over-optimized competitors. Tools often generalize recommendations without accounting for the competitive reality of the sector.

[To verify]: some tools integrate obsolete criteria or overvalue minor aspects (e.g., text/HTML ratio, keyword density). You need to know how to filter relevant recommendations from methodological artifacts.

Practical impact and recommendations

How can you use these scores productively?

Integrate them into a structured audit process. Use them to quickly detect technical anomalies, then dig deeper manually to understand real impact. For example, a low Lighthouse score on LCP should trigger analysis of render-blocking resources, not just a race for the number.
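As an illustration of "dig deeper behind the number", the sketch below reads a Lighthouse JSON report (already loaded as a dict) and, when LCP exceeds a budget, lists the render-blocking resources to investigate. It assumes the report exposes the `largest-contentful-paint` and `render-blocking-resources` audits, which depends on the Lighthouse version. The 2500 ms budget, the function name, and the sample report are illustrative assumptions.

```python
def lcp_follow_up(report: dict, lcp_budget_ms: float = 2500) -> list[dict]:
    """Return render-blocking resources to review when LCP is over budget.

    Assumes a Lighthouse-style report; audit ids may differ across versions.
    """
    audits = report.get("audits", {})
    lcp_ms = audits.get("largest-contentful-paint", {}).get("numericValue")
    if lcp_ms is None or lcp_ms <= lcp_budget_ms:
        return []  # nothing to investigate on the LCP side

    items = (
        audits.get("render-blocking-resources", {})
        .get("details", {})
        .get("items", [])
    )
    resources = [
        {"url": item.get("url"), "wasted_ms": item.get("wastedMs", 0)}
        for item in items
    ]
    # Largest estimated savings first, so the biggest lever comes first.
    return sorted(resources, key=lambda r: r["wasted_ms"], reverse=True)


# Hypothetical, heavily trimmed report used only for illustration.
sample_report = {
    "audits": {
        "largest-contentful-paint": {"numericValue": 4200},
        "render-blocking-resources": {
            "details": {
                "items": [
                    {"url": "https://example.com/app.css", "wastedMs": 310},
                    {"url": "https://example.com/vendor.js", "wastedMs": 780},
                ]
            }
        },
    }
}

for resource in lcp_follow_up(sample_report):
    print(resource["url"], resource["wasted_ms"])
```

The output is a worklist of files to inspect manually rather than a number to chase.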

Use them to communicate with technical teams: a rising score is an effective visual argument for validating the impact of optimizations. But always keep an eye on business metrics: rankings, traffic, conversions.

What mistakes should you absolutely avoid?

Never over-optimize for the score at the expense of real user experience. I've seen sites remove essential features (tracking, chat, videos) to gain a few Lighthouse points, then lose engagement and conversions.

Also avoid comparing scores between tools with different methodologies. A Domain Authority of 50 from Moz doesn't mean the same thing as a Domain Rating of 50 from Ahrefs. Choose a reference tool and track evolution over time rather than seeking consistency across platforms.

What methodology should you adopt concretely?

Use scores as a starting point to identify priority projects, then validate their relevance through manual analysis. Document score evolution before/after intervention to demonstrate the ROI of SEO work to decision-makers.

Always cross-reference scores with real data: Search Console, Google Analytics, rank tracking tools. A score that rises without any impact on organic traffic signals a cosmetic optimization, not a structural one.
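The cross-check described above can be sketched as a small before/after comparison. This is a simplified illustration: the click counts are assumed to come from a Search Console export, the 5% lift threshold is an arbitrary assumption, and seasonality or other confounding factors are not controlled for. All names are hypothetical.

```python
def classify_change(
    score_before: float,
    score_after: float,
    clicks_before: int,
    clicks_after: int,
    min_traffic_lift: float = 0.05,  # assumed threshold, tune per site
) -> dict:
    """Compare a tool score delta with the organic click lift over the same periods."""
    if clicks_before <= 0:
        raise ValueError("clicks_before must be positive to compute a lift")

    score_delta = score_after - score_before
    traffic_lift = (clicks_after - clicks_before) / clicks_before

    if score_delta <= 0:
        verdict = "no score gain"
    elif traffic_lift < min_traffic_lift:
        verdict = "cosmetic"  # score rose, organic traffic did not follow
    else:
        verdict = "structural"  # score and organic traffic rose together

    return {
        "score_delta": score_delta,
        "traffic_lift": traffic_lift,
        "verdict": verdict,
    }


# Hypothetical figures: the score jumps from 60 to 92, clicks barely move.
print(classify_change(60, 92, clicks_before=1000, clicks_after=1010))
```

A "cosmetic" verdict is a prompt for manual review, not proof that the work was useless: organic traffic can lag behind technical changes.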

- Use scores to detect technical problems, not as a final objective

- Document score evolution to measure the impact of optimizations

- Systematically cross-reference with business KPIs (rankings, traffic, conversions)

- Never over-optimize for a score at the expense of real user experience

- Choose a reference tool and track evolution over time

- Contextualize each score based on the competitive reality of the sector

- Train non-technical teams so they understand the limitations of scores

❓ Frequently Asked Questions

Are PageSpeed Insights scores Google ranking signals?

Should you aim for a score of 100 on every SEO tool?

Does Moz's Domain Authority influence Google rankings?

How can you tell whether a score reflects a real SEO problem?

Can you rely solely on the tools' automatic recommendations?

🎥 From the same video 5

Other SEO insights extracted from this same Google Search Central video · published on 15/08/2023
