Official statement

What you need to understand

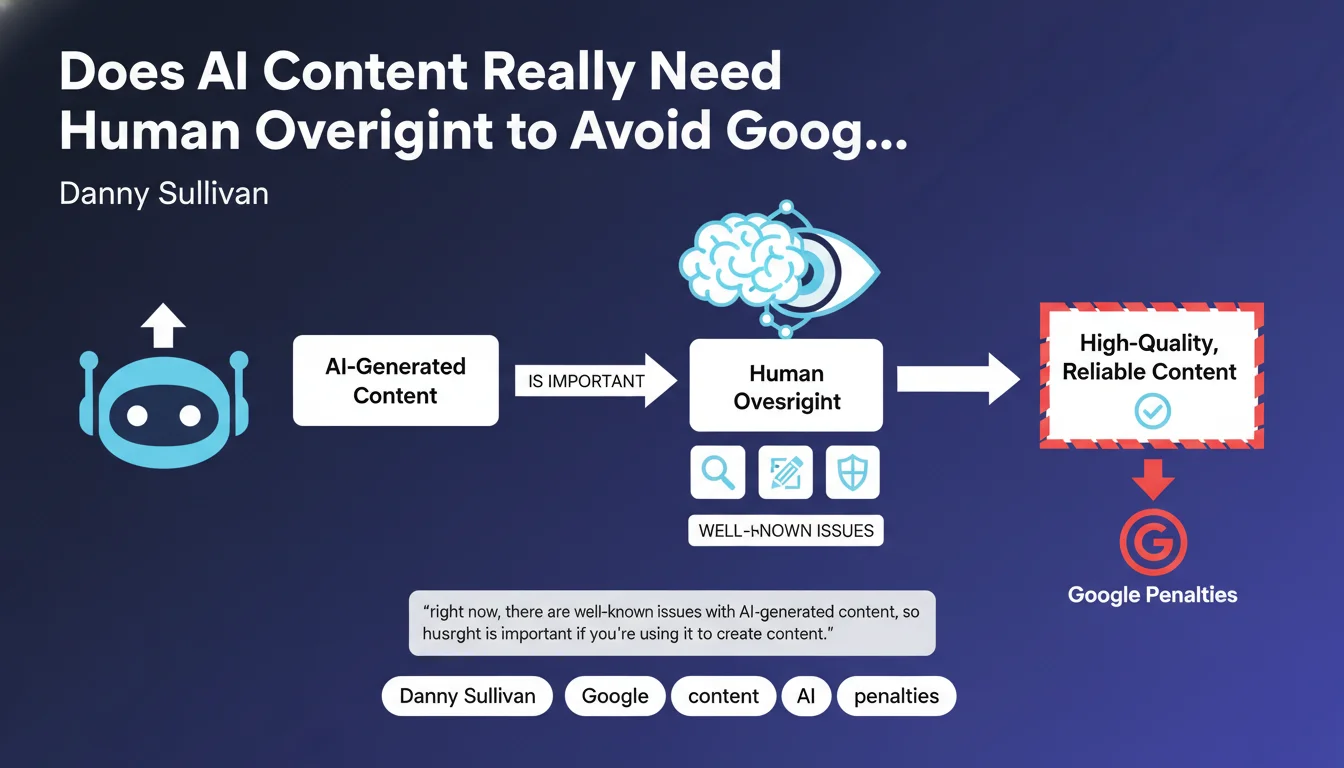

Why does Google insist on human supervision of AI content?

Google acknowledges that artificial intelligence can be used to create content, but warns about its current limitations. AI models can produce inaccurate information, hallucinated facts, or generic content with no real added value.

The official position is not to ban AI, but to require that the final result meets standard quality criteria. Published content must be helpful, accurate and relevant, whether it's created by a human or AI-assisted.

What does human supervision actually mean in practice?

Human supervision isn't limited to a simple spell-check. It involves thorough fact-checking, adding personal expertise and adapting the content to the specific context of your audience.

This means that a subject matter expert must validate, enrich and personalize the content generated by AI. The human role is to bring depth, nuance and a unique perspective that AI cannot create alone.

What are the risks of unsupervised AI content?

Content mass-produced by AI without oversight shows recurring quality issues. It may contain factual errors, lack coherence or provide outdated information.

- Risk of misinformation: AI can fabricate facts or misinterpret data

- Generic content without value: superficial texts that bring nothing new

- Contextual inconsistency: information not adapted to the target audience or local market

- Loss of trust: users quickly detect low-quality content

- Failure to meet E-E-A-T standards: lack of demonstrated experience, expertise, authoritativeness and trustworthiness

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes. Field observations indicate that sites publishing AI content at scale were hit during recent Helpful Content and Core Updates. The penalized sites typically carried mass-generated content without real supervision or added value.

On the other hand, sites that use AI in a strategic, supervised manner continue to perform well. The tool isn't the problem: what matters is how it is used. Google evaluates the final result, not the creation process.

What important nuances should be added to this position?

First crucial point: Google cannot detect with absolute reliability whether content was created by AI. Its algorithms evaluate quality, not origin. Excellent, supervised AI content should therefore not be filtered out because of how it was produced.

Second nuance: the necessary supervision varies according to the type of content and stakes involved. A medical or financial article (YMYL domain) requires much more rigorous expert validation than a standard product description.

In which cases does this rule become particularly critical?

YMYL (Your Money or Your Life) domains are under increased scrutiny. Health, finance, legal: any content that could impact safety or well-being requires documented expert supervision.

For e-commerce sites, mass-generated product pages without differentiation are also problematic. Technical or scientific content requires validation by domain experts to avoid serious factual errors.

Practical impact and recommendations

What should you actually do to effectively supervise AI content?

Implement a multi-step validation process. Each piece of AI-generated content must go through a subject matter expert who verifies facts, adds updated information and integrates unique perspectives.
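A multi-step validation process like this can be enforced with a simple publication gate. The Python sketch below is only an illustration: the step names, the `Draft` class and the sample reviewer are hypothetical and should be adapted to your own editorial workflow.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set

# Hypothetical review steps mirroring the process described above.
REQUIRED_STEPS = (
    "facts_verified",
    "information_updated",
    "unique_perspective_added",
)


@dataclass
class Draft:
    title: str
    reviewer: Optional[str] = None  # subject matter expert who signs off
    completed_steps: Set[str] = field(default_factory=set)


def missing_steps(draft: Draft) -> List[str]:
    """Return everything that still blocks publication, in order."""
    missing = [s for s in REQUIRED_STEPS if s not in draft.completed_steps]
    if draft.reviewer is None:
        missing.insert(0, "expert_reviewer_assigned")
    return missing


def ready_to_publish(draft: Draft) -> bool:
    """A draft is publishable only when nothing is missing."""
    return not missing_steps(draft)


# Example: an AI-generated draft that an expert has only partly reviewed.
draft = Draft(title="Sample AI-assisted article")
draft.reviewer = "Sample Reviewer"  # placeholder name
draft.completed_steps.update({"facts_verified", "information_updated"})

print(missing_steps(draft))
print(ready_to_publish(draft))
```

Here the draft stays blocked until the reviewer records the last step, which keeps the human sign-off explicit rather than implied.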

Systematically enrich content with differentiating elements: concrete examples drawn from your experience, proprietary data, real case studies, customer testimonials, or analyses specific to your sector.

Document your writers' and reviewers' expertise. Add detailed author biographies, professional references and proof of authority in the subject area.

What critical mistakes should you absolutely avoid?

Never publish AI content without thorough fact-checking. AI hallucinations can create highly credible false information. Verify every statistic, every date, every technical claim.
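To make sure no statistic or date slips through, a reviewer can start from an automatically generated checklist. The Python sketch below uses a deliberately crude heuristic, flagging every sentence that contains a digit. It will not catch technical claims written without numbers, and the sample text is invented placeholder copy, not real data.

```python
import re
from typing import List


def sentences_to_verify(text: str) -> List[str]:
    """Return the sentences containing at least one digit.

    Crude heuristic: digits usually indicate a statistic or a date.
    Claims without numbers still need a manual read-through.
    """
    # Split after sentence-ending punctuation followed by whitespace,
    # so decimals such as "3.5" are not split apart.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if re.search(r"\d", s)]


# Invented placeholder copy standing in for an AI-generated draft.
sample = (
    "Our product is popular with small teams. "
    "A survey of 250 customers reported 3.5 hours saved per week. "
    "The feature launched in 2021. "
    "Results vary by sector."
)

for sentence in sentences_to_verify(sample):
    print("CHECK:", sentence)
```

The output is a to-do list for the fact-checker, not a verdict: each flagged sentence still has to be checked against a primary source.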

Avoid mass production without differentiation. Creating 100 generic articles in one day with AI is exactly what Google seeks to penalize. Prioritize quality over quantity.

Don't neglect E-E-A-T signals. AI content without clear attribution to an expert, without proof of real experience and without demonstration of authority will quickly be identified as low-value.

How can you verify that your AI approach meets Google's expectations?

Apply the added value test: if you removed your content from the web, would anyone miss it? Does it bring a unique perspective, exclusive information or particular expertise?

Analyze your user engagement metrics: time on page, bounce rate, pages per session. Quality content, whether AI-assisted or not, generates engagement. Weak engagement (short time on page, high bounce rate, few pages per session) can signal a quality problem.
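This kind of monitoring can be sketched as a small Python script that flags pages for a manual quality audit. The thresholds, the `PageMetrics` structure and the sample figures are illustrative assumptions, not values published by Google; calibrate them against your own site's baseline.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PageMetrics:
    url: str
    avg_time_on_page_s: float  # seconds
    bounce_rate: float         # fraction between 0.0 and 1.0
    pages_per_session: float


# Illustrative thresholds only; tune them to your own analytics data.
MIN_TIME_ON_PAGE_S = 30.0
MAX_BOUNCE_RATE = 0.80
MIN_PAGES_PER_SESSION = 1.2


def weak_signals(m: PageMetrics) -> List[str]:
    """List the engagement signals that look weak for one page."""
    signals = []
    if m.avg_time_on_page_s < MIN_TIME_ON_PAGE_S:
        signals.append("short time on page")
    if m.bounce_rate > MAX_BOUNCE_RATE:
        signals.append("high bounce rate")
    if m.pages_per_session < MIN_PAGES_PER_SESSION:
        signals.append("few pages per session")
    return signals


def pages_to_audit(pages: List[PageMetrics], min_signals: int = 2) -> List[str]:
    """Return URLs showing at least `min_signals` weak signals."""
    return [p.url for p in pages if len(weak_signals(p)) >= min_signals]


# Made-up sample figures for two hypothetical pages.
pages = [
    PageMetrics("/expert-guide", 120.0, 0.45, 2.1),
    PageMetrics("/generic-article", 12.0, 0.91, 1.0),
]

print(pages_to_audit(pages))
```

Requiring two weak signals before flagging a page is a design choice that reduces false alarms: a single metric, such as a high bounce rate on a page that answers the query immediately, is not necessarily a quality problem.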

- Set up a validation workflow with subject matter experts

- Systematically verify all factual information produced by AI

- Enrich each piece of content with personal experience elements and proprietary data

- Add detailed author biographies and proof of expertise

- Avoid mass production of generic content without differentiation

- Personalize content for your specific audience and context

- Integrate concrete examples, case studies and real testimonials

- Monitor engagement metrics to detect low-value content

- Document the review process and supervisors' qualifications

- Conduct regular quality audits on published content
