Official statement

Other statements from this video (7)

- Why do JavaScript frameworks generate soft 404s on sites with large inventories?

- Is robots.txt blocking your critical resources without your knowledge?

- Why is the robots.txt history in Search Console a game changer?

- Why can hosting robots.txt on multiple CDNs sabotage your crawl budget?

- Can a failed AJAX request kill the indexing of your entire page?

- How can Chrome DevTools reveal the rendering problems Googlebot encounters on your pages?

- Why does Google penalize sites that handle JavaScript errors poorly?

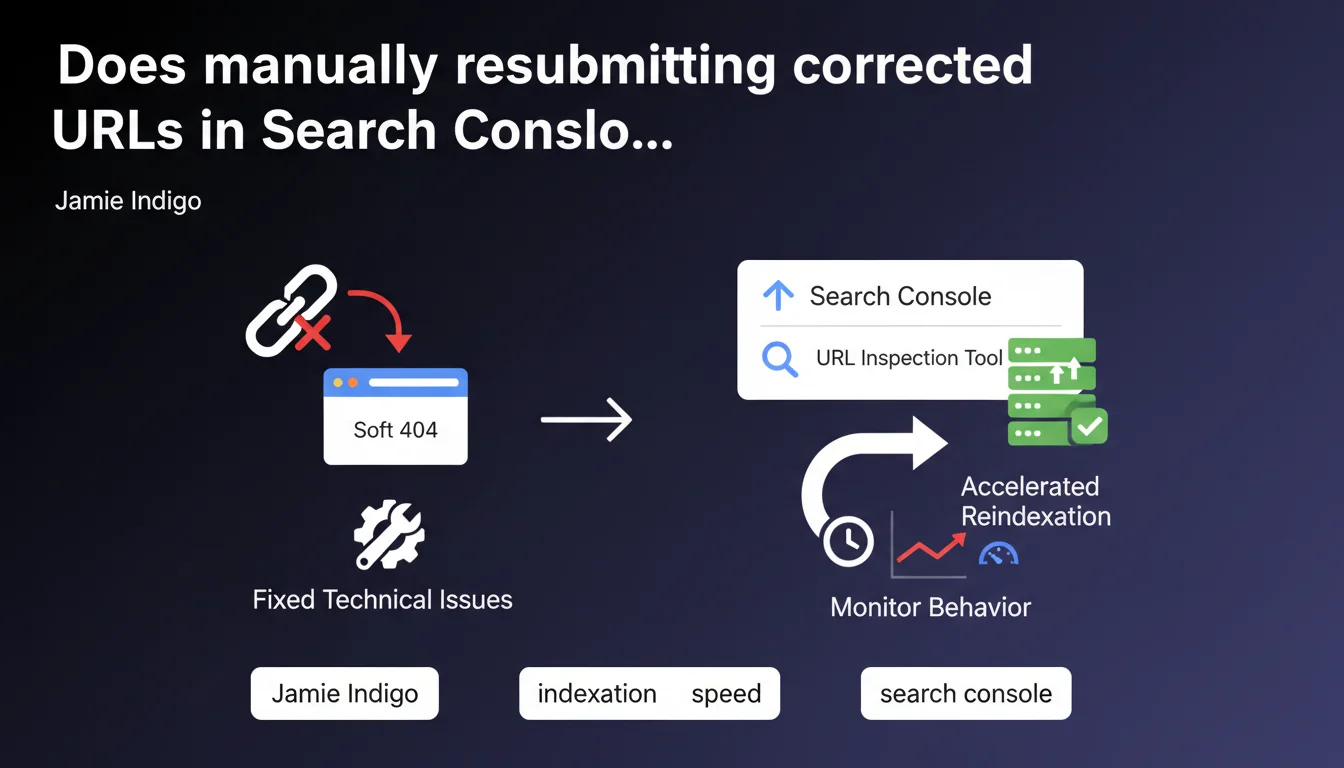

Google confirms that manually resubmitting corrected URLs via Search Console allows you to precisely track their behavior and accelerates their return to the index after resolving technical issues like soft 404s. This approach offers granular control over the reindexing process rather than waiting for Googlebot's natural crawl.

What you need to understand

What is a soft 404 and why is it problematic?

A soft 404 occurs when a page returns an HTTP 200 (OK) status code when it should signal a 404 error. The page displays empty content, generic messaging, or indicates that the resource doesn't exist — but technically, the server claims everything is fine.

Google detects these inconsistencies and treats these pages as non-existent, which excludes them from the index. The problem? Once flagged this way, they don't return to the index the moment you fix the underlying issue: Google first has to recrawl the URL and see the corrected response.
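To make the definition concrete, here is a minimal sketch of a soft 404 check you could run on your own pages. It is a heuristic, not Google's detection logic: the phrase list, the `MIN_TEXT_LENGTH` threshold, and the function names are all assumptions to adapt to your templates.

```python
import re

# Phrases that often appear on "not found" pages. This list is an assumption:
# replace it with the wording your own templates actually use.
NOT_FOUND_PHRASES = ("page not found", "no longer available", "no results found")

# Hypothetical threshold below which the visible text is considered empty.
MIN_TEXT_LENGTH = 200


def visible_text(html: str) -> str:
    """Crudely strip scripts, styles, and tags to approximate the visible text."""
    html = re.sub(r"(?is)<(script|style)\b.*?</\1>", " ", html)
    text = re.sub(r"(?s)<[^>]+>", " ", html)
    return re.sub(r"\s+", " ", text).strip()


def looks_like_soft_404(status: int, html: str) -> bool:
    """Return True when a 200 response carries error-like or near-empty content."""
    if status != 200:
        return False  # a real 404 or 410 is not a *soft* 404
    text = visible_text(html).lower()
    if len(text) < MIN_TEXT_LENGTH:
        return True
    return any(phrase in text for phrase in NOT_FOUND_PHRASES)
```

Feed it the status code and body you get from fetching a page. A `True` result only means the page deserves a manual look.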

Why does manual resubmission make a difference?

Normally, after fixing the issue, you wait for Googlebot to naturally recrawl the affected URLs. This can take days or even weeks depending on your crawl budget and how frequently the bot visits your site.

Resubmitting via Search Console triggers active prioritization of these URLs. Google examines them quickly and updates their indexation status if the fixes are effective. This is especially useful for strategic pages that generate traffic or conversions.

What is the advantage of the specific tracking mentioned by Google?

Manually resubmitting creates a traceable history in Search Console. You see exactly when the URL was re-examined, whether it was reindexed, and potentially what issues persist.

Without this approach, you're flying blind: you can't tell whether the lack of indexation comes from a crawl that hasn't happened yet or from an unresolved technical problem.

- Soft 404: pages returning 200 but treated as non-existent by Google

- Technical correction alone doesn't guarantee fast reindexing

- Manual resubmission prioritizes crawl and offers granular tracking

- Particularly effective for high-stakes business pages

SEO Expert opinion

Does this statement match real-world observations?

Yes. In practice, resubmission does tend to accelerate the process. On sites with a low crawl budget or thousands of pages, waiting for natural bot visits can take weeks.

However — and this is crucial — resubmitting doesn't force indexation. If the technical issue persists or if the page has other defects (thin content, questionable quality), Google will reject it again. Resubmission isn't a magic wand that bypasses quality criteria.

In what cases is this approach useless?

If your site is crawled intensively every day, as is typical for large media sites or e-commerce platforms with high content freshness, the benefit of manual resubmission becomes marginal. Googlebot will likely return on its own within a day or two anyway.

Another case: if you have hundreds of soft 404 URLs, resubmitting one by one through the Search Console tool becomes impractical. [To verify] Google provides no guidance on a threshold beyond which the method becomes counterproductive, or whether batch resubmission via XML sitemap would have the same accelerating effect.

What nuance should be added about "specific tracking"?

Resubmitting does create a record, but Search Console doesn't guarantee detailed real-time feedback. You'll see an indexed status or an error label, but rarely a thorough explanation if something goes wrong.

So "tracking" remains relative: you know an action was attempted, not necessarily why it failed if it did. For detailed diagnosis, you'll need to cross-reference with server logs, the coverage report, and potentially third-party tools.

Practical impact and recommendations

What to do concretely after fixing a soft 404?

Once the technical issue is resolved — for example, you've fixed a template that was returning empty content with a 200 status — go to Search Console > URL Inspection. Paste the corrected URL, run a live test to verify that Googlebot sees the right content, then click "Request indexing".

Monitor the page indexing report (formerly the coverage report) over the next few days. If the URL moves to "Indexed" (shown as "Valid" in the older report), you've succeeded. If it stays in error, investigate further: is the HTTP code correct? Is the content substantial enough? Is there a noindex tag or robots.txt blocking it?
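The follow-up questions above (HTTP code, noindex, robots.txt) can be sketched as one small check. This is an illustration under stated assumptions: `indexing_blockers` is a hypothetical helper, and you supply the status, HTML, response headers, and robots.txt text from your own fetch. It doesn't judge whether the content is substantial enough.

```python
import re
from urllib.robotparser import RobotFileParser


def indexing_blockers(url, status, html, headers, robots_txt):
    """List the technical reasons a fixed URL might still stay out of the index."""
    problems = []
    if status != 200:
        problems.append(f"unexpected HTTP status {status}")

    # Header names are case-insensitive, so normalise them before the lookup.
    lowered = {name.lower(): value for name, value in headers.items()}
    if "noindex" in lowered.get("x-robots-tag", "").lower():
        problems.append("noindex in X-Robots-Tag header")

    # A robots (or googlebot) meta tag can also carry the noindex directive.
    meta = re.search(r'(?is)<meta[^>]+name=["\'](?:robots|googlebot)["\'][^>]*>', html)
    if meta and "noindex" in meta.group(0).lower():
        problems.append("noindex in robots meta tag")

    # Evaluate the robots.txt rules for Googlebot with the standard library parser.
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    if not parser.can_fetch("Googlebot", url):
        problems.append("blocked by robots.txt")
    return problems
```

An empty list means none of these three blockers was found, so the next suspect is content quality.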

What mistakes to avoid when resubmitting?

Never resubmit a URL before verifying live that it's fixed. Testing with the inspection tool prevents submitting a still-defective page, which would waste time and muddy your metrics.

Another pitfall: mass resubmitting URLs without prioritizing. Focus first on strategic pages (those generating organic traffic or conversions). Ancillary pages will naturally follow during the next deep crawl.
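One way to choose which pages go first is to score each fixed URL by its past business value. The sketch below is only an illustration: the `FixedUrl` fields, the `conversion_weight` of 20, and the default `limit` of 10 are arbitrary assumptions, not figures from Google or Search Console.

```python
from dataclasses import dataclass


@dataclass
class FixedUrl:
    url: str
    monthly_clicks: int        # organic clicks before the page dropped out
    monthly_conversions: int   # conversions attributed to the page


def resubmission_order(pages, limit=10, conversion_weight=20):
    """Sort fixed URLs by a simple business score and keep the top `limit`."""
    def score(page):
        # Assumed scoring rule: one conversion counts as `conversion_weight` clicks.
        return page.monthly_clicks + conversion_weight * page.monthly_conversions

    ranked = sorted(pages, key=score, reverse=True)
    return [page.url for page in ranked[:limit]]
```

The URLs that come out first are the ones to resubmit by hand. The rest can wait for the natural crawl.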

How to verify the fix has taken effect?

Beyond Search Console, cross-reference with your server logs. Verify that Googlebot actually recrawls the resubmitted URLs and receives a 200 with complete content. Compare crawl dates before/after resubmission: a quick revisit confirms prioritization.
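The log comparison can be sketched as follows, assuming your server writes the common "combined" access log format with English month names. `googlebot_hits_after` is a hypothetical helper. It matches on the user-agent string only, which can be spoofed, so treat the result as an indication, not proof.

```python
import re
from datetime import datetime

# Matches the "combined" access log format; adjust if your server logs differ.
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[(?P<time>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
TIME_FORMAT = "%d/%b/%Y:%H:%M:%S %z"


def googlebot_hits_after(log_lines, paths, resubmitted_at):
    """Return {path: [(crawl_time, status), ...]} for Googlebot hits after resubmission.

    `resubmitted_at` must be a timezone-aware datetime.
    """
    hits = {path: [] for path in paths}
    for line in log_lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        if match["path"] not in hits:
            continue
        crawl_time = datetime.strptime(match["time"], TIME_FORMAT)
        if crawl_time >= resubmitted_at:
            hits[match["path"]].append((crawl_time, int(match["status"])))
    return hits
```

A path with an empty list hasn't been recrawled since you resubmitted it. A hit with a 200 shortly after the request suggests the prioritization worked.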

Also run a site: search for the exact URL in Google a few days later as a quick sanity check on indexation. If the URL still doesn't appear there or in the URL Inspection tool, a problem likely persists and you need to investigate further.

- Fix the technical issue first (HTTP code, content, template)

- Test the URL with the inspection tool before resubmitting

- Request indexation via Search Console

- Monitor the coverage report over 3-7 days

- Cross-reference with server logs to confirm recrawl

- Prioritize high-impact business pages

- Don't mass resubmit without strategy

❓ Frequently Asked Questions

Does resubmitting a URL several times speed up the process even more?

Can you resubmit via an XML sitemap rather than URL by URL?

How long should you wait before seeing the effect of a resubmission?

Does resubmission work for problems other than soft 404s?

Is there a daily resubmission quota in Search Console?

🎥 From the same video 7

Other SEO insights extracted from this same Google Search Central video · published on 02/03/2023
