Official statement

Other statements from this video (13)

- Can poor translations penalize your entire multilingual site?

- Is duplicate content on product pages really harmless for your SEO?

- Should you translate all your pages or focus your efforts on the most strategic ones?

- Should you really disable geographic targeting in Search Console for an international site?

- Does Google really index text hidden in your HTML code?

- Should you prefer rel=canonical over user-agent redirects for non-indexed pages?

- Should you deploy your SEO optimizations all at once rather than gradually?

- No Google cache for my page: is it a warning sign for my indexing?

- Does Googlebot really ignore all browser permissions during crawling?

- Is the Page Experience score really essential to appear in Top Stories?

- Does Google really assign an EAT score to your site?

- SEO pagination: should you favor sequential links or links to multiple pages?

- Core Web Vitals measured only on Chrome: should you worry about representativeness?

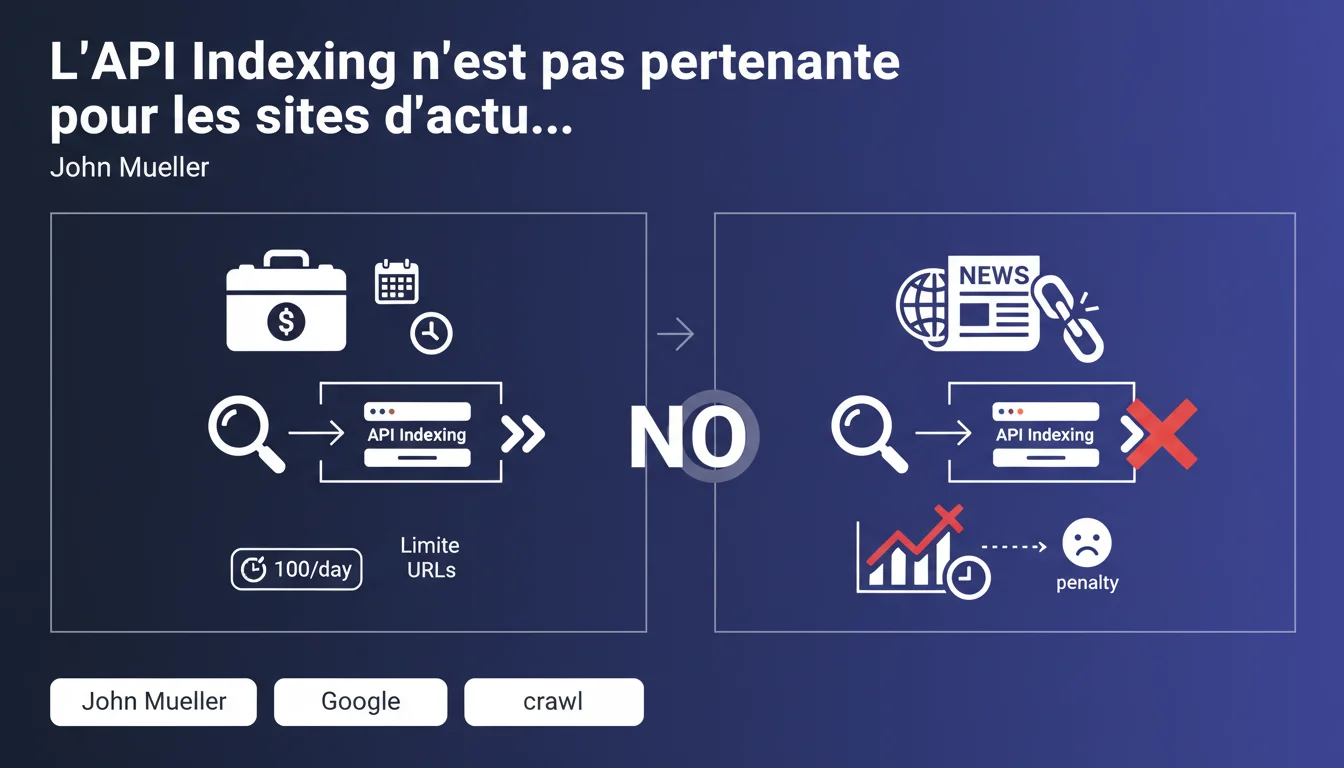

Google's Indexing API is intended only for job postings and livestream videos, not for news sites or traditional editorial content. Using it outside this use case does not speed up the indexing of news articles and provides no tangible SEO benefit, even if Google says misuse does not trigger penalties.

What you need to understand

Why Does Google Limit the Indexing API to Such Specific Content?

The Indexing API was designed for high-turnover content types: job postings and livestream videos. Both share a common characteristic: they have a very short lifespan and need near-instant indexing.

Google enforces a strict URL quota on this API. The likely reasoning is simple: the infrastructure is not meant to absorb millions of daily requests for every type of content. News sites, forums, e-commerce? They must go through the traditional channels.

Does the API Really Speed Up Crawling as Mueller Suggests?

Mueller specifies that the API can speed up crawling but does not affect the indexing of news articles. The distinction matters: speeding up crawling only means that Googlebot fetches the submitted URL sooner.

But crawling is not indexing. A page can be crawled within minutes and still sit in processing or selection for hours or even days. For a news site, faster crawling without any guarantee of faster indexing brings no operational benefit.

What Are the Risks of Using the Indexing API Outside Its Intended Use Case?

According to Mueller, no penalty is applied when the API is used incorrectly. Google simply ignores non-compliant requests or handles them through its standard pipelines.

That said, the absence of a penalty does not make the practice neutral. You consume your quota for zero benefit, and you risk exhausting your allocation if you also have genuine job postings or livestream videos to submit.
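To make the quota risk concrete, here is a minimal sketch of a client-side guard that only spends the daily Indexing API budget on eligible pages. It assumes a daily quota of 200 publish requests (a commonly cited default; check the real value in your own Google Cloud project) and a hypothetical `page_type` label that your CMS would attach to each URL. `QuotaGuard` is an illustrative name, and nothing here calls Google.

```python
from dataclasses import dataclass, field

# Assumption: commonly cited default of 200 publish requests per day;
# check the actual quota of your own Google Cloud project.
DAILY_PUBLISH_QUOTA = 200

# Structured-data types Google documents as eligible for the Indexing API:
# JobPosting, or BroadcastEvent embedded in a VideoObject (livestreams).
ELIGIBLE_TYPES = {"JobPosting", "BroadcastEvent"}


@dataclass
class QuotaGuard:
    """Hypothetical client-side helper: decides which URLs may spend quota today."""
    limit: int = DAILY_PUBLISH_QUOTA
    used: int = 0
    skipped: list = field(default_factory=list)

    def admit(self, url: str, page_type: str) -> bool:
        # Ineligible pages (news articles, product pages...) would only burn quota.
        if page_type not in ELIGIBLE_TYPES:
            self.skipped.append((url, "ineligible"))
            return False
        # Refuse once today's allocation is used up.
        if self.used >= self.limit:
            self.skipped.append((url, "quota exhausted"))
            return False
        self.used += 1
        return True


if __name__ == "__main__":
    guard = QuotaGuard(limit=2)  # tiny limit to show the cut-off
    candidates = [
        ("https://example.com/jobs/123", "JobPosting"),
        ("https://example.com/news/breaking-story", "NewsArticle"),
        ("https://example.com/live/keynote", "BroadcastEvent"),
        ("https://example.com/jobs/456", "JobPosting"),
    ]
    admitted = [url for url, kind in candidates if guard.admit(url, kind)]
    print(admitted)       # the job posting and the livestream
    print(guard.skipped)  # the news article (ineligible) and jobs/456 (quota)
```

Filtering before any request is sent means a news article never consumes quota in the first place, which keeps the allocation available for the content the API was built for.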

SEO Expert opinion

Does This Statement Really Reflect Field Observations?

Let’s be honest: many SEOs have tested the Indexing API on ineligible content, and the reported results point the same way: no measurable impact on indexing speed for news articles, product pages, or traditional editorial content.

What Mueller does not say explicitly is that Google already has very effective mechanisms for detecting and indexing news content quickly. Sites registered in the Publisher Center, with a properly configured News sitemap and an active RSS feed, see their articles indexed within minutes, without the Indexing API.

The real question: why do so many SEOs keep using this API outside its scope? Because the alternatives seem opaque or poorly documented. [To verify]: Google does not publish precise SLAs for indexing delays via sitemaps, which fuels the temptation to look for shortcuts.

What Are the Grey Areas in This Statement?

Mueller states that incorrect use does not incur penalties. Technically correct, but incomplete: if you exhaust your quota with irrelevant URLs, you tie up the resources you need for genuinely eligible content.

Second point: he says the API "can speed up crawling." This conditional deserves attention. In which cases? For what volumes? With what average latency? [To verify]: no figures support this claim, so we remain in the dark.

When Does the Indexing API Provide Real Added Value?

For job boards and recruitment sites, the Indexing API remains a valuable tool. A job posting lives for a few days to a few weeks, so every hour counts. Even a modest crawl acceleration can make the difference between visibility and invisibility.

The same goes for livestreaming platforms: new content must appear in rich results quickly. In practice, however, the vast majority of websites have no reason to use this API.
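For an eligible page such as a job posting, a notification is a single JSON POST to the `urlNotifications:publish` endpoint of the Indexing API v3, with type `URL_UPDATED` or `URL_DELETED`. The sketch below only builds that request with Python's standard library. It assumes you have already obtained an OAuth 2.0 access token for a service account authorized with the `https://www.googleapis.com/auth/indexing` scope; the token and the `build_publish_request` helper are placeholders, and the code deliberately sends nothing.

```python
import json
import urllib.request

# Indexing API v3 endpoint for URL notifications.
PUBLISH_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"


def build_publish_request(url: str, access_token: str, deleted: bool = False) -> urllib.request.Request:
    """Build (but do not send) a publish notification for one eligible URL."""
    # URL_UPDATED for new or changed pages, URL_DELETED for removed ones,
    # e.g. an expired job posting.
    body = {"url": url, "type": "URL_DELETED" if deleted else "URL_UPDATED"}
    return urllib.request.Request(
        PUBLISH_ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Placeholder: a real token comes from a service-account OAuth flow.
            "Authorization": f"Bearer {access_token}",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_publish_request("https://example.com/jobs/123", "PLACEHOLDER_TOKEN")
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # Actually sending it would be urllib.request.urlopen(req), which needs a valid token.
```

Sending a `URL_DELETED` notification when a posting expires is the other half of the workflow for job boards: the listing should leave the results as quickly as it entered them.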

Practical impact and recommendations

What Should You Actually Do If You Manage a News Site?

Forget the Indexing API. Focus on the official channels built for news: News sitemap, RSS feed, and Publisher Center. These tools are designed to detect and index new articles quickly.

Make sure your News sitemap is updated in real time, ideally by an automated system that regenerates it as soon as an article is published. Google crawls these sitemaps at high frequency, often several times per hour for recognized news sites.
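A minimal sketch of that automated regeneration step, using only Python's standard library. It assumes your CMS can hand over a list of articles with URL, title and timezone-aware publication time (the dict keys and the `build_news_sitemap` name are illustrative). It applies Google's published News sitemap rules (articles from the last two days only, at most 1,000 entries) and drops duplicate URLs, which also keeps the file free of stale and repeated entries.

```python
from datetime import datetime, timedelta, timezone
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
NEWS_NS = "http://www.google.com/schemas/sitemap-news/0.9"
ET.register_namespace("", SITEMAP_NS)  # default namespace for <urlset>, <url>, <loc>
ET.register_namespace("news", NEWS_NS)

# Google's News sitemap guidelines: only articles from the last two days,
# and at most 1,000 <news:news> entries per sitemap.
MAX_AGE = timedelta(days=2)
MAX_ENTRIES = 1000


def build_news_sitemap(articles, publication_name, language, now=None):
    """articles: iterable of dicts with 'url', 'title', 'published' (aware datetime)."""
    now = now or datetime.now(timezone.utc)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    seen = set()
    # Newest first, so duplicates keep their latest version and the cap drops the oldest.
    for art in sorted(articles, key=lambda a: a["published"], reverse=True):
        if art["url"] in seen or now - art["published"] > MAX_AGE:
            continue  # skip duplicates and stale articles
        if len(seen) >= MAX_ENTRIES:
            break
        seen.add(art["url"])
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = art["url"]
        news = ET.SubElement(url, f"{{{NEWS_NS}}}news")
        pub = ET.SubElement(news, f"{{{NEWS_NS}}}publication")
        ET.SubElement(pub, f"{{{NEWS_NS}}}name").text = publication_name
        ET.SubElement(pub, f"{{{NEWS_NS}}}language").text = language
        ET.SubElement(news, f"{{{NEWS_NS}}}publication_date").text = art["published"].isoformat()
        ET.SubElement(news, f"{{{NEWS_NS}}}title").text = art["title"]
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    demo = [{"url": "https://example.com/news/story", "title": "Example story",
             "published": now - timedelta(minutes=5)}]
    print(build_news_sitemap(demo, "Example News", "en"))
```

Calling this from the CMS publish hook, rather than from a periodic job, is what keeps the sitemap aligned with the "as soon as an article is published" goal.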

Second lever: the Publisher Center. If you haven’t set it up yet, you are missing out on a major indexing accelerator. This tool helps Google detect your new content quickly and integrate it into Google News.

What Mistakes Should You Absolutely Avoid with the Indexing API?

First mistake: believing the API can replace a well-designed sitemap. The Indexing API is not a mass-indexing tool; it was designed to flag a limited number of critical URLs per day, not hundreds or thousands.

Second mistake: using it on ineligible content in the hope of "forcing" indexing. Google ignores these requests or routes them through its standard pipelines. Result: you spend technical resources for zero benefit.

How Can You Check if Your Indexing Strategy Is Optimal?

Measure the delay between publication and indexing for your articles. If this delay consistently exceeds 30 minutes, the problem is not the absence of the Indexing API: it stems from your technical infrastructure, your crawl budget, or insufficient quality signals.

Audit your News sitemap: does it contain outdated URLs? Duplicates? Articles dated several weeks back? A polluted sitemap slows the detection of new content, so clean it rigorously.
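To put the 30-minute threshold into practice, here is a minimal sketch that summarizes publication-to-indexing delays. It assumes you already collect, for each article, its publication time and the time you first observed it indexed (how you obtain that second timestamp is up to you; this sketch does not fetch anything from Google). Treating a median above 30 minutes as "consistently" too slow is one reasonable reading, not an official rule, and the `report` helper and sample timestamps are made up for illustration.

```python
from datetime import datetime, timedelta
from statistics import median

# Threshold from the text: delays consistently above 30 minutes deserve investigation.
THRESHOLD = timedelta(minutes=30)


def report(records, threshold=THRESHOLD):
    """records: iterable of (url, published_at, indexed_at); indexed_at is None if not seen yet."""
    records = list(records)
    # Articles not yet observed in the index are listed separately.
    pending = sorted(url for url, _, indexed_at in records if indexed_at is None)
    delays = {
        url: indexed_at - published_at
        for url, published_at, indexed_at in records
        if indexed_at is not None
    }
    slow = sorted(url for url, d in delays.items() if d > threshold)
    return {
        "median": median(delays.values()) if delays else None,
        "slow_share": len(slow) / len(delays) if delays else None,
        "slow": slow,
        "pending": pending,
    }


if __name__ == "__main__":
    t0 = datetime(2021, 12, 31, 8, 0)
    sample = [
        ("https://example.com/news/a", t0, t0 + timedelta(minutes=12)),
        ("https://example.com/news/b", t0, t0 + timedelta(minutes=55)),
        ("https://example.com/news/c", t0, None),  # not indexed yet
    ]
    summary = report(sample)
    print(summary)
    if summary["median"] is not None and summary["median"] > THRESHOLD:
        print("Median delay above 30 minutes: check infrastructure, crawl budget, quality signals.")
```

Using the median rather than the mean keeps one unusually slow article from dominating the verdict, while the `slow` list still points at the individual URLs worth inspecting.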

❓ Frequently Asked Questions

Can I use the Indexing API for my blog posts or product pages?

What is the difference between speeding up crawling and speeding up indexing?

Which tools does Google recommend for news sites?

Is there a penalty if I use the Indexing API incorrectly?

How many URLs can I submit via the Indexing API?

🎥 From the same video 13

Other SEO insights extracted from this same Google Search Central video · published on 31/12/2021
