Official statement

What you need to understand

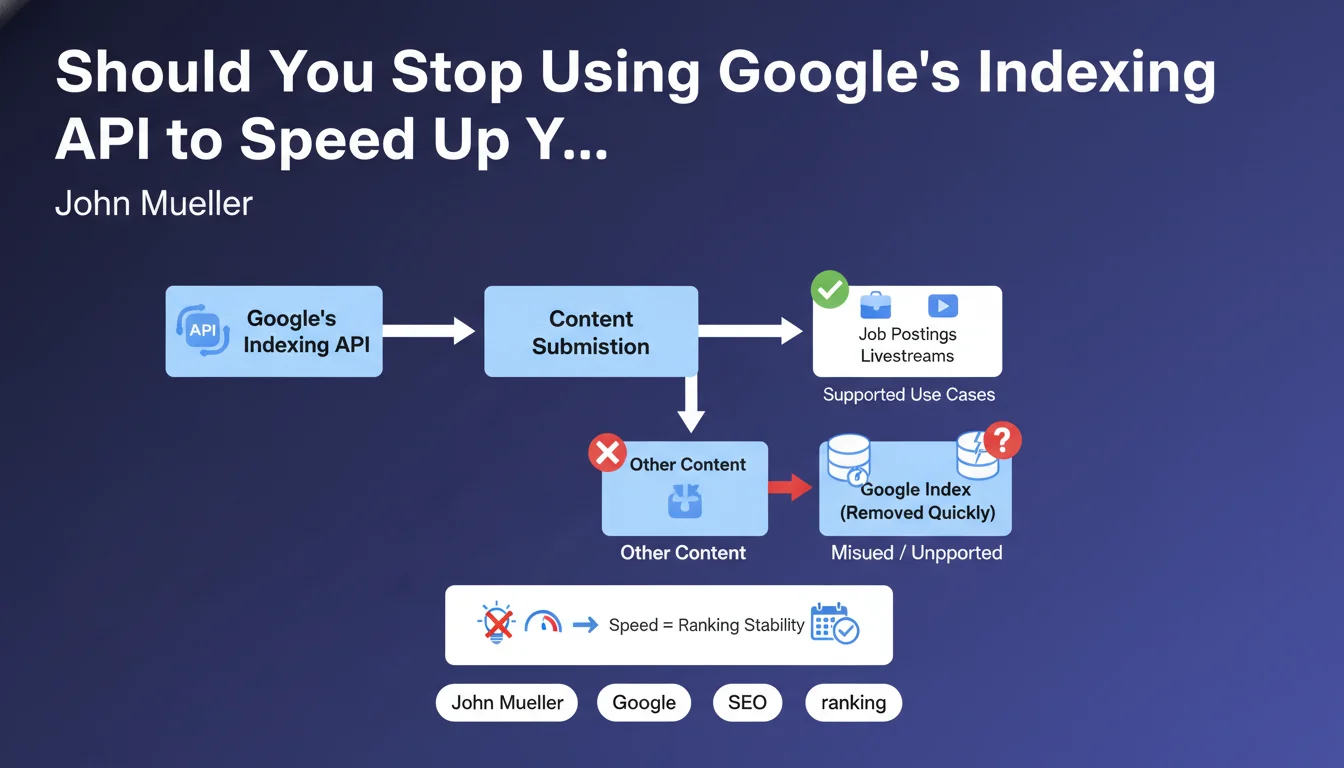

Google is warning against misuse of its Indexing API, a technical tool officially intended for only two specific use cases: job postings (pages with JobPosting structured data) and livestream videos (pages with a BroadcastEvent embedded in a VideoObject).

Many SEO practitioners have discovered that this API can significantly accelerate the indexing of any type of content, far beyond these two authorized categories. The practice has spread throughout the SEO community as a way to bypass natural indexing delays.

Google now clearly states that this abusive use has negative consequences: even if content can be temporarily indexed faster, it is then frequently removed from the index, making the technique counterproductive.

- The Indexing API is only intended for 2 types of content: job postings and livestreams

- Using it for other content is considered technical spam

- Content indexed through misuse is often deindexed quickly

- This practice can potentially harm your site's reputation with Google
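The two eligible categories correspond to specific structured data: Google's documentation limits the Indexing API to pages carrying JobPosting markup or a BroadcastEvent embedded in a VideoObject. Below is a minimal Python sketch (standard library only) of how one might check a page's JSON-LD for those types before submitting it. The function names are hypothetical, and the check only looks at JSON-LD, not Microdata or RDFa, so treat it as a rough pre-filter rather than an official validator.

```python
import json
from html.parser import HTMLParser


class _JsonLdExtractor(HTMLParser):
    """Collects the text content of every <script type="application/ld+json"> tag."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data  # data may arrive in several chunks


def _walk(node):
    """Yield every dict in a (possibly nested) JSON-LD structure."""
    if isinstance(node, dict):
        yield node
        for value in node.values():
            yield from _walk(value)
    elif isinstance(node, list):
        for item in node:
            yield from _walk(item)


def _types(obj):
    """Return the @type of a JSON-LD object as a set (it may be a string or a list)."""
    t = obj.get("@type", [])
    return {t} if isinstance(t, str) else set(t)


def is_indexing_api_eligible(html: str) -> bool:
    """True if the page has JobPosting, or a VideoObject with an embedded BroadcastEvent."""
    parser = _JsonLdExtractor()
    parser.feed(html)
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed markup: skip it rather than crash the audit
        for obj in _walk(data):
            types = _types(obj)
            if "JobPosting" in types:
                return True
            if "VideoObject" in types:
                # Livestreams: the BroadcastEvent sits in the VideoObject's "publication"
                for child in _walk(obj.get("publication", [])):
                    if "BroadcastEvent" in _types(child):
                        return True
    return False
```

Running it over the URLs you currently push to the API gives a quick list of submissions that fall outside the two authorized categories.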

SEO Expert opinion

This clarification is part of Google's ongoing action against large-scale technical manipulation. Field observations are consistent with it: content pushed through the API outside the authorized use cases shows significant volatility in the index, appearing quickly and then dropping out.

That said, some sites have seen significant temporary gains with this technique, which explains its popularity. But the risk is now too high, especially for established sites with a reputation to protect.

The underlying problem remains that natural indexing is too slow, which pushes some SEOs toward these workarounds. Google should also work on shortening standard indexing times for legitimate sites that publish fresh, quality content.

Practical impact and recommendations

- Audit your current practices: check whether you or your service providers are using the Indexing API for content other than job postings and livestreams

- Immediately disable any non-compliant use of the API to avoid potential penalties

- Return to fundamentals: manage your crawl budget through robots.txt (keeping Googlebot away from low-value URLs) and a clear site architecture

- Use Search Console correctly: submit strategic URLs manually with the URL Inspection tool's "Request indexing" feature (sparingly)

- Improve your internal linking to facilitate natural discovery of your new pages by Googlebot

- Create and maintain a quality XML sitemap that is updated automatically, referenced in robots.txt, and submitted in Search Console

- Work on your site's authority: a trusted site with natural backlinks generally sees its new content indexed more quickly

- Improve server performance: a responsive server with fast response times lets Googlebot crawl more pages, which supports faster indexing

- If you actually manage job postings or livestreams: use the API as described in the official documentation, with the appropriate structured data (JobPosting, or a BroadcastEvent embedded in a VideoObject)
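For the robots.txt recommendation above, a quick sanity check is to confirm that your rules do not block pages you want indexed. This sketch uses Python's standard `urllib.robotparser`; the robots.txt content and URLs are hypothetical examples, and `blocked_urls` is an illustrative helper name. Note that this parser implements the original prefix-matching rules and does not support Google's `*` and `$` wildcard extensions, so its verdict is only approximate for wildcard rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep crawlers out of internal search and cart pages,
# and point them to the sitemap.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart

Sitemap: https://www.example.com/sitemap.xml
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


if __name__ == "__main__":
    pages = [
        "https://www.example.com/jobs/123",
        "https://www.example.com/search?q=developer",
    ]
    print(blocked_urls(ROBOTS_TXT, pages))
```

Any strategic page that shows up in the result is one Googlebot will not crawl, whatever else you do to speed up indexing.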
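For the internal linking recommendation above, one common way to spot pages that are hard for Googlebot to discover is to compute each page's click depth from the homepage with a breadth-first search over the site's internal links, and to list known pages (for example, those in the sitemap) that no link path reaches at all. The link graph and function names below are hypothetical; in practice the graph would come from a crawl of your own site.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage: minimum number of clicks to reach each page."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # first visit in BFS order is the shortest path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def orphan_pages(links: dict[str, list[str]], known_pages: list[str], home: str = "/") -> list[str]:
    """Pages known to exist (e.g. from the sitemap) that no internal link path reaches."""
    reachable = click_depths(links, home)
    return sorted(set(known_pages) - set(reachable))


if __name__ == "__main__":
    # Hypothetical internal link graph: page -> pages it links to
    site = {
        "/": ["/jobs/", "/blog/"],
        "/jobs/": ["/jobs/123"],
        "/blog/": [],
    }
    print(click_depths(site))  # {'/': 0, '/jobs/': 1, '/blog/': 1, '/jobs/123': 2}
    print(orphan_pages(site, ["/", "/jobs/123", "/old-landing-page"]))  # ['/old-landing-page']
```

New pages that end up orphaned or many clicks deep are good candidates for additional links from category pages or the homepage.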
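For the XML sitemap recommendation above, a minimal generator can be written with Python's standard `xml.etree.ElementTree`, following the sitemaps.org 0.9 protocol (`<urlset>`, `<url>`, `<loc>`, `<lastmod>`). The `build_sitemap` name and its input list are hypothetical; in a real setup the list would be pulled from your CMS each time content is published, and the resulting file referenced with a `Sitemap:` line in robots.txt and submitted in Search Console.

```python
import xml.etree.ElementTree as ET
from datetime import date

# Namespace defined by the sitemaps.org protocol, version 0.9
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, date]]) -> str:
    """Return a sitemap.xml document listing each URL with its last-modified date.

    `pages` is a hypothetical input: (absolute URL, last modification date) pairs.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # ElementTree escapes "&" and similar characters
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()  # YYYY-MM-DD
    # Prepend the XML declaration explicitly so the output does not depend on the locale
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_sitemap([
        ("https://www.example.com/", date(2024, 9, 1)),
        ("https://www.example.com/jobs/123", date(2024, 9, 3)),
    ]))
```

Keeping `lastmod` accurate (only changing it when the content really changes) is what makes the sitemap useful as a freshness signal.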
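For sites that do publish job postings or livestreams, a publish call to Google's Indexing API is a POST request to `https://indexing.googleapis.com/v3/urlNotifications:publish` with a JSON body containing the page `url` and a `type` of `URL_UPDATED` or `URL_DELETED`. The sketch below only builds that request with the standard library. It assumes you already hold an OAuth 2.0 access token for a service account with the `https://www.googleapis.com/auth/indexing` scope (obtaining the token is out of scope here), and `build_publish_request` is a hypothetical helper name, not part of any Google library.

```python
import json
import urllib.request

PUBLISH_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"
ALLOWED_TYPES = {"URL_UPDATED", "URL_DELETED"}


def build_publish_request(page_url: str, access_token: str,
                          notification_type: str = "URL_UPDATED") -> urllib.request.Request:
    """Build (but do not send) an Indexing API publish request for one URL.

    Assumption: access_token is an OAuth 2.0 token obtained elsewhere for a service
    account with the https://www.googleapis.com/auth/indexing scope.
    Only call this for job-posting or livestream pages.
    """
    if notification_type not in ALLOWED_TYPES:
        raise ValueError(f"unsupported notification type: {notification_type}")
    body = json.dumps({"url": page_url, "type": notification_type}).encode("utf-8")
    return urllib.request.Request(
        PUBLISH_ENDPOINT,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {access_token}",
        },
        method="POST",
    )
```

Sending it is then a matter of `urllib.request.urlopen(request)`. Keeping the call behind an eligibility check, and within your project's quota, is what separates compliant use from the misuse Google describes.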

Implementing an optimal and compliant indexing strategy requires a thorough understanding of Google's technical mechanisms and a personalized approach depending on your site type. These optimizations touch on sensitive technical aspects (architecture, crawl budget, dynamic sitemaps) that require specialized expertise. For sites with significant rapid indexing challenges, support from a specialized SEO agency can prove valuable in implementing a sustainable strategy, avoiding technical pitfalls, and ensuring full compliance with Google's guidelines.
