Official statement

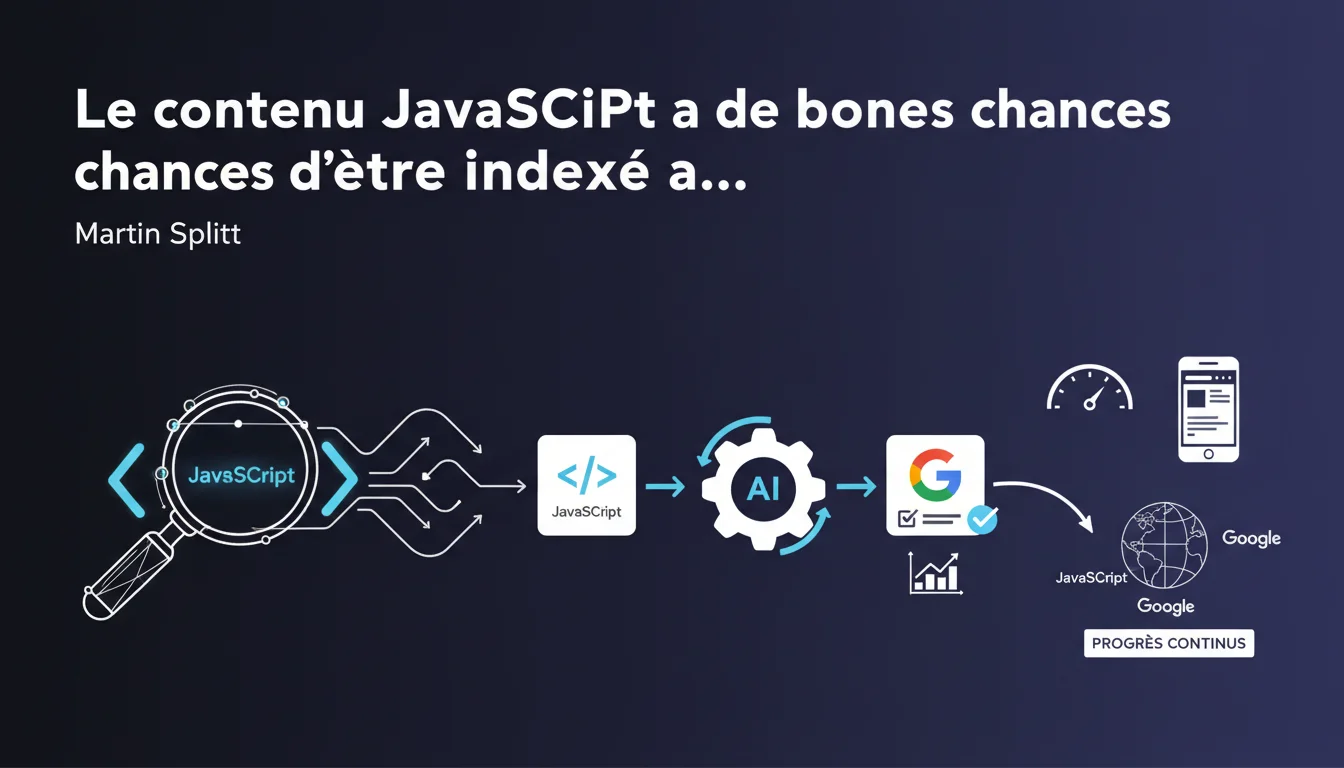

Google claims that content generated through JavaScript has a good chance of being indexed now, even though the issue isn't entirely resolved. JavaScript indexing has significantly improved in recent years, but it remains a technical challenge with some gray areas. In practical terms? Relying solely on JavaScript is still risky for critical content.

What you need to understand

What does "good chance of being indexed" really mean?

This cautious phrasing by Martin Splitt reflects the reality on the ground: JavaScript indexing is not 100% guaranteed. Google has indeed made progress in rendering client-side content, but a "good chance" is not the same as certainty.

The core issue remains crawl budget and the cost of rendering. Google first crawls the raw HTML, then has to execute the JavaScript in a separate rendering step to retrieve the final content. This two-step process can cause indexing delays, or even outright failures if resources are limited.
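To make the two-step process concrete, here is a minimal sketch of what is available before any JavaScript runs. It extracts the visible text from a raw HTML string and reports which critical phrases are absent. The sample pages and the function name `phrases_missing_from_raw_html` are illustrative assumptions, and this is not a model of Googlebot's actual pipeline.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text nodes, ignoring the contents of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html):
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def phrases_missing_from_raw_html(raw_html, phrases):
    """Return the phrases that only JavaScript execution could make visible."""
    text = visible_text(raw_html).lower()
    return [p for p in phrases if p.lower() not in text]


# Hypothetical client-side-rendered shell: the content exists only inside a script.
CSR_SHELL = (
    '<html><body><div id="root"></div>'
    '<script>document.getElementById("root").textContent = "Blue widget";</script>'
    "</body></html>"
)

# Hypothetical server-rendered page: the content is already in the HTML.
SSR_PAGE = '<html><body><div id="root"><h1>Blue widget</h1></div></body></html>'

if __name__ == "__main__":
    print(phrases_missing_from_raw_html(CSR_SHELL, ["Blue widget"]))
    print(phrases_missing_from_raw_html(SSR_PAGE, ["Blue widget"]))
```

Any phrase reported as missing depends entirely on the second, deferred rendering step.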

Why does Google mention "huge progress"?

Historically, Google struggled to handle JavaScript at all, and much dynamic content was effectively invisible to Googlebot. Since the introduction of its Chromium-based rendering engine, things have changed.

But let's be honest: "huge progress" doesn't mean "problem solved." Complex sites with heavy JS, poorly configured React/Vue/Angular frameworks, or prohibitive load times still encounter difficulties. Deferred rendering can take several days, or may never happen at all.

What are the key takeaways?

- JavaScript indexing works better than before, but it remains unpredictable for strategic content

- Google employs a two-step process: raw HTML crawl, then JS rendering if budget allows

- Sites with limited resources (low authority, reduced crawl budget) suffer more delays

- SSR (Server-Side Rendering), with or without client-side hydration, remains the safest way to ensure indexing

- JavaScript is not a categorical "no," but a "yes under conditions"

SEO Expert opinion

Is this statement consistent with observed practices on the ground?

Yes and no. On well-optimized sites with a good crawl budget, Google does index a lot of JS content. But as soon as you step off the beaten path (new sites, low authority, poorly structured JS), problems arise.

Empirical tests show that critical content should never depend solely on JavaScript. Even with the "huge progress" mentioned, there are still pages where JS content only appears in the index after several weeks. [To be verified]: Google does not publish any metrics on JS rendering success rates, which makes quantitative evaluation difficult.

What nuances should we consider in this announcement?

Splitt mentions "good chances," not guarantees. This is crucial. JS rendering depends on multiple factors: crawl budget, code complexity, execution time, server resources. A site can function perfectly in JS for the user and still remain partially invisible to Google.

And that's where it gets tricky. Modern frameworks (Next.js, Nuxt) offer SSR or SSG precisely because they know that pure CSR remains unpredictable. The existence of these tools indicates that the problem is not "resolved."

In what cases does this rule not apply?

For sites with low authority or deep pages with few backlinks, JS rendering remains a risky bet. Google allocates fewer resources to these pages, so dynamic content may simply never get rendered.

The same goes for e-commerce sites with thousands of product pages generated in JS. Rendering demand outstrips the crawl budget, rendering lags, and some pages remain invisible for weeks. SSR or partial hydration then becomes essential.

Practical impact and recommendations

What should you actually do with this information?

First, audit your current JavaScript implementation. Use Search Console to verify that Google sees the final content after rendering. Compare the raw HTML with the fully rendered version shown by the URL Inspection tool.
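The raw-versus-rendered comparison can be sketched as a small script. It assumes you have saved two HTML strings yourself: the raw source and the rendered HTML copied from the URL Inspection tool. The helper name `rendered_only` and the sample markup are hypothetical. The script lists the text and links that exist only after rendering.

```python
from html.parser import HTMLParser


class ContentCollector(HTMLParser):
    """Collects visible text chunks and link targets from an HTML string."""

    def __init__(self):
        super().__init__()
        self._ignored_depth = 0  # > 0 while inside <script> or <style>
        self.texts = set()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._ignored_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._ignored_depth:
            self._ignored_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())  # normalize whitespace
        if text and not self._ignored_depth:
            self.texts.add(text)


def collect(html):
    parser = ContentCollector()
    parser.feed(html)
    parser.close()
    return parser


def rendered_only(raw_html, rendered_html):
    """Return text and links present in the rendered DOM but not in the raw HTML."""
    raw, rendered = collect(raw_html), collect(rendered_html)
    return {
        "text": sorted(rendered.texts - raw.texts),
        "links": sorted(rendered.links - raw.links),
    }


# Hypothetical sample inputs standing in for your saved files.
RAW = (
    '<html><body><h1>Shop</h1><div id="app"></div>'
    '<script src="/app.js"></script></body></html>'
)
RENDERED = (
    '<html><body><h1>Shop</h1><div id="app"><p>Blue widget</p>'
    '<a href="/widgets/blue">Details</a></div>'
    '<script src="/app.js"></script></body></html>'
)

if __name__ == "__main__":
    print(rendered_only(RAW, RENDERED))
```

Everything this reports is content that Google can only discover if the rendering step succeeds, so internal links in that list deserve particular attention.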

Next, identify critical content: titles, meta descriptions, main text, internal links. This content should never rely solely on JavaScript. Prefer SSR, SSG, or at least progressive hydration of server-rendered HTML.

What mistakes should you absolutely avoid?

Don’t blindly trust Google’s statements. A “good chance” is not a binding promise. Testing, measuring, and verifying is the only reliable approach.

Avoid generating critical SEO elements (title tags, meta descriptions, structured data) solely in JS. Even if Google can retrieve them, rendering delays may hold back initial indexing.

- Check that the main content appears in the raw HTML (View Source)

- Test the rendering with the URL Inspection tool in Search Console

- Prefer SSR/SSG for strategic pages (home, categories, product sheets)

- Monitor crawl budget and rendering errors via Search Console

- Implement an HTML fallback for essential content

- Avoid heavy JS frameworks without prior optimization
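The first checklist item can be partly automated. The sketch below assumes you already have the raw HTML as a string (for example, saved from View Source). It reports whether a title, a meta description, and a JSON-LD block are present before any JavaScript runs. The function name `audit_raw_html` and the sample pages are illustrative.

```python
from html.parser import HTMLParser


class SeoTagAudit(HTMLParser):
    """Records the title, meta description and JSON-LD presence in raw HTML."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""
        self.meta_description = None
        self.has_json_ld = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.meta_description = attributes.get("content")
        elif tag == "script" and (attributes.get("type") or "").lower() == "application/ld+json":
            self.has_json_ld = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_raw_html(raw_html):
    """Return which critical SEO elements exist without JavaScript execution."""
    parser = SeoTagAudit()
    parser.feed(raw_html)
    parser.close()
    return {
        "title": bool(parser.title.strip()),
        "meta_description": bool((parser.meta_description or "").strip()),
        "structured_data": parser.has_json_ld,
    }


# Hypothetical pages: one with server-side tags, one empty client-side shell.
COMPLETE_PAGE = (
    "<html><head><title>Blue widget</title>"
    '<meta name="description" content="A sturdy blue widget.">'
    "</head><body></body></html>"
)
EMPTY_SHELL = '<html><head><title></title></head><body><div id="root"></div></body></html>'

if __name__ == "__main__":
    print(audit_raw_html(COMPLETE_PAGE))
    print(audit_raw_html(EMPTY_SHELL))
```

A `False` value means the element is either missing or injected by JavaScript, which is exactly the dependency the checklist tries to eliminate.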

How can you ensure reliable indexing of dynamic content?

The most robust solution remains Server-Side Rendering or static generation. Next.js, Nuxt, and SvelteKit offer these options natively. You serve complete HTML on the first request, so Google can index the content without waiting for the rendering step.
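The principle behind SSR can be shown without any framework. The following is a minimal sketch using only the Python standard library, not how Next.js, Nuxt, or SvelteKit implement it. The product data, the `/static/enhance.js` path, and the function names are assumptions made for illustration.

```python
from html import escape


def render_product_page(product):
    """Build the complete HTML on the server, so the first response has the content."""
    name = escape(product["name"])
    description = escape(product["description"])
    return (
        "<!doctype html>\n"
        '<html lang="en">\n'
        "<head>\n"
        f"  <title>{name}</title>\n"
        f'  <meta name="description" content="{description}">\n'
        "</head>\n"
        "<body>\n"
        f"  <h1>{name}</h1>\n"
        f"  <p>{description}</p>\n"
        # Optional script (hypothetical path): it enriches the page, it does not create it.
        '  <script src="/static/enhance.js" defer></script>\n'
        "</body>\n"
        "</html>\n"
    )


def app(environ, start_response):
    """Minimal WSGI application that serves the pre-rendered page."""
    product = {"name": "Blue widget", "description": "A sturdy blue widget."}
    body = render_product_page(product).encode("utf-8")
    start_response(
        "200 OK",
        [
            ("Content-Type", "text/html; charset=utf-8"),
            ("Content-Length", str(len(body))),
        ],
    )
    return [body]


if __name__ == "__main__":
    print(render_product_page({"name": "Blue widget", "description": "A sturdy blue widget."}))
```

To try it locally, the app can be served with `wsgiref.simple_server.make_server("", 8000, app).serve_forever()`. The title, description, and main text are all present in the very first HTML response.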

If full SSR is not feasible, opt for progressive enhancement: essential content arrives as HTML, and JS enriches the user experience afterward. It’s an acceptable compromise.

❓ Frequently Asked Questions

Does Google index all JavaScript content without exception?

Is SSR mandatory to rank well with JavaScript?

How can you check that Google actually sees your JS content?

Are modern frameworks like React compatible with SEO?

How long does Google take to render a JavaScript page?

Source: Google Search Central video, published on 11/01/2022.
