Official statement

Other statements from this video (12)

- Is E-A-T really not a Google ranking factor?

- Does having multiple URLs for the same content really trigger a Google penalty?

- Why does Google refuse to reveal the complete formula behind its ranking algorithm?

- Should you embrace experimentation to unlock your SEO potential?

- Does eliminating redirect chains really protect your crawl budget from wasting resources?

- Is the impact/effort matrix really the key to prioritizing your SEO tasks effectively?

- Should SEOs dictate technical solutions to developers, or simply expose the SEO problems they've identified?

- Does choosing between 301 and 302 redirects really impact your Google rankings?

- Is developing content that remains invisible to search engines really worth your time and effort?

- Does Google really push algorithm updates every single minute?

- Should you really integrate SEO from the development phase to avoid costly fixes later?

- Can SEO pages without real user value still rank in Google?

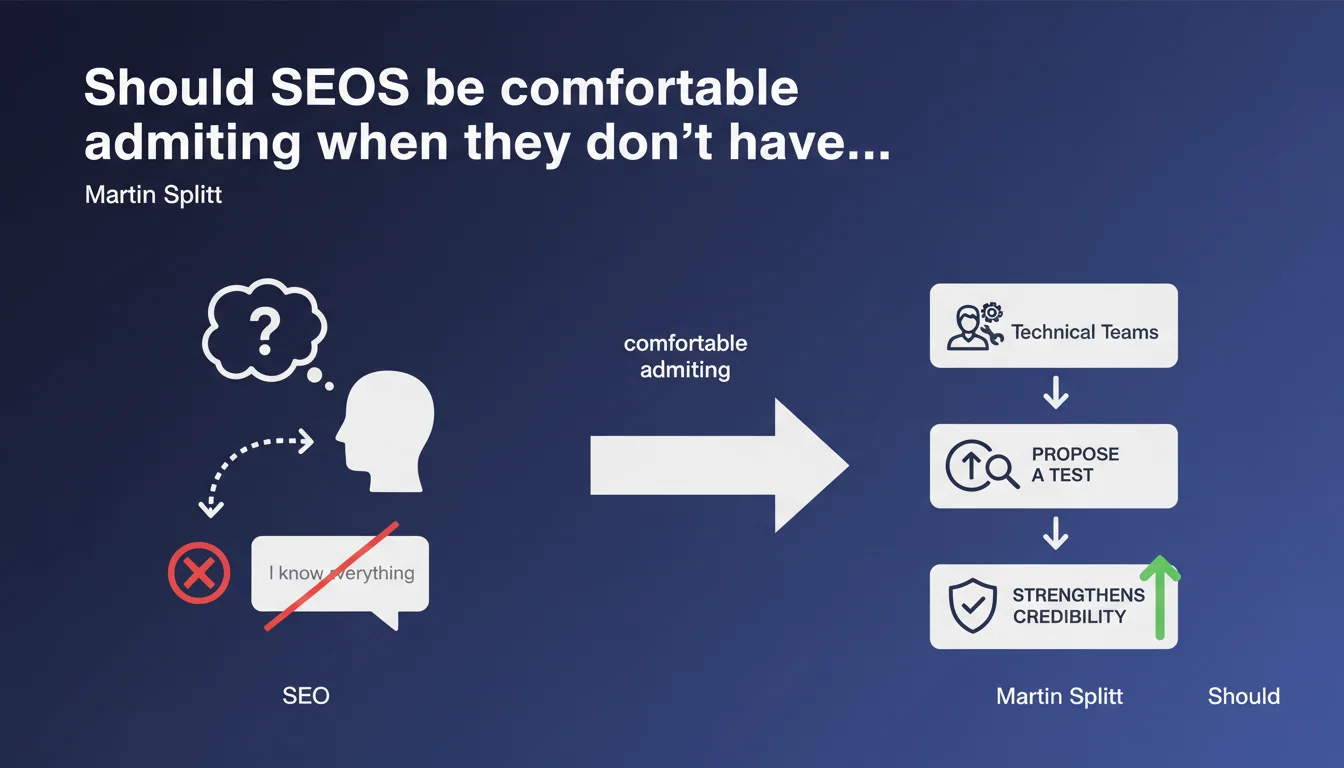

Martin Splitt encourages SEOs to honestly say "I don't know" rather than bluff, just like developers do. This honesty paired with a testing proposal strengthens credibility with technical teams and opens the door to healthier collaboration. The advice may seem obvious, but it addresses a real problem: the pressure to appear omniscient.

What you need to understand

Why does Martin Splitt emphasize this point?

The statement targets a recurring flaw in our profession: the temptation to deliver a definitive answer even when Google's exact mechanisms remain opaque. Developers learned long ago that admitting a gap and then proposing a test is more productive than bluffing.

Splitt encourages us to adopt this posture. Concretely, this means an SEO who says "I don't know precisely, but we can test by measuring X and Y" gains credibility — especially in front of technical teams that spot bluffing from a mile away.

What does this change practically for SEOs?

First, it relieves pressure. Nobody knows every detail of Google's algorithm, not even Mountain View employees. Second, it shifts the focus: instead of pretending to know everything, the SEO expert becomes the one who knows how to ask the right questions and structure tests.

For clients and internal teams, it signals maturity. A consultant who admits their limits while proposing a validation method inspires more confidence than a guru who asserts truths without nuance.

What are the risks of ignoring this advice?

The main danger? Losing all credibility the day a categorical claim turns out to be false. Technical teams don't forget. Once you've claimed certainty about something that turned out to be hot air, it's hard to win back their trust.

Second risk: getting locked into rigid strategies based on unfounded certainties. SEO evolves quickly — what worked yesterday may not work tomorrow. Admitting uncertainty allows you to stay agile and adjust tactics based on real results.

- Honesty strengthens credibility, especially with technical teams

- Proposing a test after "I don't know" transforms the admission into constructive action

- Bluffing has a high cost: loss of trust, rigid strategies, team resistance

- Nobody knows all the algorithm details — even Google only communicates general principles

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes, and it matters a great deal. I've seen entire projects stall because an SEO asserted an unfounded "truth", which triggered pushback from developers. Conversely, the smoothest collaborations emerge when the SEO frankly admits gray areas and proposes measurable A/B tests.

But let's be honest: this posture requires a level of autonomy and legitimacy. A junior who repeats "I don't know" without ever proposing a validation method quickly loses trust. The admission of ignorance only works if followed by a rigorous approach.

In what contexts can this approach pose problems?

With a client or boss who expects immediate certainty, saying "I don't know" can be mistaken for incompetence. [To verify]: company culture plays a huge role here. In some environments, admitting uncertainty is valued; in others, it's seen as weakness.

Second tricky context: emergencies. When a site has just lost 60% of its traffic after an update, decision-makers want quick action. In that case, you need to strike a balance: admit you don't have all the answers while proposing an action plan built on testable hypotheses.

What nuances should be added to this advice?

Splitt is right in principle, but you must distinguish what we don't know for lack of data (e.g., the precise impact of parameter X on ranking) from what we should know (e.g., how robots.txt works). An expert who doesn't know the fundamentals can't hide behind this advice.

Another nuance: the test proposal must be realistic. Saying "we'll test" on a site with neither traffic nor technical resources is hot air. Honesty also means admitting when a test isn't feasible and proposing an alternative (benchmarking, competitive analysis, etc.).

Practical impact and recommendations

How to integrate this posture into your daily practice?

First step: identify gray areas. List the recurring questions you answer out of habit even though you lack real certainty. For each one, rephrase your answer to separate what's established (e.g., Google uses backlinks as a signal) from what's hypothesis (e.g., a link from site X carries more weight than a link from site Y).

Next, prepare simple testing protocols for frequent hypotheses. If a client asks "should the keyword be in the H1?", you can answer: "Data shows a correlation, but causality is uncertain. We can test by deploying the change on a sample of pages and comparing their impressions over 4 weeks with those of similar, unchanged pages."
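The H1 test described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a protocol endorsed by Google or a standard tool. It assumes you already have per-page impression counts for a "before" and an "after" window (for instance, exported from Search Console). The function names `assign_group`, `relative_change` and `estimated_lift`, and the example.com URLs, are hypothetical. Pages are split by hashing their URL so nobody hand-picks which pages get the change. The control group's change is then subtracted, which filters out site-wide trends such as seasonality or an algorithm update.

```python
import hashlib
from statistics import mean

# Sketch of a page-split SEO test (hypothetical helper names, not a standard API).
# Assumes per-page impression counts for a "before" and an "after" window,
# e.g. 4 weeks each, exported from a tool such as Search Console.


def assign_group(url: str, salt: str = "h1-test") -> str:
    """Deterministically put a URL in the 'test' or 'control' group.

    Hashing the URL avoids hand-picking pages, and changing the salt gives
    each new experiment an independent split.
    """
    digest = hashlib.sha256((salt + url).encode("utf-8")).digest()
    return "test" if digest[0] % 2 == 0 else "control"


def relative_change(before: float, after: float) -> float:
    """Relative change in impressions between the two windows."""
    if before <= 0:
        raise ValueError("each page needs impressions in the 'before' window")
    return (after - before) / before


def estimated_lift(test: list[tuple[float, float]],
                   control: list[tuple[float, float]]) -> float:
    """Mean relative change of the test group minus that of the control group.

    Subtracting the control group's change removes trends that affect every
    page (seasonality, algorithm updates): a simple difference-in-differences.
    """
    if not test or not control:
        raise ValueError("both groups need at least one page")
    test_change = mean(relative_change(b, a) for b, a in test)
    control_change = mean(relative_change(b, a) for b, a in control)
    return test_change - control_change


if __name__ == "__main__":
    # Step 1: decide which (hypothetical) pages get the H1 change.
    urls = [f"https://example.com/page-{i}" for i in range(20)]
    to_change = [u for u in urls if assign_group(u) == "test"]
    print(f"{len(to_change)} of {len(urls)} pages go in the test group")

    # Step 2: after the test window, compare groups.
    # Illustrative, made-up (before, after) impression counts per page.
    test_pages = [(1000, 1200), (500, 600)]    # H1 changed: +20% each
    control_pages = [(800, 880), (400, 440)]   # untouched: +10% each
    lift = estimated_lift(test_pages, control_pages)
    print(f"Estimated lift: {lift:.1%}")  # prints "Estimated lift: 10.0%"
```

With only a handful of pages and four weeks of data, noise can easily exceed a 10% gap. Treat a result like this as a signal worth re-testing on a larger sample rather than as proof; a more rigorous version would add a significance check before drawing conclusions.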

What mistakes should be avoided in this approach?

Mistake #1: admitting ignorance without proposing a follow-up. "I don't know" alone leaves the other person hanging. Always follow with a validation method or research to pursue.

Mistake #2: confusing honesty with passivity. Admitting you don't know doesn't relieve you of the duty to learn. If you repeat the same phrase on the same topics for months without ever digging deeper, the problem is no longer honesty — it's lack of curiosity.

Mistake #3: using this posture as an excuse to avoid making decisions. In some contexts, you must make a call even with incomplete data. What matters is owning the uncertainty and scheduling a checkpoint to adjust.

What checklist validates your approach?

- Systematically distinguish established facts from hypotheses in my recommendations

- Prepare 3-5 simple testing protocols for frequent questions (H1, meta description, URL structure, etc.)

- Train teams and clients on the probabilistic nature of SEO to frame expectations

- Frankly admit when a test isn't feasible and propose an alternative (benchmarking, case study, etc.)

- Track results of previous tests to capitalize on learnings and progressively reduce gray areas

- Never leave an "I don't know" without proposing a method or additional research

❓ Frequently Asked Questions

How do you say "I don't know" without losing the client's trust?

Does Google really share all the information needed to understand its algorithm?

What types of tests are most effective for validating an SEO hypothesis?

Is this approach compatible with environments where decisions must be made quickly?

How do you convince a technical team that is reluctant about SEO?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 26/01/2022
