Official statement

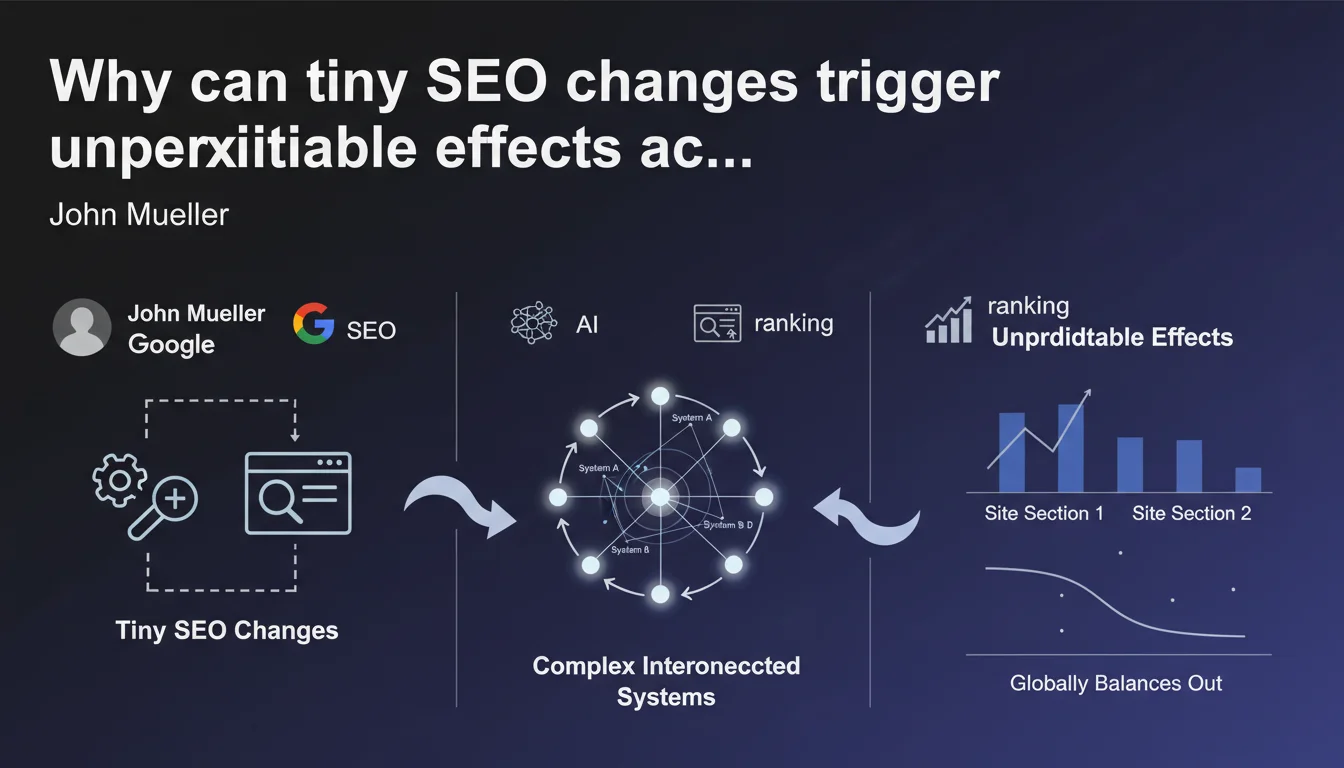

Google Search functions as a network of interconnected systems where even minor modifications can trigger unexpected ripple effects. These effects tend to balance out at the global scale, but remain visible on specific site segments or search queries. This unpredictability isn't a bug—it's a structural feature of the algorithm.

What you need to understand

John Mueller reminds us of a truth many SEO professionals forget: Google's algorithm is not a linear machine. Every technical modification, every content adjustment, every new link can trigger chain reactions that are difficult to anticipate.

This statement comes at a time when SEO professionals are constantly searching for simple cause-and-effect relationships. Yet the reality is far more complex.

What does this interconnection of systems mean in practice?

Google doesn't process your site with a single algorithm. It layers many systems on top of one another: crawling, indexing, quality evaluation, semantic relevance, user signals, link analysis, spam detection, and more.

When you modify your URL structure, you're not just touching crawling. You potentially impact internal linking, PageRank distribution, duplicate content signals, and even semantic understanding of your pages. Each system reacts in its own way, with its own timeframes.

Why are these effects so difficult to predict?

Because Google's systems don't function in isolation. They are interdependent. A change that improves your score on one criterion can degrade your position on another.

A typical example: you improve your loading speed by removing images. Technical performance gets better. But if those images added semantic richness or improved user engagement, you lose on another front. Systems adjust and compensate, but not always as you hoped.

What does it mean that "these changes tend to balance out globally"?

Mueller suggests that local fluctuations (on certain pages, certain queries) often compensate for each other at your site's overall scale. In other words: you can lose traffic on one section while gaining it on another, with no obvious reason.

This is exactly what makes SEO analysis so frustrating. You launch an optimization, overall traffic stays stable, but digging deeper you discover contradictory movements depending on page types. Google gives you no dashboard to understand these internal balances.
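To make this balancing effect concrete, here is a minimal sketch using made-up weekly click counts. The segment names and numbers are purely illustrative assumptions, not real data. It shows a site whose total stays flat while individual sections move in opposite directions.

```python
# Hypothetical weekly clicks per site section, before and after a change.
before = {"blog": 4000, "product": 3000, "category": 3000}
after = {"blog": 4600, "product": 2500, "category": 2900}


def segment_deltas(before, after):
    """Return the click change for each segment present in both periods."""
    return {name: after[name] - before[name] for name in before if name in after}


deltas = segment_deltas(before, after)
net_change = sum(deltas.values())

for name, delta in deltas.items():
    print(f"{name}: {delta:+d}")
print(f"net: {net_change:+d}")
```

Looking only at the net figure would suggest nothing happened, while the per-segment view shows three distinct movements.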

- Google's algorithm is an ecosystem of interconnected systems, not a suite of independent rules

- A small technical change can trigger unpredictable cascading effects

- Local fluctuations (by page or query) can neutralize each other at the site's global scale

- Perfect predictability in SEO is a myth, and even Google implicitly admits this

- Analyzing a change's impact requires fine-grained measurement and observation over time

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. Every experienced SEO professional has lived through this: you fix an obvious technical error (misconfigured canonicals, for instance), and instead of a clear improvement, you witness a chaotic readjustment over several weeks. Some pages rise, others fall, with no apparent logic.

What Mueller doesn't explicitly state—but what we observe regularly—is that this complexity makes causal attribution nearly impossible on certain sites. You launch three optimizations simultaneously, traffic increases: which one worked? Impossible to say with certainty.

What nuances should we add to this claim?

First point: not all changes are equal. Fixing a robots.txt error that blocks crawling will have a predictable and measurable effect. Modifying the average length of your meta descriptions? Far less clear. The complexity Mueller discusses concerns mostly marginal optimizations, not blocking errors.

Second nuance: this statement can serve as a universal excuse for Google to justify any inconsistency. Your site crashes with no reason? "It's complex, it's normal." This opacity works in Google's favor, since it never needs to give precise explanations. It remains to be verified whether this complexity is a technical necessity or a deliberate choice of non-transparency.

In which cases does this logic not apply?

On very niche or highly specialized sites with limited search volume, the balancing effects are less visible. If you only rank on 50 very specific keywords, a modification will directly impact those queries without compensation elsewhere. The global balancing Mueller mentions works mainly on sites with thematic diversity and high page volume.

Another edge case: targeted manual or algorithmic penalties. If you're hit by a spam filter or manual action, there's no magical balancing. The effect is clear, brutal, and perfectly measurable. System complexity only plays in gray areas, not in cases of obvious violations.

Practical impact and recommendations

What should you do in practice when facing this complexity?

First, document every modification you make to a site. Not just major overhauls—small tweaks too. Date, nature of change, affected pages. Without this traceability, you're flying blind.
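One possible shape for such a change log is sketched below. The `ChangeLogEntry` class, its field names, the helper `changes_affecting`, and the sample entries are all hypothetical. Any spreadsheet or ticketing tool that records the same three facts (date, nature of change, affected pages) would serve equally well.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ChangeLogEntry:
    """One site modification: when it shipped, what it was, which pages it touched."""
    deployed_on: date
    description: str
    affected_paths: tuple  # URL path prefixes, e.g. ("/blog/",)


def changes_affecting(log, path):
    """Return the entries whose affected prefixes match the path, oldest first."""
    hits = [e for e in log if any(path.startswith(p) for p in e.affected_paths)]
    return sorted(hits, key=lambda e: e.deployed_on)


# Illustrative entries only.
log = [
    ChangeLogEntry(date(2022, 5, 2), "Rewrote title tags", ("/blog/",)),
    ChangeLogEntry(date(2022, 4, 11), "Fixed canonical tags", ("/blog/", "/products/")),
    ChangeLogEntry(date(2022, 5, 20), "Compressed images", ("/products/",)),
]

for entry in changes_affecting(log, "/blog/seo-tips"):
    print(entry.deployed_on, entry.description)
```

When a page moves unexpectedly, a lookup like this lists every logged change that could have touched it, in chronological order.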

Next, segment your analyses. Never look at overall traffic alone. Break it down by page type, search intent, product category. That's where you'll detect the contradictory movements Mueller mentions.
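As a rough sketch of this segmentation, the snippet below groups clicks by page type using URL path prefixes. The prefix rules and the `(path, clicks)` row format are assumptions for illustration. Map them to whatever your own export and site structure actually look like.

```python
from collections import defaultdict

# Hypothetical mapping from URL path prefix to page type; adapt to your site.
SEGMENT_RULES = [
    ("/blog/", "editorial"),
    ("/products/", "product"),
    ("/category/", "category"),
]


def classify(path):
    """Return the page type for a URL path, or "other" if no rule matches."""
    for prefix, segment in SEGMENT_RULES:
        if path.startswith(prefix):
            return segment
    return "other"


def clicks_by_segment(rows):
    """Sum clicks per page type from an iterable of (path, clicks) pairs."""
    totals = defaultdict(int)
    for path, clicks in rows:
        totals[classify(path)] += clicks
    return dict(totals)


# Illustrative rows only.
rows = [("/blog/a", 120), ("/products/x", 80), ("/blog/b", 30), ("/about", 5)]
totals = clicks_by_segment(rows)
print(totals)
```

Running the same aggregation on two periods gives the per-segment comparison that overall traffic hides.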

Finally, extend your observation windows. An SEO change can take 4 to 8 weeks to produce its full effects. Analyzing after 10 days means you're right in the chaotic readjustment phase.
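The 4-to-8-week rule of thumb can be encoded as a simple guard before any analysis is run. This is only a sketch: the function name, the status labels, and the default thresholds are assumptions taken from the figures in this article, not from Google.

```python
from datetime import date, timedelta


def observation_status(deployed_on, today, min_weeks=4, full_weeks=8):
    """Classify how far along its observation window a change is."""
    elapsed = today - deployed_on
    if elapsed < timedelta(weeks=min_weeks):
        return "too early"      # still in the readjustment phase
    if elapsed < timedelta(weeks=full_weeks):
        return "preliminary"    # effects may not be complete yet
    return "ready"


deployed = date(2022, 5, 1)
print(observation_status(deployed, date(2022, 5, 11)))  # 10 days after the change
```

Tying this check to the change log keeps anyone from drawing conclusions 10 days after a deployment.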

What errors should you avoid in this context of uncertainty?

Error number one: launching multiple changes simultaneously. If you deploy technical refactoring, content rewriting, and a link-building campaign all at once, you'll never isolate the causes of traffic variations. Proceed step-by-step, even if it's slower.

Error number two: panicking over temporary fluctuations. Mueller's point is that some effects are transitory before systems balance out. Don't undo a solid optimization because it caused a temporary drop on a query segment.

Error number three: believing there's a magic reproducible formula. What worked on site A won't necessarily work on site B because system interactions aren't the same. Adapt, test, measure—don't blindly copy.

How can you verify that your optimizations produce the intended effect?

Set up a granular dashboard: traffic by page category, average positions by semantic cluster, click-through rates by query type. Tools like Google Search Console allow these breakdowns—if you take time to configure them.

Use A/B tests or progressive rollouts when possible. Deploy a change on 20% of your pages, observe, then scale. This requires technical rigor, but it's the only way to reach solid conclusions in such a complex environment.
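One common way to pick a stable 20% of pages is to hash each URL into a bucket, so the same page always lands in the same group. The sketch below assumes a hypothetical `in_rollout` helper and an arbitrary salt string. It illustrates the selection step only, not a full testing framework.

```python
import hashlib


def in_rollout(path, percent=20, salt="experiment-1"):
    """Deterministically decide whether a URL path belongs to the treated group."""
    digest = hashlib.sha256(f"{salt}:{path}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100  # stable bucket in the range 0..99
    return bucket < percent


# Illustrative URL paths only.
paths = [f"/products/item-{i}" for i in range(1000)]
treated = [p for p in paths if in_rollout(p)]
print(f"{len(treated)} of {len(paths)} pages selected")
```

Because the bucket depends only on the salt and the path, raising `percent` later extends the rollout without reshuffling pages that were already treated. Changing the salt produces a fresh, independent split for the next experiment.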

- Keep a detailed change log of all modifications made to the site (technical, editorial, link-building)

- Segment traffic analysis by page type, search intent, and semantic clusters

- Observe changes over minimum periods of 6 to 8 weeks before concluding an optimization is effective

- Avoid making multiple changes at the same time; proceed step by step to isolate each action's effects

- Don't panic over temporary fluctuations; let Google's systems balance themselves

- Implement A/B tests or progressive deployments to validate optimization hypotheses

- Monitor contradictory movements: some pages may lose traffic while others gain it

- Systematically question causality—temporal correlation is not proof of cause-and-effect

❓ Frequently Asked Questions

How long should you wait to measure the real impact of an SEO change?

How can you tell whether a traffic drop is temporary or lasting?

Can you really predict the impact of an SEO optimization on Google?

Why do some optimizations cause temporary drops before they work?

Should you test everything in isolation to understand what works?

Other SEO insights extracted from this same Google Search Central video · published on 26/05/2022
