Official statement

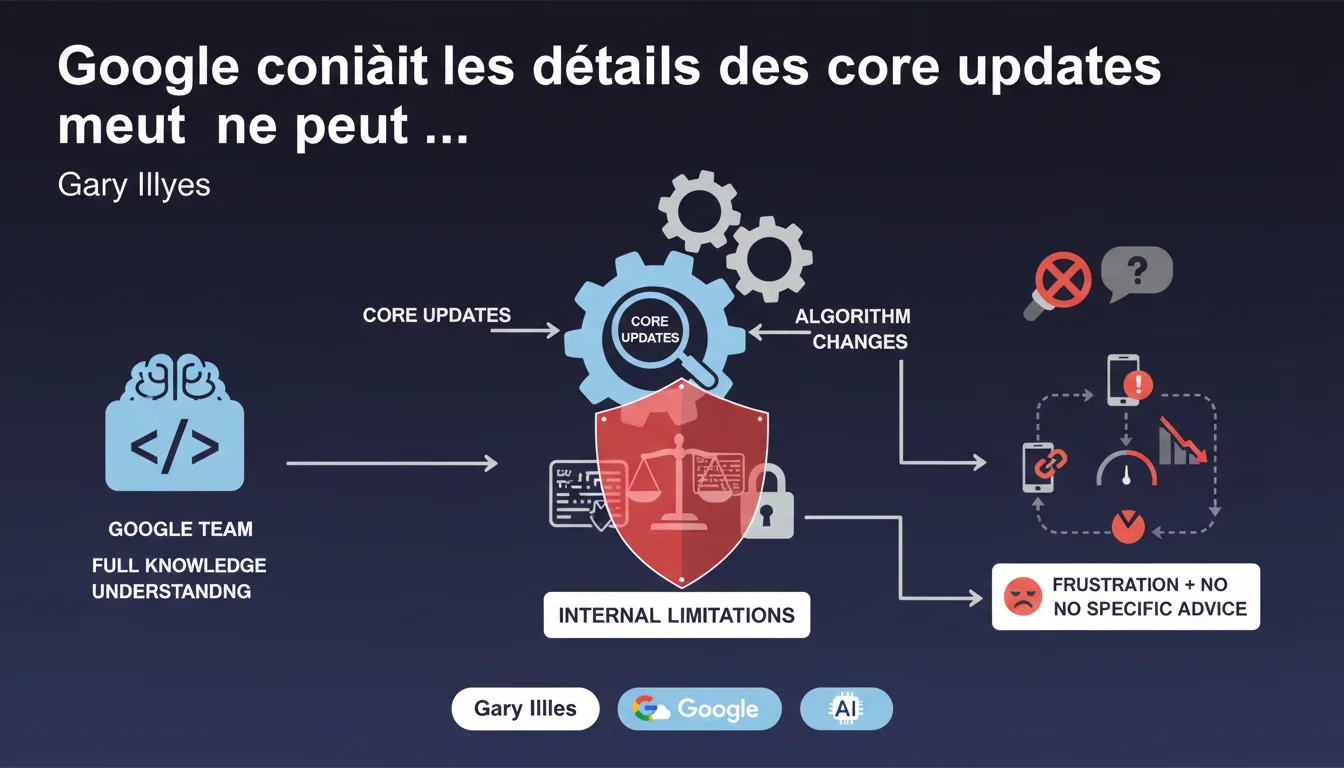

Google's Search team knows exactly what changes during core updates but cannot disclose it, whether for legal or strategic reasons. The only official line remains the recommendation to follow the guidelines, a response that has frustrated the SEO industry for years.

What you need to understand

Why is Gary Illyes' statement important?

Gary Illyes acknowledges here what many have suspected: Google has accurate information about core updates but does not share it. This is not a lack of knowledge. It is an imposed limitation, probably for legal or competitive reasons, or to prevent manipulation of the algorithm.

This partial transparency creates a frustrating asymmetry of information. SEOs have to work in the dark with correlations and assumptions, while Google holds the complete playbook.

What does 'cannot communicate' mean in this context?

The term 'cannot' suggests an external constraint rather than an arbitrary refusal. Several plausible hypotheses exist: antitrust obligations (not favoring certain players), protecting intellectual property, or avoiding the emergence of manipulation strategies before anti-spam systems are ready.

As a result, Search teams are caught between their technical expertise and the impossibility of sharing it. Hence the generic messaging repeated with each update.

Are the guidelines really the only valid compass?

In theory, yes. The Quality Rater Guidelines and E-E-A-T principles remain the most comprehensive official documentation. However, in practice, these documents are too general to diagnose a traffic drop post-update.

Field observations suggest that certain technical factors (loading times, site structure, crawl efficiency) play a decisive role, even though Google prefers to talk about 'quality content'.

- Google knows the exact details of core updates but does not communicate them publicly

- This limitation is not a choice of the Search team but an imposed constraint

- The guidelines remain the only official framework, despite their generic nature

- The SEO industry must continue to work with assumptions and field correlations

SEO Expert opinion

Is this silence policy sustainable in the long term?

Honestly? Probably yes, but at the cost of growing frustration in the industry. Google has withstood decades of demands for more transparency without conceding. The engine's dominant position allows it to maintain this asymmetry.

The problem is that this opacity fuels conspiracy theories and false certainties. 'Experts' sell magic formulas based on dubious correlations, while real signals remain buried in statistical noise.

Is there actually coherence between the guidelines and real-world results?

Not always. The guidelines talk about useful content, expertise, and user experience, yet some technically mediocre sites with average content outrank expertly written resources that are poorly optimized technically. [To be verified]: the actual weighting between quality signals and technical signals remains unclear.

Post-update observations often show patterns (increased importance of internal links, greater weight given to 'fresh' content, etc.) that Google will never officially confirm. We work through triangulation.

Should we accept this opacity or continue to demand more details?

Both. Demanding transparency maintains public pressure that can at least influence future communications. But pragmatically, we must accept that we will never have the complete recipe.

The real SEO skill today is knowing how to interpret weak signals, test methodically, and adjust quickly, rather than waiting for Google to publish a manual.

Practical impact and recommendations

What practical steps can we take in light of this lack of precise information?

First, stop looking for a magic recipe. If Google cannot communicate the details, no 'SEO guru' knows them either. Beware of absolute certainties sold as revealed truths.

Next, adopt an approach based on documented fundamentals: strong technical architecture, content aligned with search intent, seamless user experience, clear E-E-A-T signals. These pillars have held up from one update to the next.

How can you adapt your SEO strategy in this context of opacity?

Prioritize measurement and experimentation. Implement A/B tests on page segments, track correlations between your actions and performance, document your observations after each core update.
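One way to make segment tests less sensitive to site-wide swings is to compare a modified segment against an untouched control segment over the same periods. The sketch below is a minimal illustration of that idea (a difference-in-differences estimate), not a method described by Google; the function names and the daily click figures are hypothetical.

```python
from statistics import mean


def relative_change(before, after):
    """Relative change in mean daily clicks between two periods."""
    base = mean(before)
    if base == 0:
        raise ValueError("baseline mean is zero; relative change is undefined")
    return (mean(after) - base) / base


def segment_lift(test_before, test_after, control_before, control_after):
    """Change in the test segment minus change in the control segment.

    Subtracting the control's change removes movements that hit the
    whole site (seasonality, a core update), leaving an estimate of
    the effect of the modification itself.
    """
    return (relative_change(test_before, test_after)
            - relative_change(control_before, control_after))


# Hypothetical daily clicks for two page segments (assumed data).
test_before = [100, 110, 90, 100]
test_after = [120, 130, 110, 120]
control_before = [200, 210, 190, 200]
control_after = [210, 220, 200, 210]

lift = segment_lift(test_before, test_after, control_before, control_after)
print(f"Estimated lift attributable to the change: {lift:+.1%}")
```

The estimate is only as good as the control: the two segments should be comparable in template, intent, and traffic profile, and the periods should cover the same days of the week.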

Diversify your information sources: case studies, large-scale volatility and visibility tracking (such as Semrush Sensor or Sistrix), feedback from the SEO community. The truth often emerges from the convergence of multiple independent signals.

What mistakes should you avoid when facing core update uncertainty?

Don't panic at every fluctuation. Core updates often take several weeks to stabilize. Waiting 2-3 weeks before reacting prevents hasty decisions based on noise.
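The waiting rule can be written down as a simple check: do nothing until enough post-update days have accumulated, then ask whether the post-update average lies outside the baseline's normal variability. The following is a rough sketch under stated assumptions; the 14-day minimum, the z-score threshold of 2, and the function name are illustrative choices, and the test treats days as independent, so it ignores weekly seasonality.

```python
from statistics import mean, stdev


def assess_drop(baseline, post_update, min_days=14, z_threshold=2.0):
    """Classify a post-update traffic change as 'wait', 'within noise' or 'investigate'.

    baseline    -- daily clicks before the update (at least two values)
    post_update -- daily clicks since the update started rolling out
    """
    if len(post_update) < min_days:
        return "wait"  # too early: the update may still be stabilizing

    mu, sigma = mean(baseline), stdev(baseline)
    post_mean = mean(post_update)
    if sigma == 0:
        # A perfectly flat baseline: any deviation is outside its variability.
        return "within noise" if post_mean == mu else "investigate"

    # z-score of the post-update mean against the baseline's day-to-day spread
    z = (post_mean - mu) / (sigma / len(post_update) ** 0.5)
    return "investigate" if abs(z) >= z_threshold else "within noise"


# Hypothetical data: 20 baseline days, then 14 days at a clearly lower level.
baseline = [100, 102, 98, 101, 99] * 4
print(assess_drop(baseline, [80] * 5))   # fewer than 14 days of data
print(assess_drop(baseline, [80] * 14))  # sustained drop
```

A result of "investigate" only says the change is unlikely to be ordinary fluctuation; it says nothing about the cause.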

Avoid drastic over-optimizations. Massively changing a site after a drop may worsen the situation if you base decisions on incorrect assumptions. Proceed with measured and documented iterations.

- Regularly audit your compliance with Quality Rater Guidelines and E-E-A-T principles

- Implement granular tracking of your SEO KPIs to quickly detect update impacts

- Document your assumptions and test them methodically on samples of pages

- Invest in a robust technical architecture (crawling, indexing, Core Web Vitals)

- Build demonstrable sector expertise rather than multiplying generic content

- Stay connected to observations from the SEO community to cross-reference signals
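Granular tracking, as recommended in the checklist above, can start with something as simple as grouping clicks by site section on either side of an update date. The sketch below assumes rows of `(url, day, clicks)` exported from whatever analytics source you use; the row layout, the function names, and the choice of the first path component as the segment are all assumptions made for illustration.

```python
from collections import defaultdict
from datetime import date
from urllib.parse import urlparse


def segment_of(url):
    """Use the first path component as the segment, e.g. '/blog/post-1' -> 'blog'."""
    path = urlparse(url).path.strip("/")
    return path.split("/")[0] if path else "(root)"


def clicks_by_segment(rows, update_date):
    """Sum clicks per segment before and after update_date.

    rows -- iterable of (url, day, clicks) tuples, with day as datetime.date
    """
    totals = defaultdict(lambda: {"before": 0, "after": 0})
    for url, day, clicks in rows:
        period = "before" if day < update_date else "after"
        totals[segment_of(url)][period] += clicks
    return dict(totals)


# Hypothetical export (assumed data and URLs).
rows = [
    ("https://example.com/blog/post-1", date(2022, 5, 20), 40),
    ("https://example.com/blog/post-1", date(2022, 5, 30), 25),
    ("https://example.com/shop/item-9", date(2022, 5, 20), 10),
    ("https://example.com/shop/item-9", date(2022, 5, 30), 12),
    ("https://example.com/", date(2022, 5, 30), 7),
]

for segment, totals in clicks_by_segment(rows, date(2022, 5, 25)).items():
    print(segment, totals)
```

For the totals to be comparable, the "before" and "after" windows should cover the same number of days and the same days of the week.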

❓ Frequently Asked Questions

Why can't Google reveal the details of core updates?

Are the Quality Rater Guidelines really enough to optimize a site?

How should you react to a traffic drop after a core update without knowing what changed?

Can you trust the post-update correlation analyses published by SEO tools?

Will Google's opacity ever change?

Other SEO insights extracted from this same Google Search Central video · published on 11/01/2022
