Official statement


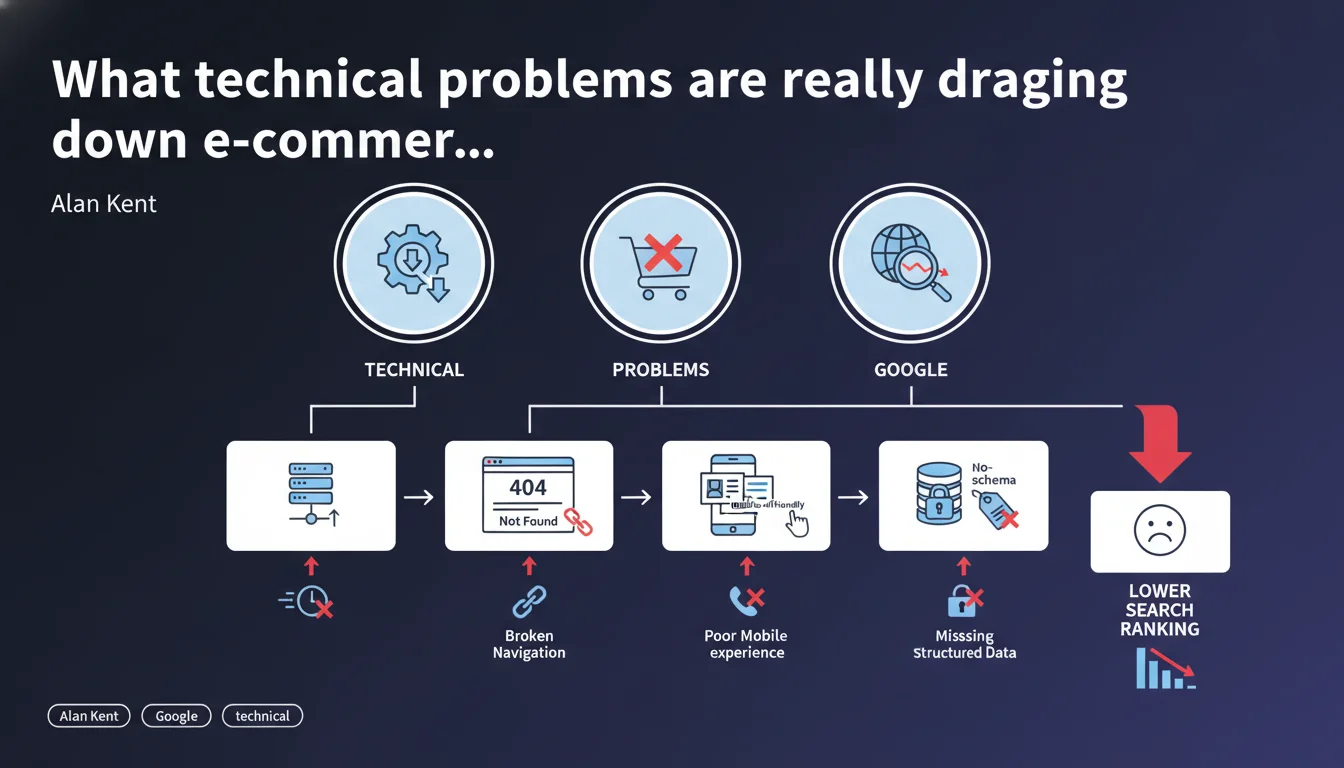

Google has identified several recurring technical issues on e-commerce sites that are hampering their performance in search results. These issues are widespread enough to justify a dedicated series of analyses. The challenge: identify and fix these bottlenecks before they cause lasting damage to your visibility.

What you need to understand

Why is Google specifically focusing on e-commerce sites?

E-commerce sites represent a massive segment of commercial search queries. Their complex architecture — product catalogs, filters, facets, inventory management — generates specific technical problems that Google observes repeatedly.

This announcement from Alan Kent signals that these problems are not anecdotal. They are systematic enough to warrant targeted communication. In other words: if you manage an e-commerce site, there's a good chance you're affected by at least one of them.

What types of technical problems is Google referring to?

The statement remains intentionally vague. Kent hasn't yet listed the specific problems; that's what the upcoming series is for. But the context hints at the usual suspects: large-scale duplicate content from faceted navigation, crawl budget wasted on low-value pages, broken pagination, and poorly implemented canonical tags.

E-commerce sites often generate thousands of URL variations for the same content. Without strict technical governance, Googlebot wastes enormous amounts of time crawling valueless pages, at the expense of strategic pages.
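To get a feel for how quickly URL variations multiply, here is a minimal sketch of the arithmetic. The facet names and value counts are hypothetical, and the model assumes each facet is either unset or set to exactly one value, which understates sites that allow multi-select filters or sort orders.

```python
from math import prod

# Hypothetical facets for one category page: facet name -> number of selectable values.
facets = {"color": 12, "size": 8, "brand": 25, "price_range": 6}

def count_filter_urls(facets: dict[str, int]) -> int:
    """Count filtered URL variations for a single category page.

    Assumption: each facet is either unset or set to exactly one value,
    so a facet with n values contributes (n + 1) choices.
    """
    # Subtract 1 to exclude the unfiltered category page itself.
    return prod(n + 1 for n in facets.values()) - 1

total = count_filter_urls(facets)
print(f"One category page can yield {total} filtered URL variations")
```

With only four facets, a single category already produces tens of thousands of crawlable variations under these assumptions.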

Does this announcement change anything for SEO practitioners?

Not immediately. This is a preamble, not a technical revelation. But it validates what many observe in the field: Google struggles to efficiently index poorly structured catalogs.

The real interest lies in the promise of a detailed series. If Google takes the trouble to document these issues, it's because current solutions, or rather their absence, cause problems at scale. Expect more precise recommendations in upcoming publications.

- E-commerce sites accumulate recurring technical problems that slow down their indexing and hurt their ranking.

- Google considers these problems systematic enough to justify a dedicated series of analyses.

- Likely suspects: duplicate content, poorly managed crawl budget, broken pagination, misconfigured canonical tags.

- This statement announces upcoming content — stay tuned for Alan Kent's next publications.

SEO Expert opinion

Does this statement bring anything new to the table?

Honestly? No. Any professional who has audited e-commerce sites knows these problems inside and out. Duplicate content on facets, infinite parameterized URLs, canonical tags running wild — this is the daily reality of any proper technical audit.

But the announcement has strategic value: it confirms that Google still hasn't solved these problems algorithmically. Otherwise, why communicate? If the algorithm handled facets and variants properly, Kent wouldn't need to write a series. This means the burden remains squarely on developers' and SEO professionals' shoulders.

Should we expect surprises in the upcoming series?

Hard to say. If Google simply repeats known best practices — use rel="canonical", block unnecessary facets, manage your crawl budget — it won't bring anything new. However, if Kent shares concrete data on the impact of these problems (crawl loss, measurable indexation drops), it becomes useful.

The risk is that this series remains too generic. [To be verified]: will Google provide concrete figures or remain as vague as usual? Because an article saying "avoid duplicate content" without quantifying actual impact is useless in 2025.

When doesn't this communication apply to you?

If you manage an e-commerce site with fewer than 500 products and no dynamic facets, you probably aren't affected. The same goes if you already have a solid architecture with properly configured canonical tags, a well-maintained robots.txt, and a carefully managed crawl budget.

But let's be honest: that's rare. The majority of e-commerce sites have at least one of these problems — often several. Don't assume you're exempt without conducting a serious technical audit of your structure.

Practical impact and recommendations

What concrete steps should you take while waiting for the series to continue?

First step: audit your facet and filter architecture. How many URLs does each filter generate? Do these pages provide unique value or are they disguised duplicate content? Verify that your canonical tags point to the correct source page.
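As a starting point for that audit, the following sketch counts how many URLs in a crawl export carry each query parameter. The URLs and parameter names are invented for illustration; in practice the list would come from your crawler's export.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlsplit

def count_urls_per_parameter(urls):
    """Return a Counter mapping each query parameter name to the number of URLs using it."""
    counts = Counter()
    for url in urls:
        query = urlsplit(url).query
        # A set ensures a parameter repeated within one URL is counted once for that URL.
        names = {name for name, _ in parse_qsl(query, keep_blank_values=True)}
        counts.update(names)
    return counts

# Hypothetical sample of crawled URLs.
sample_urls = [
    "https://shop.example/shoes",
    "https://shop.example/shoes?color=red",
    "https://shop.example/shoes?color=red&size=42",
    "https://shop.example/shoes?sort=price_asc",
]

counts = count_urls_per_parameter(sample_urls)
for name, n in counts.most_common():
    print(f"{name}: {n} URLs")
```

Parameters that appear on a very large share of URLs are the first candidates to review for canonicalization or noindex.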

Second step: analyze your crawl budget in Google Search Console. Look at the Crawl Stats report: how many pages does Googlebot visit per day? How many are actually strategic? If you see thousands of crawled pages but few indexed, you have a structural problem.
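Server logs can complement what Search Console shows. The sketch below estimates what share of Googlebot requests hit parameterized URLs. It assumes access logs in the common "combined" format and identifies Googlebot by user-agent string only, which can be spoofed; the sample log lines are fabricated for illustration.

```python
import re

# Matches the request path and the final quoted field (user agent) of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" \d{3} .*"(?P<agent>[^"]*)"$'
)

def googlebot_parameter_share(lines):
    """Return the fraction of Googlebot requests whose path contains a query string."""
    total = with_params = 0
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if not match or "Googlebot" not in match.group("agent"):
            continue
        total += 1
        if "?" in match.group("path"):
            with_params += 1
    return with_params / total if total else 0.0

# Fabricated sample lines (assumption: combined log format).
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [01/Jan/2025:10:00:00 +0000] "GET /shoes?color=red HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [01/Jan/2025:10:00:05 +0000] "GET /shoes HTTP/1.1" 200 6021 "-" "{GOOGLEBOT}"',
    '203.0.113.9 - - [01/Jan/2025:10:00:07 +0000] "GET /shoes?size=42 HTTP/1.1" 200 5000 "-" "Mozilla/5.0"',
]

share = googlebot_parameter_share(sample_log)
print(f"{share:.0%} of Googlebot requests hit parameterized URLs")
```

A high share suggests that crawl activity is concentrated on filter and sort variations rather than on category and product pages.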

What errors must you absolutely avoid?

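Don't block all your facets in robots.txt without thinking it through. Outright blocking can break your internal linking and prevent Google from discovering certain products. It also stops Googlebot from fetching the blocked pages, so any canonical tag or noindex directive on them is never read. Prefer a combination of canonical tags and strategic noindex. The URL Parameters tool in Search Console, once a common option here, was retired by Google in 2022, so parameter handling now has to be solved on the site itself.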
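Before shipping a robots.txt change, it can help to test which URLs a rule would actually block. This is a minimal sketch using Python's standard `urllib.robotparser`; the rules and URLs are hypothetical. Note that this parser relies on simple path-prefix matching and, to my knowledge, does not implement Google's wildcard extensions, so treat it as an approximation rather than a replica of Googlebot's behavior.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks a path-based filter section.
ROBOTS_TXT = """\
User-agent: *
Disallow: /filter/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def is_crawlable(url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the parsed rules allow user_agent to fetch url."""
    return parser.can_fetch(user_agent, url)

for url in (
    "https://shop.example/shoes/",
    "https://shop.example/filter/color-red/",
):
    status = "crawlable" if is_crawlable(url) else "blocked"
    print(f"{url}: {status}")
```

Any page reported as blocked here cannot have its canonical tag or noindex directive read by a crawler that obeys the file.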

Another trap: believing that a plugin or extension will fix everything. Automated solutions often create more problems than they solve — misconfigured canonical tags, cascading noindex, ignored parameters. Nothing replaces manual auditing and rigorous technical governance.

How do you verify your site doesn't suffer from these problems?

Run a crawl with Screaming Frog or Oncrawl. Identify clusters of similar pages. If you have hundreds of variations for the same product content, you have a problem. Then verify whether Google actually indexes these pages — often they're crawled but not indexed, a sign that the algorithm detects duplication.
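If your crawler exports page text, a rough first pass at finding clusters is to group URLs by a fingerprint of their content. The sketch below only catches exact duplicates after whitespace and case normalization; near-duplicate detection would need a different technique. The page data is invented for illustration.

```python
import hashlib
from collections import defaultdict

def cluster_by_content(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose normalized text is identical; return clusters of 2+ URLs."""
    clusters = defaultdict(list)
    for url, text in pages.items():
        # Normalize case and collapse whitespace so trivial differences do not matter.
        normalized = " ".join(text.lower().split())
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        clusters[digest].append(url)
    return [sorted(urls) for urls in clusters.values() if len(urls) > 1]

# Hypothetical crawl export: URL -> extracted main content.
pages = {
    "https://shop.example/shoes": "Running shoes for every terrain.",
    "https://shop.example/shoes?sort=price_asc": "Running  shoes for every terrain.",
    "https://shop.example/shoes?view=grid": "running shoes for every terrain.",
    "https://shop.example/boots": "Leather boots for winter.",
}

duplicates = cluster_by_content(pages)
for group in duplicates:
    print(f"{len(group)} URLs share the same content: {group}")
```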

Compare the number of pages in your XML sitemap with the number of pages actually indexed in Search Console. A massive gap (50% or more) points to a structural problem. It's a signal that Google struggles to process your content effectively.
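The comparison can be scripted as follows. This sketch counts `<loc>` entries in a standard sitemap and computes the gap against an indexed-page count that you read manually from Search Console; the sitemap content and the indexed figure are placeholders.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def count_sitemap_urls(xml_text: str) -> int:
    """Count <url><loc> entries in a single (non-index) sitemap document."""
    root = ET.fromstring(xml_text)
    return len(root.findall("sm:url/sm:loc", SITEMAP_NS))

def indexing_gap(sitemap_count: int, indexed_count: int) -> float:
    """Return the share of sitemap URLs that are not indexed (0.0 to 1.0)."""
    if sitemap_count == 0:
        return 0.0
    return (sitemap_count - indexed_count) / sitemap_count

# Placeholder sitemap; a real one would be downloaded from your site.
sample_sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://shop.example/</loc></url>
  <url><loc>https://shop.example/shoes</loc></url>
  <url><loc>https://shop.example/boots</loc></url>
  <url><loc>https://shop.example/sandals</loc></url>
</urlset>"""

submitted = count_sitemap_urls(sample_sitemap)
indexed = 1  # Placeholder: value read manually from Search Console.
gap = indexing_gap(submitted, indexed)
print(f"{submitted} submitted, {indexed} indexed, gap = {gap:.0%}")
```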

- Audit your facet and filter architecture to identify duplicates

- Analyze the Crawl Stats report in Google Search Console

- Verify consistency between crawled and indexed pages

- Examine your canonical tags: do they all point to the correct source page?

- Crawl your site with a dedicated tool to spot similar content clusters

- Compare the number of pages in your sitemap with the number actually indexed

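- Test faceted navigation in incognito mode to approximate a cookie-free, logged-out visit, and use the URL Inspection tool in Search Console to see how Googlebot actually renders the page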
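The canonical check in this list can be partly automated. Below is a minimal sketch that extracts `rel="canonical"` links from a page's HTML with Python's standard `html.parser` and compares them to the URL you expect. The HTML and URLs are hypothetical, and the check covers only tags in the HTML source, not canonicals sent via HTTP headers.

```python
from html.parser import HTMLParser

class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> tag in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values and attributes.get("href"):
            self.canonicals.append(attributes["href"])

def check_canonical(html: str, expected: str) -> str:
    """Return 'ok', 'missing', 'multiple', or 'mismatch' for a page's canonical tag."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return "missing"
    if len(finder.canonicals) > 1:
        return "multiple"
    return "ok" if finder.canonicals[0] == expected else "mismatch"

# Hypothetical filtered page that should point back to the main category.
page_html = """
<html><head>
  <title>Red shoes</title>
  <link rel="canonical" href="https://shop.example/shoes" />
</head><body></body></html>
"""

result = check_canonical(page_html, "https://shop.example/shoes")
print(f"Canonical check: {result}")
```

Pages flagged as "multiple" or "mismatch" are worth reviewing by hand, since conflicting signals may lead search engines to ignore the tag.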

❓ Frequently Asked Questions

Does this announcement mean Google will penalize poorly structured e-commerce sites?

Should I wait for the rest of the series before taking action?

Are small e-commerce sites affected by these problems?

Should all facets be blocked in robots.txt?

How can I tell whether my site suffers from these technical problems?


Other SEO insights extracted from this same Google Search Central video · published on 13/04/2022
