Official statement

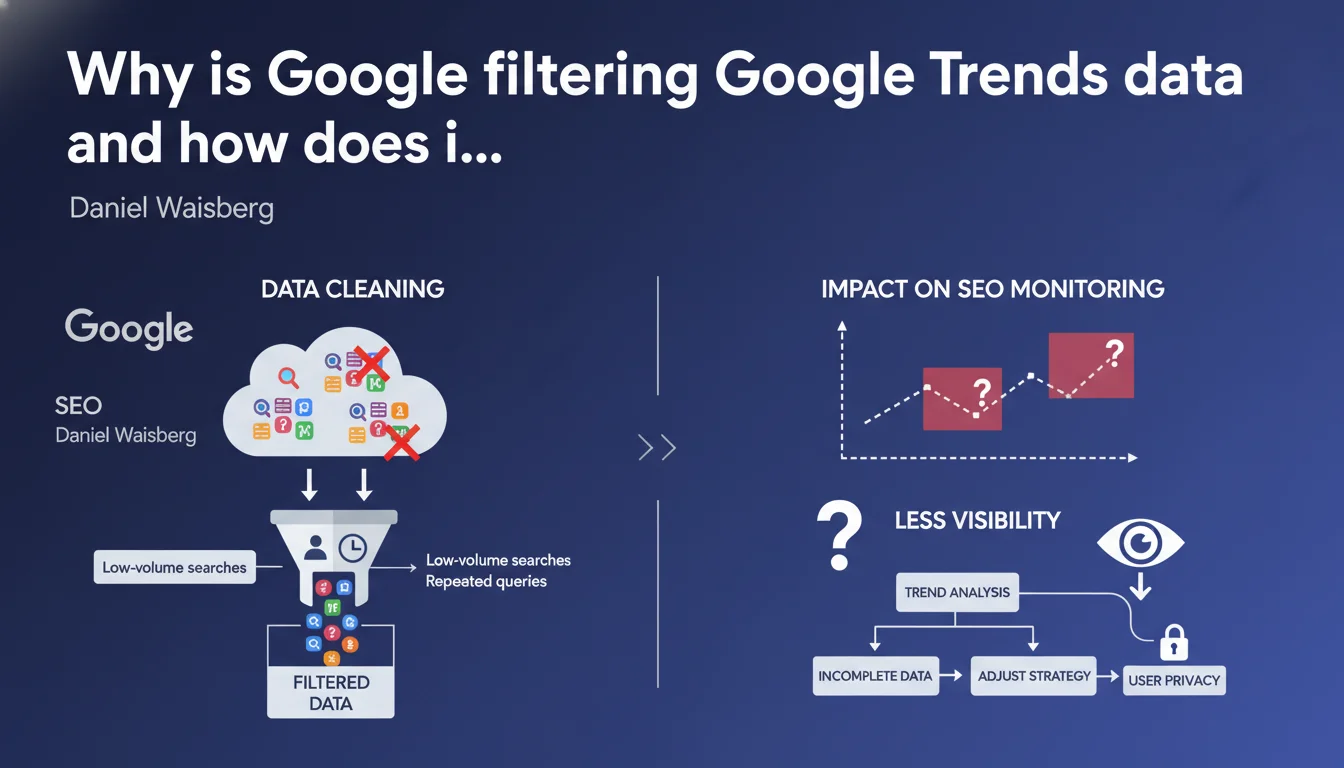

Google removes from Trends any searches performed by very few users, as well as queries repeated by the same person over a short period. The stated objective is to protect privacy and eliminate statistical noise. For SEO professionals, this means certain weak signals or micro-trends will never be visible in the tool.

What you need to understand

What does this data cleaning in Trends concretely mean?

Google applies two main filters to the data displayed in Google Trends. First, any term searched by a very low volume of users disappears from the interface — the exact threshold is not disclosed. Second, queries repeated by the same person over a short period are excluded to prevent individual behavior from skewing global trends.
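To make the two filters concrete, here is a minimal sketch in Python. It is an illustration only, not Google's implementation: the threshold `MIN_DISTINCT_USERS`, the window `DEDUP_WINDOW_SECONDS`, and the event format are all assumptions, since Google discloses none of these details.

```python
from collections import defaultdict

# Hypothetical values: Google does not disclose its real thresholds.
MIN_DISTINCT_USERS = 3
DEDUP_WINDOW_SECONDS = 3600


def filter_search_log(events):
    """Apply both illustrative filters to a search log.

    events: iterable of (user_id, term, unix_timestamp) tuples.
    Returns a dict mapping each surviving term to its retained search count.
    """
    last_counted = {}          # (user, term) -> timestamp of the last counted search
    counts = defaultdict(int)  # term -> searches kept after deduplication
    users = defaultdict(set)   # term -> distinct users who searched the term

    for user, term, ts in sorted(events, key=lambda e: e[2]):
        key = (user, term)
        previous = last_counted.get(key)
        # Second filter: skip a repeat by the same user inside the window.
        if previous is not None and ts - previous < DEDUP_WINDOW_SECONDS:
            continue
        last_counted[key] = ts
        counts[term] += 1
        users[term].add(user)

    # First filter: drop terms searched by too few distinct users.
    return {
        term: count
        for term, count in counts.items()
        if len(users[term]) >= MIN_DISTINCT_USERS
    }
```

With these assumed settings, one person typing the same query 20 times in 20 minutes counts once, and the term then disappears entirely because only one distinct user searched it.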

Waisberg justifies this approach with two arguments: reducing statistical noise and guaranteeing user anonymity. In other words, Google doesn't want it to be possible to infer the search habits of identifiable individuals from Trends.

Why is this statement being made now?

Trends is a public tool that journalists, analysts, and SEO professionals have used for years. Formalizing these filtering rules likely responds to growing regulatory pressure (GDPR, DSA) and to a demand for transparency in personal data processing.

Nevertheless, Google remains vague about exact thresholds. No figures, no precise definition of "very few people" or "short period." It's therefore difficult to know where the boundary lies between exploitable signal and filtered-out noise.

What are the consequences for SEO professionals using Trends?

If you leverage Trends to detect emerging keyword opportunities, know that micro-trends or ultra-specific queries will never appear. You'll only see what crosses a certain popularity threshold.

Another limitation: Trends data doesn't reflect raw search volume, but rather a filtered and normalized version. Cross-referencing with other sources — Search Console, paid tools, internal data — remains essential.

- Google filters terms searched by too few users (unspecified threshold)

- Queries repeated by the same person over a short period are excluded

- Objective: protect privacy and reduce statistical noise

- Trends shows only a partial and normalized version of actual search volumes

- No precise figures communicated on the thresholds applied

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Yes, broadly speaking. Any practitioner who has compared Trends and Search Console knows that the two don't tell the same story. Queries visible in GSC — sometimes with just a few clicks per month — generate no curve in Trends. Waisberg's statement therefore confirms an already observable practice.

Filtering repeated searches is harder to verify empirically, but logical. If someone types "SEO agency Lyon" 20 times while testing their rankings, it shouldn't skew the global trend for that term. [To verify]: the question remains whether this filtering applies to Search Console data as well or only to Trends.

What limitations does this statement fail to mention?

Waisberg talks about privacy and noise, but completely sidesteps the question of sampling. Trends doesn't process 100% of queries — Google samples the data. No information on sampling rate, methodology, or biases introduced.

Another blind spot: regional variations. Trends displays data by country or region, but we don't know how the "very few people" filter applies locally. A term rarely searched nationally might be popular in a given city — does this nuance disappear from the radar?

Should you continue using Trends for SEO monitoring?

Yes, but keep your eyes open. Trends remains a useful macro-trend indicator for capturing seasonal shifts, media spikes, and brand awareness comparisons. It is not suited to detecting niche opportunities or emerging long-tail queries.

If your SEO strategy relies on exploiting micro-signals (highly specific queries, small niche markets, local behaviors), Trends will show you only a fraction of reality. Supplement it with Keyword Planner, SEMrush, Ahrefs, and especially your own GSC data.

Practical impact and recommendations

How should you adapt your SEO monitoring given these limitations?

First rule: never rely on Trends as your sole source. Systematically cross-reference with Search Console for your own data, and with third-party tools for competitor volumes. Trends provides direction, not exhaustive mapping.

Second habit: monitor relative trends rather than absolute ones. Trends excels at comparing two terms against each other or tracking a topic's evolution over time. Draw no conclusions, however, about the real volume of an isolated query.
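A short sketch shows why a normalized index cannot reveal absolute volume. It assumes the commonly described Trends scaling, where each point is the term's share of all searches for the period, rescaled so the peak equals 100. The function name and sample figures are hypothetical.

```python
def trends_style_index(term_counts, total_counts):
    """Scale a term's share of total searches to a 0-100 index.

    term_counts:  searches for the term in each period.
    total_counts: all searches in each period (same length).
    """
    if len(term_counts) != len(total_counts):
        raise ValueError("both series must cover the same periods")

    # Share of all searches in each period, then rescale so the peak is 100.
    shares = [term / total for term, total in zip(term_counts, total_counts)]
    peak = max(shares, default=0)
    if peak == 0:
        return [0 for _ in shares]
    return [round(100 * share / peak) for share in shares]


# Hypothetical figures: a niche query and a query 1,000 times larger.
totals = [1_000_000, 1_000_000, 1_000_000]
niche = trends_style_index([10, 20, 40], totals)
large = trends_style_index([10_000, 20_000, 40_000], totals)
print(niche, large)  # both series are [25, 50, 100]
```

Both queries produce the same curve even though their volumes differ by a factor of 1,000, which is why the index supports comparisons of shape and timing but not of size.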

What mistakes should you avoid when interpreting Trends data?

Classic mistake: concluding that a keyword "doesn't exist" because it doesn't appear in Trends. If your market is niche or your geographic area narrow, Google's filtering may mask real opportunities.
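One way to guard against this mistake is to list the queries that earn impressions in Search Console but return no curve in Trends. The sketch below assumes you have already loaded both data sets yourself; the row format (`query`, `impressions`) and the `trends_series` mapping are hypothetical structures, not an official export schema.

```python
def queries_hidden_from_trends(gsc_rows, trends_series):
    """Return GSC rows with impressions but no usable Trends data.

    gsc_rows:      list of dicts with "query" and "impressions" keys.
    trends_series: dict mapping a query to its list of Trends index values;
                   a missing key, an empty list, or all zeros means "no data".
    """
    hidden = []
    for row in gsc_rows:
        series = trends_series.get(row["query"], [])
        has_trends_data = any(value > 0 for value in series)
        if row["impressions"] > 0 and not has_trends_data:
            hidden.append(row)
    # Largest real-world demand first, so the best opportunities surface.
    return sorted(hidden, key=lambda r: r["impressions"], reverse=True)


# Hypothetical sample data for illustration only.
gsc_rows = [
    {"query": "seo agency lyon", "impressions": 140},
    {"query": "seo audit", "impressions": 9000},
    {"query": "b2b seo audit template pdf", "impressions": 35},
]
trends_series = {"seo audit": [40, 55, 100], "seo agency lyon": [0, 0, 0]}

for row in queries_hidden_from_trends(gsc_rows, trends_series):
    print(row["query"], row["impressions"])
```

Every query this returns is demonstrably searched, because it produced impressions on your own site, yet it is invisible in Trends.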

Another pitfall: over-interpreting short-term variations. Because Trends smooths data and filters noise, a micro-variation may be a statistical artifact rather than an exploitable signal. Always validate with other sources before adjusting your editorial calendar.
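As a first sanity check before reacting to a movement, you can compare the latest value with the recent spread of the series. This is a rough heuristic sketch, not a statistical guarantee: the `window` of 8 periods and the multiplier `k` of 2 standard deviations are arbitrary assumptions to tune for your own data.

```python
from statistics import mean, stdev


def is_notable_change(series, window=8, k=2.0):
    """Flag the latest point only if it leaves the recent range of variation.

    series: Trends index values in chronological order.
    window: number of earlier periods used as the baseline (assumed value).
    k:      number of standard deviations required (assumed value).
    """
    if window < 2 or len(series) < window + 1:
        return False  # not enough history to judge
    baseline = series[-(window + 1):-1]
    latest = series[-1]
    spread = stdev(baseline)
    if spread == 0:
        # Perfectly flat baseline: any move at all is a change.
        return latest != baseline[-1]
    return abs(latest - mean(baseline)) > k * spread
```

A value that passes this check still deserves confirmation in Search Console or another source before it drives a decision.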

What should you concretely implement to compensate for these biases?

Build a hybrid monitoring stack: Trends for macro-trends, GSC for your actual performance, paid tools for competitive analysis, social listening for emerging signals. No single tool provides the complete truth.

Document your sources and methods. When presenting a trend analysis to a client or internally, explicitly state the limitations of each tool used. Methodological transparency prevents misunderstandings and strengthens the credibility of your recommendations.

- Never use Trends as the sole source for an SEO decision

- Systematically cross-reference with Search Console and third-party tools

- Interpret Trends as a relative trend indicator, not absolute volume

- Validate any observed variation with other sources before taking action

- Document methodological limitations in your analyses

- Prioritize a hybrid monitoring stack to capture both macro and micro signals

❓ Frequently Asked Questions

What is the minimum search threshold for a term to appear in Google Trends?

Is Google Search Console data subject to the same filtering as Trends?

If a query doesn't appear in Trends, does that mean it is never searched?

Can this filtering be bypassed to access raw search data?

Does this filtering apply in the same way across all geographic regions?

🎥 From the same video 5

Other SEO insights extracted from this same Google Search Central video · published on 31/07/2024
