Official statement

Other statements from this video

- Why does Google filter Google Trends data, and what does that change for your SEO monitoring?

- Why will Google Trends never tell you how many times a keyword is searched?

- How can you use 20 years of Google Trends history in your SEO strategy?

- Does Google Trends really group all variants of a keyword?

- Should you really favor topics over keywords when analyzing search trends?

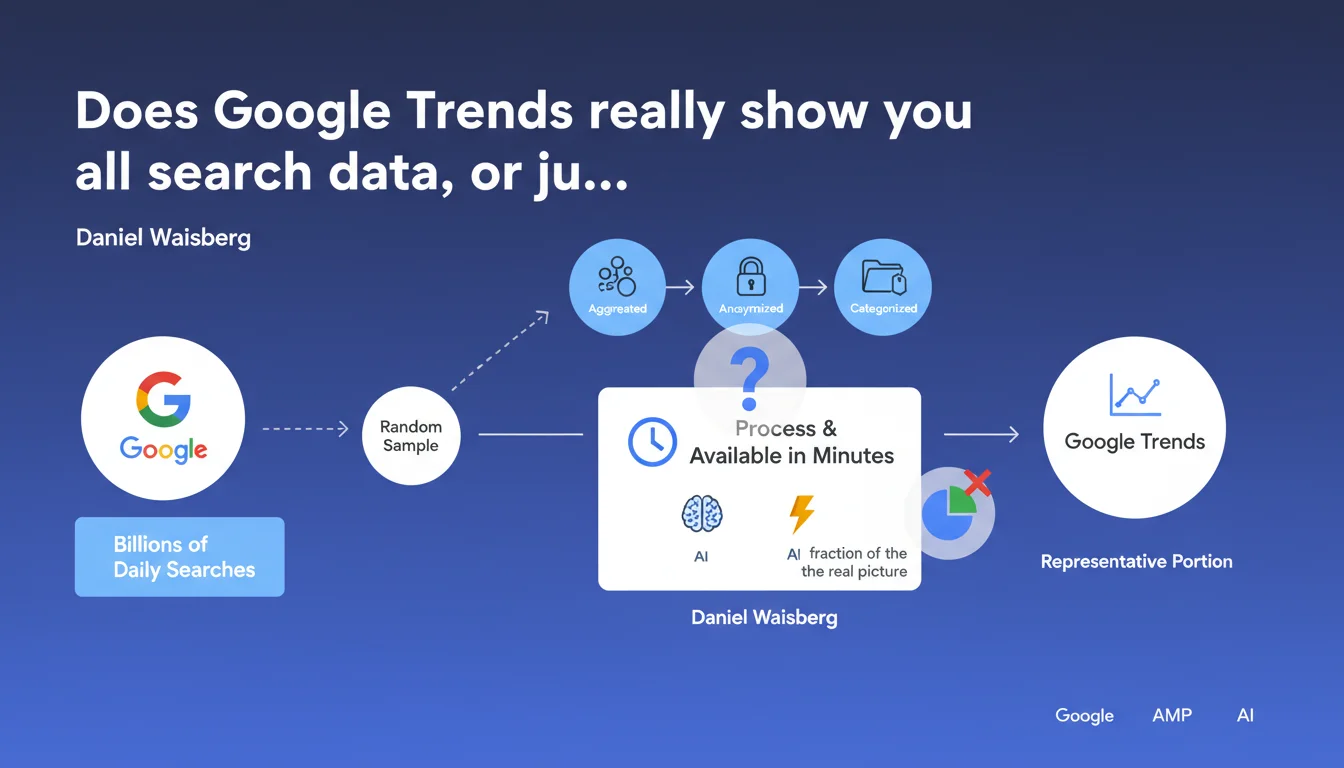

Google Trends doesn't analyze every query on its search engine; it works from a random sample that is aggregated and anonymized. This technical choice lets it process billions of daily searches and make data available within minutes, but it limits how precisely you can analyze search volumes.

What you need to understand

What does "random sample" actually mean for an SEO practitioner?

Google Trends doesn't give you access to all search data: processing all of it in real time would be technically impossible. Instead, the tool draws a representative portion of the billions of daily queries, then aggregates and anonymizes it.

Because of this sampling, the figures displayed are statistical estimates, not absolute values. The methodology is designed to keep the sample representative, but it introduces a margin of uncertainty, especially for low search volumes or highly localized queries.
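To see why low volumes are noisier, here is a minimal Python sketch that models sampling as if each query were kept independently with a fixed probability (a binomial model). Both that model and the 1% sample fraction are assumptions for illustration only, since Google publishes neither its sampling method nor its rate. The function name `relative_standard_error` is hypothetical.

```python
import math


def relative_standard_error(true_count: int, sample_fraction: float) -> float:
    """Relative standard error of a search count estimated from a random sample.

    Assumes each query is kept independently with probability sample_fraction
    (a binomial model). Google does not disclose its real sampling method.
    """
    if true_count <= 0 or not 0 < sample_fraction <= 1:
        raise ValueError("need true_count > 0 and 0 < sample_fraction <= 1")
    # Sampled count X ~ Binomial(n, f): E[X] = n*f and Var(X) = n*f*(1 - f),
    # so the coefficient of variation of X (and of X / f) is sqrt((1 - f) / (n*f)).
    return math.sqrt((1 - sample_fraction) / (true_count * sample_fraction))


# Hypothetical 1% sample: the real fraction is not published.
for volume in (200, 10_000, 1_000_000):
    error = relative_standard_error(volume, 0.01)
    print(f"{volume:>9,} searches -> +/- {error:.1%}")
```

Under these assumptions the relative error is roughly 70% for a term with 200 searches but only about 1% for a term with a million searches, which is consistent with small terms producing erratic curves.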

Why did Google make this technical choice?

Processing every search in near real time would require massive infrastructure and drastically increase the delay before data becomes available. Sampling reduces the computational load while keeping accuracy acceptable for most use cases.

Google prioritizes the speed and accessibility of general trends over exhaustiveness, which would be impossible to guarantee at this scale. For most strategic SEO analyses, this trade-off remains sensible.

What limitations does this impose on SEO analyses?

Google Trends data is relative and normalized, never absolute. You get popularity curves and comparisons between terms, but never the exact number of monthly searches for a given keyword.
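Google's Trends help documentation describes the normalization in two steps: each data point is divided by the total searches for its geography and time range, and the results are then scaled from 0 to 100. The sketch below reproduces that logic on invented counts (Google never exposes raw counts); the function `trends_index` and the rounding to whole numbers are illustrative assumptions.

```python
def trends_index(term_counts: dict[str, int], total_counts: dict[str, int]) -> dict[str, int]:
    """Turn raw counts into a 0-100 index, following Google's published description."""
    # Step 1: divide each period's term count by total searches in that period.
    shares = {period: term_counts[period] / total_counts[period] for period in term_counts}
    peak = max(shares.values())
    if peak == 0:
        return {period: 0 for period in shares}
    # Step 2: rescale so the highest share becomes 100 (whole-number rounding assumed).
    return {period: round(100 * share / peak) for period, share in shares.items()}


# Invented counts for one term and for all searches in the same region.
term_counts = {"Jan": 60, "Feb": 80, "Mar": 66}
total_counts = {"Jan": 10_000, "Feb": 10_000, "Mar": 12_000}
print(trends_index(term_counts, total_counts))  # {'Jan': 75, 'Feb': 100, 'Mar': 69}
```

March has more raw searches for the term than January (66 versus 60) yet a lower index, because total search activity grew faster than interest in the term.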

In very specific niches or for low-volume terms, sampling can mask real fluctuations or amplify random variations. You should therefore cross-reference this data with other tools (Keyword Planner, Search Console, third-party tools) to validate your hypotheses.

- Google Trends relies on a sample, not on all searches

- Data is aggregated, anonymized, and normalized to ensure privacy

- The tool prioritizes processing speed over absolute precision

- Low or ultra-localized volumes carry a higher margin of uncertainty

- You must systematically cross-reference Google Trends with other data sources

SEO Expert opinion

Is this statement consistent with what practitioners observe in the field?

Absolutely. Any SEO practitioner who uses Google Trends regularly notices that its data never lines up perfectly with Search Console or third-party tools. Trend curves are reliable, but absolute values are missing, which is exactly what you'd expect from a representative sample.

This transparency from Google confirms what we've known empirically: Trends is a macro-analysis tool, not a micro-optimization tool. Trying to extract exact per-keyword volumes from it is a frequent methodological error among beginners.

What nuances should be added to this statement?

Google doesn't specify the sample size or its exact random selection method. Are we talking about 1% of queries? 10%? Representativeness also depends on categorization and the geographic or temporal granularity requested.

In very local markets or minority languages, the sample may be too small to be statistically significant. [To verify]: Google doesn't indicate a minimum reliability threshold below which data becomes too volatile to be usable.

Another limitation: anonymization and aggregation can artificially smooth certain trends or mask short but significant peaks. Intra-day variations, for example, are difficult to analyze with precision.
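The smoothing effect is easy to reproduce with plain averaging. This Python sketch, using invented daily values and a hypothetical `weekly_means` helper, shows how a one-day spike shrinks once days are aggregated into weeks. It illustrates averaging in general, not Google's undisclosed aggregation pipeline.

```python
from statistics import mean


def weekly_means(daily: list[float]) -> list[float]:
    """Average consecutive 7-day blocks; any trailing partial week is dropped."""
    full_days = len(daily) - len(daily) % 7
    return [mean(daily[i:i + 7]) for i in range(0, full_days, 7)]


# Invented daily interest with a one-day spike on day 3.
daily = [10, 10, 80, 10, 10, 10, 10,
         10, 10, 10, 10, 10, 10, 10]
weekly = weekly_means(daily)
print(max(daily), weekly)
```

The daily spike of 80 becomes a weekly value of 20, only twice the baseline, so a short but significant peak is easy to miss at coarser granularity.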

In what cases does this limitation become truly problematic?

When you work on ultra-specialized niches with monthly search volumes below a few hundred queries, sampling can completely skew your conclusions. Curves become erratic and unreliable.

The same applies to fine-grained geographic analyses (city or regional level): the local sample may be too small to detect real trends. In these cases, Search Console and paid tools become essential.

Practical impact and recommendations

How to use Google Trends effectively despite this limitation?

Use Trends for what it does best: identifying emerging trends, comparing the relative popularity of several terms, and detecting seasonality. Don't try to extract absolute volumes from it; that's not its purpose.
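Seasonality can be read from a long Trends series by averaging the index for each calendar month across years. Below is a minimal Python sketch on invented data; the `YYYY-MM` period labels mimic a monthly export but are an assumption, and `seasonal_profile` is a hypothetical helper.

```python
from collections import defaultdict
from statistics import mean


def seasonal_profile(monthly_index: list[tuple[str, int]]) -> dict[int, float]:
    """Average a 0-100 index by calendar month across several years.

    monthly_index holds ("YYYY-MM", value) pairs (label format assumed).
    """
    by_month: dict[int, list[int]] = defaultdict(list)
    for period, value in monthly_index:
        by_month[int(period[5:7])].append(value)
    return {month: mean(values) for month, values in sorted(by_month.items())}


# Invented index values for two years, January to December.
series_2022 = [30, 28, 35, 40, 45, 50, 55, 52, 48, 60, 80, 100]
series_2023 = [32, 30, 33, 42, 47, 49, 57, 50, 46, 62, 85, 98]
data = [(f"{year}-{month:02d}", value)
        for year, values in ((2022, series_2022), (2023, series_2023))
        for month, value in enumerate(values, start=1)]

profile = seasonal_profile(data)
peak_month = max(profile, key=profile.get)
print(peak_month, profile[peak_month])
```

Averaging over several years keeps a single unusual year from being mistaken for a seasonal pattern; with this invented data, December stands out as the peak month.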

Systematically cross-reference it with Search Console (real data from your site), Keyword Planner (volume ranges), and third-party tools like Semrush or Ahrefs. This triangulation offsets sampling noise and gives you a more complete picture.
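One common way to combine sources is to use an absolute figure from another tool as an anchor for the relative Trends curve. The sketch below assumes that the average of the Trends index over a period corresponds to an average monthly volume taken from another tool (for example, the midpoint of a Keyword Planner range). That mapping, the helper name `calibrate_with_anchor`, and the numbers are all assumptions, and the result inherits the error of both sources.

```python
def calibrate_with_anchor(index: list[float], avg_monthly_volume: float) -> list[float]:
    """Turn a relative 0-100 index into rough monthly volume estimates.

    Assumes the mean of the index maps to avg_monthly_volume from another tool.
    """
    baseline = sum(index) / len(index)
    if baseline == 0:
        raise ValueError("index has no signal to calibrate")
    # Each point keeps its ratio to the baseline, expressed in search units.
    return [value * avg_monthly_volume / baseline for value in index]


# Invented Trends values and an invented average volume from another tool.
estimates = calibrate_with_anchor([50, 100, 75, 75], avg_monthly_volume=1_500)
print(estimates)  # [1000.0, 2000.0, 1500.0, 1500.0]
```

Treat the output as an order-of-magnitude guide for spotting seasonal swings, not as a replacement for real volume data.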

What errors should you avoid when interpreting the data?

Never confuse relative popularity with absolute volume. A score of 100 on Google Trends doesn't mean "100 searches," but rather "peak popularity" during the period and geographic area analyzed.

Avoid over-interpreting fluctuations on low-volume keywords. If the curve is too erratic, it's probably because the sample is insufficient — look for other indicators before making decisions.
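A simple pre-check can catch series that are too thin to interpret. On the Trends chart, a value of 0 means there was not enough data for the term, so a high share of zeros is a warning sign. The Python sketch below flags a series on that basis and on its average point-to-point jump; the function `looks_unreliable` and both thresholds are arbitrary illustrations, since Google publishes no reliability threshold.

```python
def looks_unreliable(series: list[int], max_zero_share: float = 0.2,
                     max_mean_jump: float = 25.0) -> bool:
    """Flag a 0-100 Trends series that is too sparse or too jumpy to interpret.

    Both default thresholds are arbitrary; Google publishes no reliability cutoff.
    """
    if not series:
        raise ValueError("series is empty")
    # On the Trends chart, 0 means there was not enough data for the term.
    zero_share = series.count(0) / len(series)
    # Average absolute change between consecutive points.
    jumps = [abs(b - a) for a, b in zip(series, series[1:])]
    mean_jump = sum(jumps) / len(jumps) if jumps else 0.0
    return zero_share > max_zero_share or mean_jump > max_mean_jump


print(looks_unreliable([0, 100, 0, 0, 45, 0, 80, 0]))       # True
print(looks_unreliable([60, 64, 70, 75, 72, 68, 66, 71]))  # False
```

A flagged series is not necessarily wrong; it simply means the decision should rest on other sources as well.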

Be careful when comparing terms with very different search volumes on one chart. Google Trends scales all compared terms against a single shared peak, so the smaller term can be flattened toward zero, and the normalized values mask large absolute gaps.
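The flattening happens because every compared term is scaled against the single highest point on the chart. This Python sketch uses invented search shares and a hypothetical `joint_index` helper to show a niche term collapsing to 0 next to a broad term.

```python
def joint_index(shares: dict[str, list[float]]) -> dict[str, list[int]]:
    """Scale several terms' search shares against one shared peak (0-100)."""
    # The peak is the highest share across all terms, not per term.
    peak = max(max(values) for values in shares.values())
    return {term: [round(100 * share / peak) for share in values]
            for term, values in shares.items()}


# Invented shares of total searches over two periods.
result = joint_index({"broad term": [0.02, 0.03], "niche term": [0.0001, 0.00012]})
print(result)  # {'broad term': [67, 100], 'niche term': [0, 0]}
```

The niche term still has real searches and even grows by 20% between the two periods, but on the shared scale it rounds to 0 and its trend disappears.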

What methodology should you adopt to compensate for this limitation?

- Use Google Trends to spot macro trends, never for exact volumes

- Cross-reference with Search Console to validate real organic traffic data on your site

- Supplement with Keyword Planner or third-party tools to get monthly volume ranges

- On niche markets or fine geographic targeting, use multiple sources before concluding

- Prioritize relative comparisons between terms over absolute values

- Document the margin of uncertainty in your analyses in client reports

❓ Frequently Asked Questions

Does Google Trends show the exact monthly search volume for a keyword?

Can you trust Google Trends data for a niche analysis?

What sample size does Google Trends use?

Is Google Trends updated in real time?

Why does my Search Console data never match Google Trends?

🎥 From the same video

Other SEO insights extracted from this same Google Search Central video · published on 31/07/2024
