Official statement

Other statements from this video 8 ▾

- □ Googlebot stocke-t-il les cookies lors de l'exploration de votre site ?

- □ Pourquoi les robots d'exploration ignorent-ils systématiquement vos cookies ?

- □ Les crawlers Google se comportent-ils vraiment comme de vrais navigateurs ?

- □ Pourquoi tester votre site avec un émulateur de user agent ne suffit-il pas à détecter les problèmes de crawl ?

- □ Pourquoi tester votre site avec un crawler est-il indispensable pour le SEO ?

- □ Pourquoi Google refuse-t-il la pagination basée sur les cookies ?

- □ Les cookies bloquent-ils vraiment l'accès des bots à votre contenu ?

- □ Les sites qui dépendent des cookies sont-ils invisibles pour Googlebot ?

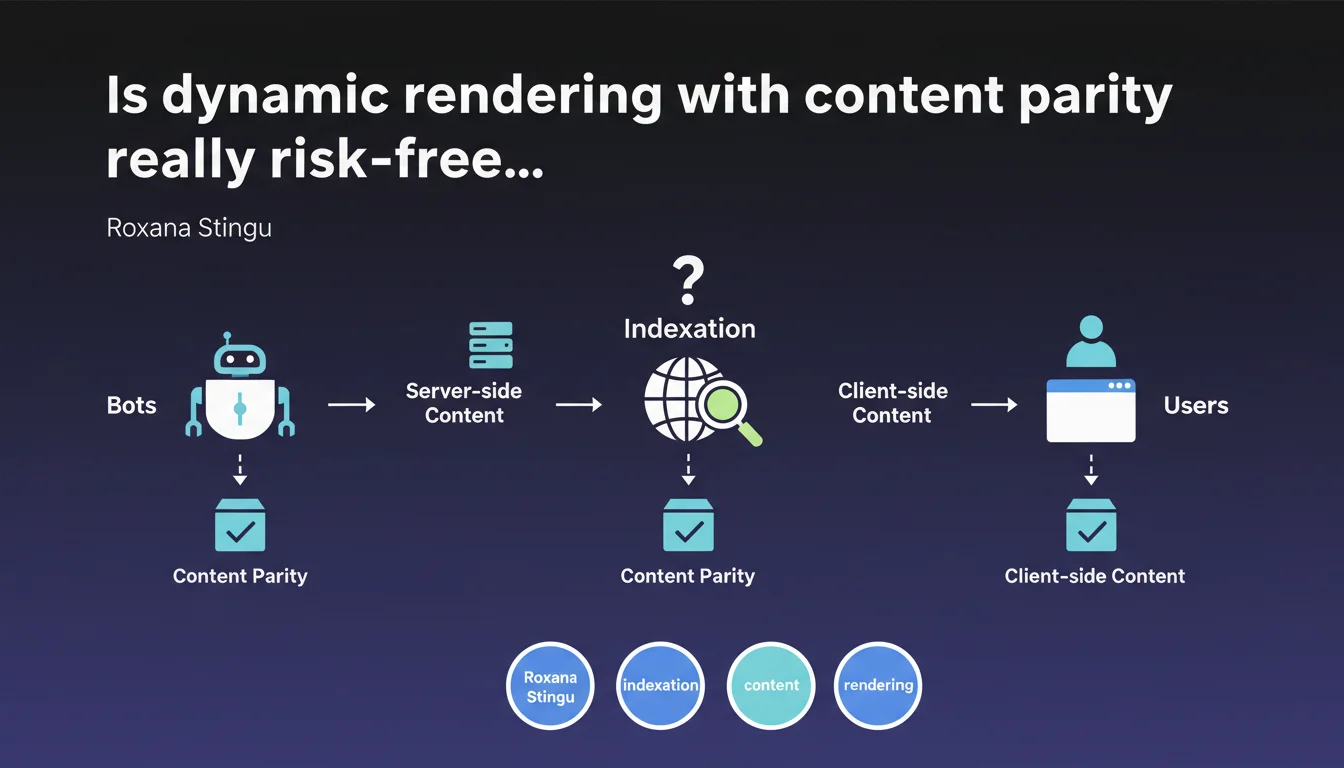

Google accepts dynamic rendering on one condition: strict parity between the server-rendered content served to bots and the client-rendered content shown to users. No divergence is tolerated, and even a slight difference can compromise indexing. The technique remains a workaround, not an official recommendation.

What you need to understand

What is dynamic rendering and why does Google mention it?

Dynamic rendering consists of serving two different versions of the same page: server-side rendered (SSR) content to crawlers and client-side rendered (CSR) content to users. This approach emerged as a stopgap for JavaScript-heavy sites (React, Vue.js) that search engines struggled to crawl and render properly.
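To make the mechanism concrete, here is a minimal Python sketch of the user-agent switch at the heart of dynamic rendering. The crawler token list and the `is_crawler` / `select_variant` helpers are hypothetical; real setups usually delegate this to a prerendering service or server middleware, and a user-agent match alone does not prove that a request really comes from Googlebot.

```python
import re

# Hypothetical list of crawler tokens; a real deployment maintains its own list.
BOT_PATTERN = re.compile(r"googlebot|bingbot|duckduckbot|yandex|baiduspider", re.IGNORECASE)


def is_crawler(user_agent: str) -> bool:
    """Return True if the User-Agent header contains a known crawler token."""
    return bool(BOT_PATTERN.search(user_agent or ""))


def select_variant(user_agent: str) -> str:
    """Choose which version of the page to serve.

    "prerendered": static HTML produced ahead of time (e.g. by a headless browser)
    "client":      the normal JavaScript app shell, rendered in the browser
    """
    return "prerendered" if is_crawler(user_agent) else "client"


print(select_variant("Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"))  # prerendered
print(select_variant("Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 Chrome/120.0 Safari/537.36"))  # client
```

Whatever the routing logic, the parity requirement applies to what comes out of both branches: the "prerendered" and "client" variants must carry the same content.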

Google does not actively recommend this method, but acknowledges that it can work in certain contexts. Roxana Stingu's statement establishes one non-negotiable condition: complete parity between the two versions.

What does "content parity" concretely mean?

Parity means that the HTML served to Googlebot must contain exactly the same information as what a user sees once the page has loaded. No shortcuts. No simplifications. No content hidden from the bot.

If your SSR version displays 10 products and your CSR loads 50 through infinite scroll, you're not in parity. If your search filters are only present on the client side, you're not in parity. If your action buttons generate content that the bot never sees, you're not in parity.
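Each of these examples reduces to one question: what does the user see that the bot never receives? A rough Python sketch using only the standard library compares the text nodes of the HTML served to the bot with those of the rendered DOM (for example, copied from DevTools). The function names are hypothetical, and the check is deliberately crude: it ignores order and duplicates, and it cannot detect text hidden with CSS.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect whitespace-normalised text nodes, skipping <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())
        if text and not self._skip_depth:
            self.chunks.append(text)


def visible_text(html: str) -> set:
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return set(parser.chunks)


def missing_for_bot(bot_html: str, user_dom_html: str) -> set:
    """Text present in the user's rendered DOM but absent from the bot's HTML."""
    return visible_text(user_dom_html) - visible_text(bot_html)


bot_html = "<ul><li>Product 1</li><li>Product 2</li></ul>"
user_dom = "<ul><li>Product 1</li><li>Product 2</li><li>Product 3</li></ul><button>Filter by size</button>"
print(sorted(missing_for_bot(bot_html, user_dom)))  # ['Filter by size', 'Product 3']
```

An empty result does not prove parity, but a non-empty one is a concrete list of what the bot is missing.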

Why is this parity requirement so strict?

Google has been fighting cloaking for years, that is, serving different content to bots and users in order to manipulate rankings. By definition, dynamic rendering comes dangerously close to this red line.

By requiring complete parity, Google ensures you're not using this technique to mislead indexing. Any divergence can be interpreted as an attempt at manipulation, which can lead to a manual action for cloaking.

- Strict parity mandatory: no divergence tolerated between SSR and CSR

- Workaround solution: Google prefers server-side rendering, static rendering, or SSR with client-side hydration

- Cloaking risk: any difference can be treated as cloaking and trigger a manual action

- Technical complexity: maintaining two synchronized versions requires resources

SEO Expert opinion

Is this approach really viable in the long term?

Let's be honest: dynamic rendering is a technical band-aid, not a sustainable architecture. Maintaining two perfectly synchronized renders on a site that evolves daily? It's an operational nightmare.

Every feature addition, every A/B test, every third-party widget risks breaking parity. And the worst part is you won't find out until after a traffic drop. Standard monitoring tools don't detect these divergences — you need regular audits comparing both versions.

Is Google telling the whole truth about its rendering capabilities?

[To verify] Google has claimed for years that its crawler "executes JavaScript". Technically true. In practice? Much more nuanced.

Real-world tests show that Googlebot uses short timeouts, doesn't always handle complex interactions, and can miss content that loads after a delay or only after a user action. Dynamic rendering exists precisely because Google's JavaScript rendering isn't 100% reliable.

If Google had completely solved the JS rendering problem, this statement wouldn't even exist. The fact that we're still talking about it proves it's a sensitive issue.

In what cases can this solution be justified?

Dynamic rendering can be justified on legacy React/Angular sites already in production, where a complete SSR overhaul would be too expensive short-term. It's a patch that buys time.

Crucially, though, this approach should never be Plan A for a new project. If you're developing today with Next.js, Nuxt or Remix, you have access to native SSR and progressive hydration. Why choose complexity?

Practical impact and recommendations

How do you verify that your parity is respected?

First step: compare both versions systematically. Use a Googlebot user-agent to fetch the SSR HTML, then inspect the final DOM on the client side with DevTools. Every key element must be present in both.
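For the first half of that comparison, a minimal Python sketch using only `urllib` can fetch the HTML your server returns to a Googlebot user agent. It executes no JavaScript, which is the point: you get the version prepared for the bot, to compare with the DOM inspected in DevTools. `build_request` and `fetch_as` are hypothetical helpers. If your setup verifies Googlebot by reverse DNS, a spoofed user agent may receive the user version instead; the URL Inspection tool in Search Console remains the reference for what Googlebot actually received.

```python
import urllib.request

# A classic Googlebot user-agent string; Google also uses Chrome-based variants.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"


def build_request(url: str, user_agent: str) -> urllib.request.Request:
    """Prepare a GET request that announces the given User-Agent."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


def fetch_as(url: str, user_agent: str = GOOGLEBOT_UA, timeout: float = 10.0) -> str:
    """Return the raw HTML served for this User-Agent (no JavaScript is executed)."""
    with urllib.request.urlopen(build_request(url, user_agent), timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")
```

For example, `fetch_as("https://www.example.com/category/shoes")` (a placeholder URL) returns the bot version, which you can save to a file next to the DOM copied from DevTools.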

Tools like Screaming Frog let you crawl in "Text Only" and "JavaScript" rendering modes. Compare both exports (indexable URLs, titles, content, internal links); any divergence is a red flag.
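Comparing the two exports by hand gets tedious quickly. The following Python sketch diffs two CSV exports keyed by URL. The column names (`Address`, `Title 1`, `Meta Description 1`, `H1-1`) are assumptions based on typical Screaming Frog "Internal" exports; check the headers of your own files and adjust `KEY` and `FIELDS`.

```python
import csv

# Assumed column names; they can vary with tool version and configuration.
KEY = "Address"
FIELDS = ("Title 1", "Meta Description 1", "H1-1")


def load_export(path: str) -> dict:
    """Index an exported CSV by URL (utf-8-sig strips a leading BOM if present)."""
    with open(path, newline="", encoding="utf-8-sig") as f:
        return {row[KEY]: row for row in csv.DictReader(f)}


def diff_exports(text_only: dict, rendered: dict) -> list:
    """Return (url, field, text_only_value, rendered_value) for each mismatch.

    URLs found by only one of the two crawls are reported with field "<url>".
    """
    diffs = []
    for url in sorted(set(text_only) | set(rendered)):
        a, b = text_only.get(url), rendered.get(url)
        if a is None or b is None:
            diffs.append((url, "<url>",
                          "missing" if a is None else "present",
                          "missing" if b is None else "present"))
            continue
        for field in FIELDS:
            if a.get(field, "") != b.get(field, ""):
                diffs.append((url, field, a.get(field, ""), b.get(field, "")))
    return diffs
```

Usage, with hypothetical file names: `diff_exports(load_export("text_only.csv"), load_export("javascript.csv"))`. An empty list means the compared fields match for every URL both crawls found.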

- Crawl the site with and without JavaScript rendering enabled

- Compare title tags, meta descriptions, h1-h3 between both versions

- Verify that internal links are identical (same href, same anchor)

- Test product pages, category pages and critical landing pages manually

- Monitor server logs to detect response differences based on user-agent

- Automate these tests in your CI/CD to prevent regressions
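Several of these checks (titles, meta descriptions, h1-h3, internal links with their anchors) can be automated. The Python sketch below, standard library only, extracts those elements from two HTML strings (the bot version and the rendered user version) and reports which element types differ, ignoring order. The class and function names are hypothetical, and how you obtain the rendered HTML (headless browser, DevTools export) is left open.

```python
from html.parser import HTMLParser


def _norm(parts):
    """Join text fragments and collapse whitespace."""
    return " ".join("".join(parts).split())


class SeoElements(HTMLParser):
    """Extract title, meta description, h1-h3 text and (href, anchor text) links."""

    def __init__(self):
        super().__init__()
        self.data = {"title": [], "meta_description": [], "h1": [], "h2": [], "h3": [], "links": []}
        self._block, self._block_buf = None, []  # current title/h1/h2/h3
        self._href, self._link_buf = None, []    # current <a href=...>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.data["meta_description"].append((attrs.get("content") or "").strip())
        elif tag in ("title", "h1", "h2", "h3"):
            self._block, self._block_buf = tag, []
        elif tag == "a" and attrs.get("href") is not None:
            self._href, self._link_buf = attrs["href"], []

    def handle_data(self, data):
        # Text can belong to a heading and a link at once (e.g. <h2><a>...</a></h2>).
        if self._block:
            self._block_buf.append(data)
        if self._href is not None:
            self._link_buf.append(data)

    def handle_endtag(self, tag):
        if tag == self._block:
            self.data[tag].append(_norm(self._block_buf))
            self._block = None
        elif tag == "a" and self._href is not None:
            self.data["links"].append((self._href, _norm(self._link_buf)))
            self._href = None


def extract(html: str) -> dict:
    parser = SeoElements()
    parser.feed(html)
    parser.close()
    return parser.data


def parity_report(bot_html: str, user_html: str) -> dict:
    """Map each element type that differs to (bot_values, user_values); order is ignored."""
    bot, user = extract(bot_html), extract(user_html)
    return {k: (bot[k], user[k]) for k in bot if sorted(bot[k]) != sorted(user[k])}


bot = ("<html><head><title>Shoes</title><meta name='description' content='All shoes'></head>"
       "<body><h1>Shoes</h1><h2><a href='/shoes/red'>Red</a></h2></body></html>")
user = bot.replace("</body>", "<a href='/shoes?page=2'>Next</a></body>")
print(list(parity_report(bot, user)))  # ['links']
```

In a CI job, a test along the lines of `assert not parity_report(bot_html, rendered_html)` fails the build as soon as a deployment breaks parity on one of these elements.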

What critical errors must you absolutely avoid?

The most common error: forgetting dynamic content on the SSR side. Search filters, "Load more" buttons, content conditional on cookies — everything that appears on the client side must be present for the bot.

Another classic pitfall: lazy-loaded images or sections that only load on scroll. If your SSR doesn't include them with a valid `src` attribute, Google will never see them.
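A quick audit of the bot HTML can catch this. The Python sketch below flags `<img>` tags whose `src` is missing, empty, or an inline `data:` placeholder, and reports the real URL when one is stored in `data-src` or `data-lazy-src`. Those attribute names are assumptions (they are common in lazy-loading libraries, but yours may differ), and some legitimate small images do use `data:` URIs. Native lazy loading with `loading="lazy"` keeps a real `src`, so it is not flagged.

```python
from html.parser import HTMLParser


class ImgAudit(HTMLParser):
    """Collect <img> tags that a bot reading the HTML cannot resolve to a real image URL."""

    def __init__(self):
        super().__init__()
        self.problems = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        a = dict(attrs)
        src = (a.get("src") or "").strip()
        if not src or src.startswith("data:"):
            # Assumed lazy-loading attributes holding the real URL.
            self.problems.append(a.get("data-src") or a.get("data-lazy-src") or "<no src>")


def images_without_src(html: str) -> list:
    audit = ImgAudit()
    audit.feed(html)
    audit.close()
    return audit.problems


html = ('<img src="/a.jpg" alt="a">'
        '<img data-src="/b.jpg" src="data:image/gif;base64,R0lGOD">'
        '<img data-src="/c.jpg">')
print(images_without_src(html))  # ['/b.jpg', '/c.jpg']
```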

And above all, don't fall into involuntary cloaking. Serving a "lighter" version to bots to improve speed? Tempting, but that's exactly what Google punishes. Parity is non-negotiable.

What is the best strategy for the medium term?

If you're in dynamic rendering today, plan a migration to native SSR or progressive hydration. Modern frameworks (Next.js, Remix, SvelteKit) make this transition easier than ever.

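The ROI is twofold: you eliminate the complexity of maintaining two versions, and you can improve loading performance. Well-configured SSR sends meaningful HTML in the first response, which typically improves First Contentful Paint and Largest Contentful Paint (LCP being one of the Core Web Vitals), provided caching keeps Time to First Byte low.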

❓ Frequently Asked Questions

Is dynamic rendering considered cloaking by Google?

Can dynamic rendering be used for only some pages?

How can you detect a parity break before Google notices it?

Does dynamic rendering affect Core Web Vitals?

Does Google really always crawl with JavaScript enabled today?

Source: Google Search Central video, published on 15/11/2022.