Official statement

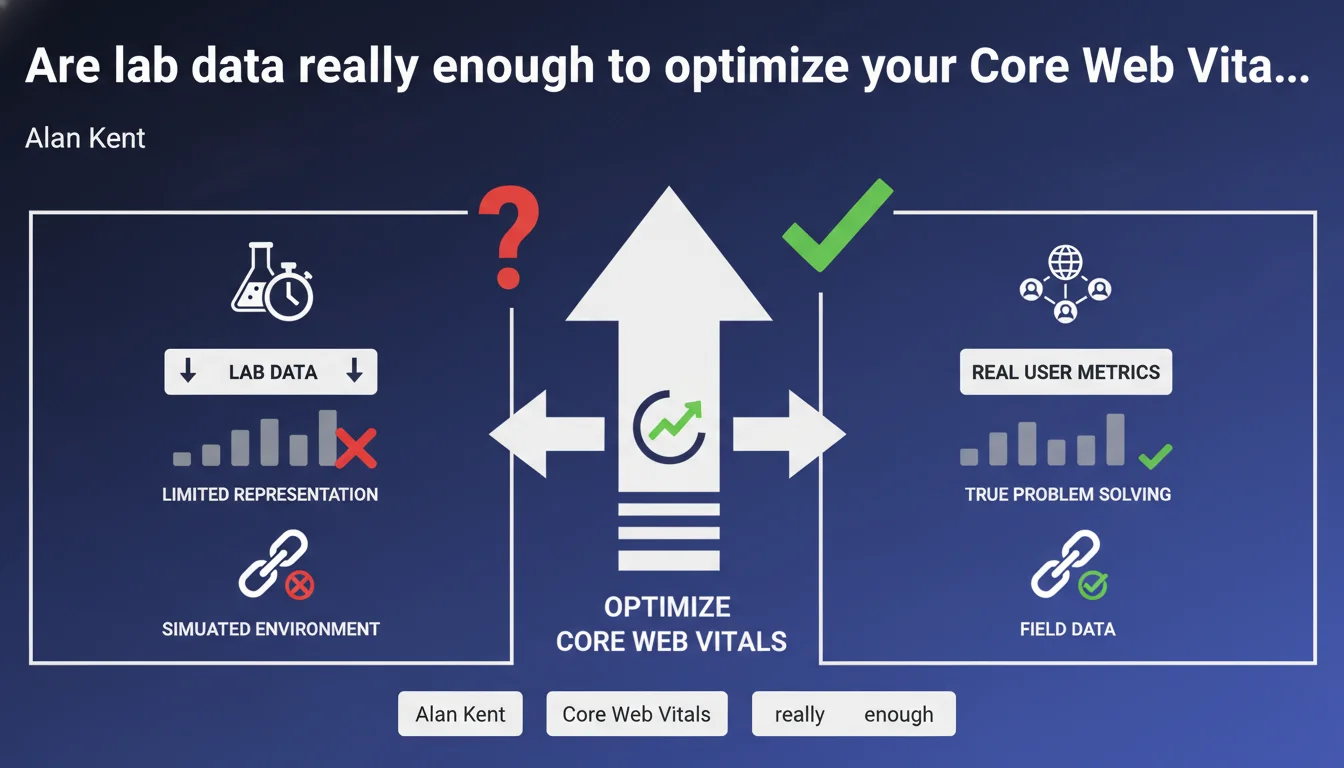

Google insists that only field data (real user data) proves that a performance problem is truly solved for end users. Lab data, while useful for diagnosis, doesn't reflect actual usage conditions and can mislead you about the real impact of your optimizations.

What you need to understand

What's the actual difference between field data and lab data?

Field data comes from real users navigating your site with their own devices, connections, and usage contexts. Google collects it through the Chrome User Experience Report (CrUX), and this is the dataset Google relies on when it evaluates Core Web Vitals for ranking.

Lab data is generated in a controlled environment, typically through Lighthouse, the lab section of PageSpeed Insights, or WebPageTest. Each run uses one device profile and one simulated connection under the same conditions.

Why does Google push so hard on this distinction?

The reason is blunt: you can have a perfect Lighthouse score and catastrophic real-world metrics. A user with a budget smartphone on 3G doesn't experience the same site as your calibrated test environment.

Google wants you optimizing for real-world experience, not for gaming a diagnostic tool. Field data includes device diversity, network variations, browser extensions, caching — everything that makes a site perform differently depending on who's visiting.

How do you access this field data?

CrUX is the canonical source. You can access it via PageSpeed Insights (the "Discover what your real users are experiencing" section), Google Search Console (Core Web Vitals report), or directly through BigQuery for advanced analysis.

You can also implement Real User Monitoring (RUM) with tools like web-vitals.js to collect your own field metrics and understand exactly where things break down for each user segment.
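To make the "per user segment" idea concrete, here is a minimal sketch of what you might do with RUM beacons once they are collected. The beacon shape (`metric`, `value`, `device`) and the function names are assumptions for illustration, not the output format of web-vitals.js, and the nearest-rank percentile used here is one common definition rather than a documented CrUX method.

```python
import math
from collections import defaultdict


def p75(values):
    """Nearest-rank 75th percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def p75_by_segment(beacons, metric, segment_key):
    """Group beacons for one metric by a segment field and return p75 per segment."""
    groups = defaultdict(list)
    for beacon in beacons:
        if beacon["metric"] == metric:
            groups[beacon[segment_key]].append(beacon["value"])
    return {segment: p75(values) for segment, values in groups.items()}


# Hypothetical beacons, as a RUM collection endpoint might store them (LCP in ms).
beacons = [
    {"metric": "LCP", "value": 1400, "device": "desktop"},
    {"metric": "LCP", "value": 1700, "device": "desktop"},
    {"metric": "LCP", "value": 1900, "device": "desktop"},
    {"metric": "LCP", "value": 2200, "device": "desktop"},
    {"metric": "LCP", "value": 2800, "device": "mobile"},
    {"metric": "LCP", "value": 3600, "device": "mobile"},
    {"metric": "LCP", "value": 4100, "device": "mobile"},
    {"metric": "LCP", "value": 5300, "device": "mobile"},
    {"metric": "CLS", "value": 0.05, "device": "mobile"},
]

print(p75_by_segment(beacons, "LCP", "device"))
```

In this made-up dataset the desktop segment looks healthy while the mobile segment does not, which is exactly the kind of gap a single site-wide number hides.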

- CrUX data reflects a rolling 28-day window of real user experience

- Lab data is a starting point for diagnosis, never an end goal

- A site can succeed in the lab and fail in production if your optimization ignores real-world conditions

- Core Web Vitals are assessed at the 75th percentile of field data only: a metric counts as "good" when that percentile meets the "good" threshold
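As a rough illustration of the 75th-percentile rule, the sketch below computes a p75 from a handful of LCP samples and classifies it against the published LCP thresholds (2,500 ms for "good", 4,000 ms for "poor"). The sample values are invented, and the nearest-rank percentile is an assumption: CrUX does not necessarily compute its percentiles this way.

```python
import math

# Published Core Web Vitals thresholds for LCP, in milliseconds.
LCP_GOOD_MS = 2500
LCP_POOR_MS = 4000


def percentile(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))  # 1-based rank
    return ordered[max(rank, 1) - 1]


def classify_lcp(p75_ms):
    """Map an LCP p75 value to the three Core Web Vitals buckets."""
    if p75_ms <= LCP_GOOD_MS:
        return "good"
    if p75_ms <= LCP_POOR_MS:
        return "needs improvement"
    return "poor"


# Hypothetical LCP samples (ms) from eight real page loads.
lcp_samples = [1200, 1800, 2100, 2300, 2400, 2600, 3900, 5200]
lcp_p75 = percentile(lcp_samples, 75)
print(lcp_p75, classify_lcp(lcp_p75))
```

Five of the eight loads are under 2,500 ms, yet the page does not pass: the p75 lands on the sixth value, so the slower quarter of visits decides the outcome.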

SEO Expert opinion

Is this distinction really new to SEO practitioners?

Let's be honest: any SEO who's seriously optimized Core Web Vitals knows this difference. The problem is that many clients and even some professionals remain obsessed with Lighthouse scores — because they're visual, immediate, and easy to present in reports.

Alan Kent reminds us of something the market forgets too often: Lighthouse doesn't rank sites. What matters for Google rankings is CrUX. Full stop.

So are lab metrics completely useless?

No — and this is where the statement could mislead if you read it too quickly. Lab data is essential for diagnosing problems detected in field data. You see a catastrophic LCP in CrUX? Lighthouse helps you identify the bottleneck: render-blocking resources, unoptimized images, slow server.

But — and this is critical — once you've fixed things according to Lighthouse, you must verify in CrUX that it actually worked. If most users are on mobile with slow connections, your lab-tested optimization might remain invisible in real metrics.

What are the practical limits of this approach?

CrUX aggregates data over a rolling 28-day window. You deploy an optimization today? The numbers shift gradually, and you won't see the full impact for about a month. For low-traffic sites, CrUX data may be unavailable or unrepresentative.
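Because CrUX aggregates over a trailing 28-day window, a fix blends in day by day rather than appearing all at once. The toy model below assumes identical traffic every day, with one set of LCP samples per day before the fix and another after it. All values and function names are hypothetical, and the model says nothing about how CrUX samples real traffic.

```python
import math

WINDOW_DAYS = 28


def p75(values):
    """Nearest-rank 75th percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def window_p75(days_since_fix, before, after):
    """p75 over a 28-day window containing `days_since_fix` post-fix days."""
    new_days = min(max(days_since_fix, 0), WINDOW_DAYS)
    old_days = WINDOW_DAYS - new_days
    # Pool every day's samples: old days use `before`, new days use `after`.
    return p75(before * old_days + after * new_days)


def first_good_day(before, after, good_threshold):
    """First day after the fix on which the window p75 meets the threshold, or None."""
    for day in range(WINDOW_DAYS + 1):
        if window_p75(day, before, after) <= good_threshold:
            return day
    return None


# Hypothetical daily LCP samples (ms) before and after an optimization.
before_fix = [3000, 3500, 4200, 4800]
after_fix = [1500, 1800, 2200, 2400]

for day in (0, 7, 14, 21, 28):
    print(day, window_p75(day, before_fix, after_fix))
print("first good day:", first_good_day(before_fix, after_fix, 2500))
```

Under these assumptions the window p75 first drops to 2,500 ms or below on day 21, when three quarters of the window is post-fix traffic. Real sites with uneven traffic will cross the line earlier or later.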

This is where in-house RUM becomes valuable: you get real-time field data segmented however you want. But be careful — your own RUM metrics don't replace CrUX for Google ranking. [Needs verification]: Google has never confirmed whether it weights CrUX data differently based on site traffic volume.

Practical impact and recommendations

How should you prioritize optimizations with this in mind?

Always start with CrUX data to identify problem pages. Search Console tells you exactly which URLs are failing on which metrics — this is your starting point.

Next, use Lighthouse or PageSpeed Insights in lab mode to diagnose the causes on those specific pages. But don't stop at diagnosis: implement the fix, wait for the CrUX update, and validate that the problem is solved for real users.

What traps should you avoid?

Don't over-optimize for use cases that don't represent your actual audience. If 90% of visitors are on desktop with fiber, don't spend weeks optimizing for a Moto G4 on slow 3G — unless you're targeting emerging markets.

Another classic mistake: ignoring metric distribution. The CrUX 75th percentile may look "good" while up to 25% of users have catastrophic experiences. Analyze the full distributions in BigQuery to find your real problems.
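Here is a small sketch of that distribution check. It takes histogram bins with a `start`, an `end`, and a `density` (the share of page loads in the bin) and sums them into the three Core Web Vitals buckets. The bin values are invented, and the field names are an assumption about how you export the histogram, so adapt them to your own query output.

```python
def experience_shares(bins, good_max, poor_min):
    """Sum histogram densities into good / needs improvement / poor shares.

    Bins that straddle a threshold are counted as "needs improvement".
    """
    shares = {"good": 0.0, "needs improvement": 0.0, "poor": 0.0}
    for b in bins:
        if b["end"] is not None and b["end"] <= good_max:
            shares["good"] += b["density"]
        elif b["start"] >= poor_min:
            shares["poor"] += b["density"]
        else:
            shares["needs improvement"] += b["density"]
    return shares


# Hypothetical LCP histogram (ms); the last bin is open-ended.
lcp_bins = [
    {"start": 0, "end": 1000, "density": 0.30},
    {"start": 1000, "end": 2500, "density": 0.46},
    {"start": 2500, "end": 4000, "density": 0.10},
    {"start": 4000, "end": None, "density": 0.14},
]

shares = experience_shares(lcp_bins, good_max=2500, poor_min=4000)
print({name: round(value, 2) for name, value in shares.items()})
```

With about 76% of loads in the "good" bucket, this page passes at the 75th percentile, yet roughly one visit in seven still lands in "poor".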

What needs to be implemented concretely?

- Set up CrUX access through BigQuery to analyze complete metric distributions

- Implement RUM with web-vitals.js for real-time monitoring segmented by device type, region, and more

- Build a workflow: CrUX (identification) → Lighthouse (diagnosis) → fix → CrUX validation

- Segment optimizations by page type and target audience — an e-commerce product page has different priorities than a blog article

- Automate alerts on CrUX regressions to catch deployments that degrade real-world performance
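The alerting step in the list above can start very simply: compare the latest p75 values against a stored baseline and flag anything that got noticeably worse. In this sketch the 10% tolerance, the metric names, and the numbers are all assumptions. How you fetch the values (BigQuery export, RUM database) is left out.

```python
def find_regressions(baseline, current, tolerance=0.10):
    """Return metrics whose current p75 is worse than baseline by more than `tolerance`.

    Assumes lower is better for every metric, which holds for LCP and CLS.
    Values are relative changes, e.g. 0.25 means 25% worse.
    """
    regressions = {}
    for metric, base_value in baseline.items():
        new_value = current.get(metric)
        if new_value is None or base_value <= 0:
            continue  # nothing to compare against
        change = (new_value - base_value) / base_value
        if change > tolerance:
            regressions[metric] = round(change, 3)
    return regressions


# Hypothetical p75 values before and after a deployment.
baseline_p75 = {"LCP": 2200, "CLS": 0.08}
current_p75 = {"LCP": 2750, "CLS": 0.08}

print(find_regressions(baseline_p75, current_p75))
```

A relative tolerance keeps the check from firing on normal day-to-day noise. You may prefer absolute limits tied to the Core Web Vitals thresholds instead.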

❓ Frequently Asked Questions

Is CrUX data available for every site?

Can you improve Core Web Vitals without touching the code?

How long does it take for a deployed optimization to show up in CrUX?

Can lab data look better than field data?

Does RUM replace CrUX data for Google ranking?

Other SEO insights extracted from this same Google Search Central video · published on 06/05/2022
