Official statement

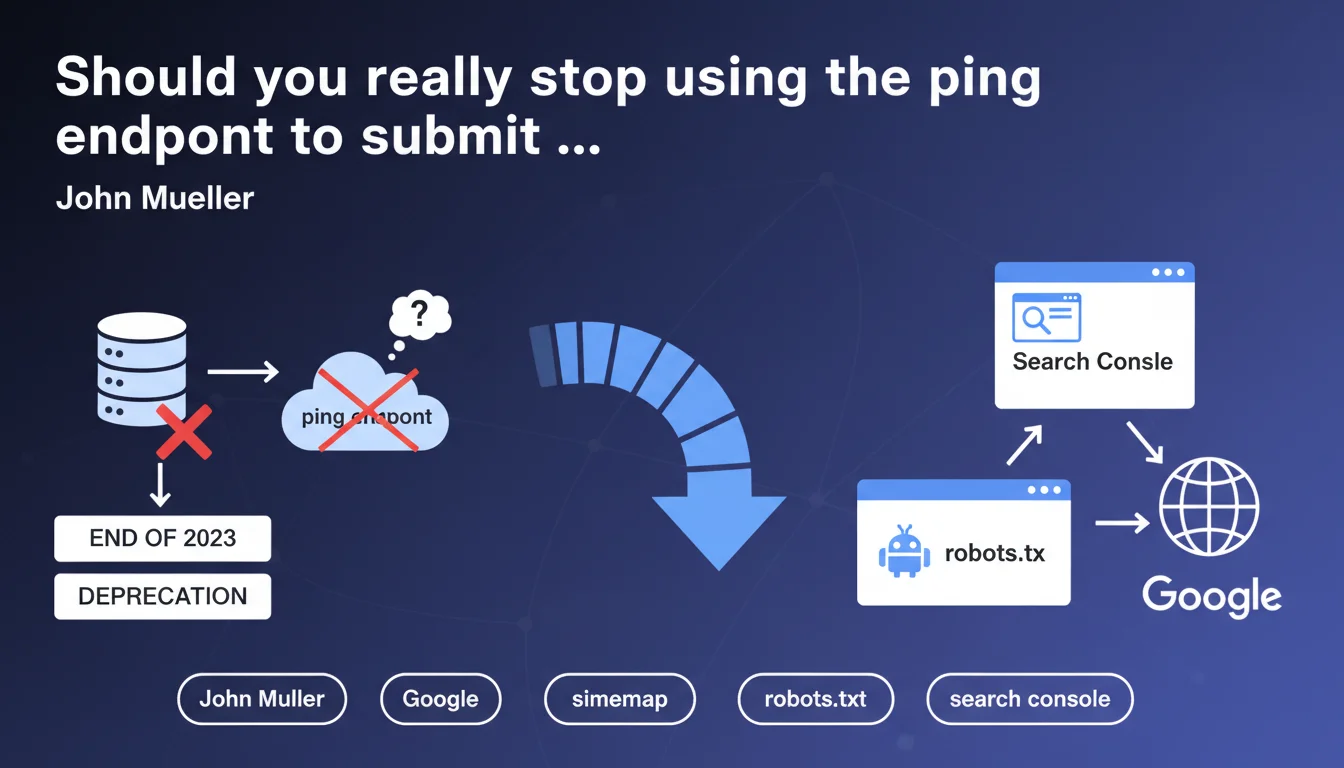

Google has removed the sitemap ping endpoint because the data it received provided no usable value. Only Search Console and robots.txt remain valid submission methods. This change forces a clarification of submission workflows but changes nothing about how sitemaps themselves are processed.

What you need to understand

What is the ping endpoint and why is Google abandoning it?

The ping endpoint allowed you to notify Google via a simple HTTP GET request whenever a sitemap was updated. For years, thousands of sites automated this notification after every content change. Google claims that these data had no practical utility for its crawl system.

The reason given is blunt: the signals sent through this endpoint influenced neither crawl speed nor indexation priority. Google has long relied on its own discovery algorithms rather than manual notifications. The ping had become a technical relic with no real impact.

What are the official alternatives for submitting a sitemap?

Two methods remain validated by Google. The first is Search Console, where you can submit your sitemaps manually or through the API. This is the preferred method for granular control and for tracking processing errors.

The second is to declare your sitemaps in robots.txt with the Sitemap directive. This approach is passive but reliable: Google discovers the sitemaps automatically when it crawls your robots.txt file. No additional action is required after the initial declaration.
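To check what a robots.txt file actually declares, a minimal sketch using Python's standard `urllib.robotparser` module is shown here. The robots.txt content and the `example.com` URLs are placeholders, and the helper name `declared_sitemaps` is hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; example.com is a placeholder domain.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

Sitemap: https://www.example.com/sitemap.xml
Sitemap: https://www.example.com/news-sitemap.xml
"""


def declared_sitemaps(robots_txt: str) -> list[str]:
    """Return the sitemap URLs declared through Sitemap: lines."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap line is present (Python 3.8+).
    return parser.site_maps() or []


print(declared_sitemaps(ROBOTS_TXT))
```

The Sitemap line is independent of any User-agent group, so it can sit anywhere in the file, and several sitemaps can be declared side by side.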

Does this deprecation change how sitemaps function?

No. Sitemap processing remains identical. Google continues to fetch sitemaps regularly and analyze the listed URLs. Of the optional metadata, lastmod serves as a signal when it is consistently accurate, while Google's documentation states that priority and changefreq are ignored. What disappears is only the ability to actively notify Google of an update.
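Because the metadata travels inside the sitemap file itself, keeping it accurate is a generation-time concern. As an illustration only, here is a minimal sketch that writes a sitemap with a lastmod value per URL using the standard library. The function name `build_sitemap`, the URL, and the date are placeholders.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: list[tuple[str, str]]) -> str:
    """Build a sitemap from (loc, lastmod) pairs, lastmod in W3C date format."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


xml = build_sitemap([("https://www.example.com/article-1", "2023-07-05")])
print(xml)
```

The lastmod value should reflect the last meaningful change to the page, not the time the sitemap was regenerated.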

Concretely, if you've configured your sitemap in Search Console or robots.txt, nothing changes in your routine. Google will discover it and process it according to its own crawl logic, regardless of your notifications.

- The ping endpoint is removed because it's useless according to Google

- Search Console and robots.txt become the only official methods

- Sitemap processing remains identical, only the notification disappears

- No observed impact on crawl speed or indexation

SEO Expert opinion

Does this decision truly reflect the uselessness of the ping endpoint?

Let's be honest: Google doesn't shut down a feature used by millions of sites without a solid technical reason. If the ping endpoint provided even a micro-advantage, they would have kept it. The abandonment confirms what many already suspected: these notifications accelerate nothing.

But, and this is where it gets tricky, Google shares no numerical data about the real impact of the ping. No before/after comparison, no efficiency metrics. We have to take their word for it. [To verify]: the real impact of ping on high-velocity editorial sites has never been publicly documented.

What are the consequences for automated submission workflows?

Thousands of CMS platforms, plugins, and SEO tools used this endpoint in the background. Developers will need to migrate to the Search Console API or completely remove this notification. Some tools will continue sending pings into the void for months before being updated.

The problem? The Search Console API requires OAuth authentication, which complicates automation compared to a simple GET request. For small sites with basic workflows, switching to robots.txt is sufficient. For complex platforms with dynamic sitemap generation, the transition requires development work.
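To give an idea of what the migration involves, the sketch below builds (without sending) the authenticated request that replaces the old GET. It assumes the Search Console API's `sitemaps.submit` method is a PUT on `/webmasters/v3/sites/{siteUrl}/sitemaps/{feedpath}`, which should be checked against the current API reference. The function name is hypothetical, and obtaining the OAuth access token (with a Search Console write scope) is out of scope here.

```python
from urllib.parse import quote
from urllib.request import Request

# Assumed API root for the Search Console (webmasters v3) API.
API_ROOT = "https://www.googleapis.com/webmasters/v3"


def build_submit_request(site_url: str, sitemap_url: str, access_token: str) -> Request:
    """Build the PUT request for a sitemap submission; does not send it."""
    # Both the property URL and the sitemap URL are path segments,
    # so every reserved character (including "/") must be percent-encoded.
    endpoint = (
        f"{API_ROOT}/sites/{quote(site_url, safe='')}"
        f"/sitemaps/{quote(sitemap_url, safe='')}"
    )
    return Request(
        endpoint,
        data=b"",  # empty body
        method="PUT",
        headers={"Authorization": f"Bearer {access_token}"},
    )


req = build_submit_request(
    "https://www.example.com/",
    "https://www.example.com/sitemap.xml",
    "ACCESS_TOKEN_PLACEHOLDER",
)
print(req.get_method(), req.full_url)
```

Passing the request to `urllib.request.urlopen` would send it. In practice a client library that handles token refresh is usually a better fit than hand-built requests.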

Does this deprecation hide a deeper change in sitemap management?

Not according to the official statement. But let's observe the facts: Google increasingly pushes Search Console as the central hub for communicating with webmasters. Every deprecation of an alternative channel reinforces this centralization. The ping endpoint joins the list of obsolete methods.

What's critically missing from this announcement: clear guidelines on optimal sitemap crawl frequency. If pinging doesn't accelerate anything, how often does Google actually re-crawl a sitemap? No answer. [To verify]: the impact on sites publishing 50+ articles per day remains unclear.

Practical impact and recommendations

What should you concretely do after this deprecation?

First step: audit all your workflows that use the ping endpoint. List your CMS platforms, plugins, cron scripts, third-party tools. Identify which ones still send pings and plan their migration or deactivation.
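For the scripts and templates you control, part of this audit can be automated. The following is a minimal sketch that flags lines calling the legacy ping URL. It assumes the calls use the `google.com/ping?sitemap=` form or the older `google.com/webmasters/tools/ping?sitemap=` variant. The helper name and the sample script are hypothetical.

```python
import re

# Matches the legacy ping URL and its older /webmasters/tools/ variant.
PING_PATTERN = re.compile(
    r"google\.com/(?:webmasters/tools/)?ping\?sitemap=", re.IGNORECASE
)


def find_ping_calls(source: str) -> list[tuple[int, str]]:
    """Return (line_number, stripped_line) for each line calling the ping URL."""
    return [
        (number, line.strip())
        for number, line in enumerate(source.splitlines(), start=1)
        if PING_PATTERN.search(line)
    ]


# Hypothetical deploy script used as sample input.
SAMPLE_SCRIPT = """\
#!/bin/sh
./build_sitemap.sh
curl "https://www.google.com/ping?sitemap=https://www.example.com/sitemap.xml"
echo done
"""

hits = find_ping_calls(SAMPLE_SCRIPT)
print(hits)
```

Running the same function over every file in a repository (for instance with `pathlib.Path.rglob`) gives a first inventory. Pings sent by third-party plugins or hosted tools will not appear in your own code and still need a manual check.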

Then verify that your sitemaps are properly declared in Search Console AND robots.txt. Redundancy costs nothing and ensures Google discovers them systematically. If your sitemaps are generated dynamically, make sure the URL declared in robots.txt remains stable.

What errors should you avoid during the transition?

Don't remove the ping calls from your code before verifying that your sitemaps are correctly submitted via Search Console. An abrupt transition without verification can create a gap in your tracking. Run both in parallel first.

Also, don't assume that manually submitting a sitemap in Search Console forces immediate crawling. Google processes submissions at its own pace. Submission is a signal, not a priority crawl order.

How can you verify that your sitemaps are properly processed without the ping?

In the Sitemaps section of Search Console, monitor the last-read date. If it stagnates for several weeks while you're publishing regularly, that's a warning signal. As a rule of thumb, an active sitemap should be re-read at least weekly, although Google publishes no guaranteed frequency.
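This check is easy to script once the last-read date is available, whether copied from the report or retrieved through the API. A minimal sketch follows. The 14-day threshold is an arbitrary assumption rather than a Google figure, and `is_stale` is a hypothetical helper.

```python
from datetime import datetime, timedelta, timezone


def is_stale(last_read_iso: str, now: datetime, max_age_days: int = 14) -> bool:
    """Return True when the sitemap was last read more than max_age_days ago."""
    # Expects an ISO 8601 timestamp with an explicit offset, e.g. "+00:00".
    last_read = datetime.fromisoformat(last_read_iso)
    return now - last_read > timedelta(days=max_age_days)


# Fixed reference date so the example is reproducible.
reference = datetime(2023, 7, 20, tzinfo=timezone.utc)
print(is_stale("2023-07-01T08:00:00+00:00", reference))
print(is_stale("2023-07-15T08:00:00+00:00", reference))
```

In a monitoring job, `now` would be `datetime.now(timezone.utc)` and the threshold would be tuned to the site's publishing rhythm.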

Also check the coverage rate: how many URLs from the sitemap are actually indexed? A significant gap indicates a sitemap quality problem or crawlability issues with the listed URLs, not a submission problem.
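The coverage rate itself is simple arithmetic: indexed URLs divided by submitted URLs. A minimal sketch, with made-up counts and a hypothetical helper name:

```python
def coverage_rate(submitted: int, indexed: int) -> float:
    """Share of submitted sitemap URLs that are indexed, between 0.0 and 1.0."""
    if submitted <= 0:
        return 0.0  # avoid division by zero on an empty sitemap
    return indexed / submitted


# Made-up counts for illustration: 400 of 500 submitted URLs indexed.
rate = coverage_rate(submitted=500, indexed=400)
print(f"{rate:.0%}")
```

Tracking this ratio over time is more informative than a single reading, since a sudden drop points to a change on the site rather than to the submission method.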

- Audit all workflows using the ping endpoint

- Submit all sitemaps in Search Console

- Declare sitemaps in robots.txt with the Sitemap: directive

- Migrate automations to the Search Console API if necessary

- Check the date of last read in Search Console monthly

- Monitor the coverage rate of sitemap URLs

- Disable obsolete pings only after validating the new workflow

❓ Frequently Asked Questions

Will the ping endpoint still work after the deprecation date?

Should I submit my sitemap in Search Console AND in robots.txt?

Does submitting a sitemap via Search Console speed up the crawling of my new pages?

Can the Search Console API completely replace the ping endpoint for automations?

How can I tell whether Google crawls my sitemap regularly without the ping?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 05/07/2023
