Official statement

Other statements from this video 7 ▾

- □ Comment la série Ecommerce Essentials de Google révolutionne-t-elle l'approche technique du SEO ?

- □ Le nouveau rapport vidéo Search Console va-t-il changer la donne pour le SEO vidéo ?

- □ Comment survivre à une Core Update de Google sans perdre tout son trafic ?

- □ Pourquoi Google confirme-t-il publiquement certaines Core Updates et pas d'autres ?

- □ Faut-il encore s'embêter avec les balises d'extension de sitemap ?

- □ Faut-il connecter Search Console à Data Studio pour optimiser ses performances SEO ?

- □ Comment Google a-t-il réellement adapté sa lutte anti-spam ces dernières années ?

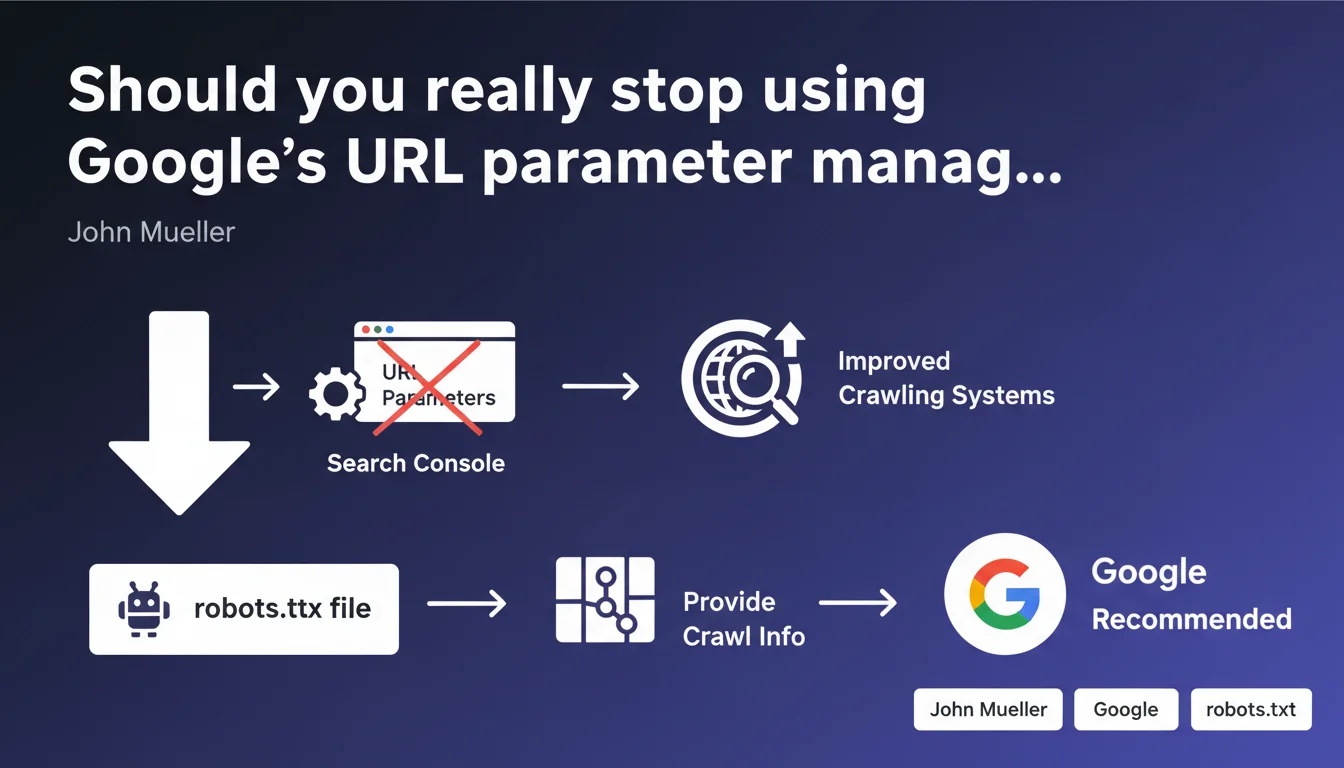

Google is removing the URL parameter management tool from Search Console, believing that its crawling algorithms are now advanced enough to handle parameterized URLs without manual intervention. The robots.txt file becomes the only official option for controlling the crawling of URLs with parameters. This change forces SEOs to reconsider their strategy for managing facets, filters, and sessions.

What you need to understand

Why is Google removing this tool now?

For years, the URL parameter management tool allowed you to tell Google how to treat URLs containing parameters: which ones to actively crawl, which ones to ignore, which parameters changed the page content. It saved valuable time and kept crawl budget from being wasted on thousands of URLs generated by filters or sessions.

According to Mueller, Google's crawling systems have improved so much that this tool has become unnecessary. Translation: Google believes it can identify URL patterns to ignore or prioritize on its own, without needing us to do the work for it.

What concrete alternative does Google propose?

The official recommendation: use the robots.txt file to block crawling of problematic parameterized URLs. Technically feasible with wildcards and patterns, but far less granular than the old tool.

The problem? Robots.txt blocks crawling, period. It doesn't allow you to say "crawl this URL but don't index it" or "this parameter changes the content, that one doesn't". It's all or nothing, whereas the management tool offered finer-grained options.

Which sites are most impacted by this change?

E-commerce sites with complex faceted navigation are on the front lines. Those that generated hundreds of thousands of URLs through filter combinations (color + size + price + brand) lose a fine-tuned control lever.
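To get a feel for the scale, here is a rough back-of-the-envelope count. It is only a sketch: the facet sizes are hypothetical, and it assumes each filter is either unset or set to exactly one value, with parameter order normalized.

```python
from math import prod

# Hypothetical facet sizes: number of selectable values per filter.
facets = {"color": 12, "size": 8, "price": 6, "brand": 40}

def count_filtered_urls(facet_sizes):
    """Count URLs with at least one active filter, assuming each facet is
    either unset or set to exactly one value and parameter order is fixed."""
    # Each facet contributes (values + 1) choices; subtract the unfiltered page.
    return prod(n + 1 for n in facet_sizes.values()) - 1

total = count_filtered_urls(facets)
print(total)  # 33578
```

Multi-select filters or unnormalized parameter order push the count far higher, which is how a single category can end up with hundreds of thousands of crawlable URLs.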

Platforms with session management in URLs, multilingual sites with redundant language parameters, or catalogs with multiple sorting options also see their room for maneuver reduced.

- The URL parameter management tool is disappearing from Search Console

- Google believes its algorithms handle parameterized URLs better than before

- Robots.txt becomes the only recommended method for controlling crawling

- This transition forces a less granular and more binary approach (crawling blocked or allowed)

- Sites with faceted navigation lose a precise crawl budget management tool

SEO Expert opinion

Is Google's confidence in its algorithms justified in the real world?

Let's be honest: Google has indeed made progress in its ability to detect patterns of duplicate or valueless URLs. Observations show that it increasingly ignores obvious sorting or pagination URLs without needing to be told.

But, and this is where it gets tricky, this intelligence isn't uniform across site types. On complex e-commerce architectures with combined filters, Google regularly continues to crawl thousands of useless URLs that a manual parameter configuration would have prevented. [To verify]: the claim that "systems have improved significantly" lacks concrete data on actual improvement rates.

Can robots.txt really replace the parameter management tool?

No, and it's a dangerous oversimplification on Google's part. Blocking with robots.txt stops crawling and, with it, any PageRank flow through the blocked URLs, which can be counterproductive if certain parameterized URLs have value or receive external links.

The old tool allowed you to say "crawl without indexing" or "this parameter changes the content, treat each variation as unique". Robots.txt can't do any of that. It's like replacing a scalpel with an axe.

In which cases does this robots.txt-only approach pose problems?

Concrete case: a retail site receives external links to URLs with UTM or tracking parameters. Blocking these patterns with robots.txt prevents Google from following these links and passing their authority to target pages.

Another problematic scenario: sites that use parameters to manage slightly different content variations (e.g., list vs. grid display, with or without stock). Without the management tool, it is impossible to tell Google "treat these variations as identical": you now have to choose between crawling everything or blocking everything.

Practical impact and recommendations

What should you do concretely with this deprecation?

First step: export your configured parameters from the tool before it's completely gone if you haven't already. Document which parameters you had marked as "changes content", "doesn't change content", or "pagination".

Next, analyze your server logs over 30-90 days to identify which parameters Google actually crawls and in what quantity. Compare with your old configurations: if Google was respecting your directives, its crawl behavior is likely to shift once they disappear, and the transition will be tricky.
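The log analysis can be sketched in a few lines. This example assumes access logs in the common "combined" format and identifies Googlebot by user-agent string alone; the sample lines, paths, and parameter names are made up for illustration.

```python
import re
from collections import Counter
from urllib.parse import urlsplit, parse_qsl

# Matches the request and user-agent fields of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_param_hits(lines):
    """Count Googlebot requests per query parameter name."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        query = urlsplit(match.group("path")).query
        # keep_blank_values so that "?sessionid=" is still counted.
        names = {key for key, _ in parse_qsl(query, keep_blank_values=True)}
        hits.update(names)  # each parameter counted once per request
    return hits

# Hypothetical sample lines.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    '66.249.66.1 - - [01/Jun/2022:10:00:00 +0000] "GET /shoes?color=red&sort=price HTTP/1.1" 200 5120 "-" "' + GOOGLEBOT + '"',
    '66.249.66.1 - - [01/Jun/2022:10:00:05 +0000] "GET /shoes?sort=name&sessionid=abc HTTP/1.1" 200 5087 "-" "' + GOOGLEBOT + '"',
    '203.0.113.7 - - [01/Jun/2022:10:00:09 +0000] "GET /shoes?color=blue HTTP/1.1" 200 4990 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

hits = googlebot_param_hits(sample)
for name, count in hits.most_common():
    print(name, count)
```

User-agent strings can be spoofed, so for a real audit you would also want to verify that the requests come from Google (for example via reverse DNS), which this sketch leaves out.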

How do you adapt your crawl budget strategy without the tool?

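For clearly useless parameters (sessions, internal tracking, redundant sorting), a robots.txt directive with a wildcard works fine: Disallow: /*?sort= or Disallow: /*?sessionid=. These patterns only match when the parameter comes first in the query string; add Disallow: /*&sort= and Disallow: /*&sessionid= to catch it in any position.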
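Before deploying such rules, it helps to test which URLs they would actually catch. The following is a simplified matcher, not Google's parser: it assumes only the two wildcards Google documents for robots.txt (`*` for any sequence of characters and a trailing `$` for end of URL) and ignores Allow rules and rule precedence. The example URLs are hypothetical.

```python
import re

def rule_to_regex(rule):
    """Translate a robots.txt path rule into a regex, supporting '*'
    (any characters) and a trailing '$' (end of URL)."""
    anchored = rule.endswith("$")
    body = rule[:-1] if anchored else rule
    pattern = ".*".join(re.escape(part) for part in body.split("*"))
    return re.compile(pattern + ("$" if anchored else ""))

def is_blocked(path_and_query, disallow_rules):
    """True if any Disallow rule matches from the start of the path."""
    return any(rule_to_regex(rule).match(path_and_query) for rule in disallow_rules)

first_position_only = ["/*?sort="]
any_position = ["/*?sort=", "/*&sort="]

print(is_blocked("/shoes?sort=price", first_position_only))            # True
print(is_blocked("/shoes?color=red&sort=price", first_position_only))  # False
print(is_blocked("/shoes?color=red&sort=price", any_position))         # True
print(is_blocked("/shoes?color=red", any_position))                    # False
```

The second call shows why the position of the parameter matters: a rule written with `?` misses the same parameter when it follows another one after `&`.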

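For more nuanced cases, you need to combine multiple levers: canonical tags to group variations, noindex on overly deep facet combinations (which Google can only see if those URLs remain crawlable), and clean pagination or a View All page if relevant. Google has said it no longer uses rel=next/prev as an indexing signal, so don't rely on it. Control becomes multi-channel instead of centralized in a single tool.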
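As an illustration of the canonical lever, here is a minimal sketch of how a canonical URL could be derived from a parameterized one. The list of ignored parameters is hypothetical and would need to reflect your own site; the approach (drop content-neutral parameters, sort the rest) is one possible convention, not a Google requirement.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical list of parameters that do not change page content.
IGNORED_PARAMS = {"sessionid", "sort", "view", "utm_source", "utm_medium", "utm_campaign"}

def canonical_url(url):
    """Drop ignored parameters and sort the rest so equivalent URLs collapse to one."""
    parts = urlsplit(url)
    kept = sorted(
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if name.lower() not in IGNORED_PARAMS
    )
    # Rebuild the URL without the fragment and with the normalized query string.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))

print(canonical_url("https://example.com/shoes?utm_source=news&size=42&color=red&sort=price"))
# https://example.com/shoes?color=red&size=42
```

The resulting value is what you would emit in the page's rel="canonical" link, so that every tracking or sorting variant points to the same URL.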

Sites with complex faceted navigation must rethink their architecture: limit the number of combinable filter levels, implement client-side JavaScript for secondary filters (with accessible fallback), or create static landing pages for popular combinations.
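One way to limit combinable filter levels is a depth-based policy: index pages with few active facets and mark deeper combinations noindex. The sketch below assumes a hypothetical set of facet parameters and an arbitrary threshold of two; both are illustrative choices, not recommendations from Google.

```python
from urllib.parse import urlsplit, parse_qsl

# Hypothetical configuration: which parameters are facets and how deep to index.
FACET_PARAMS = {"color", "size", "price", "brand"}
MAX_INDEXABLE_FACETS = 2

def robots_meta_for(url):
    """Return a robots meta value based on how many facets are active in the URL."""
    params = parse_qsl(urlsplit(url).query)
    active = {name for name, _ in params if name in FACET_PARAMS}
    if len(active) <= MAX_INDEXABLE_FACETS:
        return "index, follow"
    return "noindex, follow"

print(robots_meta_for("https://example.com/shoes?color=red&size=42"))             # index, follow
print(robots_meta_for("https://example.com/shoes?color=red&size=42&brand=acme"))  # noindex, follow
```

Keep in mind that a noindex directive is only seen if the URL can be crawled, so the same URLs should not also be blocked in robots.txt.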

What mistakes should you avoid during this transition?

Classic mistake: blocking all parameters en masse with robots.txt as a precaution. The result is that legitimate pages become inaccessible to Googlebot, and organic traffic collapses on the long-tail queries that were landing on parameterized URLs.

Another trap: doing nothing thinking "Google handles everything on its own now". On medium to large sites, inaction leads to continuous crawl budget waste and potentially duplicate content problems if canonicals aren't perfectly configured.

- Export and document your current URL parameter configurations before the tool closes

- Analyze server logs to measure the real impact of parameters on your crawl budget

- Identify strictly useless parameters (sessions, tracking) and block them via robots.txt

- Audit your canonical tags to ensure they cover all parameterized variations

- Implement noindex on deep facet combinations or low-relevance ones

- Check in Search Console that the URLs you plan to block don't have external backlinks before applying robots.txt

- Monitor the evolution of crawled and indexed page counts after each change

- Review the architecture of faceted sites to reduce unnecessary parameterized URL generation

❓ Frequently Asked Questions

Has the URL parameter management tool already been removed, or can it still be used?

If I block parameters in robots.txt, can Google still index those URLs?

How do you handle parameterized URLs that receive external backlinks?

Should all faceted navigation filters now be blocked in robots.txt?

Does Google really detect useless parameterized URLs better than before?


Source: Google Search Central video · published on 23/06/2022
