Official statement

Other statements from this video (7):

- How is Google's Ecommerce Essentials series revolutionizing the technical approach to SEO?

- Will the new Search Console video report change the game for video SEO?

- How do you survive a Google Core Update without losing all your traffic?

- Why does Google publicly confirm some Core Updates and not others?

- Should you really stop using the URL Parameters tool in Search Console?

- Is it still worth bothering with sitemap extension tags?

- Should you connect Search Console to Data Studio to optimize your SEO performance?

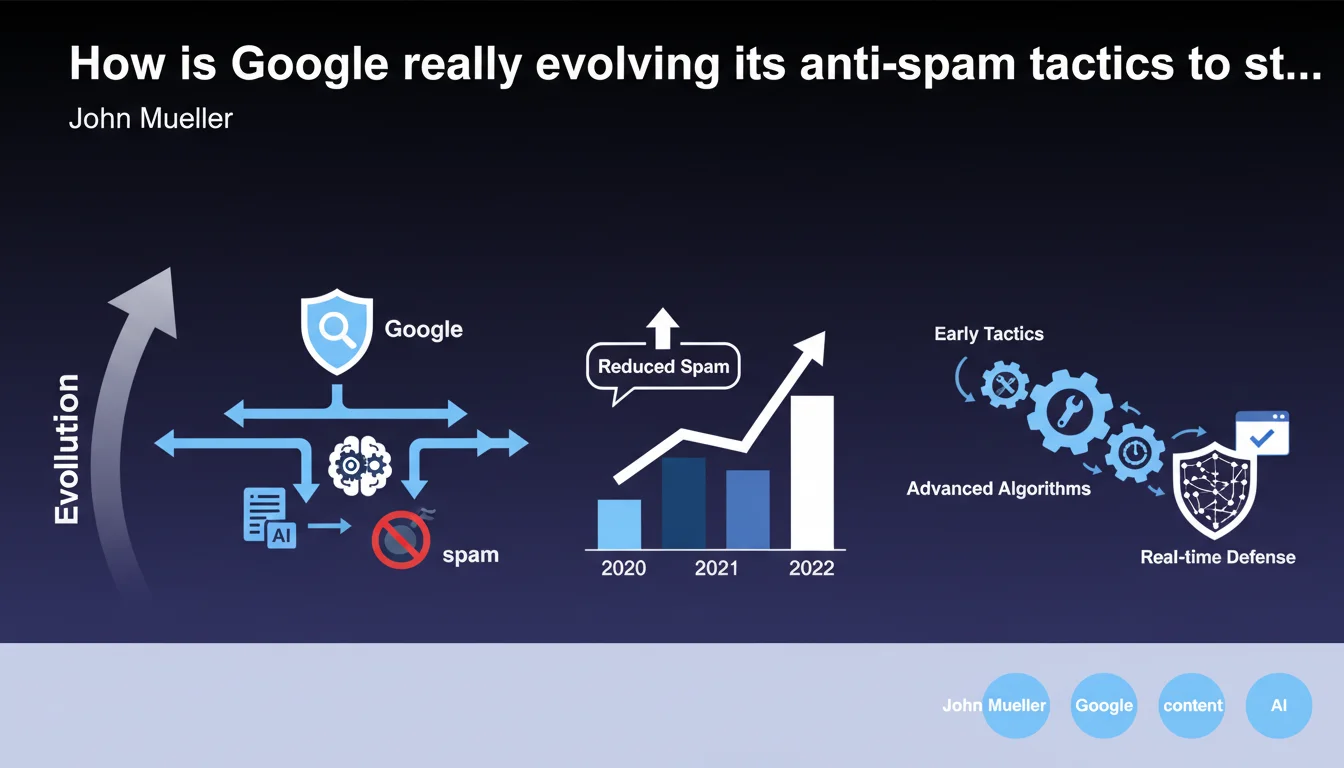

Google has released its annual Webspam report, which details how spam and malicious content, and the methods used to fight them, have evolved. The document reveals significant changes in detection and penalization methods over time. For SEO practitioners, it's an opportunity to understand where the bar is set and how it keeps shifting.

What you need to understand

Why does Google publish this report every single year?

Publishing the annual Webspam report isn't purely an exercise in transparency. Google wants to show publicly that it invests heavily in the quality of its search results. It's also a way to deter bad actors by showcasing the growing effectiveness of its systems.

For SEO professionals, this report offers valuable clues about Google's current spam priorities. The types of manipulation the report singles out are typically the ones Google is targeting with stronger algorithms and more manual review.

What major shifts are happening in anti-spam detection methods?

The report explicitly mentions that methods have evolved over the years. In practical terms, this means that spam techniques that worked a few years ago are now largely neutralized — or worse, actively penalized.

Google continuously refines its algorithms to detect increasingly sophisticated patterns. Spammers adapt, Google adapts. The Webspam report documents this perpetual cycle, showing that old playbooks gradually become obsolete, or even toxic for your search rankings.

What does "malicious content" actually mean in this context?

Beyond classic spam (link farms, auto-generated content, keyword stuffing), Google treats as "malicious content" any attempt to manipulate results at the expense of users: doorway pages, cloaking, deceptive redirects, malware injection.

The report doesn't always spell out the complete taxonomy with precision — intentionally. Google prefers to keep some ambiguity so as not to hand spammers a how-to guide.

- Constant evolution: anti-spam techniques become more sophisticated year after year

- Signaled priorities: the types of spam mentioned in the report are those being actively targeted

- Broad spam definition: includes algorithmic manipulation, deceptive content, and security threats

- Strategic opacity: Google never reveals all its criteria to prevent workarounds

SEO Expert opinion

Is this claim actually consistent with what we see in practice?

Yes and no. Sites engaging in blatant spam (obvious PBNs, low-quality spun content, keyword stuffing) are indeed neutralized faster than before. On that point, Google's claims match what we observe on the ground.

However, some subtler schemes, like well-disguised link networks or lightly edited AI content, still regularly slip through the cracks. The Webspam report gives the impression of total control, but real-world evidence shows that effectiveness depends heavily on how sophisticated the techniques are. [Needs verification]: the true proportion of spam caught automatically versus through manual review remains unclear.

What nuances should we add to this official narrative?

Google never publishes granular data: How many sites were penalized? What is the false positive rate? What is the split between automated actions and manual reviews? This lack of transparency makes it hard to evaluate real effectiveness objectively.

Plus, the definition of "spam" can shift. Some legitimate sites occasionally suffer penalties that look like collateral damage, especially during broad algorithm rollouts (like the Helpful Content Update). The report never mentions these false positives — yet they exist.

When does this rule actually break down?

Let's be frank: in ultra-competitive sectors (online gambling, finance, health), massive manipulation still persists despite Google's stated efforts. These niches generate too much revenue for spammers to quit easily.

The Webspam report reflects a general trend, but don't assume Google has completely eradicated the problem. There are still gray zones where borderline tactics work — at least temporarily.

Practical impact and recommendations

What should you actually do after this announcement?

First step: audit your current practices. Verify that you're not using any techniques that could be interpreted as manipulative, even marginally. Google's criteria evolve, and what flew under the radar before might now trigger a penalty.

Next, focus on editorial quality and user experience. It's your best defense against future anti-spam updates. A site that delivers real value, with coherent internal linking and original content, will always carry less risk.

What mistakes should you absolutely avoid?

Don't try to disguise borderline techniques hoping they'll slip by unnoticed. Google's systems are becoming increasingly effective at detecting suspicious patterns, even when well-hidden.

Also avoid falling into excessive paranoia. Not every backlink is toxic, not every on-page optimization is spam. You need to know how to tell the difference between legitimate optimization and manipulation.

How can you verify your site remains compliant?

Regularly use Google Search Console to monitor manual actions. Examine your link profiles with tools like Ahrefs or Majestic to spot questionable backlinks. Disavow if necessary, but use good judgment.
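If a manual review of your link profile does lead you to disavow, the file Google's disavow tool accepts is plain text with one entry per line: a `domain:` prefix for a whole domain, a full URL for a single page, and `#` for comment lines. The minimal Python sketch below assembles such a file from entries you have already vetted by hand. The function name `build_disavow_file` and the example domains are hypothetical. The sketch does not score links or upload anything, and deciding what belongs in the list remains a judgment call.

```python
def build_disavow_file(domains=(), urls=(), note=None):
    """Assemble disavow-file text from entries already reviewed by hand.

    Assumed format: one entry per line, 'domain:' prefix to disavow a whole
    domain, full URLs for individual pages, '#' at the start of comment lines.
    Returns an empty string when there is nothing to disavow.
    """
    lines = []
    if note:
        # Comment line recording when or why the list was compiled
        lines.append(f"# {note}")
    seen_domains = set()
    for d in domains:
        d = d.strip().lower()  # normalize case and stray whitespace
        if d and d not in seen_domains:
            seen_domains.add(d)
            lines.append(f"domain:{d}")
    seen_urls = set()
    for u in urls:
        u = u.strip()  # URLs are kept as-is apart from surrounding whitespace
        if u and u not in seen_urls:
            seen_urls.add(u)
            lines.append(u)
    return "\n".join(lines) + "\n" if lines else ""


if __name__ == "__main__":
    # Hypothetical entries; duplicates differing only in case are merged
    text = build_disavow_file(
        domains=["Spam.example", "spam.example", "links.example"],
        urls=["https://other.example/page.html"],
        note="Reviewed manually",
    )
    print(text, end="")
```

Deduplication and lowercasing of domains are conveniences, not requirements; they simply keep a hand-maintained list from growing redundant entries over successive audits.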

Track your position fluctuations after each major update. A sudden drop could signal a problem detected by a new anti-spam algorithm. If this happens, investigate quickly and fix the cause.
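One simple way to make "track your positions after each update" concrete is to compare average position in equal windows before and after the rollout date. The Python sketch below assumes you have daily `(date, average_position)` pairs, for example exported from Search Console's Performance report, where a lower position is better. The function name `position_change`, the 14-day window and the 3-position threshold are all hypothetical choices for illustration, not figures from Google.

```python
from datetime import date, timedelta
from statistics import mean


def position_change(records, update_day, window_days=14, threshold=3.0):
    """Compare average position in equal windows around an update date.

    records: iterable of (date, average_position) pairs (lower = better).
    Returns (before, after, delta, flagged), or None if either window is empty.
    A positive delta means the site or page now ranks lower.
    """
    records = list(records)  # iterated twice below
    # Days strictly before the update, at most window_days back
    before = [p for d, p in records if 1 <= (update_day - d).days <= window_days]
    # The update day itself plus the following window_days - 1 days
    after = [p for d, p in records if 0 <= (d - update_day).days < window_days]
    if not before or not after:
        return None
    b, a = mean(before), mean(after)
    delta = a - b
    # threshold is an arbitrary illustrative cut-off, not a Google figure
    return b, a, delta, delta >= threshold


if __name__ == "__main__":
    update = date(2022, 6, 1)  # hypothetical rollout date
    rows = [(update - timedelta(days=i), 4.0) for i in range(1, 8)]
    rows += [(update + timedelta(days=i), 9.0) for i in range(7)]
    print(position_change(rows, update))  # (4.0, 9.0, 5.0, True)
```

A flagged drop only shows that rankings moved around the update date; seasonality, site changes or competitors can produce the same pattern, so treat it as a prompt to investigate rather than proof of a spam-related penalty.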

- Regularly audit your SEO practices to eliminate any suspicious elements

- Prioritize editorial quality and user experience above all else

- Monitor Google Search Console to detect manual actions

- Analyze your backlink profile and disavow toxic links you identify

- Track your rankings after each update to spot potential penalties

- Document your optimizations so you can justify their legitimacy if needed

❓ Frequently Asked Questions

Does the Webspam report reveal specific spam techniques to avoid?

Can a legitimate site be penalized by mistake during anti-spam actions?

How often does Google update its anti-spam systems?

Should you systematically disavow all suspicious backlinks?

Are the spam techniques mentioned in the report permanently eradicated?


Other SEO insights extracted from this same Google Search Central video · published on 23/06/2022
