Official statement

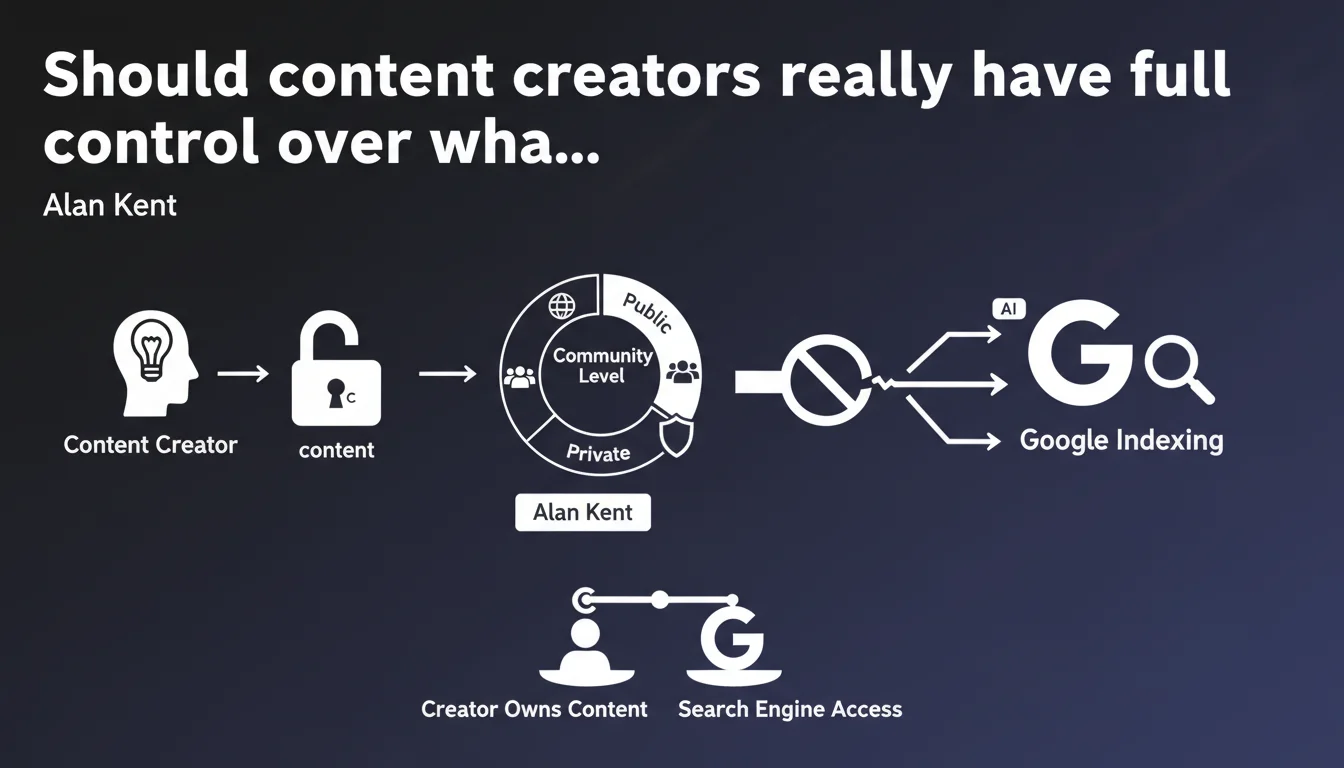

Alan Kent asserts that content creators must maintain control over what is exposed to search engines. It's up to the site owner to decide what becomes public and at what level, because it's their content. A position that reaffirms the importance of robots.txt files, meta robots tags, and granular indexation strategies.

What you need to understand

Why is Google reiterating this principle now?

This statement comes at a time when indexing robots are multiplying — not just Google, but also AI crawlers, aggregators, and third-party tools. Creators sometimes lose control over what gets scraped, how, and by whom.

Alan Kent reminds us of a fundamental principle: it's up to the content owner to decide what should be accessible or not. Not search engines, not third parties. This position defends creator autonomy in the face of sometimes overly aggressive indexation.

What does "controlling available content" concretely mean?

It means controlling which content is crawlable, indexable, and viewable by search engines. This is achieved through technical directives: robots.txt, meta robots tags, sitemap files, URL parameters, and mobile or AMP version management.

Google acknowledges here that creators must be able to define access levels — from fully public to completely private, including intermediate zones reserved for certain communities or subscribers.

What tools are available to exercise this control?

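The classic mechanisms remain the most reliable: robots.txt to block crawling, noindex to prevent indexation, and canonical to manage duplicates. The URL Parameters tool in Search Console, once used to avoid wasting crawl budget, was retired by Google in 2022, so parameter variations are now better handled with canonicals and targeted robots.txt rules.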

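For paid or restricted content, schema.org paywalled-content markup (the isAccessibleForFree property) or 401/403 HTTP status codes can signal to Google that a resource isn't accessible to the general public. Note that 401 and 403 are access-denied responses, not redirects: they block the crawler outright, whereas the markup only describes the restriction. The line between signaling and complete blocking is therefore easy to blur.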

- Robots.txt: blocks crawling upstream, but doesn't prevent indexation if the URL is known elsewhere

- Meta robots noindex: prevents indexation even if the page is crawled

- Canonical: indicates which version of content should be prioritized in the index

- URL parameter management: avoids indexing unnecessary variations (filters, sessions, tracking)

- Paywall Schema: signals paid content to avoid cloaking penalties
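To make the first bullet concrete, here is a minimal sketch of how a robots.txt rule set can be evaluated before deployment, using Python's standard `urllib.robotparser`. The robots.txt content, the example.com URLs, and the helper name `is_crawl_allowed` are assumptions made for illustration. The standard-library parser applies rules in file order, which may differ from Google's longest-match behavior when Allow and Disallow rules overlap, so treat the result as a sanity check rather than a prediction of what Googlebot will do.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for an example site (not taken from the article).
ROBOTS_TXT = """\
User-agent: *
Disallow: /members/
Disallow: /cart/
"""


def is_crawl_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    # parse() accepts the file content as an iterable of lines.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for path in ("/blog/post", "/members/area"):
        url = "https://example.com" + path
        print(path, is_crawl_allowed(ROBOTS_TXT, "Googlebot", url))
```

A rule that blocks crawling says nothing about indexation: a disallowed URL can still be indexed without content if it is linked from elsewhere.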

SEO Expert opinion

Is this statement consistent with real-world practices?

Yes and no. Google generally respects robots.txt and noindex directives; this is documented, tested, and verified. But pages blocked by robots.txt can still appear in the index when they have strong external links. Google's reasoning, in effect: "we couldn't crawl the page to see the noindex, so we index the URL without its content."

The other concern: third-party crawlers don't always respect these rules. AI bots, scrapers, aggregators sometimes ignore robots.txt. Google only controls part of the ecosystem — this statement applies to Googlebot, not the entire web.

What nuances should be added to this principle?

Controlling what gets indexed is good. But blocking too much can kill your visibility. I've seen sites accidentally noindex strategic pages, or block CSS/JS resources in robots.txt that Google needs to properly render content — Google then can't correctly evaluate the page.

You also need to understand that Google dislikes opacity. If you hide too much, if you play with gray areas (disguised cloaking, poorly signaled paid content), you risk manual penalties. Control, yes — but with transparency. [To verify]: Google has never precisely detailed where the line is between "legitimate restricted content" and "abusive concealment."

In what cases does this rule not fully apply?

Closed social networks or corporate intranets aren't affected — they're already out of Googlebot's reach. However, mixed content (partially public, partially paid) poses problems: Google wants to see enough content to assess relevance, but not everything if it's restricted to subscribers.
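For the mixed public/paid case, the sketch below builds the kind of JSON-LD commonly used to mark up paywalled content: an article flagged with `isAccessibleForFree: false` and a `hasPart` element pointing at the gated section. The headline, the `.paywall` CSS class, and the function name are placeholders chosen for illustration; check the exact properties against Google's current structured data documentation before relying on them.

```python
import json


def paywalled_article_jsonld(headline: str, css_selector: str) -> str:
    """Build JSON-LD describing an article whose gated part matches css_selector."""
    data = {
        "@context": "https://schema.org",
        "@type": "NewsArticle",
        "headline": headline,
        # The article as a whole is not freely accessible.
        "isAccessibleForFree": False,
        "hasPart": {
            "@type": "WebPageElement",
            "isAccessibleForFree": False,
            # Assumed class name wrapping the subscriber-only section.
            "cssSelector": css_selector,
        },
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(paywalled_article_jsonld("Example headline", ".paywall"))
```

The output would typically be embedded in the page inside a `<script type="application/ld+json">` element.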

Another edge case: user-generated content (forums, comments, UGC). You're technically responsible for it, but you didn't create it. Blocking UGC indexation too aggressively can limit your visibility, but allowing everything to be indexed exposes you to spam and duplicate content.

Practical impact and recommendations

What should you concretely do to maintain control over indexation?

First step: audit what is currently indexed. Type site:yourdomain.com into Google and compare it with what you actually want to appear. Use Search Console to identify indexed pages not submitted in the sitemap — often these are pages you didn't want to expose.
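The comparison described above can be sketched as a simple set difference. This example assumes you have exported a list of indexed URLs (for instance from Search Console) and have the sitemap XML at hand; the function names and sample data are hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Set

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> Set[str]:
    """Extract every <loc> value from a standard urlset sitemap."""
    root = ET.fromstring(sitemap_xml)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def unexpected_indexed(indexed: Set[str], sitemap_xml: str) -> Set[str]:
    """Return indexed URLs that were never submitted in the sitemap."""
    return indexed - sitemap_urls(sitemap_xml)


if __name__ == "__main__":
    sample = (
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
        "<url><loc>https://example.com/</loc></url>"
        "</urlset>"
    )
    indexed = {"https://example.com/", "https://example.com/cart"}
    print(unexpected_indexed(indexed, sample))
```

URLs returned by `unexpected_indexed` are candidates for review, not automatically errors: some pages are legitimately indexed without being in the sitemap.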

Next, implement a coherent indexation strategy. Clearly define which sections must be public, which should remain private, and which are reserved for members. Document these rules in a readable robots.txt file and maintain a clean sitemap that only lists indexable URLs.

What mistakes should you absolutely avoid?

Never block your CSS and JavaScript resources in robots.txt — Google needs them to properly display your pages. Don't mix robots.txt and noindex on the same page: if you block crawling, Google can't read the noindex, so the page may stay in the index.
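The interaction between the two directives can be summarized as a small decision table. The sketch below is a simplification based only on the behavior described in this article; the function name and the wording of the outcomes are illustrative.

```python
def indexation_outcome(crawl_allowed: bool, has_noindex: bool) -> str:
    """Describe what a search engine can do with a page given both signals."""
    if not crawl_allowed and has_noindex:
        # The crawler never fetches the page, so it never sees the noindex.
        return "conflict: noindex cannot be read while crawling is blocked"
    if not crawl_allowed:
        return "not crawled: URL may still be indexed without content"
    if has_noindex:
        return "crawled and excluded from the index"
    return "crawled and indexable"


if __name__ == "__main__":
    for crawl in (True, False):
        for noindex in (True, False):
            print(crawl, noindex, "->", indexation_outcome(crawl, noindex))
```

To take a page out of the index, the usual approach is therefore to allow crawling and serve noindex, rather than blocking the URL in robots.txt.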

Also avoid inconsistencies between directives. A page with noindex that receives a canonical link to another URL, or a page blocked by robots.txt but listed in the sitemap — this kind of contradictory signal slows down indexation and creates confusion.
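These contradictions are easy to detect once the signals for each page are collected in one place. The following sketch assumes a hypothetical `PageSignals` record filled in by your own crawl; the field names and the list of checks are illustrative and not exhaustive.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PageSignals:
    """Indexation signals gathered for one URL (hypothetical structure)."""
    url: str
    blocked_by_robots: bool = False
    noindex: bool = False
    canonical: Optional[str] = None
    in_sitemap: bool = False


def find_conflicts(page: PageSignals) -> List[str]:
    """Return a description of each contradictory signal found on the page."""
    issues = []
    if page.noindex and page.canonical and page.canonical != page.url:
        issues.append("noindex combined with a canonical pointing elsewhere")
    if page.blocked_by_robots and page.in_sitemap:
        issues.append("blocked by robots.txt but listed in the sitemap")
    if page.noindex and page.in_sitemap:
        issues.append("noindex but listed in the sitemap")
    return issues


if __name__ == "__main__":
    page = PageSignals(
        url="https://example.com/filter?color=red",
        noindex=True,
        canonical="https://example.com/filter",
        in_sitemap=True,
    )
    for issue in find_conflicts(page):
        print(page.url, "->", issue)
```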

How can you verify that your site complies with this control logic?

Use the URL inspection tool in Search Console to test page by page. Verify that the rendering matches your expectations and that directives are correctly interpreted. Check the coverage report (since renamed the Page indexing report) to spot "Excluded" and "Indexed, though blocked by robots.txt" pages, which are often warning signals.

For complex sites, a Screaming Frog or Oncrawl crawl lets you cross-reference robots.txt directives, meta robots tags, canonicals, and sitemaps. You detect inconsistencies before Google finds them.
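For a quick spot check without a dedicated crawler, the meta robots and canonical signals can be read from a page's HTML with Python's standard `html.parser`. This is a minimal sketch: it only looks at `<meta name="robots">` and `<link rel="canonical">`, and ignores bot-specific meta tags, the `none` value, and the `X-Robots-Tag` HTTP header, all of which a real audit would also need to cover. The class and function names are illustrative.

```python
from html.parser import HTMLParser
from typing import Dict, List, Optional


class DirectiveParser(HTMLParser):
    """Collect meta robots tokens and the canonical URL from an HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.robots: List[str] = []
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            # Directives are comma-separated, e.g. "noindex, follow".
            self.robots += [t.strip().lower() for t in content.split(",") if t.strip()]
        elif tag == "link" and "canonical" in (attr.get("rel") or "").lower().split():
            self.canonical = attr.get("href")


def extract_directives(html: str) -> Dict[str, object]:
    """Return whether the page declares noindex and which canonical it names."""
    parser = DirectiveParser()
    parser.feed(html)
    return {"noindex": "noindex" in parser.robots, "canonical": parser.canonical}


if __name__ == "__main__":
    sample = (
        '<html><head><meta name="robots" content="noindex, follow">'
        '<link rel="canonical" href="https://example.com/a"></head></html>'
    )
    print(extract_directives(sample))
```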

- Audit current index with site: and Search Console

- Document a clear indexation policy by content type

- Verify that robots.txt doesn't block CSS/JS needed for rendering

- Avoid contradictory directives (robots.txt + noindex on same page)

- Use the URL inspection tool to validate actual behavior

- Cross-check data with regular technical crawls

- Remove from sitemap any URL you don't want indexed

- Signal paid content with schema.org Paywall if applicable

❓ Frequently Asked Questions

If I block a page in robots.txt, can it still appear in Google's index?

What is the difference between blocking crawling and blocking indexation?

How can you signal to Google that content is reserved for subscribers without risking a cloaking penalty?

Do AI bots follow the same robots.txt rules as Googlebot?

How can you quickly remove a page from Google's index if it was indexed by mistake?

🎥 From the same video 5

Other SEO insights extracted from this same Google Search Central video · published on 19/05/2022
