Official statement

Other statements from this video 2 ▾

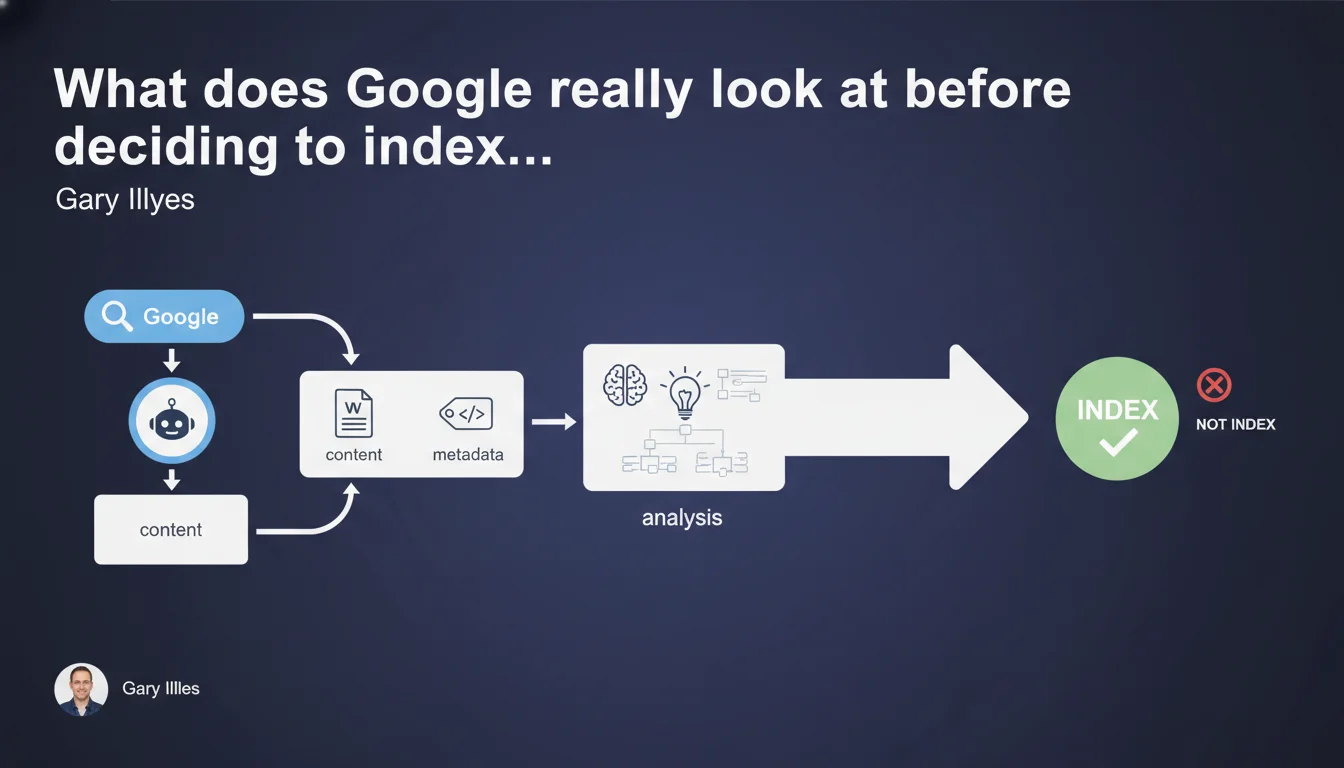

Google must process and analyze both the content AND metadata of a web page before making any indexing decision. No analysis = no indexing possible. This preliminary processing step directly determines the visibility of all your pages in search results.

What you need to understand

Why is this analysis step an absolute prerequisite?

Google cannot index what it doesn't understand. The analysis of content and metadata is the preliminary phase that allows the search engine to determine what your page is about, assess its quality, and decide if it deserves to be added to the index.

This statement from Gary Illyes reinforces a fundamental principle: indexing is never automatic. It results from an algorithmic decision based on this analysis phase. Without prior processing, your page remains invisible in the SERPs — regardless of its quality.

What does "process and analyze" actually mean in practice?

Processing encompasses several technical operations: HTML parsing, extraction of visible text, semantic content analysis, language detection, evaluation of quality signals. Google literally dissects your page to extract meaning from it.

Metadata analysis covers title tags, meta descriptions, structured data, canonical tags, hreflang, robots directives... All these elements are scrutinized before indexing. They directly influence the decision to index or not.

What's the difference between crawling, processing, and indexing?

Crawling is discovery — Googlebot accesses your URL. Processing/analysis is understanding — Google extracts and interprets your content. Indexing is the final decision — your page either joins (or doesn't join) the searchable index.

These three phases are sequential and each can be a bottleneck. A crawled page is not necessarily processed; a processed page is not necessarily indexed.

- Crawling alone guarantees nothing — Google must be able to analyze your content

- Metadata matters as much as content in this analysis phase

- Indexing is conditional — it depends on the results of this prior analysis

- A page blocked at the processing level (poorly managed JavaScript, inaccessible content) will never be indexed

- Google doesn't just "read" your page — it evaluates and judges it before indexing it

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Yes, and it's even a welcome reminder. We regularly observe websites with pages that are crawled but never indexed — often because the content is not accessible or understandable to Googlebot. Poorly implemented JavaScript, content loaded dynamically without server-side rendering, resources blocked by robots.txt: these are all cases where crawling occurs but analysis fails.

The precision "content AND metadata" is important. Google doesn't rely on visible text alone — it cross-references multiple signals to make decisions. A title that aligns with your H1, valid structured data, a properly placed canonical tag: all of this facilitates analysis and improves your indexing chances.

What nuances should we add to this statement?

Gary Illyes remains quite general — as he often does. He doesn't specify which criteria determine whether a page passes or fails this analysis step. Is it a matter of content quality? Duplicate content? Crawl budget? [To be verified] — Google never reveals the exact thresholds.

Another point: this statement says nothing about timing. How much time passes between crawling and complete analysis? Between analysis and the indexing decision? On massive sites or with limited crawl budget, this delay can be significant — and some pages can remain in a "waiting queue" indefinitely.

In what cases does this rule not really apply?

Let's be honest: there are edge cases. Very low-quality pages, obvious spam, content duplicated word-for-word — Google can decide not to index without thorough analysis. The filter can intervene early in the pipeline, before even a complete processing.

Pages submitted via Search Console (URL inspection) sometimes benefit from prioritized processing — but even then, no guarantee of indexation. Google can analyze and refuse anyway.

Practical impact and recommendations

What should you do concretely to facilitate this analysis?

Make your content accessible without friction. Google must be able to parse your HTML easily, access visible text, and load critical resources. If your site relies heavily on JavaScript, ensure server-side rendering or static pre-generation works — test with the Rich Results testing tool or Search Console.

Optimize your metadata as if it were being read by a busy human. Unique and descriptive title, relevant meta description, clear canonical tags, valid structured data. Coherence between content and metadata — Google dislikes contradictory signals.

What mistakes should you absolutely avoid?

Never block necessary rendering resources in robots.txt — critical CSS, JavaScript, images essential to understanding. Google needs to see your page the way a user sees it to analyze it properly.

Avoid content that's inaccessible without user interaction: accordions closed by default containing main text, hidden tabs, lazy loading poorly implemented on strategic content. If Google has to "click" to see your content, it probably won't.

- Verify that Googlebot can access the complete HTML (Search Console URL inspection tool)

- Test JavaScript rendering with the Rich Results testing tool

- Audit metadata: title, description, canonical, hreflang, robots on each page type

- Validate structured data with the Schema.org validator

- Check that robots.txt doesn't block critical resources

- Identify crawled but non-indexed pages in Search Console and analyze why

- Measure the time between crawl and indexation to detect processing issues

- Eliminate duplicate or very low-quality content that slows down overall site analysis

How can you verify that your pages are being properly processed and analyzed?

Search Console remains your best ally. Monitor the coverage reports and page status (crawled but not indexed, discovered but not crawled...). These statuses often reveal problems in the analysis phase.

Use the URL inspection tool to see exactly what Google retrieves — raw HTML, rendered HTML, loaded resources. Compare it with what you see in your browser. Any discrepancy is a red flag.

❓ Frequently Asked Questions

Une page crawlée est-elle forcément indexée ?

Quelles métadonnées Google analyse-t-il avant l'indexation ?

Comment savoir si Google a correctement analysé ma page ?

Pourquoi certaines pages restent crawlées mais jamais indexées ?

Le JavaScript bloque-t-il cette phase d'analyse ?

🎥 From the same video 2

Other SEO insights extracted from this same Google Search Central video · published on 04/04/2024

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.