Official statement

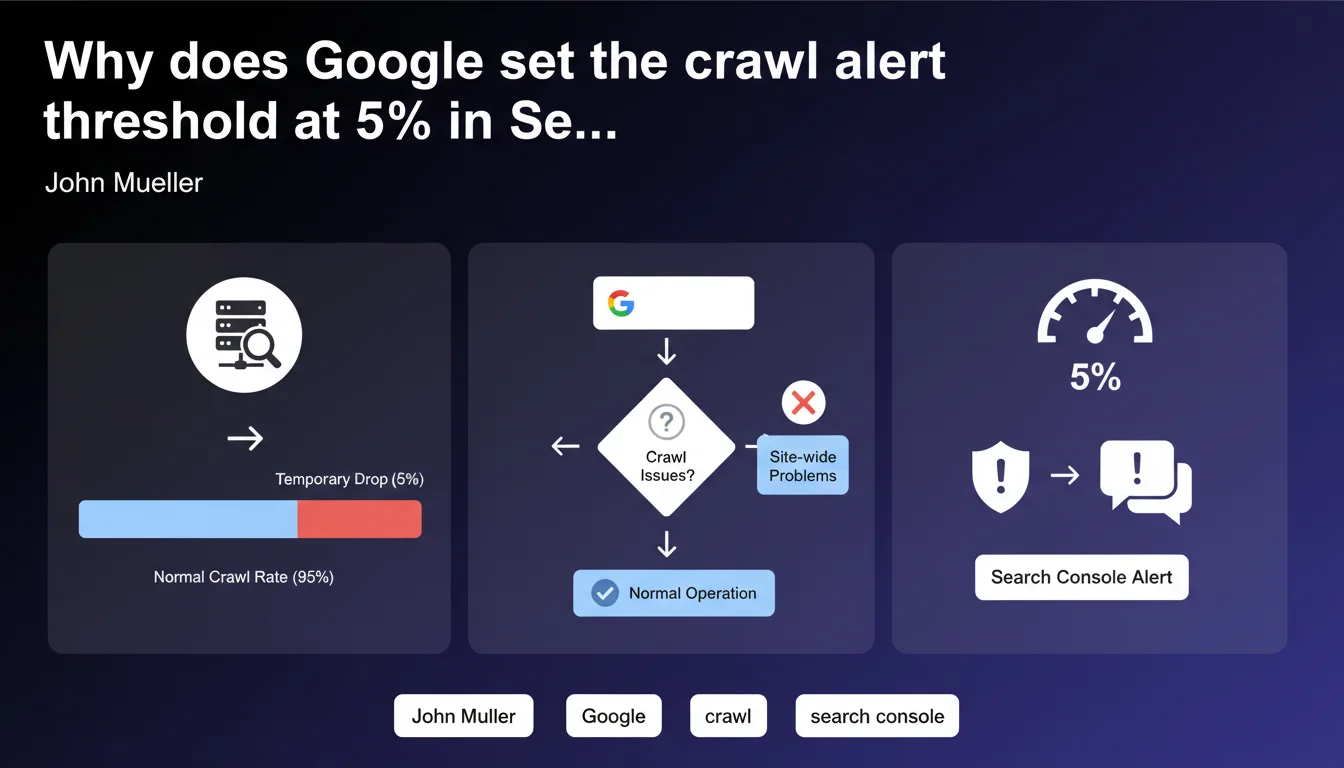

Google has set 5% as the threshold at which Search Console flags a measurable site-wide crawl issue. The threshold is designed to detect temporary server incidents without generating false alerts. According to Google, the recommendations will continue to evolve.

What you need to understand

What does this 5% threshold actually mean in practice?

When Google detects that at least 5% of your URLs encounter the same crawl issue, Search Console triggers a recommendation. Below this threshold, the system treats it as isolated anomalies that don't warrant an alert.

This percentage applies to measurable site-wide issues such as server errors, timeouts, and robots.txt problems. It does not cover isolated 404 errors or scattered soft 404s.
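As a rough illustration of the rule described above, here is a minimal sketch that checks which issue types affect at least 5% of a set of crawled URLs. The function name, the input shape, and the issue labels are hypothetical; Google has not published how Search Console computes this internally.

```python
from collections import Counter

THRESHOLD = 0.05  # the 5% figure cited in the statement


def issues_over_threshold(url_issues, threshold=THRESHOLD):
    """Return {issue: share} for issues affecting at least `threshold` of URLs.

    `url_issues` maps each crawled URL to an issue label, or to None when the
    crawl succeeded. The labels are illustrative, not Search Console values.
    """
    total = len(url_issues)
    if total == 0:
        return {}
    # Count how many URLs share each issue, ignoring successful crawls.
    counts = Counter(issue for issue in url_issues.values() if issue is not None)
    return {
        issue: count / total
        for issue, count in counts.items()
        if count / total >= threshold
    }


# 100 URLs: 6 server errors (6%) and 2 not-found responses (2%).
sample = {f"/p/{i}": None for i in range(100)}
for i in range(6):
    sample[f"/p/{i}"] = "server_error"
for i in range(6, 8):
    sample[f"/p/{i}"] = "not_found"

flagged = issues_over_threshold(sample)
```

In this sample only the server errors cross the line; the two not-found responses stay below it and would count as isolated anomalies.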

Why did Google choose exactly 5%?

The 5% threshold filters out background noise: sporadic errors that affect a few pages without revealing a structural problem. At the same time, a server that struggles for just 10 minutes can easily push crawl failures to 5%, so short incidents still get caught.

Google is balancing two risks: alerting too early (and overwhelming webmasters with false positives) versus alerting too late (and missing critical incidents). The 5% figure is the current balance point.

Is this threshold permanent?

No. John Mueller of Google clarifies that the recommendations will continue to be refined. This 5% is not set in stone: it is a parameter Google will adjust based on feedback and usage data.

We can expect this threshold to evolve, potentially becoming dynamic depending on the type of issue or site size. Google tests, measures, iterates.

- The 5% threshold applies to recurring crawl issues, not isolated incidents

- It primarily targets temporary server errors detectable at site scale

- This parameter will evolve — Google will adjust it based on field feedback

- Below 5%, Search Console does not generate automatic recommendations

SEO Expert opinion

Is this 5% threshold suitable for all sites?

Let's be honest: a single threshold for all sites is overly simplistic. On a 100-page site, 5% = 5 pages. On a 500,000-page site, 5% = 25,000 pages. The impact is not comparable.

For a large e-commerce site, 5% server errors could represent thousands of product pages inaccessible for several hours. The alert arrives when the damage is already done. Conversely, on a small corporate site, 5% might flag a minor incident with no real consequence.

[To verify] Google doesn't specify whether this threshold adapts to volume or site type. Nothing indicates that a 10,000-page site gets a lower threshold than a 100-page site.

Can we rely solely on this alert?

No. This threshold covers measurable crawl issues — essentially server errors. But what about crawl quality problems that never reach 5%?

Imagine 3% of your strategic pages (bestsellers, premium landing pages) become inaccessible due to poor URL parameter handling. No alert. Yet the SEO and business impact could be severe.
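To make this scenario concrete, the sketch below computes an error rate per site section rather than for the whole site. The helper names and the idea of segmenting by first path component are assumptions chosen for illustration; any segmentation that isolates your strategic pages would work.

```python
def first_path_segment(url_path):
    """Return the first path component, e.g. '/bestsellers/x' -> 'bestsellers'."""
    parts = url_path.strip("/").split("/")
    return parts[0] or "(root)"


def error_rate_by_segment(results, segment_of=first_path_segment):
    """Return {segment: share of failed fetches}.

    `results` is an iterable of (url_path, ok) pairs, where `ok` is True when
    the page was fetched successfully.
    """
    totals, failures = {}, {}
    for url_path, ok in results:
        segment = segment_of(url_path)
        totals[segment] = totals.get(segment, 0) + 1
        if not ok:
            failures[segment] = failures.get(segment, 0) + 1
    return {seg: failures.get(seg, 0) / totals[seg] for seg in totals}


# 100 pages: the 3 bestseller pages all fail, the 97 blog pages all succeed.
crawl = [(f"/bestsellers/{i}", False) for i in range(3)]
crawl += [(f"/blog/{i}", True) for i in range(97)]

site_wide = sum(1 for _, ok in crawl if not ok) / len(crawl)
per_segment = error_rate_by_segment(crawl)
```

Here the site-wide failure rate is 3%, below the 5% threshold, while the bestsellers section is failing on every page.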

SEO monitoring cannot be limited to Search Console alerts. You must cross-reference them with your server logs, internal crawl tools, and indexation KPIs. Search Console provides a useful view, but only a partial one.

Is this transparency from Google good news?

Yes and no. On one hand, knowing the threshold lets you anticipate — you know that below 5%, you'll need to detect issues through other means. On the other, this transparency can create false security.

Some will think: "As long as I don't get an alert, everything is fine." And that's wrong. A site can lose visibility without ever triggering a 5% alert, for example through duplicate content issues, rampant soft 404s, or a crawl budget drop on certain sections.

Practical impact and recommendations

What should you monitor beyond these alerts?

Even if you stay below the 5% threshold, certain signals should trigger manual review. Monitor your server logs to catch spikes in 5xx errors, even brief ones.

Cross-reference with your crawl budget data: if Googlebot reduces its crawl frequency without a Search Console alert, it is encountering friction. Also check the trend in your indexed page count, since a gradual decline can reveal a structural issue that never triggers a 5% alert.
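The log review suggested in this section could be sketched as follows. This assumes access logs in the common "combined" format and identifies Googlebot by a simple user-agent substring match, which can be spoofed; a stricter setup would also verify the requesting IP. The function name and the hourly bucketing are illustrative choices.

```python
import re
from collections import defaultdict

# Captures the day, hour, status code and user agent of a combined-format line.
LINE_RE = re.compile(
    r'\[(?P<day>[^:]+):(?P<hour>\d{2}):[^\]]+\] '
    r'"[^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_5xx_rate_by_hour(lines):
    """Return {(day, hour): share of Googlebot hits answered with a 5xx}."""
    hits = defaultdict(int)
    errors = defaultdict(int)
    for line in lines:
        match = LINE_RE.search(line)
        # Skip unparseable lines and traffic that does not claim to be Googlebot.
        if match is None or "Googlebot" not in match.group("agent"):
            continue
        key = (match.group("day"), match.group("hour"))
        hits[key] += 1
        if match.group("status").startswith("5"):
            errors[key] += 1
    return {key: errors[key] / hits[key] for key in hits}


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [14/Jan/2025:10:15:32 +0000] "GET /a HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [14/Jan/2025:10:16:02 +0000] "GET /b HTTP/1.1" 503 0 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [14/Jan/2025:10:17:45 +0000] "GET /c HTTP/1.1" 200 812 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [14/Jan/2025:10:18:00 +0000] "GET /d HTTP/1.1" 500 0 "-" "Mozilla/5.0"',
]
rates = googlebot_5xx_rate_by_hour(sample_log)
```

Bucketing by hour makes brief spikes visible that a daily average would smooth out.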

How can you detect problems before they reach 5%?

Implement proactive server response time monitoring. If your TTFB spikes on certain sections, Googlebot will encounter timeouts — but perhaps not enough to trigger the alert.

Run regular crawls with tools such as Screaming Frog, Oncrawl, or Botify to simulate Googlebot behavior. If your own crawler hits 2-3% server errors, that is an alarm signal, so don't wait for Google to confirm it at 5%.

- Review your server logs weekly to catch 5xx errors even below the 5% threshold

- Set up automatic alerts if your TTFB exceeds 1.5s on more than 2% of pages

- Run a complete site crawl at least once a month to anticipate problems

- Monitor the trend in your indexed page count in Search Console, since a progressive decline is a warning sign

- Cross-reference Search Console data with your analytics to identify strategic pages that could be impacted

- Don't rely solely on automatic alerts — they often come too late
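The TTFB rule from the checklist above (more than 2% of pages over 1.5s) can be expressed as a small check. This is a sketch that assumes you already collect one TTFB measurement per page from your own monitoring; the function names and the input shape are hypothetical.

```python
def slow_pages(ttfb_seconds, slow_after=1.5):
    """Return the sorted list of URLs whose TTFB exceeds `slow_after` seconds."""
    return sorted(url for url, ttfb in ttfb_seconds.items() if ttfb > slow_after)


def ttfb_alert(ttfb_seconds, slow_after=1.5, max_slow_share=0.02):
    """Return True when more than `max_slow_share` of pages are slow.

    `ttfb_seconds` maps each URL to its measured time to first byte, in
    seconds. The defaults mirror the 1.5s and 2% figures from the checklist.
    """
    if not ttfb_seconds:
        return False
    share = len(slow_pages(ttfb_seconds, slow_after)) / len(ttfb_seconds)
    return share > max_slow_share


# 100 pages at 0.3s, then three of them degraded to 2.0s.
measurements = {f"/p/{i}": 0.3 for i in range(100)}
measurements["/p/0"] = 2.0
measurements["/p/1"] = 2.0
two_slow = ttfb_alert(measurements)    # exactly 2% slow: not above the limit
measurements["/p/2"] = 2.0
three_slow = ttfb_alert(measurements)  # 3% slow: above the limit
```

Returning the list of slow URLs alongside the boolean makes it easier to see whether the slowdown is concentrated in one section.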

What actions should you implement right now?

Establish a baseline of your crawl performance. Note how many server errors you normally generate — this is your reference point for detecting anomalies before they reach 5%.

Document your past incidents: when did you have crawl problems, at what percentage, what was Search Console's reaction? This lets you calibrate your own internal alert threshold.
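One possible way to turn a baseline into an internal alert threshold is sketched below. The rule used here (mean plus three standard deviations of past daily error rates, capped at 5%) is an assumption made for illustration, not something Google or the video recommends; the function names are hypothetical.

```python
from statistics import mean, pstdev


def internal_threshold(daily_error_rates, k=3.0, ceiling=0.05):
    """Return an internal alert threshold derived from historical error rates.

    Uses baseline + k standard deviations (an assumed rule of thumb) and caps
    the result at `ceiling` so the alert fires no later than the 5% mark.
    """
    baseline = mean(daily_error_rates)
    spread = pstdev(daily_error_rates)
    return min(baseline + k * spread, ceiling)


def is_anomaly(today_rate, daily_error_rates, k=3.0, ceiling=0.05):
    """Return True when today's error rate exceeds the internal threshold."""
    return today_rate > internal_threshold(daily_error_rates, k, ceiling)


# Five days of normal operation: about 0.5% of crawl requests fail.
history = [0.004, 0.006, 0.005, 0.005, 0.005]
threshold = internal_threshold(history)
normal_day = is_anomaly(0.006, history)
bad_day = is_anomaly(0.02, history)
```

With this history the internal threshold sits well under 1%, so a day at 2% errors is flagged long before it would reach 5%.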

❓ Frequently Asked Questions

Does the 5% threshold apply to all types of crawl errors?

If I have 4% server errors, will Search Console not alert me?

Does this threshold vary with site size?

Will Google change this threshold in the future?

Should I rely solely on Search Console alerts to monitor my crawl?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 14/01/2025
