Official statement

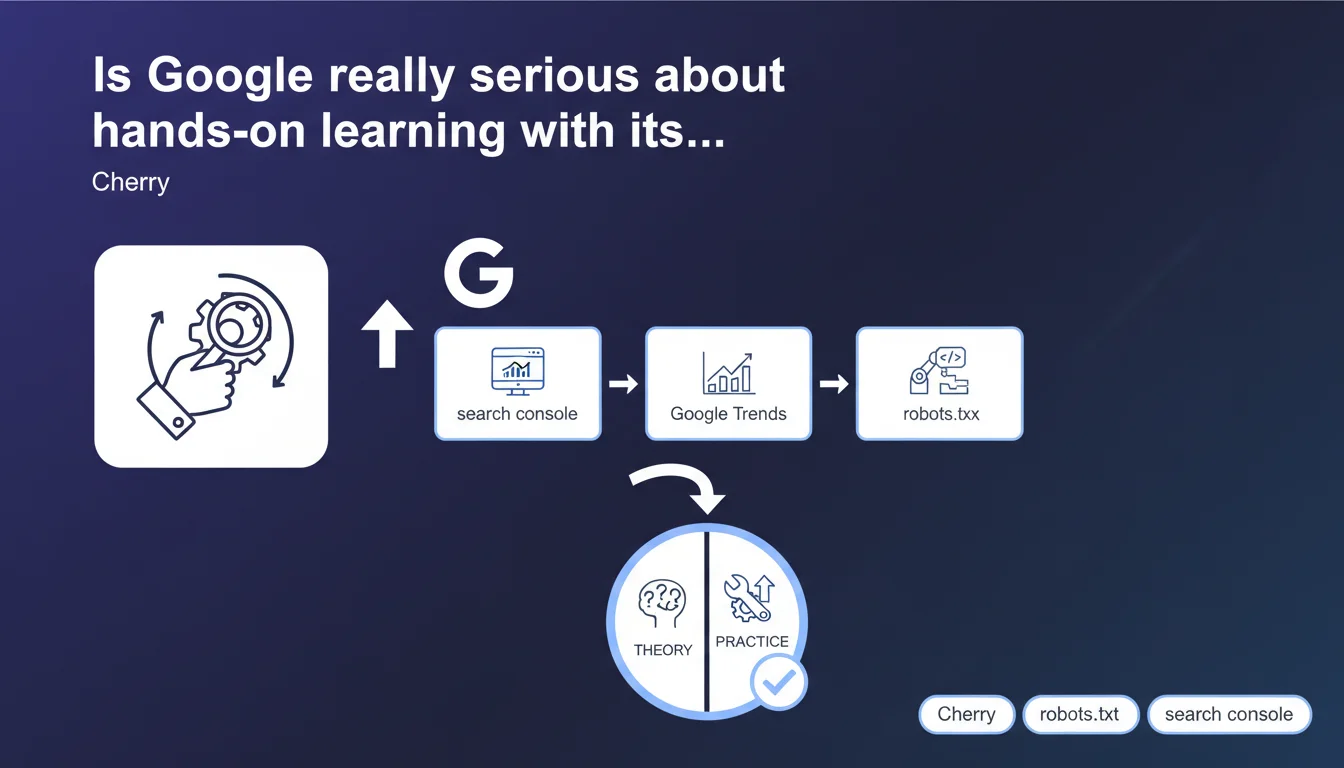

Google is launching Deep Dive workshops focused on practical application: Search Console, Google Trends, robots.txt. The stated objective is to replace theoretical presentations with hands-on sessions. This approach could finally bridge the gap between official statements and what practitioners see in the field.

What you need to understand

How do these Deep Dive workshops differ from traditional formats?

Google has multiplied its communication channels for years (blogs, videos, webinars), but the recurring criticism remains the same: too much theory, not enough concrete application. The Deep Dive workshops promise a shift: practical sessions where participants work directly in the tools (Search Console, Google Trends) and tackle technical subjects (robots.txt) in real-world situations.

The idea is straightforward: learn by doing. Instead of passively listening to a speaker repeat guidelines already available online, participants are supposed to work on actual case studies, ask questions as they go, and leave with actionable skills.

Why is Google pushing this format now?

Two likely reasons. First, the growing complexity of the Search ecosystem: between Core Web Vitals, structured data, selective indexing, and E-E-A-T signals, many site owners are lost. Theoretical content is no longer enough; they need to upskill on the tools.

Second, Google has every reason to ensure more sites use Search Console correctly and optimize their crawl via robots.txt. Fewer support tickets, fewer misconfigured sites cluttering the index. Training practitioners directly means investing in the overall quality of the crawlable web.

What topics are covered by these workshops?

Three announced focus areas:

- Search Console: likely covering performance analysis, diagnosis of indexing errors, and advanced report usage (coverage, experience, Core Web Vitals)

- Google Trends: leveraging search data to understand trends, refine semantic targeting, and detect seasonality

- robots.txt and technical topics: file configuration, crawl budget management, diagnosing unintentional blocks, and impact on indexing

SEO Expert opinion

Is this initiative consistent with what we observe in the field?

Yes and no. Google has always encouraged Search Console usage, but in practice many sites underuse the tool for lack of training. A practical workshop can fill this gap, especially if the trainers go beyond the basics.

On robots.txt, however, Google's track record is mixed. For years, official recommendations shifted: first don't block resources, then allow JavaScript, then let CSS be crawled. Practitioners had to adjust their configurations with each change. If the workshop merely repeats current guidelines without addressing field nuances (actual crawl budget, how conflicts between Allow and Disallow directives are resolved, behavior on high-volume sites), it will remain theory dressed up as practice.
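To make the Allow versus Disallow question concrete, here is a minimal sketch of the precedence rule described in Google's robots.txt documentation and in RFC 9309: the most specific (longest) matching rule wins, and on a tie the less restrictive rule (Allow) wins. The function name `is_allowed` and the sample rules are hypothetical, and the sketch deliberately ignores `*` and `$` wildcards as well as user-agent groups, so it illustrates the principle rather than reproducing Googlebot's behavior.

```python
def is_allowed(path, rules):
    """Return True if `path` may be crawled under `rules`.

    `rules` is a list of (directive, prefix) pairs, for example
    ("disallow", "/shop/"). Simplification: plain prefix matching only,
    no `*` or `$` wildcards and no user-agent groups.
    """
    best_len = -1
    allowed = True  # no matching rule means the path is crawlable
    for directive, prefix in rules:
        # An empty prefix (e.g. a bare "Disallow:") matches nothing.
        if not prefix or not path.startswith(prefix):
            continue
        is_allow = directive.lower() == "allow"
        # The longer (more specific) rule wins; on a tie, Allow wins.
        if len(prefix) > best_len or (len(prefix) == best_len and is_allow):
            best_len = len(prefix)
            allowed = is_allow
    return allowed


# Hypothetical rules for illustration only.
rules = [
    ("disallow", "/shop/"),
    ("allow", "/shop/sale/"),
]

print(is_allowed("/shop/cart", rules))        # False
print(is_allowed("/shop/sale/shoes", rules))  # True
print(is_allowed("/blog/post", rules))        # True
```

The order of the rules in the file plays no role here, which is exactly the kind of nuance that catches people who assume the first matching line wins.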

What are the likely limitations of this format?

The main risk: these workshops remain calibrated for a general audience, with simplified use cases. An experienced SEO practitioner doesn't need someone to explain how to connect a site to Search Console. They want to know how to interpret a sudden collapse in indexed pages, or why certain noindex URLs still appear in reports.

[To verify]: the real technical depth of the sessions. If the facilitators are Product Managers who don't do SEO daily, the format risks disappointing. If they're crawl engineers or internal specialists who handle edge cases, then it becomes interesting.

In what cases is this type of workshop insufficient?

For any site with complex challenges: multilingual architecture, dynamic facet management, technical migrations, site redesigns under heavy SEO pressure. These situations require custom expertise, not standardized workshops.

Similarly, if your site suffers from algorithmic penalties, chronic indexation problems, or unexplained traffic erosion, a generic workshop won't solve anything. You need a thorough technical audit, conducted by someone familiar with your industry and CMS.

Practical impact and recommendations

What should you do concretely if you participate in these workshops?

Prepare in advance. Identify the specific pain points on your site: pages not indexed despite a clean sitemap, sections accidentally blocked in robots.txt, Search Console reports you don't know how to interpret. Write down your specific questions.

During the workshop, don't just follow the exercises. Ask questions about your real cases. If the facilitator dodges or stays vague, push back, or at minimum note the topic to dig deeper with an expert later.

What mistakes should you avoid after attending a Deep Dive workshop?

Mistake #1: blindly applying a generic recommendation without adapting it to your site. Robots.txt is a powerful lever: misconfigured, it can cut entire sections off from crawling and, over time, from search results. Always test in a staging environment before deploying to production.

Mistake #2: believing a workshop of a few hours makes you an expert. Search Console and robots.txt are tools with complex ramifications. What you learn is a foundation; mastery comes from repeated practice, analyzing real cases, and making mistakes and fixing them.

- List your specific questions before the workshop

- Test any robots.txt modification in staging before production

- Check the impact in Search Console 7-14 days after any changes

- Document your configurations and changes (versioning, file comments)

- Cross-reference Search Console data with your server logs for validation

- Verify that strategic sections (products, categories, blog) are never blocked by accident
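The staging check in this list can be partly automated. The sketch below uses Python's standard `urllib.robotparser` to confirm that a handful of strategic URLs remain crawlable under a candidate robots.txt. The file content, the `staging.example.com` URLs, and the helper name `blocked_urls` are all hypothetical. One caveat: the standard-library parser may not resolve Allow/Disallow conflicts exactly the way Googlebot does, so treat this as a first-pass safety net rather than a faithful simulation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt candidate to validate before deployment.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

# Hypothetical URLs representing sections that must stay crawlable.
STRATEGIC_URLS = [
    "https://staging.example.com/products/blue-shoes",
    "https://staging.example.com/categories/shoes",
    "https://staging.example.com/blog/launch-post",
]


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs from `urls` that `robots_txt` blocks for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


blocked = blocked_urls(ROBOTS_TXT, STRATEGIC_URLS)
if blocked:
    print("Blocked strategic URLs:", blocked)
else:
    print("All strategic URLs are crawlable")
```

Running a check like this in a deployment pipeline, with the real list of strategic URLs, turns the last checklist item into something that fails loudly instead of relying on memory.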

How do you ensure your site truly benefits from these optimizations?

Set up regular monitoring: weekly Search Console reports, indexed page tracking, alerts on critical errors. Compare before/after each technical adjustment. If you don't see measurable improvement within 30 days, either the modification wasn't necessary or it was applied incorrectly.
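The before/after comparison can be as simple as a percentage change on one metric, such as indexed pages or clicks. The sketch below is one way to frame it; the 5% threshold, the function names, and the sample figures are assumptions chosen for illustration, not values recommended by Google or taken from the workshops.

```python
def relative_change(before, after):
    """Percentage change from `before` to `after`."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100


def review_change(before, after, threshold_pct=5.0):
    """Classify a before/after pair against a tolerance threshold.

    `threshold_pct` is an arbitrary assumption: changes smaller than
    this are treated as noise rather than as a measurable effect.
    """
    change = relative_change(before, after)
    if change >= threshold_pct:
        return "improved"
    if change <= -threshold_pct:
        return "regressed"
    return "no measurable change"


# Hypothetical indexed-page counts, read manually from Search Console
# before the adjustment and 30 days after it.
indexed_before = 1200
indexed_after = 1320

print(f"{relative_change(indexed_before, indexed_after):.1f}%")  # 10.0%
print(review_change(indexed_before, indexed_after))              # improved
```

A threshold matters because weekly figures fluctuate on their own; choose it from the normal variation you observe on your site rather than from this example.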

❓ Frequently Asked Questions

Are the Deep Dive workshops open to all SEO professionals?

Can a robots.txt workshop really help with complex sites?

Is Google Trends really useful for day-to-day SEO?

Do you have to master Search Console to do effective SEO?

Will these workshops replace the Google Webmaster Hangouts or the Search Central Office Hours?

Source: Google Search Central video · published on 01/05/2025
