Official statement

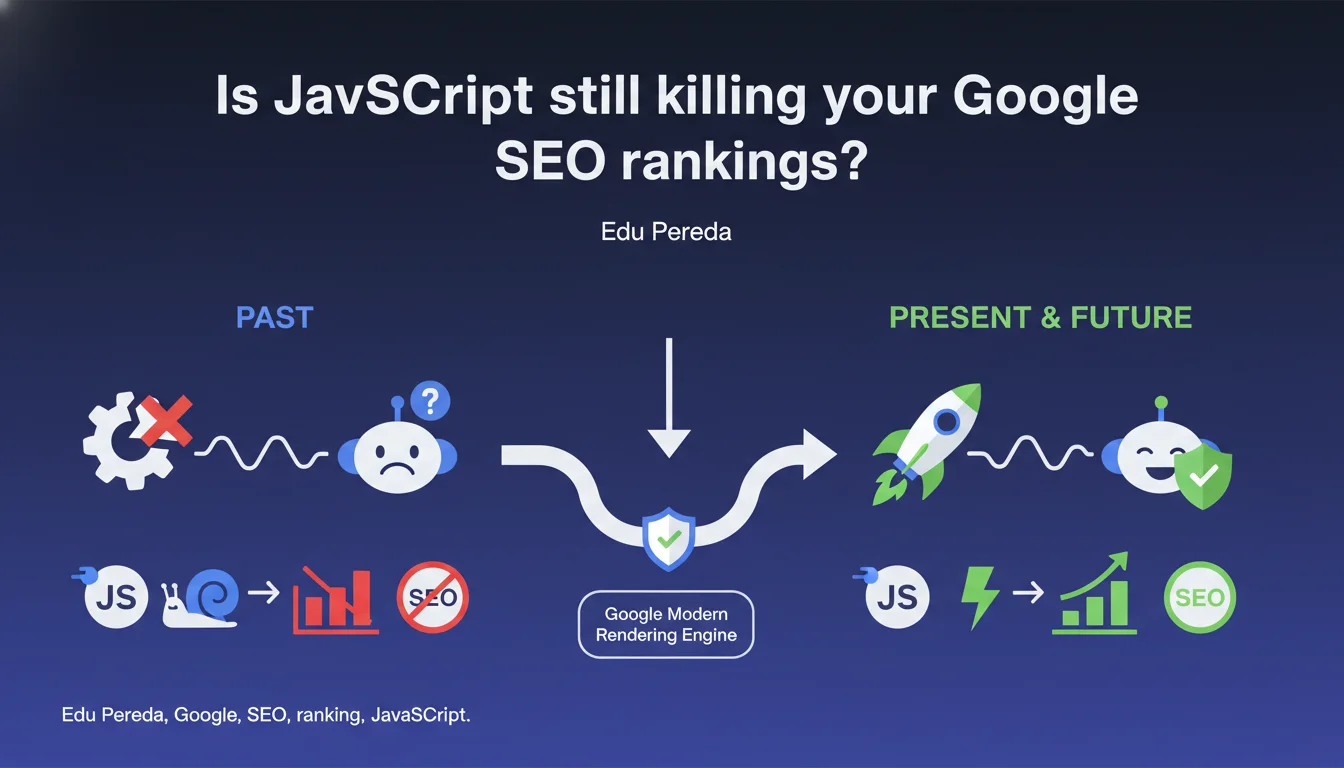

Google publicly admits that JavaScript rendering has long posed challenges for indexation. According to Edu Pereda, this period is now behind us thanks to a modern rendering engine. The promise: JavaScript should no longer hold SEO back.

What you need to understand

Why is Google finally admitting it struggled with JavaScript?

Historically, Googlebot did not render JavaScript the same way a standard browser would. The crawler downloaded raw HTML, and content generated dynamically by JS was either invisible or processed with considerable delay.

This technical limitation forced websites to implement workarounds: server-side rendering (SSR), static pre-rendering, or even maintaining two versions of the site. A constant headache for technical teams.

What has actually changed?

Google now claims to have a modern rendering engine based on Chromium. In theory, this means Googlebot processes JavaScript just like Chrome would, with near-complete compatibility for frameworks and libraries.

The process still works in two steps — raw HTML crawl, then queuing for JS rendering — but the promise is better reliability and speed. Let's be honest: we should stay cautious until we see this working consistently across all types of sites.

Does this mean you can build everything in JavaScript without risk?

No. Even with a modern engine, JavaScript rendering consumes more resources than static HTML. Crawl budget remains a critical variable, especially for large sites with thousands of pages.

Moreover, the delay between initial crawl and actual JS rendering can vary depending on site popularity and authority. For new domains, this latency can be problematic for fast indexation.

- Modern Googlebot uses Chromium, but JS rendering remains a separate process from the initial crawl

- Indexation time can be extended if content depends solely on JavaScript

- High-volume sites must still optimize their crawl budget even with better JS support

- Compatibility with modern frameworks (React, Vue, Angular) has significantly improved

SEO Expert opinion

Does this announcement match real-world observations?

Yes and no. Tests do show that Googlebot handles JavaScript better than before. Frameworks like React or Vue generally work without a hitch, and Single Page Applications (SPAs) index more reliably than they did three years ago.

But, and here's where things get tricky, rendering delays remain variable and unpredictable. On some sites, JS content appears in the index within days. On others, especially new domains or those with few backlinks, we observe delays of several weeks. These observations are hard to verify independently, because Google provides no data on average processing times.

What important nuances should we add to this claim?

First point: saying the problem is "resolved" is optimistic shorthand. JS rendering remains slower and more expensive than static HTML. It's not a neutral choice for your technical architecture.

Second nuance: in this statement, Google talks about a "modern" engine without specifying which version of Chromium is used or how frequently it is updated. Developers using recent JavaScript APIs can still run into compatibility issues; we've seen this with certain ES2020 features.

In what cases does JavaScript remain an SEO risk?

For news sites and other frequently updated content, every hour counts. If your article takes two days to render while a competitor's plain HTML piece is indexed in 20 minutes, you lose the SERP battle.

E-commerce sites with thousands of product pages must also stay vigilant. Even modest rendering delays, multiplied across a large inventory, can significantly slow down new product discovery by Google.

Finally, sites with complex navigation or aggressive lazy-loading can still cause problems. If your content requires user interactions (infinite scroll, click-to-load, etc.), Googlebot won't systematically simulate these.
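
As a rough illustration of the patterns described above, here is a minimal Python sketch that scans an HTML string for markup that typically depends on a user interaction or on script execution. The class and function names are hypothetical, and the heuristics are assumptions rather than a description of what Googlebot actually checks: links without an `href`, images that only carry a `data-src` attribute (a common lazy-loading convention), and elements with an inline `onclick` handler.

```python
from html.parser import HTMLParser


class InteractionRiskScanner(HTMLParser):
    """Flags markup that usually needs JavaScript or a user action to work.

    Heuristics only (assumptions for illustration): event listeners attached
    from script files are invisible to this scan.
    """

    def __init__(self):
        super().__init__()
        self.findings = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a":
            # A link without href gives a crawler no URL to follow.
            if not attrs.get("href"):
                self.findings.append(("link-without-href", "a"))
        elif tag == "img":
            # data-src without src: the image only exists once a script swaps it in.
            if not attrs.get("src") and attrs.get("data-src"):
                self.findings.append(("image-without-src", attrs["data-src"]))
        elif "onclick" in attrs:
            # Inline click handler, e.g. a "load more" button.
            self.findings.append(("click-handler", tag))


def scan_interaction_risks(html):
    """Return a list of (kind, detail) tuples in document order."""
    scanner = InteractionRiskScanner()
    scanner.feed(html)
    scanner.close()
    return scanner.findings
```

Running `scan_interaction_risks` on the HTML of each critical template gives a quick list of elements worth reviewing by hand. An image with a real `src` and a native `loading="lazy"` attribute is deliberately not flagged, since its URL is present in the markup.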

Practical impact and recommendations

Should you still prioritize server-side rendering?

It depends on your context. If you're launching a new site or critical section (landing pages, strategic content), SSR or static pre-rendering remain the safest options to guarantee fast and complete indexation.

For an established site with good authority and regular crawling, a well-configured SPA can work without major issues. But test — and retest regularly with Search Console and rendering tools like Screaming Frog or OnCrawl.

How do you verify that Google is rendering your JavaScript correctly?

Use the URL inspection tool in Search Console. Compare raw HTML with the rendered version: all content visible to users must appear in Googlebot's rendered version.

Set up regular monitoring with tools like Sitebulb or OnCrawl, which simulate Googlebot's behavior with and without JavaScript. Gaps between the two modes reveal at-risk content.
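
The raw-versus-rendered comparison can also be sketched in a few lines of Python. This assumes you already have both versions as strings (for example, the raw server response and the rendered HTML copied from the URL inspection tool); the function names are hypothetical, and matching text chunk by chunk is a simplification, not how any of the tools above work internally.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text chunks, skipping <script> and <style> content."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())  # normalize whitespace
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def visible_text(html):
    """Return the list of visible text chunks found in an HTML string."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return parser.chunks


def js_only_content(raw_html, rendered_html):
    """Text present after rendering but absent from the raw HTML."""
    raw = set(visible_text(raw_html))
    return [chunk for chunk in visible_text(rendered_html) if chunk not in raw]
```

Anything returned by `js_only_content` exists only once JavaScript has run, so it is the content most exposed to rendering delays.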

- Test each critical template with Search Console's URL inspection tool

- Verify that title tags, meta descriptions, and structured data are present in the rendered HTML

- Audit First Contentful Paint and interactivity time — bloated JavaScript also slows rendering for Googlebot

- Monitor server logs to spot URLs that were crawled but not rendered (a standard Googlebot hit on the page with no subsequent rendering pass)

- Set up alerts for JavaScript errors on the client side — a JS bug can block display for Google

- When possible, provide a static HTML fallback for essential content (navigation, main internal links)
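
The second item in the checklist above lends itself to a small automated check. The sketch below, in Python with hypothetical names, takes an HTML string (ideally the rendered version) and reports whether a non-empty title, a meta description, and at least one parseable JSON-LD block are present. It assumes structured data is delivered as JSON-LD; microdata and RDFa are not covered.

```python
import json
from html.parser import HTMLParser


class SeoTagAudit(HTMLParser):
    """Captures <title>, the meta description, and JSON-LD script blocks."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = ""
        self.json_ld_blocks = []
        self._in_title = False
        self._in_json_ld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
            self._buffer = []
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = (attrs.get("content") or "").strip()
        elif tag == "script" and (attrs.get("type") or "").lower() == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_title or self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "title" and self._in_title:
            self.title = "".join(self._buffer).strip()
            self._in_title = False
        elif tag == "script" and self._in_json_ld:
            self.json_ld_blocks.append("".join(self._buffer))
            self._in_json_ld = False


def audit_rendered_html(html):
    """Return a dict of booleans, one per checked element."""
    parser = SeoTagAudit()
    parser.feed(html)
    parser.close()
    valid_json_ld = 0
    for block in parser.json_ld_blocks:
        try:
            json.loads(block)
            valid_json_ld += 1
        except json.JSONDecodeError:
            pass  # malformed JSON-LD counts as missing
    return {
        "title": bool(parser.title),
        "meta_description": bool(parser.meta_description),
        "structured_data": valid_json_ld > 0,
    }
```

Running the same audit on the raw HTML and on the rendered HTML of a template shows which of these elements only appear after JavaScript execution.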

❓ Frequently Asked Questions

Does Google index all JavaScript pages the same way?

Are frameworks like Next.js or Nuxt.js handled better by Google?

Does image lazy-loading affect SEO?

Should I still use HTML snapshots for Googlebot?

How can I measure how long Google takes to render my JS pages?

Source: Google Search Central video, published on 23/03/2023.
