Official statement

Other statements from this video 2 ▾

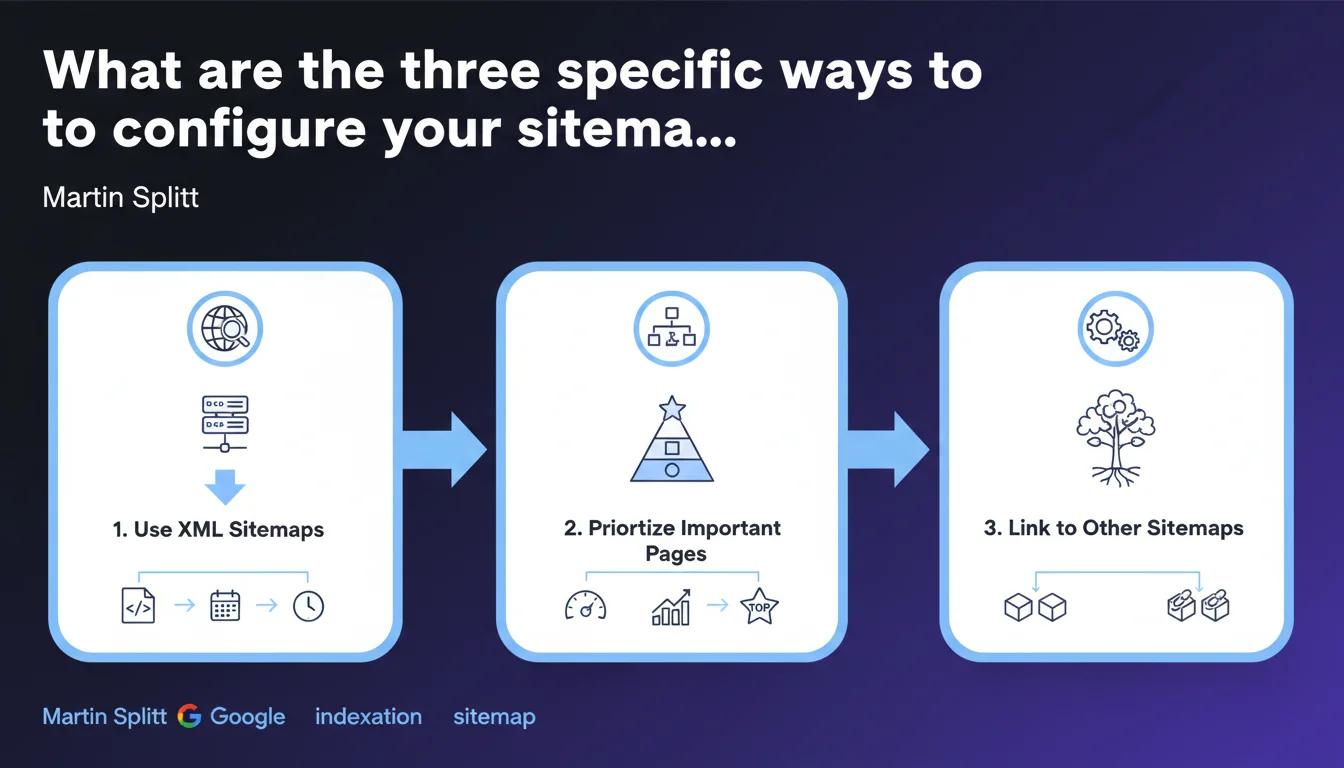

Martin Splitt shares three specific tips for optimizing sitemap configuration. Proper configuration accelerates page discovery and indexing by Googlebot. The devil is in the details: a poorly configured sitemap can do more harm than good.

What you need to understand

Why does Google still emphasize sitemaps so much?

XML sitemaps remain an important signal for Googlebot, even though the search engine is capable of discovering pages through standard crawling. They serve as a roadmap, particularly useful for large sites, deep content, or pages with weak internal links.

Splitt's statement is a reminder that not all sitemaps are created equal. A file that is poorly structured, too large, or full of problematic URLs can waste your crawl budget instead of optimizing it.

What are the three tips mentioned by Google?

Splitt mentions three specific practices without detailing them in this brief statement. We can reasonably assume these are the documented standards: limit file size, exclude non-canonical URLs, and keep modification dates current.

The problem? [To verify] Google remains vague about how much weight these signals actually carry. Field tests show results that vary with industry and site size.

Why is Google making this statement now?

Google is likely still seeing too many basic sitemap errors. SEO audits regularly turn up files in which 80% of the URLs return 404 errors, go through redirect chains, or point to pages blocked by robots.txt.

This reminder aims to refocus on the fundamentals — but it also raises the question: if three tips are enough, why are so many sites still struggling with indexation?

- Sitemaps accelerate discovery, but don't guarantee page indexation

- A sloppy configuration can hurt crawl budget more than it helps

- Google remains unclear about the exact quantitative impact of a well-configured sitemap

- The three specific tips are not detailed in this public statement

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, broadly speaking. Sites that maintain their sitemaps carefully generally see Googlebot pick up new content faster. But the effect varies enormously depending on site structure and authority.

On a small, well-linked site, the impact of an optimized sitemap remains marginal. On a large e-commerce site with 50,000 products and poor internal linking, it's another story: the sitemap becomes critical.

What nuances should we add?

First nuance: a sitemap is not an indexation directive. Google can very well crawl all the URLs in the file and index only 30% of them. The sitemap indicates what exists, not what deserves to be indexed.

Second nuance: the "three specific tips" are not revealed here. We're in murky waters. Is it about segmenting by content type? Update frequency? Relative URL priority? Impossible to say without more details. [To verify]

Third nuance — and this is where it gets tricky: some sites observe slower indexation after adding a sitemap. Why? Because they injected thousands of low-quality URLs into it, which diluted Googlebot's attention. Less is sometimes more.

In what cases does this rule not apply?

If your site has fewer than 100 pages, all well-linked and accessible within 2-3 clicks from the homepage, the sitemap remains useful but its impact will be minimal. Google will find your pages anyway.

Conversely, on a site where 90% of content is behind filters, facets, or complex URL parameters, a sitemap becomes essential — but you must then carefully think about what goes in it. Including everything by default is a recipe for disaster.
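One way to apply this on a faceted catalog is to allowlist the query parameters that produce pages you actually want indexed and drop every other parameterized URL before it reaches the sitemap. This is a minimal sketch: the allowlist containing only `page` is hypothetical, and the right list depends entirely on your own URL scheme.

```python
from urllib.parse import urlsplit, parse_qsl

# Hypothetical allowlist: the only query parameter this imaginary site
# considers worth submitting (pagination). Adjust to your own URL scheme.
ALLOWED_PARAMS = {"page"}


def is_sitemap_candidate(url):
    """Keep a URL only if every query parameter it carries is allowlisted."""
    query = urlsplit(url).query
    names = {name for name, _ in parse_qsl(query, keep_blank_values=True)}
    return names <= ALLOWED_PARAMS


urls = [
    "https://example.com/shoes/",
    "https://example.com/shoes/?page=2",
    "https://example.com/shoes/?color=red&size=42",
    "https://example.com/shoes/?sort=price",
]
selected = [u for u in urls if is_sitemap_candidate(u)]
print(selected)  # ['https://example.com/shoes/', 'https://example.com/shoes/?page=2']
```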

Practical impact and recommendations

What should you concretely do to optimize your sitemap?

First, audit the existing one. Download your current sitemap and scrutinize every URL: HTTP status, canonical tag, actual indexability. If more than 10% of URLs have issues, your file is toxic.
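The audit can be scripted once a crawler has collected, for each URL in the file, its status code, its canonical target and whether it carries a noindex directive. The sketch below assumes that data already exists (the `UrlCheck` record is hypothetical) and applies the 10% rule of thumb above; it does not fetch anything itself.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class UrlCheck:
    url: str
    status: int               # HTTP status code returned for the URL
    canonical: Optional[str]  # href of rel="canonical", if the page declares one
    noindex: bool             # True if meta robots or X-Robots-Tag says noindex


def find_issues(check: UrlCheck) -> List[str]:
    """List the reasons a URL should not be in the sitemap."""
    issues = []
    if check.status != 200:
        issues.append(f"status {check.status}")
    if check.canonical is not None and check.canonical != check.url:
        issues.append("canonical points elsewhere")
    if check.noindex:
        issues.append("noindex")
    return issues


def audit(checks: List[UrlCheck], threshold: float = 0.10) -> Tuple[Dict[str, List[str]], float, bool]:
    """Return problematic URLs, the share of the file they represent,
    and whether that share exceeds the threshold (10% by default)."""
    problems = {}
    for check in checks:
        issues = find_issues(check)
        if issues:
            problems[check.url] = issues
    rate = len(problems) / len(checks) if checks else 0.0
    return problems, rate, rate > threshold


checks = [
    UrlCheck("https://example.com/a", 200, "https://example.com/a", False),
    UrlCheck("https://example.com/b", 404, None, False),
    UrlCheck("https://example.com/c", 200, "https://example.com/a", False),
    UrlCheck("https://example.com/d", 200, None, True),
    UrlCheck("https://example.com/e", 301, None, False),
]
problems, rate, over_threshold = audit(checks)
```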

Next, segment. A large site benefits from splitting sitemaps by content type: one for articles, one for categories, one for product pages. Google can then prioritize crawling according to its own criteria, and you get better visibility in Search Console.
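Segmentation can be as simple as routing each URL to a file according to its first path segment. The path prefixes and file names below are hypothetical; many sites will need to map page templates or database tables rather than URL patterns.

```python
from collections import defaultdict
from urllib.parse import urlsplit

# Hypothetical mapping from the first path segment to a sitemap file name.
SEGMENTS = {
    "blog": "sitemap-articles.xml",
    "category": "sitemap-categories.xml",
    "product": "sitemap-products.xml",
}
FALLBACK = "sitemap-other.xml"


def segment(urls):
    """Group URLs into one list per sitemap file, preserving input order."""
    groups = defaultdict(list)
    for url in urls:
        first = urlsplit(url).path.strip("/").split("/")[0]
        groups[SEGMENTS.get(first, FALLBACK)].append(url)
    return dict(groups)


groups = segment([
    "https://example.com/blog/sitemap-tips",
    "https://example.com/product/red-sneakers",
    "https://example.com/category/shoes",
    "https://example.com/product/blue-boots",
    "https://example.com/about",
])
```

Each group then becomes its own file, listed in a sitemap index, so Search Console can report on each segment separately.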

Finally, automate updates. A static sitemap that still lists pages deleted six months ago is just noise. If your CMS or sitemap generator doesn't refresh lastmod values and the list of live URLs automatically, that is a project worth starting.
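Automation is easiest when the file is rebuilt from the list of pages that currently exist every time content changes. A minimal standard-library sketch, assuming your CMS can supply `(url, last_modified_date)` pairs for live pages only (that data source is an assumption, not something the statement specifies):

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_urlset(entries):
    """Serialize (loc, lastmod) pairs into a <urlset> document as UTF-8 bytes."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # ElementTree escapes & and < here
        # W3C Datetime format: a plain YYYY-MM-DD date is valid.
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


live_pages = [
    ("https://example.com/blog/sitemap-tips", date(2023, 11, 13)),
    ("https://example.com/product/red-sneakers", date(2023, 10, 2)),
]
xml_bytes = build_urlset(live_pages)
```

Rebuilding from scratch, rather than patching an existing file, is what guarantees that deleted pages drop out.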

What errors must you avoid at all costs?

Error number one: including URLs that are noindexed or blocked by robots.txt. Google flags these in Search Console, and they weaken the trust given to your sitemap.
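URLs disallowed by robots.txt can be filtered out before the file is written, for example with Python's `urllib.robotparser`. Note that this stdlib parser uses simple prefix matching with first-match precedence and does not implement Google's `*`/`$` wildcards or longest-match rule, so treat it as a first pass. The robots.txt content below is a made-up example. Noindex status cannot be read from robots.txt; it has to come from fetching the pages themselves.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""


def crawlable(urls, robots_txt, agent="Googlebot"):
    """Keep only the URLs that the given robots.txt allows for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if parser.can_fetch(agent, u)]


kept = crawlable(
    [
        "https://example.com/product/red-sneakers",
        "https://example.com/cart/checkout",
        "https://example.com/search?q=boots",
    ],
    ROBOTS_TXT,
)
print(kept)  # ['https://example.com/product/red-sneakers']
```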

Error number two: a single 3 MB sitemap file with 100,000 URLs in it. The official limit is 50,000 URLs (or 50 MB uncompressed) per file, but in practice, stay under 10,000 for better crawl responsiveness.
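Splitting a large list into files that respect both the official 50,000-URL cap and the 10,000-URL comfort threshold, then listing those files in a sitemap index, might look like the sketch below. The `sitemap-products-N.xml` naming is hypothetical, and the split is count-based only: it does not measure file size in bytes.

```python
from xml.sax.saxutils import escape

OFFICIAL_MAX_URLS = 50_000  # per-file limit from the sitemaps protocol
COMFORT_MAX_URLS = 10_000   # the author's recommended ceiling
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def chunk(urls, size=COMFORT_MAX_URLS):
    """Split urls into consecutive lists of at most `size` entries."""
    if not 0 < size <= OFFICIAL_MAX_URLS:
        raise ValueError("size must be between 1 and 50,000")
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def sitemap_index(sitemap_locs):
    """Build a <sitemapindex> document pointing at each child sitemap."""
    entries = "".join(
        f"<sitemap><loc>{escape(loc)}</loc></sitemap>" for loc in sitemap_locs
    )
    return (
        '<?xml version="1.0" encoding="UTF-8"?>'
        f'<sitemapindex xmlns="{SITEMAP_NS}">{entries}</sitemapindex>'
    )


products = [f"https://example.com/product/{i}" for i in range(25_000)]
parts = chunk(products)
index = sitemap_index(
    f"https://example.com/sitemap-products-{n}.xml" for n in range(1, len(parts) + 1)
)
```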

Error number three: lying about modification dates. If Google crawls a URL marked as modified yesterday and it hasn't changed in six months, it learns to ignore your signals. Sitemap credibility is earned or lost with each crawl.
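One way to keep `lastmod` honest is to store a hash of each page's content and move the date only when that hash changes. In this sketch the in-memory `store` dict stands in for whatever persistence your generator uses, and `content` is assumed to be the page's main content rather than its full HTML, since rotating widgets or timestamps in a template would otherwise change the hash on every render.

```python
import hashlib
from datetime import date


def update_lastmod(store, url, content, today):
    """Return the lastmod for `url`, bumping it to `today` only when the
    content hash differs from the one recorded last time."""
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    previous = store.get(url)
    if previous is None or previous[0] != digest:
        store[url] = (digest, today)
    return store[url][1]


store = {}
url = "https://example.com/blog/sitemap-tips"
first = update_lastmod(store, url, "<p>Version 1</p>", date(2023, 1, 10))
unchanged = update_lastmod(store, url, "<p>Version 1</p>", date(2023, 6, 1))
edited = update_lastmod(store, url, "<p>Version 2</p>", date(2023, 7, 1))
```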

How do you verify that your sitemap is compliant?

Start with Search Console: in the Sitemaps report, compare the number of URLs you submitted with the number Google reports as indexed (the Page indexing report can be filtered by sitemap). A gap of more than 20% warrants investigation.
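The 20% rule of thumb is simple arithmetic on the two counts Search Console shows. The numbers in this sketch are invented for illustration:

```python
def coverage_gap(submitted, reported):
    """Share of submitted URLs missing from the count Google reports back."""
    if submitted <= 0:
        raise ValueError("submitted must be positive")
    return (submitted - reported) / submitted


gap = coverage_gap(submitted=1_000, reported=750)
needs_investigation = gap > 0.20
print(f"{gap:.0%} gap, investigate: {needs_investigation}")  # 25% gap, investigate: True
```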

Next, validate your file against the sitemaps.org XML schema to make sure it follows the protocol. Then crawl it yourself with Screaming Frog or an equivalent tool: you'll immediately see 404 errors, redirects, or inconsistent canonical tags.
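Before handing the file to a crawler, it helps to extract its `<loc>` values and catch duplicate entries. A standard-library sketch; the sample XML is invented, and for files from untrusted sources a hardened parser such as `defusedxml` is the safer choice.

```python
import xml.etree.ElementTree as ET
from collections import Counter

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_locs(xml_bytes):
    """Return every <loc> under <url> in a urlset document."""
    root = ET.fromstring(xml_bytes)
    return [el.text.strip() for el in root.findall("sm:url/sm:loc", NS)]


def duplicates(locs):
    """URLs listed more than once, sorted for stable output."""
    return sorted(url for url, n in Counter(locs).items() if n > 1)


sample = b"""<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/a</loc></url>
  <url><loc>https://example.com/b</loc></url>
  <url><loc>https://example.com/a</loc></url>
</urlset>"""

locs = sitemap_locs(sample)
print(len(locs), duplicates(locs))  # 3 ['https://example.com/a']
```

The extracted list is what you would then feed to your crawler to check status codes and canonicals.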

- Remove all URLs with errors, redirects, or noindex from the sitemap

- Segment sitemaps by content type for large sites

- Limit each file to 10,000 URLs maximum (comfort threshold)

- Automate lastmod date updates to reflect actual changes

- Submit the sitemap in Search Console and monitor errors

- Regularly crawl your own sitemap to detect inconsistencies

❓ Frequently Asked Questions

Does a sitemap guarantee that Google will index all my pages?

What is the maximum recommended size for a sitemap file?

Should I include all my URLs in the sitemap, even the least important ones?

How often should I update my sitemap?

What happens if my sitemap contains URLs that return 404 errors or are marked noindex?

Source: Google Search Central video, published on 13/11/2023.