Official statement

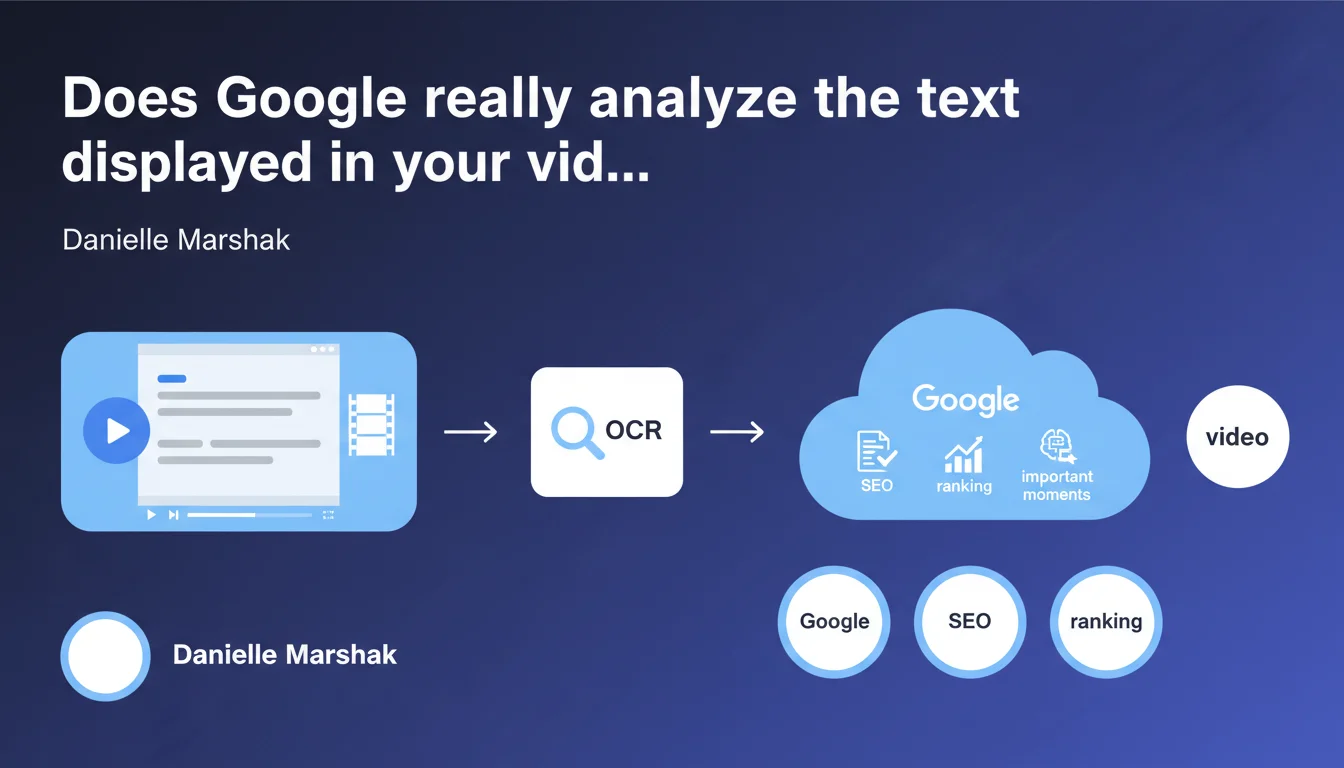

Google uses OCR to extract and index visible text in videos: titles, captions, on-screen overlays. The technology helps identify key moments and enriches Google's understanding of video content. For SEO practitioners, this means that any text displayed on screen can now feed into crawling and indexing.

What you need to understand

What is OCR and how does Google apply it to videos?

OCR (Optical Character Recognition) converts visible text in an image or video into indexable text. Google scans the frames of your videos to detect and extract displayed characters, whether animated titles, embedded captions, labeled graphics, or superimposed text.

Concretely, this extends the scope of indexing beyond classic metadata (title, description, transcript). The engine can now read what the user sees and use it to understand context and segment the video into chapters or relevant moments.
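To make the segmentation idea concrete, here is a minimal Python sketch. It is not Google's pipeline, which is not public. It assumes some OCR step has already produced (timestamp, text) pairs for sampled frames, and it simply opens a new segment each time the on-screen text changes. The function name `group_into_moments` and the sample data are hypothetical.

```python
from typing import List, Tuple


def group_into_moments(frames: List[Tuple[float, str]]) -> List[Tuple[float, str]]:
    """Collapse consecutive frames showing the same text into (start_time, text) segments."""
    moments = []
    previous = None
    for timestamp, text in frames:
        cleaned = " ".join(text.split())   # collapse runs of whitespace
        normalized = cleaned.lower()       # compare case-insensitively
        if not normalized:
            previous = None                # no text on screen: next text opens a new segment
            continue
        if normalized != previous:
            moments.append((timestamp, cleaned))
            previous = normalized
    return moments


# Hypothetical OCR output for frames sampled once per second
sample = [
    (0.0, "Introduction"),
    (1.0, "Introduction"),
    (2.0, ""),
    (3.0, "Step 1: Setup"),
    (4.0, "step 1:  setup"),
    (5.0, "Step 2: Results"),
]

moments = group_into_moments(sample)
for start, title in moments:
    print(f"{start:>5.1f}s  {title}")
```

With this sample, the sketch yields three segments starting at 0, 3, and 5 seconds. A real system would also have to tolerate OCR noise between frames, which this exact-match comparison does not.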

Why did Google develop this feature?

The objective is twofold: improve the precision of video search and enrich the Key Moments displayed in SERPs. By automatically identifying important sections of a video through visible text, Google can offer users direct entry points.

This fits into Google's broader trend of treating multimedia content as structured text content. OCR joins other technologies such as audio analysis, object recognition, and scene detection to build a holistic understanding.

What types of text are affected?

All visible text elements: section titles, hardcoded (burned-in) captions, graphics, explanatory labels, on-screen data tables, quoted text. By contrast, subtitles supplied separately in .srt or .vtt format are already indexed through a different channel; OCR targets what is embedded directly in the image.

- OCR extracts visible text from video frames, independent of manually provided metadata

- This concerns titles, hardcoded captions, graphics, text overlays

- Google uses this data to identify key moments and improve relevance in video search

- Subtitles in separate format (.srt, .vtt) remain indexed via a distinct process

- This technology adds to other signals (audio, image, context) for overall content understanding

SEO Expert opinion

Is this statement consistent with field observations?

Yes, and it is a welcome confirmation. For several years, we've observed that videos containing on-screen text perform better for specific queries than videos without visible text, even with identical transcripts. OCR explains this difference.

Tests show that Google can indeed retrieve terms briefly displayed in a video and associate them with search queries. That said, extraction accuracy varies with image quality, font, contrast, and display duration.

What nuances should be noted?

First point: Google doesn't say how this extracted text is weighted against other signals. Does a word displayed for 2 seconds carry as much weight as a word in the title or description? [To be verified]: no public data quantifies this weighting.

Second point: OCR is not infallible. Stylized fonts, low contrast, rapid animations, or complex overlays can generate errors. If your strategy relies entirely on embedded text without backup in metadata, you're taking a risk.

Third nuance, and a crucial one: Google doesn't say whether OCR applies uniformly across all video platforms (YouTube, videos hosted on-site, Facebook, Vimeo). The extraction infrastructure can vary, especially for non-YouTube videos where Google must crawl and process files itself.

In what cases does this technology not work?

Very low-resolution video, text in rapid motion, and handwritten or highly decorative fonts all pose problems. Similarly, if text is partially obscured or appears in a complex visual context (multiple overlays, busy backgrounds), extraction can fail.

Another limitation: videos encoded with exotic codecs or protected by DRM may not be processed by OCR, or only partially. Finally, non-crawlable videos (files blocked by robots.txt, slow servers, multiple redirects) will never be analyzed, OCR or not.
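The robots.txt case is the easiest of these to check yourself. The sketch below uses Python's standard `urllib.robotparser` to test whether a given rule set would let a crawler identifying as Googlebot fetch a video file. The robots.txt content and the example.com URLs are made up for illustration, and Python's parser only approximates Google's own matching rules (wildcard patterns in particular may be handled differently), so treat the result as a first-pass check.

```python
from urllib.robotparser import RobotFileParser


def can_googlebot_fetch(robots_txt: str, url: str) -> bool:
    """Return True if the given robots.txt text allows 'Googlebot' to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse in memory, no network request
    return parser.can_fetch("Googlebot", url)


# Hypothetical robots.txt that blocks one media directory
robots = """\
User-agent: *
Disallow: /media/private/
"""

public_video = "https://example.com/media/video.mp4"
blocked_video = "https://example.com/media/private/video.mp4"

print(public_video, can_googlebot_fetch(robots, public_video))
print(blocked_video, can_googlebot_fetch(robots, blocked_video))
```

The first URL is reported as fetchable and the second as blocked. A blocked file can never reach any analysis stage, OCR included.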

Practical impact and recommendations

What should you concretely do to optimize your videos?

First rule: integrate readable and relevant text into your videos. Section titles, key points, quotes, important figures — everything that visually structures your message helps Google segment and understand content. Favor sans-serif fonts, high contrast (white text on dark background or vice versa), and sufficient size.
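"High contrast" can be put into numbers. The sketch below computes the WCAG 2.x contrast ratio between a text color and its background. This is an assumption on our part: WCAG is an accessibility guideline, and nothing indicates that Google's OCR uses this formula or the 4.5:1 threshold. It is only a convenient, well-defined proxy for legibility.

```python
def relative_luminance(rgb):
    """WCAG 2.x relative luminance of an sRGB color given as (r, g, b) in 0..255."""
    def channel(value):
        c = value / 255
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (channel(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """WCAG contrast ratio, from 1.0 (identical colors) up to 21.0 (black on white)."""
    lum_a = relative_luminance(foreground)
    lum_b = relative_luminance(background)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


WCAG_AA_NORMAL_TEXT = 4.5  # accessibility threshold, used here only as a rule of thumb

white_on_black = contrast_ratio((255, 255, 255), (0, 0, 0))
grey_on_white = contrast_ratio((200, 200, 200), (255, 255, 255))

print(f"white on black: {white_on_black:.2f}")
print(f"light grey on white: {grey_on_white:.2f}",
      "(below threshold)" if grey_on_white < WCAG_AA_NORMAL_TEXT else "(ok)")
```

White on black gives the maximum ratio of 21, while light grey on white falls well below the 4.5 rule of thumb.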

Second action: align visible text with your strategic keywords. If you're targeting a specific query, have the exact terms appear on screen at relevant moments. This reinforces semantic coherence between search intent and video content.

Third point: never neglect traditional metadata. Always provide a complete transcript, subtitles in .srt or .vtt format, an optimized title, a rich description, and VideoObject markup. OCR is an additional signal, not a substitute.
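As an illustration of the VideoObject markup mentioned above, the following Python sketch builds a schema.org `VideoObject` with `Clip` entries for key moments and prints it as JSON-LD. All values (the example.com URLs, titles, date, timestamps) are placeholders, the helper name `build_video_jsonld` is hypothetical, and the `?t=` timestamp parameter is an assumption that depends on how your player handles deep links.

```python
import json


def build_video_jsonld(page_url, name, description, content_url,
                       thumbnail_url, upload_date, clips):
    """Return a schema.org VideoObject dict with one Clip per key moment.

    clips is a list of (title, start_seconds, end_seconds) tuples.
    """
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "contentUrl": content_url,
        "thumbnailUrl": thumbnail_url,
        "uploadDate": upload_date,
        "hasPart": [
            {
                "@type": "Clip",
                "name": title,
                "startOffset": start,
                "endOffset": end,
                # Assumed deep-link format; adapt to your player
                "url": f"{page_url}?t={start}",
            }
            for title, start, end in clips
        ],
    }


# Placeholder values for illustration only
markup = build_video_jsonld(
    page_url="https://example.com/videos/ocr-demo",
    name="How OCR reads on-screen text",
    description="A walkthrough of on-screen text and key moments.",
    content_url="https://example.com/media/ocr-demo.mp4",
    thumbnail_url="https://example.com/media/ocr-demo.jpg",
    upload_date="2024-01-15",
    clips=[("Introduction", 0, 45), ("Step 1: Setup", 45, 180)],
)

print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Keeping the clip titles identical to the section titles shown on screen is one way to keep the explicit markup and the embedded text consistent.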

What errors should you absolutely avoid?

Don't overload your videos with on-screen text hoping to stuff keywords. Google detects visual keyword stuffing exactly as it detects it in HTML. Embedded text must provide value to the user, not manipulate the algorithm.

Also avoid decorative fonts, text that stays on screen for less than 2 seconds, low contrast, and stacked overlays that make text unreadable. If a human struggles to read it, OCR will fail too.
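If your editing tool can export overlay timings, the 2-second rule of thumb is easy to check automatically. The sketch below assumes a hypothetical list of (text, start, end) tuples in seconds. The threshold is the figure quoted in this article, not an official Google number.

```python
MIN_DISPLAY_SECONDS = 2.0  # rule of thumb from this article, not an official figure


def too_brief(overlays, minimum=MIN_DISPLAY_SECONDS):
    """Return the overlay texts displayed for less than `minimum` seconds."""
    return [text for text, start, end in overlays if end - start < minimum]


# Hypothetical overlay timings exported from an editing timeline
overlays = [
    ("Introduction", 0.0, 4.0),
    ("Flash sale", 10.0, 11.2),
    ("Summary", 30.0, 32.0),
]

flagged = too_brief(overlays)
print("Displayed too briefly:", flagged)
```

Here only the "Flash sale" overlay, visible for 1.2 seconds, is flagged.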

A last common error: hosting videos on platforms or CDNs not optimized for crawling. If Google cannot access the video file quickly and reliably, OCR will never run, regardless of your production efforts.

How do you verify that your videos are properly optimized?

Manually test extraction with public OCR tools (Tesseract, Google Cloud Vision API) on some representative frames. If these tools struggle to read your text, so will Google.
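A manual test of this kind could look like the sketch below. It assumes the third-party packages `opencv-python` and `pytesseract` plus a local Tesseract install. They are imported inside the function so that the comparison helper can be used without them. The helper names and the simulated OCR string are hypothetical, and Tesseract's output is only a rough stand-in for whatever Google extracts.

```python
def extract_text_at(video_path: str, seconds: float) -> str:
    """Grab the frame at `seconds` and run Tesseract on it.

    Requires opencv-python, pytesseract, and the tesseract binary.
    """
    import cv2
    import pytesseract

    capture = cv2.VideoCapture(video_path)
    try:
        capture.set(cv2.CAP_PROP_POS_MSEC, seconds * 1000)  # seek by timestamp
        ok, frame = capture.read()
    finally:
        capture.release()
    if not ok:
        raise ValueError(f"could not read a frame at {seconds}s from {video_path}")
    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)  # grayscale often helps OCR
    return pytesseract.image_to_string(gray)


def missing_terms(expected_terms, extracted_text):
    """Return the expected terms that do not appear in the OCR output."""
    haystack = " ".join(extracted_text.lower().split())
    return [term for term in expected_terms if term.lower() not in haystack]


# Simulated OCR output, so this part runs without any OCR dependency
simulated_ocr = "Step 1:  Install the\nplugin"
not_found = missing_terms(["install the plugin", "pricing"], simulated_ocr)
print("Terms not found on screen:", not_found)
```

In practice you would call `extract_text_at("your-video.mp4", 12.0)` for a few representative timestamps and pass the result to `missing_terms` along with the terms you expect to be readable at that moment.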

Also verify in Search Console that your videos are properly indexed and that Key Moments appear in search results. If they don't despite relevant embedded text, treat it as a warning sign of a crawling issue, an image quality problem, or a semantic inconsistency.

- Integrate readable and relevant text on screen (titles, key points, quotes)

- Use sans-serif fonts, high contrast, sufficient size

- Align visible text with your strategic keywords at relevant moments

- Always provide a complete transcript and subtitles in .srt/.vtt format

- Add VideoObject markup and structured metadata

- Avoid visual keyword stuffing and text displayed too briefly (< 2 s)

- Test OCR extraction on your frames with public tools

- Verify indexing and Key Moments appearance in Search Console

❓ Frequently Asked Questions

Does OCR work on all video platforms or only on YouTube?

Does text extracted by OCR carry the same SEO weight as the title or description?

How long must text stay on screen to be extracted correctly?

Are hardcoded subtitles treated differently from subtitles in .srt format?

Can you check what Google has extracted via OCR from your videos?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 10/03/2022
