Official statement

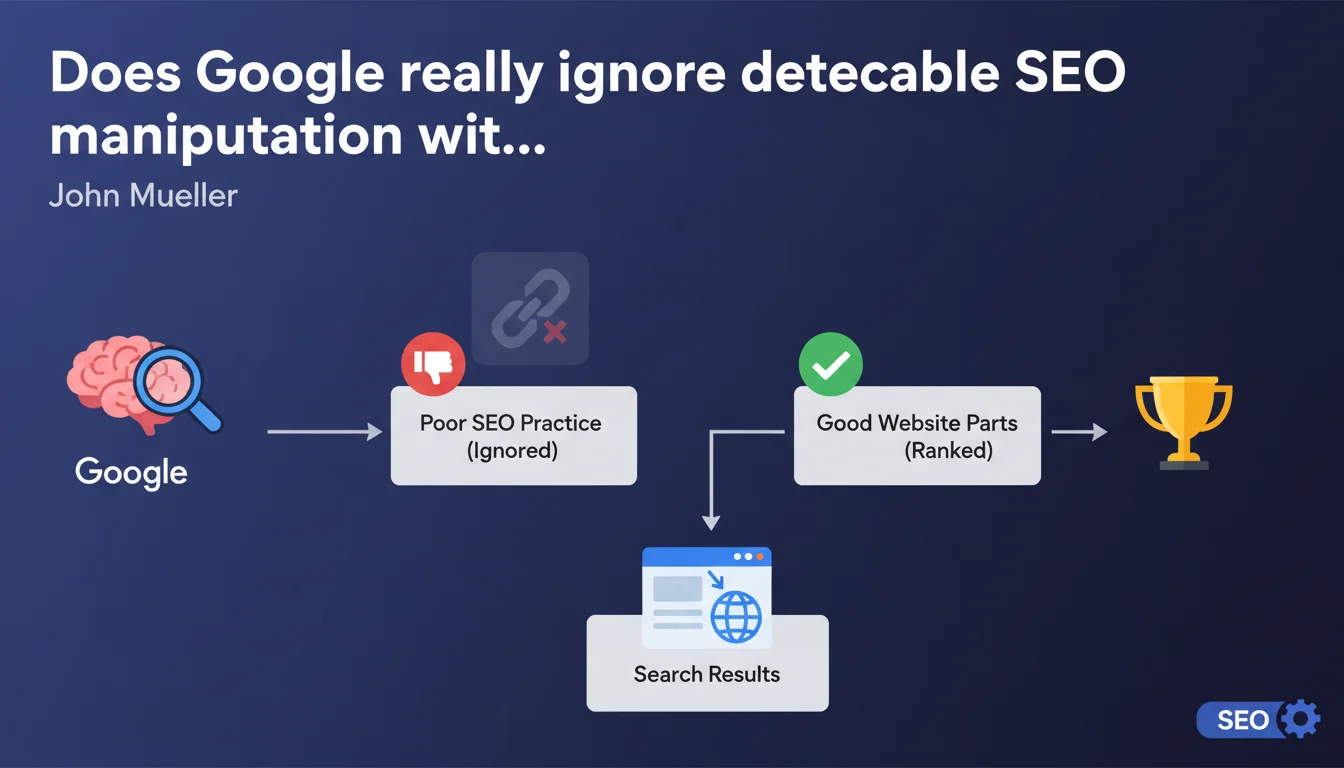

Google claims its systems automatically neutralize SEO techniques detected as manipulative, without penalizing the rest of the site. The algorithm isolates these practices to focus on legitimate content. This approach avoids manual actions while rendering manipulations ineffective.

What you need to understand

What does "ignoring" a poor practice actually mean?

When Google talks about ignoring a questionable technique, it's not about a classic penalty. The algorithm detects the manipulation (keyword stuffing, basic cloaking, easily identifiable link spam) and decides not to factor it into ranking calculations.

The site isn't penalized — it simply doesn't gain the intended advantage. It's a silent neutralization: no alert in Search Console, no sudden traffic drop, just an absence of effect.

Why take this approach instead of applying a penalty?

Manual penalties are expensive in terms of human resources and generate disputes. Automating detection and canceling out effects allows Google to handle massive volumes of cases without human intervention.

This method applies mainly to tactics already well-documented: Google knows how to recognize them with high confidence. For more sophisticated manipulations, manual action remains relevant.

What distinguishes what Google ignores from what it penalizes?

The boundary comes down to automatic detection capability and the level of harm. A crude artificial link network will probably be ignored. A well-camouflaged private blog network (PBN) or a massive AI-generated content farm could trigger a manual action if it degrades user experience at scale.

Google also distinguishes between localized practices (a few pages affected) and systemic strategies that corrupt an entire domain. In the latter case, tolerance decreases.

- Automatic ignoring concerns techniques identifiable by pattern recognition

- No Search Console notification for these cases

- The rest of the site continues to be evaluated normally

- Sophisticated or large-scale harmful tactics remain exposed to manual actions

- This approach aims for algorithmic efficiency rather than punishment

SEO Expert opinion

Does this statement match what we observe in the real world?

Yes and no. We do see that certain sites using borderline techniques suffer no visible penalty — they simply stagnate without gaining ground. Their traffic remains stable, but pages optimized with these methods never climb rankings.

Conversely, other cases show sudden drops without declared manual action, suggesting the line between "ignoring" and "algorithmic penalization" is blurry. [Needs verification]: Google never clarifies exactly when filtering becomes active devaluation.

What are the limits of this ignore-it approach?

The approach works for isolated signals: a few spam links, light keyword stuffing on a handful of pages. But what happens when the poor practice is structural? If 60% of a site's content relies on mediocre automatic generation, can Google really "focus on the good parts"?

Mueller's statement assumes there's always legitimate content to promote. For sites built entirely on manipulative tactics, this distinction makes no sense — and that's where manual penalties or devastating core updates come in.

Should we conclude you can attempt tactics risk-free?

Absolutely not. What goes undetected today may become detectable tomorrow: algorithm improvements keep expanding what Google's systems can catch automatically.

Let's be honest: betting on algorithmic invisibility means gambling that your tactic stays hidden. It's a short-term bet with residual risk of manual action if someone reports your site or you rise too quickly in competitive SERPs.

Practical impact and recommendations

What should you concretely do with this information?

First move: audit the gray areas on your site. Identify tactics you're using knowing they're questionable — over-optimized anchor text, satellite pages, light automated content. Ask yourself: if Google ignores them, why keep them?
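As one way to start that audit, the sketch below measures how concentrated a set of anchor texts is. It assumes you have already exported the anchors into a plain list; the function names, the sample anchors, and the 30% threshold are all hypothetical choices for illustration, not values Google has published.

```python
from collections import Counter


def anchor_shares(anchors):
    """Return each normalized anchor text's share of all anchors."""
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    counts = Counter(normalized)
    total = len(normalized)
    return {text: n / total for text, n in counts.most_common()}


def over_optimized(anchors, money_keywords, max_share=0.3):
    """Return the keywords whose exact-match share exceeds max_share.

    max_share is an arbitrary illustrative threshold, not a known limit.
    """
    shares = anchor_shares(anchors)
    flagged = {}
    for keyword in money_keywords:
        share = shares.get(keyword.lower(), 0.0)
        if share > max_share:
            flagged[keyword] = share
    return flagged


# Hypothetical sample data standing in for a real link export.
sample_anchors = [
    "cheap running shoes",
    "Cheap Running Shoes",
    "example.com",
    "click here",
    "cheap running shoes",
]
flagged = over_optimized(sample_anchors, ["cheap running shoes"])
```

A high exact-match share does not prove anything on its own. It only points to the anchors worth reviewing by hand.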

These practices consume resources (crawl budget, editorial budget, maintenance) without delivering benefit. Better to invest that time in truly differentiating content that doesn't depend on algorithmic tolerance.

How do you verify if practices are ignored rather than rewarded?

Analyze performance by segment. If certain pages or sections stagnate despite repeated optimization, Google is probably neutralizing your efforts. Compare conversion rates, session duration, engagement signals: they often reveal these pages deliver nothing to users.
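A minimal sketch of that segment analysis, assuming you have two lists of (URL, clicks) pairs covering a period before and a period after your optimization work. The segmentation rule (first path component), the function names, and the 5% threshold are assumptions made for this example.

```python
from collections import defaultdict
from urllib.parse import urlparse


def segment_of(url):
    """Return the first path component, e.g. '/blog/post-1' -> 'blog'."""
    path = urlparse(url).path.strip("/")
    return path.split("/")[0] if path else "(root)"


def clicks_by_segment(rows):
    """Sum clicks per segment from (url, clicks) pairs."""
    totals = defaultdict(int)
    for url, clicks in rows:
        totals[segment_of(url)] += clicks
    return dict(totals)


def stagnating_segments(before, after, threshold=0.05):
    """Return segments whose clicks moved by less than threshold (relative)."""
    flagged = []
    for segment, old in before.items():
        new = after.get(segment, 0)
        if old > 0 and abs(new - old) / old < threshold:
            flagged.append(segment)
    return sorted(flagged)


# Hypothetical figures standing in for a real performance export.
before_rows = [
    ("https://example.com/blog/a", 100),
    ("https://example.com/blog/b", 50),
    ("https://example.com/landing/x", 200),
]
after_rows = [
    ("https://example.com/blog/a", 160),
    ("https://example.com/blog/b", 70),
    ("https://example.com/landing/x", 204),
]
flat = stagnating_segments(
    clicks_by_segment(before_rows), clicks_by_segment(after_rows)
)
```

With this sample data, only the `landing` segment is flagged as flat. A flat segment is a candidate for closer inspection, not proof of neutralization: seasonality or weak demand can produce the same pattern.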

Test by temporarily disabling certain suspect optimizations — remove link blocks, lighten anchor text, rewrite generated content. If traffic doesn't budge, you have your answer: those elements were already being ignored.
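The before/after comparison can be sketched as follows, assuming daily click counts for the affected pages over two periods of equal length. The 5% tolerance and the function names are hypothetical, and the comparison ignores seasonality and day-to-day noise, so treat the result as a rough indication only.

```python
from statistics import mean


def relative_change(before, after):
    """Relative change in mean daily clicks between two periods."""
    base = mean(before)
    if base == 0:
        raise ValueError("baseline period has no clicks")
    return (mean(after) - base) / base


def verdict(before, after, tolerance=0.05):
    """Classify the change; tolerance is an arbitrary illustrative cutoff."""
    change = relative_change(before, after)
    if abs(change) <= tolerance:
        return "no measurable effect"
    return "traffic dropped" if change < 0 else "traffic rose"


# Hypothetical daily clicks, one week before and one week after the removal.
clicks_before = [120, 118, 125, 121, 119, 122, 120]
clicks_after = [119, 121, 123, 118, 122, 120, 121]
result = verdict(clicks_before, clicks_after)
```

If the verdict is "no measurable effect" after a long enough observation window, that fits the hypothesis that the removed elements were already being ignored.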

What mistakes should you avoid based on this statement?

Don't confuse "not yet penalized" with "approved by Google." The absence of punishment isn't validation. Many sites live in a gray zone thinking that as long as it passes, it's acceptable.

Another mistake: thinking Google has a binary definition of spam. In reality, tolerance varies along a spectrum that depends on industry, competition, and domain history. What gets ignored for an established site can trigger a manual action for an aggressive new domain.

- Audit borderline tactics currently in place on your site

- Analyze performance by segment to identify potentially neutralized areas

- Test disabling certain suspect optimizations

- Prioritize investment in durable content and positive signals

- Never treat absence of penalty as tacit approval

- Document SEO decisions to reassess them after each core update

❓ Frequently Asked Questions

If Google ignores bad practices, why was my site penalized?

How can you tell whether a technique is being ignored or simply hasn't been detected yet?

Are purchased spam links ignored or penalized?

Should I clean up my link profile if Google ignores those links anyway?

Does this policy of ignoring apply to AI-generated content?

🎥 From the same video 5

Other SEO insights extracted from this same Google Search Central video · published on 01/02/2022
