Official statement

Other statements from this video 11 ▾

- □ Google transcrit-il vraiment l'audio de vos vidéos pour les ranker ?

- □ Google analyse-t-il vraiment le texte affiché dans vos vidéos pour le référencement ?

- □ Pourquoi les données structurées vidéo restent-elles indispensables malgré les progrès de l'IA de Google ?

- □ Pourquoi Google exige-t-il l'URL du fichier vidéo dans les données structurées ?

- □ Pourquoi bloquer vos fichiers vidéo pourrait nuire gravement à votre indexation ?

- □ Pourquoi le cache-busting d'URL vidéo bloque-t-il l'indexation Google ?

- □ Faut-il vraiment utiliser la vérification DNS inversée pour autoriser Googlebot ?

- □ Faut-il toujours privilégier content URL sur embed URL dans les données structurées vidéo ?

- □ Google analyse-t-il vraiment le contenu vidéo ou se fie-t-il uniquement au texte de la page ?

- □ Google indexe-t-il vraiment les vidéos courtes si elles ont une URL crawlable ?

- □ Pourquoi Google publie-t-il enfin ses adresses IP Googlebot publiquement ?

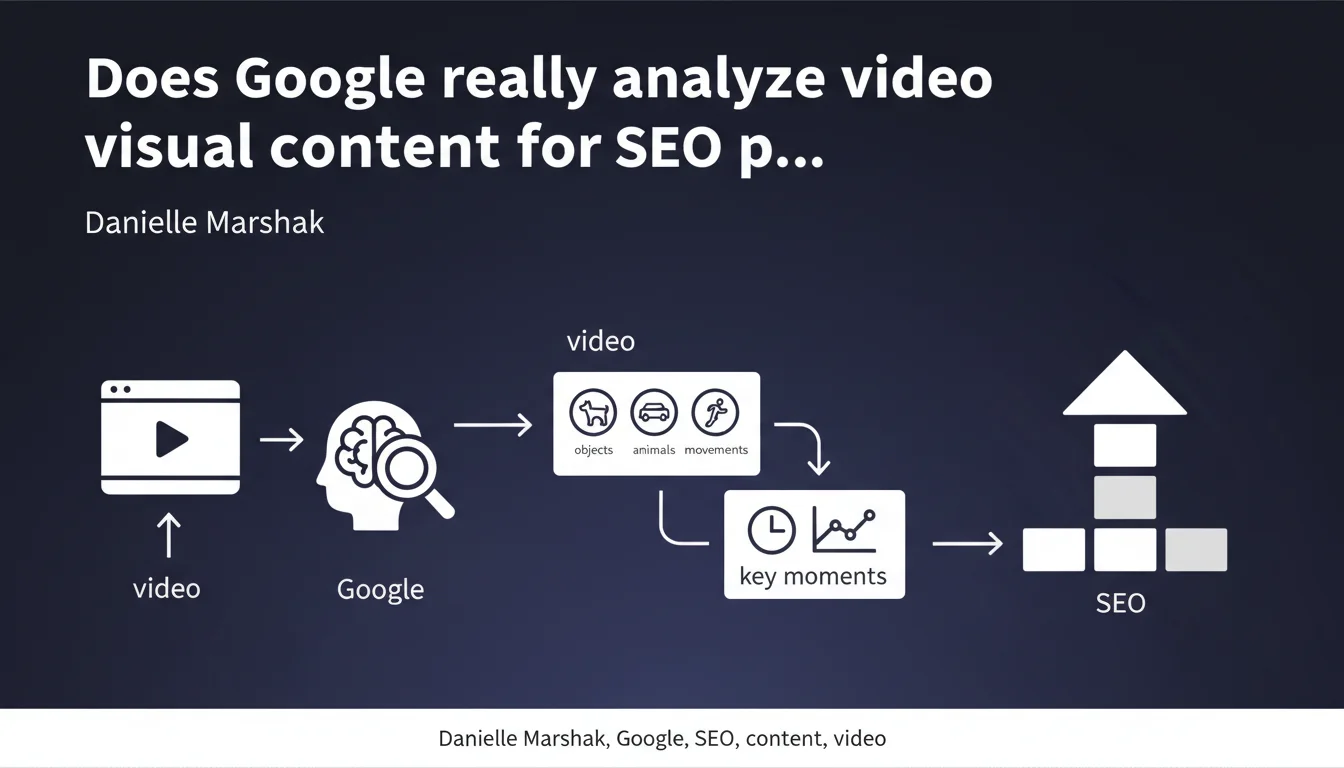

Google is deploying technologies capable of directly analyzing the visual content of videos: objects, animals, movements, key moments. This evolution reduces the relative weight of traditional textual metadata in favor of native understanding of the video stream. For SEO practitioners, this means that visual content itself becomes a ranking signal in its own right.

What you need to understand

What specific technology does Google use to analyze videos?

Google relies on computer vision models capable of detecting and classifying visual elements: objects (car, phone, tool), animals, actions (running, cooking, assembling), contexts (indoor, outdoor, professional environment). This approach builds on Google Lens and the image analysis technologies that have been deployed for several years.

What is new is that these models are applied to the video stream over time. Google no longer simply extracts isolated frames: it follows the sequence and identifies key moments, transitions, and scene changes. The analysis is dynamic rather than frame by frame.

How does this change the way Google indexes videos?

Historically, Google relied heavily on textual metadata: title, description, transcripts, captions, VideoObject schema markup. These elements remain important, but they are no longer the sole source of information.

The engine can now cross-reference this textual data with what it actually sees in the video. If your title announces "iPhone 14 repair tutorial" but the video shows an Android phone, Google is in a position to detect the mismatch. This cross-verification makes keyword stuffing less effective when the metadata does not match the actual content.
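As a toy illustration of the cross-referencing idea (not Google's actual method, which is not public), the sketch below measures how many labels detected in a video also appear in its title. The function name `metadata_label_overlap` and the `detected_labels` input are hypothetical; in practice the labels would come from whatever vision model you have access to.

```python
import re


def metadata_label_overlap(title: str, detected_labels: list[str]) -> float:
    """Return the share of detected labels that also appear as words in the title."""
    # Lowercase the title and split it into alphanumeric words.
    title_words = set(re.findall(r"[a-z0-9]+", title.lower()))
    if not detected_labels:
        return 0.0
    hits = sum(1 for label in detected_labels if label.lower() in title_words)
    return hits / len(detected_labels)


# Hypothetical labels for two videos sharing the same title.
title = "iPhone 14 repair tutorial"
print(metadata_label_overlap(title, ["iphone", "screwdriver"]))   # 0.5
print(metadata_label_overlap(title, ["android", "screwdriver"]))  # 0.0
```

A low score on your own videos is only a hint that title and footage may diverge; exact word matching ignores synonyms and multi-word labels.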

What are key moments and why does Google prioritize them?

Key moments are the video segments where the main information is concentrated: a demonstration of a specific step, a product appearance, a concept explanation. Google seeks to automatically divide long videos into semantically coherent chapters.

The objective is twofold: improve user experience by enabling direct access to the relevant passage, and display ultra-targeted video featured snippets in the SERPs. For SEO, this means your video's narrative structure becomes a quality signal.
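To make the idea of automatic chaptering concrete, here is a deliberately simplified sketch. It assumes each sampled frame has already been reduced to a set of labels (a hypothetical input) and starts a new segment whenever consecutive frames share too few labels. This is an illustration of the general principle only; how Google segments videos is not documented here.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard similarity between two label sets (1.0 when both are empty)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def split_into_segments(frame_labels: list[set[str]],
                        threshold: float = 0.5) -> list[tuple[int, int]]:
    """Return (start_index, end_index) pairs, end inclusive.

    A new segment starts when similarity to the previous frame
    drops below `threshold`.
    """
    if not frame_labels:
        return []
    segments = []
    start = 0
    for i in range(1, len(frame_labels)):
        if jaccard(frame_labels[i - 1], frame_labels[i]) < threshold:
            segments.append((start, i - 1))
            start = i
    segments.append((start, len(frame_labels) - 1))
    return segments


# Hypothetical labels for five sampled frames of a camping video.
frames = [
    {"tent", "bag"},
    {"tent", "bag"},
    {"tent", "bag", "pole"},
    {"campfire"},
    {"campfire", "pot"},
]
print(split_into_segments(frames))  # [(0, 2), (3, 4)]
```

The practical takeaway matches the advice in this article: sequences that look clearly different from one another are easier to separate than a single unchanging shot.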

- Textual metadata alone is no longer sufficient — actual visual content is analyzed

- Google detects inconsistencies between title/description and filmed content

- Narrative structure (division into key moments) influences ranking

- Computer vision models improve continuously, so what works today may be obsolete tomorrow

- Temporal video analysis enables granular indexing by segment

SEO Expert opinion

Is this statement consistent with on-the-ground observations?

Yes, and the evidence has been accumulating for months. Video featured snippets increasingly display precise timestamps that don't correspond to any manually declared chapters in YouTube or via schema.org. Google generates them itself, which suggests native stream analysis.

Additionally, we observe that videos with approximate or missing transcripts now rank on highly visual queries ("how to pitch a tent", "product X demo"), whereas they were invisible two years ago. Visual content appears to compensate for the lack of text.

One gray area remains: to what extent is this technology deployed at scale? Google doesn't specify whether visual analysis applies to all indexed videos or only a priority subset (YouTube, certain domains, certain languages). [To verify]

What limitations should we keep in mind?

First, visual analysis remains probabilistic and imperfect. Google might confuse a cat with a fox, a screwdriver with a pen, one action with another. Models improve, certainly, but they still make interpretation errors — especially on niche, technical, or culturally specific content.

Second, this technology says nothing about the quality of the information delivered. Google can identify that a video shows someone cooking chicken, but it doesn't know whether the recipe is good, the advice is relevant, or the author is credible. Visual analysis enriches context; it doesn't replace authority signals.

Third — and this is crucial — this evolution mechanically favors visually rich content over minimalist formats (static talking head, filmed PowerPoint slides). If your video is poor in visual signals, Google has less material to analyze. This creates a bias toward productions with editing, illustrations, physical demonstrations.

In which cases does this technology not apply or perform poorly?

Videos with abstract or conceptual content (complex animated graphics, data visualizations, schematic educational content) pose problems. An animated graph explaining macroeconomics contains few identifiable objects — Google will see curves, axes, text, but won't understand the meaning.

Similarly, low resolution, poor lighting, and blurry shots limit what can be analyzed. If the model can't clearly identify objects, it falls back on traditional textual metadata. Technical video quality thus becomes an indirect SEO factor.

Finally, culturally or linguistically specific content risks interpretation errors. A traditional ritual object may be misclassified, and cultural gestures may be misunderstood. Google's models are probably trained on predominantly Western corpora, so they have blind spots.

Practical impact and recommendations

What should you do concretely to optimize your videos?

First priority: ensure consistency between metadata and visual content. If your title announces "MacBook Pro M3 Test", the video should clearly show the product from the first seconds, because Google can compare the two.

Next, structure your videos with visually distinct key moments. Vary the shots, introduce identifiable objects, mark transitions. A well-structured video in logical sequences facilitates automatic analysis and improves chances of appearing in segmented featured snippets.

On the technical side, prioritize high resolution and good lighting: aim for 1080p at minimum, good contrast, and sharp focus on key elements. Computer vision models are sensitive to image quality.
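One way to audit the resolution recommendation across a video library is to read each file's dimensions with `ffprobe` and compare them to the 1080p floor. The sketch below only parses the JSON that `ffprobe` prints; the helper name `meets_min_resolution` is hypothetical, and comparing the shorter side is an assumption made here so that vertical videos are handled too.

```python
import json


def meets_min_resolution(ffprobe_json: str, min_short_side: int = 1080) -> bool:
    """Check the first video stream's shorter side against a minimum.

    Expects the output of:
      ffprobe -v error -select_streams v:0 \
              -show_entries stream=width,height -of json video.mp4
    """
    data = json.loads(ffprobe_json)
    streams = data.get("streams", [])
    if not streams:
        return False  # no video stream reported
    width = int(streams[0].get("width", 0))
    height = int(streams[0].get("height", 0))
    return min(width, height) >= min_short_side


full_hd = '{"streams": [{"width": 1920, "height": 1080}]}'
hd_ready = '{"streams": [{"width": 1280, "height": 720}]}'
print(meets_min_resolution(full_hd))   # True
print(meets_min_resolution(hd_ready))  # False
```

Resolution is only one of the three criteria above; lighting and focus still need a visual check.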

Continue implementing complete VideoObject schema markup with transcripts, captions, and manual chapters. This data remains essential: it complements visual analysis rather than being replaced by it, and Google cross-references both sources.
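As a minimal sketch of what such markup can look like, the helper below assembles a schema.org `VideoObject` with one `Clip` per manual chapter and serializes it as JSON-LD. The function name, the example.com URLs, and the `?t=` seek parameter are all assumptions for illustration; adapt them to your own pages and player, and check the result against Google's current structured data requirements.

```python
import json


def build_video_jsonld(page_url, name, description, thumbnail_url,
                       upload_date, content_url, chapters, transcript=None):
    """Build VideoObject JSON-LD with one Clip per manual chapter.

    chapters: list of (title, start_seconds, end_seconds) tuples.
    """
    video = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": thumbnail_url,
        "uploadDate": upload_date,
        "contentUrl": content_url,
        "hasPart": [
            {
                "@type": "Clip",
                "name": title,
                "startOffset": start,
                "endOffset": end,
                # Assumption: the player seeks to `t` seconds via a query parameter.
                "url": f"{page_url}?t={start}",
            }
            for title, start, end in chapters
        ],
    }
    if transcript:
        video["transcript"] = transcript
    return json.dumps(video, indent=2)


# Placeholder values; none of these URLs are real.
markup = build_video_jsonld(
    page_url="https://example.com/videos/pitch-a-tent",
    name="How to pitch a tent",
    description="Step-by-step tent setup, from unpacking to pegging.",
    thumbnail_url="https://example.com/thumbs/pitch-a-tent.jpg",
    upload_date="2022-03-10",
    content_url="https://example.com/media/pitch-a-tent.mp4",
    chapters=[("Unpacking", 0, 45), ("Assembling the poles", 45, 130)],
)
print(markup)
```

The output would be embedded in the page inside a `<script type="application/ld+json">` element.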

What mistakes should you absolutely avoid?

Don't fall into the visual clickbait trap of showing, in the thumbnail or the first seconds, an element that has nothing to do with the actual content. Google can detect the inconsistency and may penalize the video.

Avoid static videos poor in visual signals (text slides, fixed talking head without visual support). If content is inherently not visual, compensate with illustrations, animations, inserts. Give Google something to analyze.

Don't neglect transcripts and captions on the pretext that Google "sees" the content. Visual analysis has its limits, and text remains the most reliable way to convey semantic nuances, technical terms, and proper names.

How can you verify that your videos benefit from this analysis?

Unfortunately, Google provides no diagnostic tool to know if a video has been visually analyzed and with what precision. You must proceed by indirect observation.

Check if your videos appear with automatic timestamps you haven't manually declared. This is a strong indicator that Google has analyzed the stream. Also test highly visual queries related to your content: "how to do X", "product Y demo", "tutorial Z". If your videos rank without exhaustive textual metadata, visual analysis likely plays a role.
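The timestamp check can be done by hand, but a small helper makes it repeatable. The sketch below assumes you have noted, in seconds, the timestamps shown in the SERP for a video and the chapter starts you declared yourself; both lists and the function name are hypothetical inputs, since Google exposes no API for this.

```python
def undeclared_timestamps(observed: list[int], declared: list[int],
                          tolerance: int = 2) -> list[int]:
    """Return observed timestamps with no declared chapter within `tolerance` seconds."""
    return [
        t for t in observed
        if all(abs(t - d) > tolerance for d in declared)
    ]


# Timestamps seen in search results vs. chapters declared in markup (seconds).
observed = [0, 45, 130, 301]
declared = [0, 130, 300]
print(undeclared_timestamps(observed, declared))  # [45]
```

Any timestamp returned here is one you did not declare, which is the indirect indicator described above.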

Finally, monitor your impressions and CTR in Search Console for video queries. An unexplained increase on queries that your textual metadata does not target may signal that Google values your visual content.
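This comparison can be sketched with two exports of query impressions (for example, two periods downloaded from Search Console and loaded into dictionaries). The function name, the input shape, and the 1.5x growth threshold are assumptions chosen for illustration; the logic simply flags queries that grew and contain words absent from your title and description.

```python
import re


def words(text: str) -> set[str]:
    """Lowercased alphanumeric words of a text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def unexplained_gains(before: dict[str, int], after: dict[str, int],
                      metadata_text: str, min_growth: float = 1.5) -> list[str]:
    """Queries whose impressions grew by `min_growth`x and are not fully covered by metadata."""
    meta = words(metadata_text)
    flagged = []
    for query, new_impr in after.items():
        old_impr = before.get(query, 0)
        grew = new_impr >= min_growth * max(old_impr, 1)
        covered = words(query) <= meta  # every query word appears in the metadata
        if grew and not covered:
            flagged.append(query)
    return sorted(flagged)


before = {"how to pitch a tent": 100, "tent setup video": 10}
after = {"how to pitch a tent": 120, "tent setup video": 90, "hammering tent pegs": 40}
metadata = "How to pitch a tent: full video guide"
print(unexplained_gains(before, after, metadata))
# ['hammering tent pegs', 'tent setup video']
```

Flagged queries are candidates for manual review, not proof of visual analysis: seasonality, new backlinks, or changes in competition can produce the same pattern.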

- Ensure consistency between title/description/actual visual content

- Structure videos in visually distinct sequences with identifiable key moments

- Prioritize high resolution (1080p min), good lighting, sharp focus

- Implement complete VideoObject schema with transcripts and chapters

- Visually enrich content poor in objects (animations, illustrations, demonstrations)

- Avoid visual clickbait and inconsistencies between thumbnail and content

- Monitor appearance of automatic timestamps in SERPs

- Analyze Search Console impressions on highly visual video queries

❓ Frequently Asked Questions

Does Google analyze all videos or only those hosted on YouTube?

Does visual analysis replace transcripts and captions?

Can a video of poor technical quality still rank thanks to its metadata?

How do I know whether Google has correctly identified the content of my video?

Are videos with abstract or conceptual content at a disadvantage?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 10/03/2022
