Official statement

Other statements from this video (9)

- Why does a simple trailing slash trigger a full site migration, according to Google?

- Why does changing a URL make a page lose its SEO history?

- Why can rushing a URL migration ruin your rankings?

- Do you really need to redirect ALL URLs during a site migration?

- Do you really need to update ALL internal elements after a URL migration?

- Why is Google Search Console essential during a site migration?

- Does Google really treat all URLs equally during a migration?

- How long does a URL migration really take in Google's eyes?

- Do you really need to keep 301 redirects in place for at least a year?

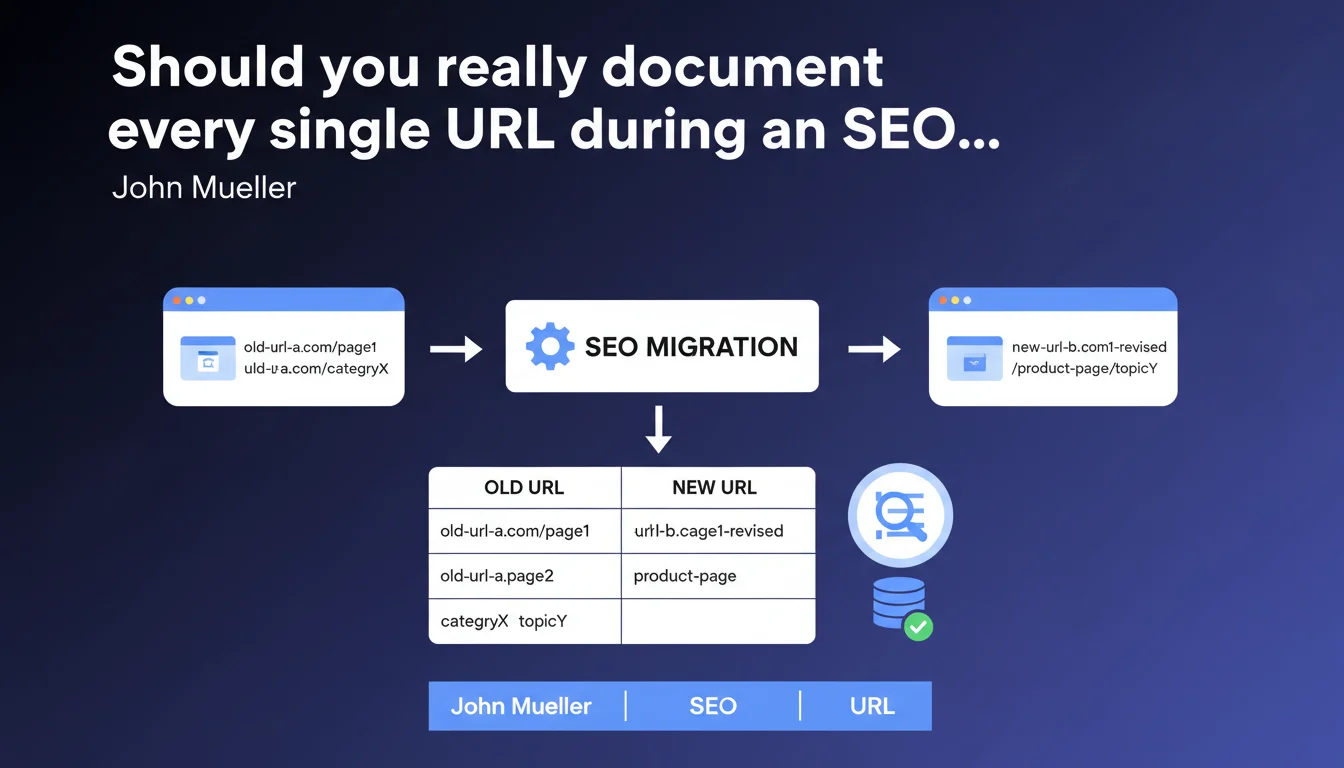

Google emphasizes the need to create an exhaustive list of old URLs and their new equivalents during a migration. This documentation lets you track changes precisely and verify that each redirect works correctly. Without this complete mapping, diagnosing post-migration traffic losses is impossible.

What you need to understand

Why does Google keep hammering this point that seems so obvious?

Because this is precisely where most migrations fail. A migration without complete mapping is flying blind. Google recrawls the site, discovers 404s, redirect chains, orphaned URLs — and you can't figure out why your traffic dropped 30%.

John Mueller's advice isn't theoretical. It comes from the observation that too many sites migrate without rigorous documentation, then spend weeks reconstructing after the fact what changed. Meanwhile, Google has already recrawled and reindexed parts of the site under its broken new URLs.

What exactly should this list contain?

At minimum: the old URL, the new URL, and the expected HTTP response (301 or 302 for redirects, 410 for pages deliberately removed), plus ideally some metadata: crawl status of the old page, organic traffic volume, number of backlinks pointing to it. This metadata lets you prioritize post-migration verification.
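
One way to keep every entry consistent is to give the mapping a fixed schema and validate it on load. The sketch below assumes a CSV export with hypothetical columns `old_url,new_url,status,organic_sessions,backlinks`; the column names and the `MappingRow`/`load_mapping` names are illustrative, not a standard format.

```python
from __future__ import annotations

import csv
import io
from dataclasses import dataclass

# 410 is not a redirect: it marks a page that is deliberately removed.
ALLOWED_STATUSES = {301, 302, 410}


@dataclass
class MappingRow:
    old_url: str
    new_url: str  # empty string when status is 410
    status: int
    organic_sessions: int = 0
    backlinks: int = 0


def load_mapping(csv_text: str) -> list[MappingRow]:
    """Parse and validate a mapping export (hypothetical column names)."""
    rows = []
    for record in csv.DictReader(io.StringIO(csv_text)):
        status = int(record["status"])
        if status not in ALLOWED_STATUSES:
            raise ValueError(f"unexpected status {status} for {record['old_url']}")
        new_url = (record.get("new_url") or "").strip()
        if status != 410 and not new_url:
            raise ValueError(f"no destination for {record['old_url']}")
        rows.append(MappingRow(
            old_url=record["old_url"].strip(),
            new_url=new_url,
            status=status,
            # Missing metric columns default to 0.
            organic_sessions=int(record.get("organic_sessions") or 0),
            backlinks=int(record.get("backlinks") or 0),
        ))
    return rows
```

Rejecting a 301 without a destination at load time catches gaps before anyone writes a single redirect rule.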

This list isn't just an Excel file to archive. It's your working tool to generate server redirect files, prepare the Search Console side of the move (for example the Change of Address tool when the domain itself changes), and audit issues after the switchover.

- Map 100% of URLs, not just the "important" pages: Google can request any URL it has already discovered

- Document the expected response code (301, 302, or 410) for each mapping entry

- Include SEO metrics (traffic, backlinks) to prioritize verification

- Use this mapping as the basis for server redirect files

- Keep this list as a post-migration diagnostic reference
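
Because the mapping is the basis for server redirect files, those files can be generated from it rather than written by hand. A minimal sketch, assuming nginx as the web server, clean paths without query strings, and `(old_path, new_url, status)` tuples as input; `to_nginx_rules` is a hypothetical helper:

```python
def to_nginx_rules(mapping):
    """Turn (old_path, new_url, status) tuples into exact-match nginx location blocks."""
    seen = set()
    lines = []
    for old_path, new_url, status in mapping:
        if old_path in seen:
            # Two entries for one source means the mapping itself is inconsistent.
            raise ValueError(f"duplicate source path: {old_path}")
        seen.add(old_path)
        if status == 410:
            lines.append(f"location = {old_path} {{ return 410; }}")
        elif status in (301, 302):
            lines.append(f"location = {old_path} {{ return {status} {new_url}; }}")
        else:
            raise ValueError(f"unsupported status {status} for {old_path}")
    return "\n".join(lines)
```

Exact-match `location =` blocks ignore the query string, so old URLs distinguished only by parameters need a different technique. Failing on duplicate sources surfaces conflicting entries before anything is deployed.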

How does this list integrate into the overall migration process?

It forms the backbone of the project. Before you even touch the code, you must know exactly which pages exist, which will be preserved, merged, or deleted. Without this mapping, you can't plan redirects, prepare tests, or measure migration success.

Concretely: crawl the old site, extract all URLs, determine their destinations, validate the logic with the client, generate redirect rules, and test in a staging environment. The list is your single source of truth at every step.
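
Part of validating the logic is making sure no destination is itself an old URL (which would create a chain) or leads back to its source (which would create a loop). A sketch, assuming the mapping is a plain dict of old path to new path; `flatten_redirects` is a hypothetical name:

```python
def flatten_redirects(mapping):
    """Point every source at its final destination; raise ValueError on loops."""
    flattened = {}
    for source, target in mapping.items():
        visited = {source}
        while target in mapping:  # the destination is itself an old URL: a chain
            if target in visited:
                raise ValueError(f"redirect loop involving {source}")
            visited.add(target)
            target = mapping[target]
        flattened[source] = target
    return flattened
```

Pointing every source straight at its final destination before generating rules avoids shipping chains in the first place.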

SEO Expert opinion

Is this recommendation sufficient to avoid catastrophes?

No. A complete list is necessary but not sufficient. The real problem is mapping quality, not just its completeness. I've seen migrations with 50,000-line Excel files — and 30% of redirects pointing to the homepage because nobody took the time to determine the right target for each orphaned URL.
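
A quick quality signal is the share of entries whose target is the homepage, the fallback described above. The sketch below computes it from a list of destination URLs; what share counts as suspicious is a judgment call, and any threshold you pick (say 5%) is an assumption, not a Google figure.

```python
from urllib.parse import urlsplit


def homepage_share(targets):
    """Fraction of redirect targets that resolve to the site root ("/" or an empty path)."""
    if not targets:
        return 0.0
    home_hits = sum(1 for target in targets if urlsplit(target).path in ("", "/"))
    return home_hits / len(targets)
```

Running it per section of the site, rather than once globally, shows where the homepage fallback was used as a shortcut.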

Mueller says "create a list," but doesn't address the tricky cases: URLs with dynamic parameters, paginated versions, filter facets, internal duplicate content. [To verify] Does Google really expect a 1:1 mapping for every URL variation, or does it tolerate logical groupings?

What are common mistakes even with a complete list?

First mistake: creating the list too late, after developers have already coded redirects "by eye." Result: your mapping documents what should have been done, not what was actually implemented. Misalignment is guaranteed.

Second mistake: not keeping this list updated during the project. The client modifies the target structure mid-project, you add pages, merge categories — and your original Excel becomes obsolete. The list must be versioned like code.
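
Versioning the list also means being able to see exactly what changed between two revisions. A sketch, assuming each revision is loaded as a dict of old URL to new URL; `diff_mappings` is hypothetical:

```python
def diff_mappings(previous, current):
    """Compare two revisions of an old URL -> new URL mapping."""
    shared = previous.keys() & current.keys()
    return {
        "added": sorted(current.keys() - previous.keys()),
        "removed": sorted(previous.keys() - current.keys()),
        # (old URL, previous target, current target) for entries whose destination moved.
        "retargeted": sorted(
            (url, previous[url], current[url]) for url in shared if previous[url] != current[url]
        ),
    }
```

Retargeted entries are the ones to re-test, since redirect rules already written may still reflect the previous destination.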

In what cases does this approach show its limits?

On very large sites (> 100,000 URLs), manual mapping becomes unmanageable. You must script the correspondence logic — which introduces the risk of systematic errors if the script is poorly designed. At this scale, validation through stratified sampling becomes essential.
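
A sketch of stratified sampling for validating a scripted mapping, assuming the first path segment (for example `/blog/` or `/shop/`) is a reasonable proxy for page template; on a real site you might stratify by template, traffic tier, or both. `stratified_sample` is a hypothetical helper:

```python
import random
from collections import defaultdict
from urllib.parse import urlsplit


def stratified_sample(urls, per_stratum=5, seed=0):
    """Group URLs by first path segment, then draw up to per_stratum URLs from each group."""
    strata = defaultdict(list)
    for url in urls:
        segments = [s for s in urlsplit(url).path.split("/") if s]
        strata[segments[0] if segments else "(root)"].append(url)
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible
    return {
        name: rng.sample(members, min(per_stratum, len(members)))
        for name, members in sorted(strata.items())
    }
```

Drawing a fixed number per stratum means small sections get checked too, instead of being drowned out by the largest template.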

Another limit: sites with client-side dynamic URL generation (SPAs, JS filters). You can't "list" all possible URLs if they don't exist server-side. You must then work on URL patterns and test by logical groups, not line by line.
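
Working on patterns can be expressed as an ordered list of rules, each covering a logical group of URLs. The two rules below are hypothetical examples (product pages moved under `/shop/`, paginated category pages grouped onto the first category page); whether such groupings suit a given site is the same 1:1 versus grouping question raised earlier.

```python
import re

# Hypothetical rules: (pattern on the old path, replacement template). Order matters.
PATTERN_RULES = [
    (re.compile(r"^/products/([a-z0-9-]+)\.html$"), r"/shop/\1/"),
    (re.compile(r"^/category/([a-z0-9-]+)/page/\d+/?$"), r"/shop/category/\1/"),
]


def map_by_pattern(old_path, rules=PATTERN_RULES):
    """Return the destination from the first matching rule, or None if no rule applies."""
    for pattern, template in rules:
        match = pattern.match(old_path)
        if match:
            return match.expand(template)
    return None
```

Rules are tried in order and the first match wins, so more specific patterns should come first. A `None` result flags a URL that needs either an explicit entry or a new rule; testing by group then means running a sample of real URLs from each rule through it.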

Practical impact and recommendations

What should you concretely do before any migration?

Crawl the entire site with Screaming Frog, Oncrawl, or your preferred tool. Export all discovered URLs, not just those in the XML sitemap. Include orphaned pages spotted through Search Console and Google Analytics.
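
Merging the sources is mostly set arithmetic once URLs are normalized. A sketch, assuming a single host (so scheme and domain are dropped) and that each source has been exported as a plain list of URLs; "orphans" here means URLs known to Search Console or Analytics that the crawler never reached. The function names are hypothetical.

```python
from urllib.parse import urlsplit


def normalize(url):
    """Reduce a URL to path + query, assuming a single host; fragments are dropped."""
    parts = urlsplit(url.strip())
    path = parts.path or "/"
    return path + (f"?{parts.query}" if parts.query else "")


def build_inventory(crawl_urls, search_console_urls, analytics_urls):
    crawled = {normalize(u) for u in crawl_urls}
    known_elsewhere = {normalize(u) for u in search_console_urls} | {normalize(u) for u in analytics_urls}
    return {
        "all": sorted(crawled | known_elsewhere),
        # Known to GSC or GA but never reached by the crawler.
        "orphans": sorted(known_elsewhere - crawled),
    }
```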

Then build your mapping by cross-referencing three sources: the technical crawl, organic traffic data (to identify high-value pages), and the backlink inventory (to spot URLs carrying link equity). This cross-referencing lets you focus your mapping effort where it matters most.
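
A sketch of the prioritization step, assuming each URL already carries its organic sessions and backlink count. The thresholds are hypothetical; the tiers decide how much verification effort each URL gets, not whether it gets a destination.

```python
def priority_tier(sessions, backlinks, session_threshold=100, backlink_threshold=5):
    """Hypothetical thresholds: tune them to the site's traffic profile."""
    if sessions >= session_threshold or backlinks >= backlink_threshold:
        return "high"
    if sessions > 0 or backlinks > 0:
        return "medium"
    return "low"


def prioritize(pages):
    """pages maps URL -> (organic_sessions, backlinks); returns URLs grouped by tier,
    highest traffic first within each tier."""
    tiers = {"high": [], "medium": [], "low": []}
    ranked = sorted(pages.items(), key=lambda item: (-item[1][0], -item[1][1], item[0]))
    for url, (sessions, backlinks) in ranked:
        tiers[priority_tier(sessions, backlinks)].append(url)
    return tiers
```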

How do you verify that the mapping is correctly implemented?

Test in a staging environment before the switchover. Load your list into a bulk redirect testing tool (browser extensions, Python scripts, or solutions like Redirect Path Checker). Verify that each old URL returns a 301 to the correct target, with no redirect chains or loops.
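
If you script the bulk test in Python, the core is following each redirect by hand so that every hop stays visible. A sketch, assuming the standard library's `http.client` for HEAD requests; `check_redirect` and `fetch_head` are hypothetical names, and `fetch` is injectable so the logic can be tested without a network.

```python
import http.client
from urllib.parse import urljoin, urlsplit

REDIRECT_CODES = {301, 302, 303, 307, 308}


def fetch_head(url):
    """Send one HEAD request without following redirects; return (status, Location)."""
    parts = urlsplit(url)
    conn_cls = http.client.HTTPSConnection if parts.scheme == "https" else http.client.HTTPConnection
    conn = conn_cls(parts.netloc, timeout=10)
    try:
        target = (parts.path or "/") + (f"?{parts.query}" if parts.query else "")
        conn.request("HEAD", target)
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


def check_redirect(old_url, expected_url, fetch=fetch_head, max_hops=5):
    """Follow redirects from old_url hop by hop; return "ok" or a description of the problem."""
    hops = []
    current, seen = old_url, {old_url}
    while len(hops) < max_hops:
        status, location = fetch(current)
        if status not in REDIRECT_CODES or not location:
            break  # final response reached
        current = urljoin(current, location)  # Location may be relative
        hops.append(status)
        if current in seen:
            return "loop"
        seen.add(current)
    else:
        return "too many hops"
    if not hops:
        return f"no redirect (status {status})"
    if hops[0] != 301:
        return f"first hop is {hops[0]}, not 301"
    if len(hops) > 1:
        return f"chain of {len(hops)} hops"
    if current != expected_url:
        return f"wrong target: {current}"
    if status != 200:
        return f"target returned {status}"
    return "ok"
```

Some servers answer HEAD differently from GET; if results look odd, switch the request method. A staging environment behind authentication would also need credentials added to the request.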

Post-migration, monitor server logs and Search Console. 404s that appear within 48 hours point to forgotten URLs. Old URLs that still show up as landing pages in Analytics signal missing redirects. Fix issues as they appear, not three weeks later when Google has already deprioritized your pages.
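
For the log side, a first pass can simply count 404s per path. A sketch, assuming the common Apache/nginx combined log format; `count_404s` is a hypothetical name, and the optional user-agent filter is a plain substring match. Since user agents can be spoofed, confirming real Googlebot traffic would need an extra check such as reverse DNS.

```python
import re
from collections import Counter

# Request line and status code of a common/combined log format entry.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD|POST|PUT|DELETE|OPTIONS|PATCH) (?P<path>\S+) HTTP/[\d.]+" (?P<status>\d{3}) '
)


def count_404s(log_lines, user_agent_filter=None):
    """Count 404 responses per requested path, optionally only for lines containing a UA substring."""
    counts = Counter()
    for line in log_lines:
        if user_agent_filter and user_agent_filter not in line:
            continue
        match = LOG_PATTERN.search(line)
        if match and match.group("status") == "404":
            counts[match.group("path")] += 1
    return counts
```

For example, `count_404s(lines, "Googlebot").most_common(20)` lists the 20 most frequently requested missing paths, a natural starting point for the day's fixes.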

- Exhaustively crawl the source site (including orphaned pages and alternative versions)

- Extract SEO metrics (traffic, backlinks, indexation status) for each URL

- Define a destination for 100% of URLs, even low-traffic pages

- Document the mapping logic for complex cases (parameters, facets)

- Test all redirects in staging before production deployment

- Monitor server logs and Search Console for 4 weeks post-migration

- Maintain a tracking file for post-migration corrections and adjustments

What resources should you mobilize to succeed at this stage?

Well-documented migration takes time and rigorous methodology. Between initial crawl, data analysis, mapping construction, testing, and post-migration monitoring, you easily invest several person-days — even for a medium-sized site.

If your team lacks bandwidth or the migration involves critical business stakes, bringing in experienced specialists can make the difference between a smooth transition and an SEO disaster. A specialized SEO agency brings not only proven methodology but also tools and experience with edge cases never documented in official guidelines.

❓ Frequently Asked Questions

Do you really need to map URLs that have never had any traffic?

Can you automate the creation of this mapping on a large site?

What is the best way to store and share this list with the technical team?

How long after the migration should you keep this list?

Does Google penalize a site if a few redirects are missing?

Other SEO insights extracted from this same Google Search Central video · published on 18/01/2022
