Official statement

Other statements from this video (14)

- 1:01 Does Googlebot crawl and render JavaScript at the same frequency?

- 4:17 Does Googlebot really execute JavaScript like a real browser?

- 4:50 Does Googlebot really ignore all content loaded after user interaction?

- 6:53 Is the rendered HTML really the only reference for Google indexing?

- 7:23 Should you still rely on the Google cache to verify JavaScript indexing?

- 7:54 Does JavaScript really affect your crawl budget?

- 9:00 Does Google really index your pages in full, or only strategic fragments?

- 12:08 Do CSS classes named 'SEO' hurt rankings?

- 20:27 Can removing links with JavaScript make your pages invisible to Google?

- 23:54 Why do live tests in Search Console give contradictory results?

- 26:00 How should you handle URL parameters to avoid indexing problems?

- 30:47 Why does Google discover your pages but refuse to index them?

- 35:39 Can the XML sitemap really trigger a targeted recrawl of your pages?

- 44:44 Why does Googlebot not see links revealed after a user click?

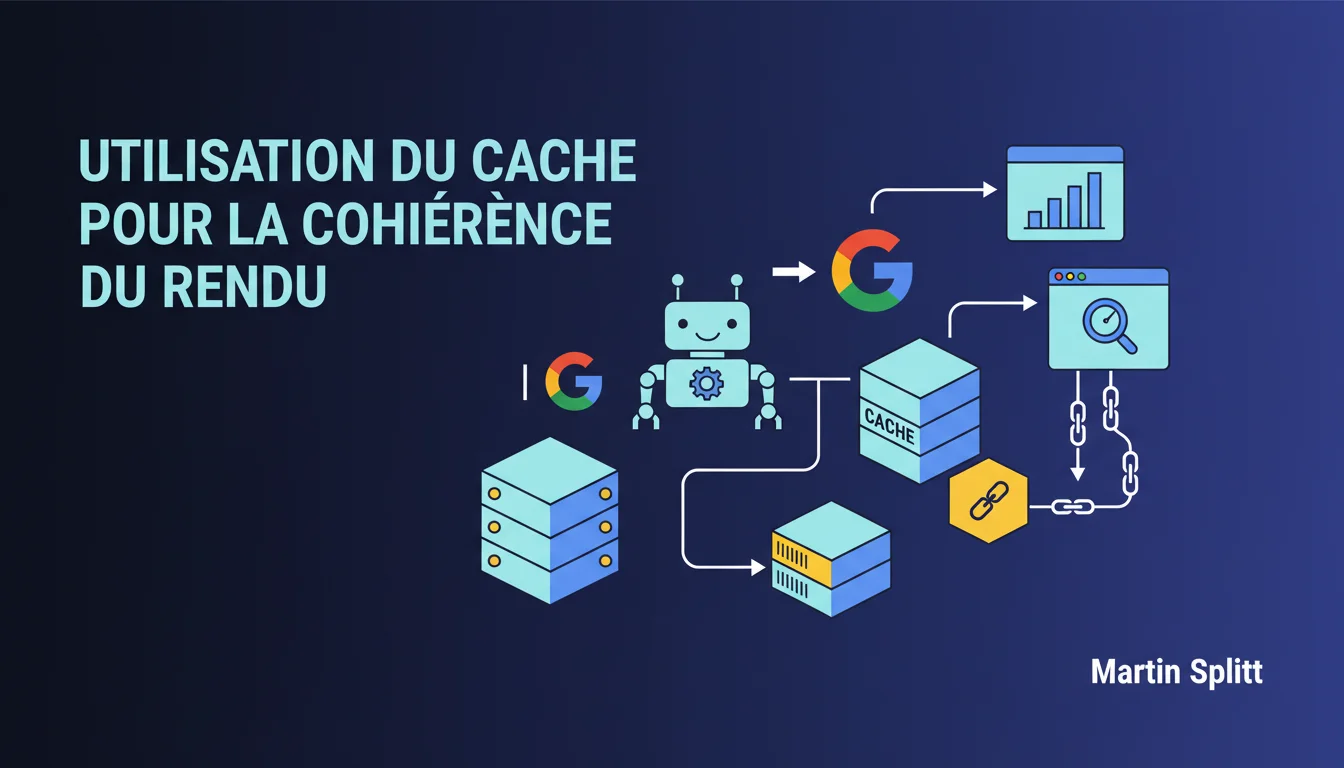

Google relies heavily on caching to keep page rendering consistent. Cacheable GET requests remain cached between crawls, but if a resource expires or is non-cacheable, it will be fetched again during the next render. This mechanism can create a time lag between what the bot sees and what your visitors see, especially on continuously deployed sites.

What you need to understand

Why does Google rely so heavily on cache during rendering?

JavaScript rendering is resource-intensive and costly to run at scale. To avoid repeating the same requests on every crawl, Googlebot reuses cacheable resources (JS, CSS, images) for as long as their HTTP cache headers (Cache-Control, Expires, ETag) allow.

Specifically, if your file app.bundle.js has a 7-day cache, Google won't re-download it during this period even if you modify it on the server side. It will use the cached version to render the page. This is efficient for Google but less so for you if you deploy frequently.
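To make the 7-day example concrete, here is a minimal sketch of how long a deployment could stay invisible to a client that honors max-age. The dates, the helper names `max_age_seconds` and `fresh_until`, and the deployment time are illustrative assumptions; this is not a description of Googlebot's internal logic.

```python
import re
from datetime import datetime, timedelta, timezone
from typing import Optional


def max_age_seconds(cache_control: str) -> Optional[int]:
    """Return the max-age value of a Cache-Control header, or None if absent."""
    match = re.search(r"\bmax-age\s*=\s*(\d+)", cache_control, re.IGNORECASE)
    return int(match.group(1)) if match else None


def fresh_until(fetched_at: datetime, cache_control: str) -> Optional[datetime]:
    """Return the moment a cached copy stops being fresh, or None without max-age."""
    seconds = max_age_seconds(cache_control)
    if seconds is None:
        return None
    return fetched_at + timedelta(seconds=seconds)


# Hypothetical scenario: app.bundle.js is fetched with a 7-day cache,
# then a new version is deployed two days later under the same URL.
fetched = datetime(2021, 3, 1, 12, 0, tzinfo=timezone.utc)
deployed = datetime(2021, 3, 3, 9, 0, tzinfo=timezone.utc)

expiry = fresh_until(fetched, "public, max-age=604800")

# Window during which a cache-respecting client may still render the old bundle.
stale_window = expiry - deployed

print(expiry.isoformat())  # 2021-03-08T12:00:00+00:00
print(stale_window)        # 5 days, 3:00:00
```

In this scenario the fix would remain unseen for a little over five days, which is the gap that asset versioning is meant to close.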

What exactly is a cacheable GET request?

A GET request is treated as cacheable here if it returns a 200 or 301 status and carries explicit cache headers. POST, PUT, and DELETE requests are not cached. GET responses without cache headers, or with Cache-Control: no-store, are not cached either.
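The rule of thumb above can be sketched as a small predicate. This is a simplification of the article's description, not Google's implementation and not the full HTTP caching specification. The function name `is_cacheable` is hypothetical, and "explicit cache headers" is assumed here to mean a max-age directive or an Expires header.

```python
from typing import Mapping

# The two statuses named in the text; real HTTP caches accept more.
CACHEABLE_STATUSES = {200, 301}


def is_cacheable(method: str, status: int, headers: Mapping[str, str]) -> bool:
    """Apply the article's simplified rule for cacheable requests."""
    if method.upper() != "GET":
        return False  # POST, PUT, DELETE are not cached in this model
    if status not in CACHEABLE_STATUSES:
        return False

    # Header names are case-insensitive, so normalize them first.
    normalized = {name.lower(): value for name, value in headers.items()}
    cache_control = normalized.get("cache-control", "").lower()
    directives = {
        part.strip().split("=")[0]
        for part in cache_control.split(",")
        if part.strip()
    }

    if "no-store" in directives:
        return False
    # Assumption: "explicit cache headers" means max-age or Expires.
    return "max-age" in directives or "expires" in normalized


print(is_cacheable("GET", 200, {"Cache-Control": "public, max-age=3600"}))  # True
print(is_cacheable("GET", 200, {"Cache-Control": "no-store"}))              # False
print(is_cacheable("POST", 200, {"Cache-Control": "max-age=3600"}))         # False
```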

The catch? If you serve your assets without versioning (e.g., /main.js instead of /main.v12345.js), Google may render a page with an outdated version of your code for several days. You deploy a critical fix on your UI, but the bot continues to see the old version until the cache expires.

What happens when a resource has expired or is non-cacheable?

If the cache expires (exceeding max-age or Expires), Google will re-download the resource during the next crawl and render. The same logic applies if the resource was never cacheable: each render will require a new HTTP request.
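The reuse-or-refetch decision described above can be illustrated with a short sketch. The `CachedResource` type and the `action_for_next_render` function are hypothetical names introduced for illustration, and the model only considers max-age.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CachedResource:
    url: str
    stored_at: float         # Unix timestamp of the last fetch
    max_age: Optional[int]   # seconds; None means the response was not cacheable


def action_for_next_render(entry: CachedResource, now: float) -> str:
    """Return "reuse" while the cached copy is fresh, otherwise "fetch"."""
    if entry.max_age is None:
        return "fetch"  # never cacheable: every render needs a new request
    age = now - entry.stored_at
    return "reuse" if age < entry.max_age else "fetch"


one_hour = 3600
bundle = CachedResource("/main.js", stored_at=0.0, max_age=one_hour)
api_call = CachedResource("/api/products", stored_at=0.0, max_age=None)

print(action_for_next_render(bundle, now=1800.0))    # reuse
print(action_for_next_render(bundle, now=7200.0))    # fetch
print(action_for_next_render(api_call, now=10.0))    # fetch
```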

Sites that use Cache-Control: no-cache or very short TTLs therefore gain freshness but lose performance on Googlebot's side. The bot has to make more requests, which can slow down rendering and increase perceived latency.

- Google prioritizes cache for consistency: the same render will produce identical results if the resources are the same

- GET resources with cache headers will be reused throughout the cache duration

- If a resource expires or is non-cacheable, it will be fetched again during the next render

- Asset versioning (/main.v123.js) circumvents this issue by forcing a new file name on each deployment

- Continuously deployed sites must manage the discrepancy between the version served to users and the one seen by Googlebot

SEO Expert opinion

Is this statement consistent with observed industry practices?

Yes, and it's been documented in Google's best practices for a long time. We regularly observe cases where the Mobile-Friendly Test or Search Console displays a DOM different from the production one, precisely because of the cache.

The issue primarily arises for sites that deploy multiple times per day without versioning. If you push an update to your CSS or JS in production, Google may continue to render with the old version for 24-48 hours depending on your headers. The result: inconsistent snapshots, rendering tests that do not reflect reality.

What nuances should be added to this statement?

Google does not state the maximum cache duration it honors. We know it respects standard HTTP headers, but whether internal ceilings apply is unclear. This remains to be verified for TTLs exceeding 30 days.

Another blind spot: what happens when responses vary through a Vary header (e.g., Vary: User-Agent)? Does Google use one shared cache, or separate caches for its mobile and desktop bots? The statement remains vague on these points. Field tests suggest a shared cache, but there's nothing official.

In what cases does this rule not apply or cause issues?

If you're using Service Workers on the client-side to manage cache, Google does not account for them during rendering. The bot does not execute Service Workers in the same way as a standard browser. Therefore, your offline caching strategy does not impact Googlebot's rendering.

Sites using Server-Side Rendering (SSR) with dynamically generated HTML can also get caught out. If the initial HTML is served without caching but the JS/CSS assets carry a long TTL, you end up mixing fresh HTML with outdated assets, and the rendered page can break visually.

Practical impact and recommendations

What concrete steps should be taken to control cache and avoid discrepancies?

The most robust solution: asset versioning via hash or timestamp in the filename. Your build generates /main.a3f2d1c.js instead of /main.js. Each deployment creates a new file, so Google cannot serve an outdated version from the cache.
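Most bundlers produce hashed filenames out of the box, but the idea itself is simple. The sketch below derives a filename from the file's content, so any change in content yields a new URL. The function name `versioned_name`, the SHA-256 choice, and the 8-character digest length are assumptions made for illustration.

```python
import hashlib
from pathlib import PurePosixPath


def versioned_name(path: str, content: bytes, digest_length: int = 8) -> str:
    """Insert a short content hash before the file extension."""
    digest = hashlib.sha256(content).hexdigest()[:digest_length]
    original = PurePosixPath(path)
    # "/main.js" becomes "/main.<digest>.js"
    return str(original.with_name(f"{original.stem}.{digest}{original.suffix}"))


before = versioned_name("/main.js", b"console.log('v1');")
after = versioned_name("/main.js", b"console.log('v2');")

print(before)
print(after)
print(before != after)  # True: changed content produces a new filename
```

Because the name depends only on the content, an unchanged file keeps its URL across deployments and stays cached, while a modified file gets a URL that no cache has seen before.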

If you cannot version (legacy, technical constraints), shorten the cache TTLs to a maximum of 1-2 hours on critical rendering assets. Yes, this increases HTTP requests on Googlebot's side, but it reduces the risk of discrepancies. Use Cache-Control: public, max-age=3600 instead of max-age=604800.

What mistakes should be absolutely avoided?

Never serve critical JS/CSS assets with Cache-Control: immutable unless you use versioning. The immutable directive tells the browser (and Google) not to revalidate the resource while it is fresh, even on a reload (F5). If you modify the file without changing its URL, clients holding a cached copy will not see the update until that copy expires.
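One way to enforce this rule is to pick the Cache-Control value from the filename itself. The following is a minimal sketch that assumes hashed assets follow a `name.<hex hash>.js` or `name.<hex hash>.css` pattern. The regular expression, the function name `cache_control_for`, and the one-year max-age for versioned files are illustrative assumptions; the one-hour value for unversioned files comes from the recommendation above.

```python
import re

# Assumed naming convention: a 7 to 64 character hex hash before the extension.
HASHED_NAME = re.compile(r"\.[0-9a-f]{7,64}\.(?:js|css)$")


def cache_control_for(path: str) -> str:
    """Return a long immutable policy only for content-hashed filenames."""
    if HASHED_NAME.search(path):
        # The URL changes whenever the content changes, so a long TTL is safe.
        return "public, max-age=31536000, immutable"
    # Unversioned asset: keep the TTL short and never mark it immutable.
    return "public, max-age=3600"


print(cache_control_for("/main.a3f2d1c.js"))  # public, max-age=31536000, immutable
print(cache_control_for("/main.js"))          # public, max-age=3600
```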

Avoid cache busters in query string (/main.js?v=123). Google may ignore or normalize query strings, especially if they resemble tracking parameters. Prefer the hash in the filename.

How can I check that my site is configured correctly?

Use the URL Inspection Tool in Search Console and compare the rendering with your production version. If you just deployed, wait 24-48 hours before retesting. A persistent discrepancy indicates a caching issue.

Check your HTTP headers with curl or DevTools: Cache-Control, ETag, Last-Modified. If your critical assets show a max-age greater than 86400 (24h) without versioning, you are in a risk zone. Also audit your CDN settings: some CDNs override your headers with their own caching rules.
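The same checks can be scripted. The sketch below applies the thresholds from this section to a set of response headers. The names `audit_asset` and `fetch_headers`, the hash-detection pattern, and the example.com URLs are assumptions for illustration. `fetch_headers` sends a real HEAD request and is defined but not called here.

```python
import re
import urllib.request
from typing import Dict, List, Mapping
from urllib.parse import urlsplit

MAX_UNVERSIONED_AGE = 86400  # 24h threshold taken from the text
HASH_IN_NAME = re.compile(r"\.[0-9a-f]{7,64}\.\w+$")  # assumed naming convention


def fetch_headers(url: str) -> Dict[str, str]:
    """Fetch response headers with a HEAD request (requires network access)."""
    request = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(request, timeout=10) as response:
        return dict(response.headers.items())


def audit_asset(url: str, headers: Mapping[str, str]) -> List[str]:
    """Return a list of caching risks for one asset URL."""
    findings: List[str] = []
    parts = urlsplit(url)
    versioned = bool(HASH_IN_NAME.search(parts.path))

    cache_control = ""
    for name, value in headers.items():
        if name.lower() == "cache-control":
            cache_control = value.lower()

    match = re.search(r"\bmax-age\s*=\s*(\d+)", cache_control)
    max_age = int(match.group(1)) if match else None

    if not versioned and max_age is not None and max_age > MAX_UNVERSIONED_AGE:
        findings.append(
            f"max-age={max_age} exceeds {MAX_UNVERSIONED_AGE}s without versioning"
        )
    if not versioned and "immutable" in cache_control:
        findings.append("immutable used without versioning")
    if parts.query:
        findings.append("query string present; prefer a hash in the filename")
    return findings


print(audit_asset("https://example.com/main.js",
                  {"Cache-Control": "public, max-age=604800"}))
print(audit_asset("https://example.com/main.a3f2d1c.js",
                  {"Cache-Control": "public, max-age=31536000, immutable"}))
```

To audit a live asset, the headers returned by `fetch_headers(url)` can be passed to `audit_asset`. Run it against the public URL so that any header rewriting by the CDN is included in the result.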

- Implement asset versioning (hash in the filename) on all critical JS/CSS for rendering

- Limit cache TTLs to a maximum of 1-2 hours if versioning is not possible

- Ban Cache-Control: immutable without versioning

- Avoid cache busters in query string, prefer hash in the URL

- Test rendering in Search Console after each major deployment

- Audit the HTTP headers of your assets with curl or DevTools

❓ Frequently Asked Questions

Does Google follow the same caching rules as a standard browser?

How long does Google keep a resource in cache at most?

If I modify a JS file without changing its URL, how long before Google sees the new version?

Are POST requests or API calls cached by Googlebot?

Can Google's cache explain why my Search Console rendering differs from production?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · duration 48 min · published on 27/01/2021
