Official statement

What you need to understand

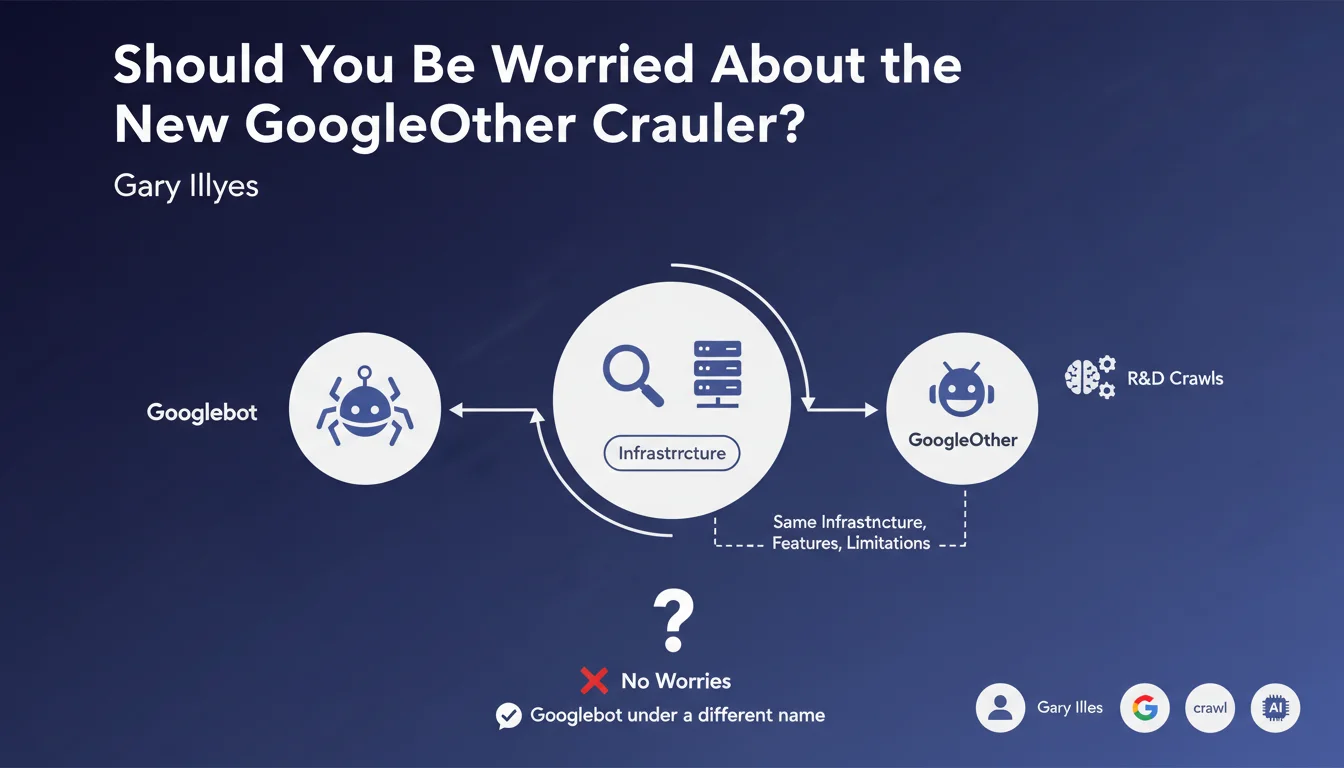

Google has introduced a new crawler called GoogleOther, which joins its existing family of crawlers. Contrary to what its generic name might suggest, this is not a minor side bot: Google describes it as taking over jobs that Googlebot used to run, internal research and development crawls among them.

GoogleOther is a general-purpose crawler for crawling jobs that are not related to building the Google Search index. It is essentially Googlebot under a different name, sharing the same technical infrastructure and capabilities.

The main objective of this separation is to free up crawl capacity for Googlebot, so that the main bot can focus exclusively on crawling content for search results. GoogleOther takes over the non-Search crawls that Googlebot previously performed.

- GoogleOther uses exactly the same technology as Googlebot (same infrastructure, same features)

- It is not used to index pages for Google search

- Its role is to relieve Googlebot by handling other types of crawls

- This separation improves Google's crawl resource allocation

- It obeys robots.txt and has the same limitations as Googlebot, but it identifies itself with its own user-agent token, GoogleOther

SEO Expert opinion

This decision by Google is consistent with the evolution of its infrastructure. Over the years, Google has developed numerous activities beyond Search indexing that may require crawling, such as security analysis, spam detection, or data collection for AI. Google has not published a full list of what GoogleOther fetches, but moving non-Search crawls to a separately named bot allows for better traceability and resource management.

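From a technical standpoint, this separation is logical and safe for websites. GoogleOther obeys robots.txt in the same way as Googlebot, with one nuance: it uses its own user-agent token. Rules in a generic User-agent: * group apply to it, whereas rules written only for Googlebot do not. Your crawl settings otherwise continue to work, and in most cases there is no reason to block this new crawler specifically.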
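To check how a given robots.txt treats the two bots, here is a minimal sketch using Python's standard urllib.robotparser. It is a stand-in for illustration, not Google's own parser, and the robots.txt content and example.com URLs are invented for the demonstration. It shows that a rule group addressed only to Googlebot is not picked up by the GoogleOther token, which falls back to the generic group.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one group for Googlebot only, one generic group.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /tmp/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content held in memory (no network request)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# Googlebot matches its own group, so /private/ is disallowed for it.
googlebot_private = parser.can_fetch("Googlebot", "https://example.com/private/page")

# GoogleOther does not match the Googlebot group and falls back to "*",
# which only disallows /tmp/.
googleother_private = parser.can_fetch("GoogleOther", "https://example.com/private/page")
googleother_tmp = parser.can_fetch("GoogleOther", "https://example.com/tmp/file")

print("Googlebot   /private/:", googlebot_private)
print("GoogleOther /private/:", googleother_private)
print("GoogleOther /tmp/    :", googleother_tmp)
```

If a path must stay off-limits to both bots, the safest option is to place the rule in the generic group or to repeat it in a group that names GoogleOther.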

Practical impact and recommendations

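- Do not block GoogleOther in your robots.txt without a specific reason – it uses the same infrastructure as Googlebot and follows your generic (User-agent: *) rules; rules written only for Googlebot must be repeated for GoogleOther if you want them to apply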

- Update your log analysis tools if you monitor crawl budget: separate GoogleOther from Googlebot in your reports for more accurate insights

- Focus on Googlebot for crawl optimizations – it alone impacts your indexing and SEO

- Check whether GoogleOther appears in your logs – its presence shows that Google is also crawling your site for purposes other than Search indexing

- Do not modify your crawl budget strategy – the principles remain the same, only Google's internal allocation changes

- Document this change in your procedures if you have automated monitoring processes that identify bots
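For the log analysis and monitoring points above, a minimal sketch of separating the two bots in a report could look like this. It assumes access logs in the common "combined" format, where the user agent is the last quoted field. The function names and sample lines are hypothetical, and the IP addresses come from documentation ranges rather than Google's real ones.

```python
import re
from collections import Counter

# In the combined log format, the user agent is the last double-quoted field.
UA_FIELD = re.compile(r'"([^"]*)"\s*$')


def classify_crawler(user_agent: str) -> str:
    """Label a user-agent string as GoogleOther, Googlebot, or other."""
    ua = user_agent.lower()
    if "googleother" in ua:
        return "GoogleOther"
    if "googlebot" in ua:
        return "Googlebot"
    return "other"


def count_crawler_hits(log_lines):
    """Count hits per crawler label; lines without a quoted UA are skipped."""
    counts = Counter()
    for line in log_lines:
        match = UA_FIELD.search(line)
        if match:
            counts[classify_crawler(match.group(1))] += 1
    return counts


# Made-up sample lines for illustration only.
SAMPLE_LOG = [
    '192.0.2.10 - - [01/May/2023:10:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.11 - - [01/May/2023:10:00:05 +0000] "GET /a HTTP/1.1" 200 128 "-" "GoogleOther"',
    '203.0.113.9 - - [01/May/2023:10:00:09 +0000] "GET /b HTTP/1.1" 200 64 "-" '
    '"Mozilla/5.0 (X11; Linux x86_64)"',
]

hits = count_crawler_hits(SAMPLE_LOG)
for label in ("Googlebot", "GoogleOther", "other"):
    print(label, hits[label])
```

User-agent strings can be spoofed, so a production report would normally also verify that the requesting IP really belongs to Google before counting a hit.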

This evolution illustrates the growing complexity of the Google ecosystem and the need for ongoing technical monitoring. Detailed crawl log analysis, distinguishing between different bots, and crawl budget optimization are becoming specialized skills that require expertise and dedicated tools. For high-volume sites or those with significant strategic stakes, this increasing technical complexity often justifies support from a specialized SEO agency, capable of interpreting this complex data and fine-tuning technical parameters to maximize your visibility.
