Official statement

What you need to understand

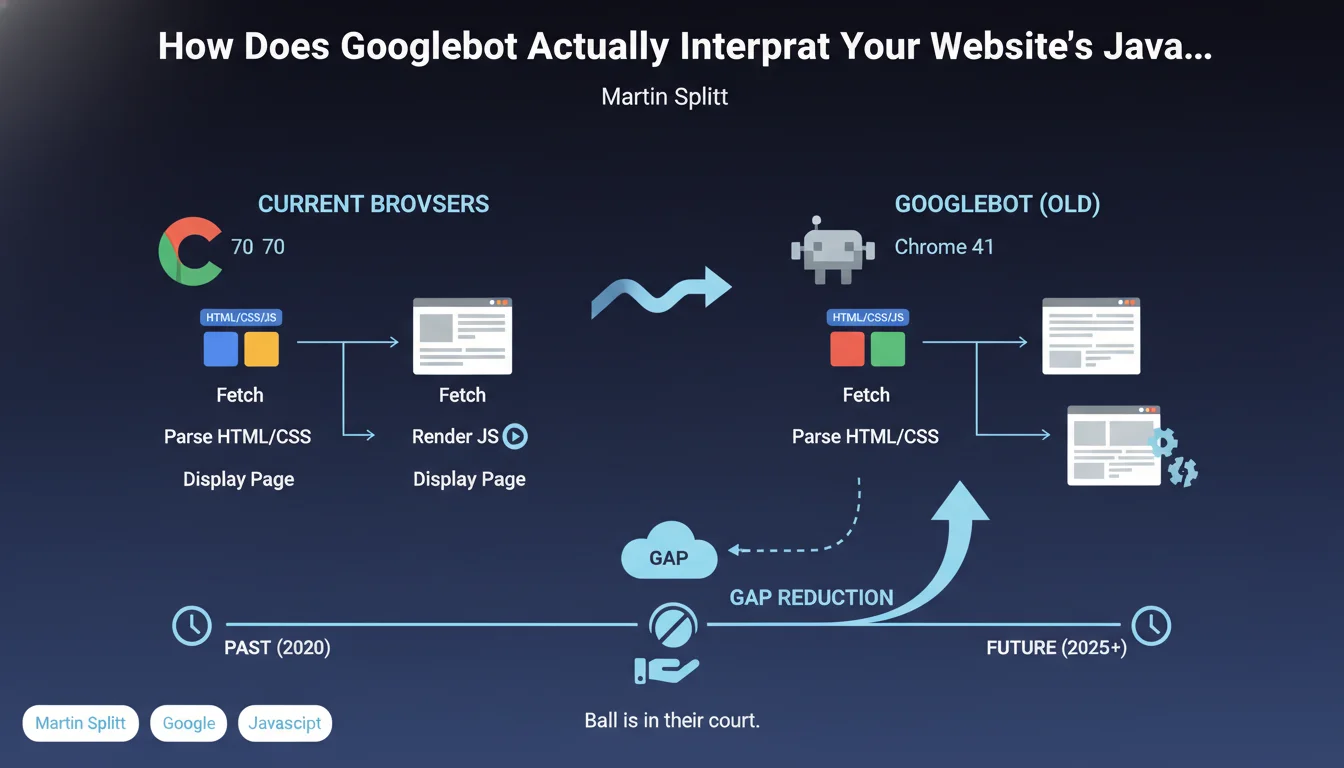

Google has clarified its position on JavaScript rendering and what proper indexing requires. According to this statement, not every interactive feature of your site needs to be fully operational when Googlebot crawls and renders it.

Concretely, this means that elements like hamburger menus, complex animations, or certain user interactions don't need to work perfectly for Google to understand and index your content. The search engine focuses on accessing the main content rather than reproducing the complete interactive experience.

However, this nuance hides a more complex reality for SEO practitioners. If the page Googlebot renders differs significantly from the one users see, several structural problems can emerge:

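- Links that aren't standard `<a href>` elements (for example, navigation that only works through a JavaScript click handler) won't be followed by Googlebot, limiting the crawl of your deeper pages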

- Content loaded via JavaScript must appear without any user interaction, since Googlebot doesn't click or hover; it then stays accessible even if interactive features fail

- Main navigation must be crawlable independently of interactive features

- Resources Googlebot can't fetch (for example, CSS or JavaScript files disallowed in robots.txt) can prevent essential content from rendering properly
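To check the last point, a minimal Python sketch can test whether the CSS and JavaScript files a page needs are disallowed for Googlebot in robots.txt. The robots.txt rules, the resource URLs and the `blocked_resources` helper below are hypothetical placeholders; in practice you would load your live file and list the resources your templates reference. Python's `urllib.robotparser` applies rules in file order and doesn't implement Google's wildcard and longest-match handling, so treat its answer as a first pass and confirm in Search Console.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content (an assumption: replace with your own file).
ROBOTS_TXT = """\
User-agent: *
Disallow: /assets/js/
Disallow: /admin/
"""

# Hypothetical CSS/JS files the page needs in order to render.
RESOURCES = [
    "https://www.example.com/assets/css/main.css",
    "https://www.example.com/assets/js/app.js",
    "https://www.example.com/assets/js/menu.js",
]


def blocked_resources(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the robots.txt rules disallow for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return [url for url in urls if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    for url in blocked_resources(ROBOTS_TXT, RESOURCES):
        print("Blocked for Googlebot:", url)
```

With the sample rules, both files under `/assets/js/` are reported while the stylesheet is allowed.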

SEO Expert opinion

This statement is generally consistent with what we observe in the field, but it requires nuanced interpretation. Google is indeed tolerant of interactive features, but this tolerance doesn't extend to structural elements critical for SEO.

The real challenge lies in distinguishing between cosmetic features and essential navigation elements. An image carousel that doesn't work perfectly won't impact your indexing. On the other hand, a navigation menu entirely dependent on complex JavaScript can create indexing silos if links aren't detectable.
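One way to spot undetectable navigation links is to list the `<a>` elements in a page's HTML and separate those with a followable `href` from pseudo-links that only work through JavaScript. This minimal sketch uses Python's standard `html.parser`; the `LinkAudit` class and the sample markup are hypothetical. It only inspects `<a>` tags, so a menu built from `<span>` or `<button>` elements with click handlers won't show up at all, which is itself a warning sign.

```python
from __future__ import annotations

from html.parser import HTMLParser


class LinkAudit(HTMLParser):
    """Split <a> elements into followable links and JavaScript-only pseudo-links."""

    def __init__(self) -> None:
        super().__init__()
        self.crawlable: list[str] = []
        self.not_crawlable: list[str] = []

    def handle_starttag(self, tag: str, attrs: list[tuple[str, str | None]]) -> None:
        if tag != "a":
            return
        attributes = dict(attrs)
        href = (attributes.get("href") or "").strip()
        # A real URL is followable; "javascript:" URLs and in-page fragments (#...) are not.
        if href and not href.lower().startswith(("javascript:", "#")):
            self.crawlable.append(href)
        else:
            self.not_crawlable.append(attributes.get("onclick") or href or "<a> without href")


# Hypothetical navigation markup mixing good and bad patterns.
SAMPLE_NAV = """
<nav>
  <a href="/products/">Products</a>
  <a href="javascript:openMenu('services')">Services</a>
  <a onclick="goTo('/blog/')">Blog</a>
  <a href="#contact">Contact</a>
</nav>
"""

if __name__ == "__main__":
    audit = LinkAudit()
    audit.feed(SAMPLE_NAV)
    print("Crawlable:", audit.crawlable)
    print("Not crawlable:", audit.not_crawlable)
```

On the sample, only `/products/` is reported as crawlable; the other three entries would leave their target pages undiscovered unless they are linked elsewhere.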

In practice, we observe that sites offering consistent rendering between bot and user perform better. Not because Google penalizes others, but because they avoid technical pitfalls that fragment the site's architecture.

Practical impact and recommendations

- Audit your rendering: Use the URL Inspection tool in Search Console to compare what Googlebot renders with what a real user sees

- Test your navigation links: Ensure all structural links are standard `<a href>` elements present in the initial HTML, or at least in the rendered HTML that Googlebot sees

- Prioritize critical content: Headings, main paragraphs, internal links, and meta tags must be available without waiting for complete JavaScript execution

- Render strategic pages on the server: Use server-side rendering (SSR) or static generation for strategic pages if your site relies entirely on client-side JavaScript

- Avoid critical dependencies: Never condition the display of your main menu or breadcrumb on complex JavaScript interactions

- Document differences: If bot rendering intentionally differs from user rendering, map these differences to anticipate impacts on crawling

- Optimize rendering time: Reduce blocking JavaScript and optimize resources to speed up processing by Googlebot

- Monitor orphan pages: Regularly check that no important pages have become inaccessible following JavaScript modifications
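Auditing rendering, testing navigation links, prioritizing critical content and documenting differences all come down to comparing two snapshots of the same URL: the raw HTML the server returns before any JavaScript runs, and the rendered HTML (for instance, the HTML the URL Inspection tool shows for a tested page, or a headless-browser export). A minimal Python sketch of that comparison follows; the `Snapshot` class, the `compare` function and the inline sample pages are hypothetical, and it only checks a few signals (title, meta description, canonical, `<h1>`) plus `<a href>` links.

```python
from __future__ import annotations

from html.parser import HTMLParser


class Snapshot(HTMLParser):
    """Collect <a href> targets and a few SEO-critical signals from one HTML document."""

    def __init__(self, html: str) -> None:
        super().__init__()
        self.links: set[str] = set()
        self.signals: set[str] = set()
        self.feed(html)
        self.close()

    def handle_starttag(self, tag: str, attrs: list[tuple[str, str | None]]) -> None:
        a = dict(attrs)
        if tag == "a" and a.get("href"):
            self.links.add(a["href"])
        elif tag == "title":
            self.signals.add("title")
        elif tag == "h1":
            self.signals.add("h1")
        elif tag == "meta" and (a.get("name") or "").lower() == "description":
            self.signals.add("meta description")
        elif tag == "link" and "canonical" in (a.get("rel") or "").lower().split():
            self.signals.add("canonical")


def compare(raw_html: str, rendered_html: str) -> dict[str, list[str]]:
    """Report what exists only after JavaScript runs, and what JavaScript removed."""
    raw, rendered = Snapshot(raw_html), Snapshot(rendered_html)
    return {
        "links_only_after_js": sorted(rendered.links - raw.links),
        "signals_only_after_js": sorted(rendered.signals - raw.signals),
        "links_removed_by_js": sorted(raw.links - rendered.links),
    }


# Hypothetical snapshots of one client-side rendered page.
RAW_HTML = """<html><head><title>Shoes</title></head>
<body><div id="app"></div><a href="/">Home</a></body></html>"""

RENDERED_HTML = """<html><head><title>Shoes</title>
<meta name="description" content="Running shoes">
<link rel="canonical" href="https://www.example.com/shoes/"></head>
<body><h1>Running shoes</h1>
<nav><a href="/">Home</a><a href="/shoes/trail/">Trail</a></nav></body></html>"""

if __name__ == "__main__":
    for key, values in compare(RAW_HTML, RENDERED_HTML).items():
        print(key, values)
```

Anything listed under `links_only_after_js` or `signals_only_after_js` depends on rendering succeeding, so on strategic pages those items are candidates for server-side output; the report also serves as a written record of what changes between the initial HTML and the rendered page.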
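For the server-side rendering and breadcrumb recommendations, the essential property is that navigation reaches Googlebot as ordinary `<a href>` markup in the HTML response, with JavaScript only adding behavior on top. The sketch below stands in for whatever templating layer your framework or static-site generator provides; `render_breadcrumb` and its `(label, url)` input format are hypothetical.

```python
from html import escape


def render_breadcrumb(trail: list[tuple[str, str]]) -> str:
    """Render (label, url) pairs as a plain-HTML breadcrumb; the last pair is the current page."""
    items = []
    for position, (label, url) in enumerate(trail):
        if position == len(trail) - 1:
            # The current page is plain text, not a link to itself.
            items.append(f'<li aria-current="page">{escape(label)}</li>')
        else:
            # Every ancestor is a real <a href>, crawlable without running any script.
            items.append(f'<li><a href="{escape(url)}">{escape(label)}</a></li>')
    return '<nav aria-label="Breadcrumb"><ol>' + "".join(items) + "</ol></nav>"


if __name__ == "__main__":
    print(render_breadcrumb([("Home", "/"), ("Shoes", "/shoes/"), ("Trail running", "/shoes/trail/")]))
```

Because the string is produced at build or request time, the breadcrumb is present in the initial HTML even if a client-side script that enhances it fails to run.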
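For the rendering-time recommendation, a rough first pass is to list external scripts in `<head>` that load without `async` or `defer` (module scripts are deferred by default), since the browser must fetch and run them before it continues parsing the page. This Python sketch is illustrative only: the `HeadScripts` class, `find_blocking_scripts` and the sample page are hypothetical, and it ignores inline scripts and scripts placed in `<body>`.

```python
from __future__ import annotations

from html.parser import HTMLParser


class HeadScripts(HTMLParser):
    """Collect external <script src> tags in <head> that block HTML parsing."""

    def __init__(self) -> None:
        super().__init__()
        self.in_head = False
        self.blocking: list[str] = []

    def handle_starttag(self, tag: str, attrs: list[tuple[str, str | None]]) -> None:
        if tag == "head":
            self.in_head = True
        elif tag == "body":
            self.in_head = False
        elif tag == "script" and self.in_head:
            a = dict(attrs)
            src = a.get("src")
            # async/defer are boolean attributes: their presence is what matters.
            deferred = "async" in a or "defer" in a or (a.get("type") or "").lower() == "module"
            if src and not deferred:
                self.blocking.append(src)

    def handle_endtag(self, tag: str) -> None:
        if tag == "head":
            self.in_head = False


def find_blocking_scripts(html: str) -> list[str]:
    parser = HeadScripts()
    parser.feed(html)
    parser.close()
    return parser.blocking


# Hypothetical page head mixing blocking and non-blocking scripts.
SAMPLE_PAGE = """<html><head>
<script src="/js/vendor.js"></script>
<script src="/js/analytics.js" async></script>
<script src="/js/app.js" defer></script>
<script type="module" src="/js/menu.js"></script>
</head><body></body></html>"""

if __name__ == "__main__":
    print(find_blocking_scripts(SAMPLE_PAGE))
```

On the sample page only `/js/vendor.js` is flagged; adding `defer` to it, where the script's logic allows, is the usual first fix.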
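The orphan-page check can be framed as graph reachability: starting from the homepage, follow only crawlable internal links and flag any sitemap URL that is never reached. In this minimal sketch, `find_orphans`, the link graph and the sitemap are hypothetical; in practice you would build the graph from a crawl of your own site, ideally twice (raw HTML and rendered HTML) to see which pages become reachable only once JavaScript has run.

```python
from collections import deque


def find_orphans(start: str, link_graph: dict[str, list[str]], sitemap_urls: list[str]) -> list[str]:
    """Return sitemap URLs that cannot be reached from `start` by following links."""
    reachable = {start}
    queue = deque([start])
    while queue:  # breadth-first traversal of the internal link graph
        page = queue.popleft()
        for target in link_graph.get(page, []):
            if target not in reachable:
                reachable.add(target)
                queue.append(target)
    return [url for url in sitemap_urls if url not in reachable]


# Hypothetical crawl result: each page maps to the crawlable links found on it.
LINK_GRAPH = {
    "/": ["/products/", "/blog/"],
    "/products/": ["/products/shoes/"],
    "/blog/": ["/"],
}

# Hypothetical sitemap entries.
SITEMAP = ["/", "/products/", "/products/shoes/", "/blog/", "/blog/old-post/", "/landing/spring-sale/"]

if __name__ == "__main__":
    print(find_orphans("/", LINK_GRAPH, SITEMAP))
```

Here `/blog/old-post/` and `/landing/spring-sale/` are listed in the sitemap but no crawlable link leads to them, so they would be reported as orphans.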

These technical optimizations around JavaScript rendering and crawlable architecture require in-depth expertise and continuous monitoring. The challenges simultaneously affect front-end development, server infrastructure, and overall SEO strategy. For complex sites or migrations to modern JavaScript frameworks, support from a specialized SEO agency helps secure these transformations and avoid visibility losses that can be very costly in terms of organic traffic.
