Official statement

What you need to understand

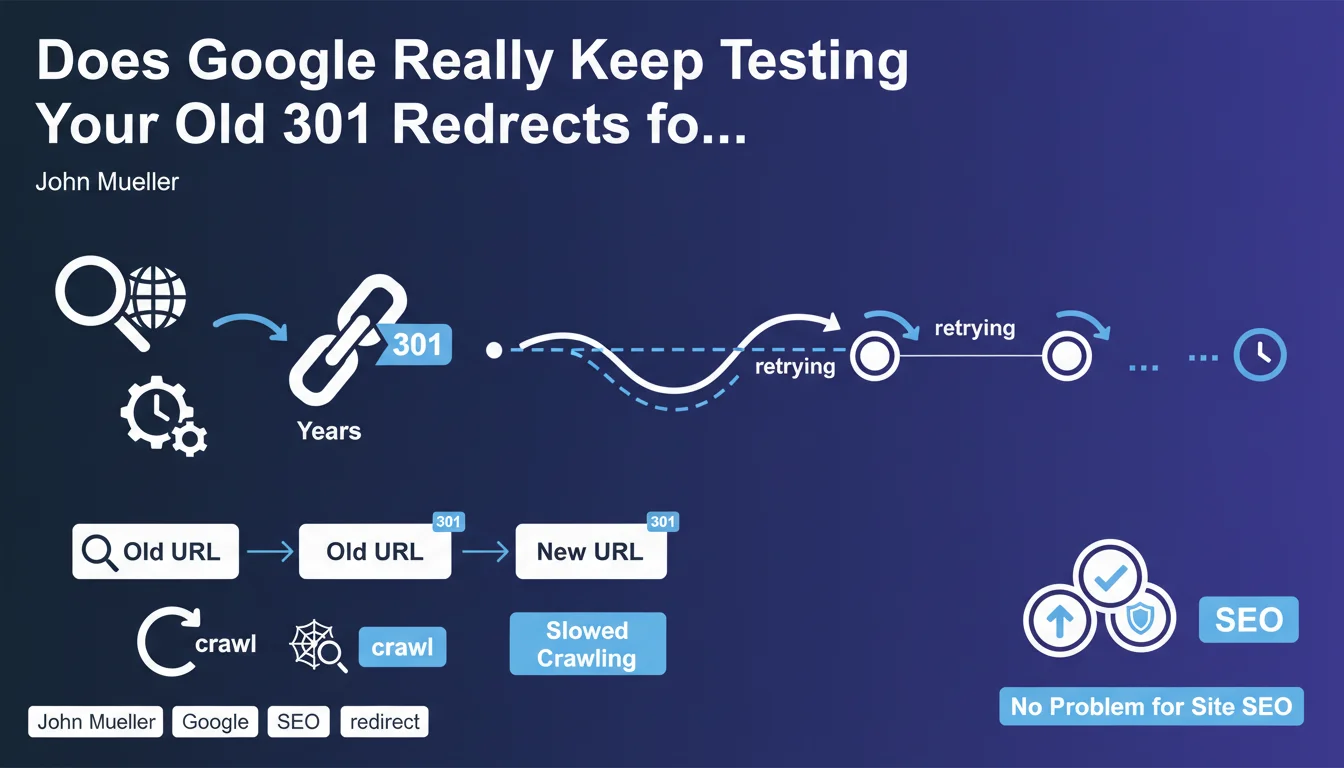

Why does Google continue testing redirects after they're implemented?

Google has a long memory for URLs. Once a URL has been discovered and indexed, it stays in the search engine's records for many years.

Even after you implement a permanent 301 redirect, Googlebot continues to check periodically whether the redirect is still in place. These checks let Google detect configuration changes or errors.

What impact do these recurring tests have on site crawling?

John Mueller explains that Google crawls these redirected URLs less and less often over time. The visits become increasingly spaced out but never stop completely.

However, this residual crawling does not penalize the rest of the site. The crawl budget allocated to these old URLs becomes negligible and does not impact the indexing of active pages.

What are the key takeaways from this statement?

- Google keeps testing 301 redirects for years, even though they are permanent

- The crawl frequency of redirected URLs decreases significantly over time

- This behavior does not constitute a problem for the site's overall SEO

- Google maintains a historical database of discovered URLs

- There's no point in trying to block these crawl attempts on old URLs

SEO expert opinion

Is this statement consistent with real-world observations?

Absolutely. In my server log audits, I regularly observe Google crawling URLs that have been redirected for several years. The frequency is indeed very low (sometimes a few times per year), but the behavior is systematic.

This persistence is explained by Google's very architecture: the search engine has indexed billions of pages, and maintaining this historical mapping of the web is part of its normal operation. It's also a way to detect if a site reactivates old URLs.

What important nuances should be added to this information?

The main nuance concerns the volume of redirects. For a few dozen or a few hundred redirects, the impact is indeed negligible, as Mueller states.

However, for sites that have migrated tens of thousands of URLs, even slowed-down crawling can add up to a significant server load. In these cases, crawl budget optimization becomes more critical.

In which cases can this rule still cause problems?

For very high-volume sites, maintaining thousands of active redirects indefinitely can consume server resources. After several years, it may make sense to retire the least strategic redirects.

Additionally, certain hosting platforms that charge per request may see their costs increase even with minimal crawling. A cost-benefit analysis is necessary in these specific situations.

Practical impact and recommendations

What should you actually do with your 301 redirects?

The main recommendation is to keep your redirects active indefinitely for all URLs that had traffic, backlinks, or SEO value. Don't try to remove them prematurely.

For redirects implemented during a redesign or migration, plan to keep them for at least 2 to 3 years. For strategic URLs with quality backlinks, keep them with no time limit.

Document your redirects in a tracking file with the implementation date and reason. This will facilitate future audits and maintenance decisions.

What critical mistakes should you absolutely avoid?

- Never remove a redirect after just a few months, even if traffic has disappeared

- Don't use 302 (temporary) redirects for permanent URL changes

- Avoid redirect chains (A→B→C), which slow down crawling and can dilute the signals passed to the final URL

- Don't block old redirected URLs in robots.txt

- Don't forget to also redirect variants (www/non-www, http/https) of each URL
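
To make the last point concrete, here is a minimal Python sketch that lists the scheme and host variants of an old URL so each one can be checked against your redirect rules. The function name `url_variants` and the `example.com` address are illustrative assumptions, not part of any tool mentioned above.

```python
from urllib.parse import urlsplit, urlunsplit


def url_variants(url):
    """Return the http/https and www/non-www variants of a URL."""
    parts = urlsplit(url)
    host = parts.netloc
    # Normalize to the bare host so both variants can be rebuilt from it.
    if host.startswith("www."):
        host = host[4:]
    variants = []
    for scheme in ("http", "https"):
        for netloc in (host, "www." + host):
            variants.append(
                urlunsplit((scheme, netloc, parts.path, parts.query, ""))
            )
    return variants


# Hypothetical old URL used only for illustration.
for variant in url_variants("https://www.example.com/old-page?ref=1"):
    print(variant)
```

Each printed variant should end up, ideally in a single hop, on the same final destination.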

How can you optimize long-term management of your redirects?

Implement regular monitoring of your redirect files. Check every six months that no loops or chains have been created through updates.
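
A check like this can be automated. The sketch below assumes your redirects can be exported as a simple source-to-target mapping; the `redirects` dictionary, the function names, and the 10-hop safety limit are hypothetical choices for illustration.

```python
def resolve(redirects, start, max_hops=10):
    """Follow `start` through the redirect map.

    Returns (path, is_loop), where path lists every URL visited in order.
    """
    path = [start]
    seen = {start}
    current = start
    while current in redirects and len(path) <= max_hops:
        current = redirects[current]
        path.append(current)
        if current in seen:
            return path, True  # we came back to a URL already visited
        seen.add(current)
    return path, False


def audit(redirects):
    """Classify every source URL as 'ok', 'chain', or 'loop'."""
    report = {}
    for source in redirects:
        path, is_loop = resolve(redirects, source)
        if is_loop:
            report[source] = ("loop", path)
        elif len(path) > 2:  # more than one hop before the final URL
            report[source] = ("chain", path)
        else:
            report[source] = ("ok", path)
    return report


# Hypothetical redirect map: source path -> target path.
redirects = {
    "/old-a": "/old-b",
    "/old-b": "/new",
    "/x": "/y",
    "/y": "/x",
    "/legacy": "/new",
}

report = audit(redirects)
for source, (status, path) in report.items():
    print(f"{status:5} {' -> '.join(path)}")
```

Anything flagged as a chain can usually be fixed by pointing the source directly at the final URL; anything flagged as a loop needs one of its rules removed or corrected.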

For large-scale sites, analyze your server logs to identify redirects still being crawled. Focus your maintenance efforts on those generating the most Googlebot requests.
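
As a rough starting point, the following Python sketch counts Googlebot requests that received a 301 or 308 response. It assumes the server writes the common "combined" access log format; the sample lines are invented for illustration, and matching on the user-agent string alone does not prove a request really came from Google, since that string can be spoofed.

```python
import re
from collections import Counter

# Combined log format:
# ip ident user [time] "METHOD path PROTOCOL" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_redirect_hits(lines):
    """Count permanent-redirect responses served to Googlebot, per path."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines in another format
        if match["status"] in {"301", "308"} and "Googlebot" in match["agent"]:
            hits[match["path"]] += 1
    return hits


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

# Invented sample lines, for illustration only.
sample = [
    f'66.249.66.1 - - [10/Jan/2024:10:00:00 +0000] "GET /old-page HTTP/1.1" 301 0 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [12/Mar/2024:08:30:00 +0000] "GET /old-page HTTP/1.1" 301 0 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [12/Mar/2024:08:31:00 +0000] "GET /new-page HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'203.0.113.7 - - [13/Mar/2024:09:00:00 +0000] "GET /old-page HTTP/1.1" 301 0 "-" "{BROWSER}"',
    f'66.249.66.1 - - [14/Mar/2024:11:00:00 +0000] "GET /old-category/ HTTP/1.1" 301 0 "-" "{GOOGLEBOT}"',
]

hits = googlebot_redirect_hits(sample)
for path, count in hits.most_common():
    print(count, path)
```

On a real server you would pass an open log file instead of `sample`, since the function accepts any iterable of lines. Redirects that never appear in the results over a long period are the natural candidates for a closer look.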
