Official statement

What you need to understand

What exactly is partial indexing and why does it exist?

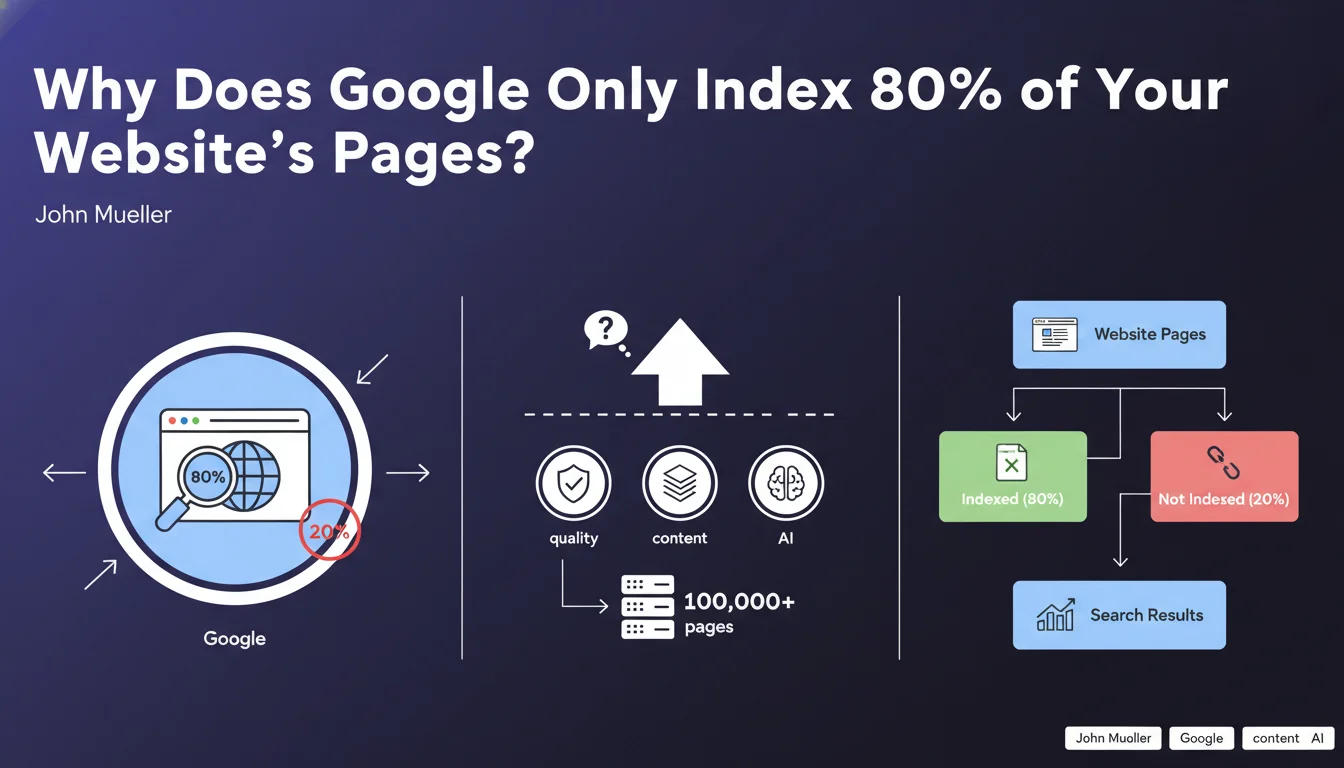

Partial indexing is normal behavior at Google: only a portion of a website's content ends up in the index. Contrary to popular belief, Google does not guarantee that 100% of your pages will be indexed, even if they are technically accessible.

This happens because Google has limited resources (crawl budget, storage capacity) and must make choices. The search engine prioritizes pages it deems relevant and useful for users, at the expense of redundant content, low-quality content, or rarely visited pages.

Which websites are particularly affected by this phenomenon?

Large-scale sites containing several hundred thousand pages are the most affected. E-commerce sites with thousands of products, news portals, or user-generated content platforms frequently encounter this situation.

For a site with 500,000 pages, it's not uncommon to find that only 300,000 to 400,000 pages are actually indexed. The remaining 20 to 40% are deliberately excluded by Google after evaluation.
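The arithmetic behind these figures can be checked with a minimal sketch. The function name `indexing_rate` is a hypothetical helper introduced here for illustration; the page counts are the ones quoted above.

```python
def indexing_rate(indexed_pages: int, total_pages: int) -> float:
    """Return the share of known pages that are indexed, as a percentage."""
    if total_pages <= 0:
        raise ValueError("total_pages must be positive")
    return 100 * indexed_pages / total_pages


# Figures from the example: a 500,000-page site with 300,000 to 400,000 indexed.
for indexed in (300_000, 400_000):
    rate = indexing_rate(indexed, 500_000)
    print(f"{indexed:,} indexed: {rate:.0f}% indexed, {100 - rate:.0f}% excluded")
```

This gives 60% and 80% indexed, which corresponds to the 20 to 40% exclusion range.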

How can I identify partial indexing on my website?

Search Console is the reference tool for diagnosing this issue. The "Coverage" report (or "Pages" in the new version) clearly distinguishes valid URLs from excluded URLs.

Often, the number of excluded URLs far exceeds the number of indexed URLs. This imbalance is not necessarily alarming: it can result from legitimate technical choices (redirects, canonicalization), or it can reveal quality problems that require in-depth analysis.

- Google does not guarantee indexing 100% of a site's content, even if the site is technically perfect

- An indexing rate of 60 to 80% is common on large sites

- Partial indexing reflects quality and relevance criteria

- Search Console allows you to quantify and analyze exclusions

- Each exclusion has a specific identifiable reason

SEO Expert opinion

Does this statement align with real-world observations?

Absolutely. After 15 years of experience, I observe this phenomenon daily on all types of sites. The reality sometimes even exceeds the 20% exclusion figure mentioned by Google.

On some poorly optimized e-commerce sites, I've observed exclusion rates reaching 50 to 60%. Conversely, well-structured editorial sites with high added value maintain indexing rates above 90%. The overall quality of the site is decisive.

What important nuances should be added to this information?

Not all exclusions are equal. We must distinguish legitimate exclusions (301 redirects, intentional noindex pages, canonicalized duplicates) from problematic exclusions (pages deemed low quality, unintentional duplicate content, technical issues).

The real challenge is not to reach 100% indexing, but to ensure that strategic pages are properly indexed. A high-potential product page that's not indexed is a major problem. An old obsolete news article that's excluded generally isn't.

In which cases does this rule change or not apply?

Small, high-quality sites (fewer than 1,000 pages) with carefully crafted content generally achieve very high indexing rates, sometimes close to 95-98%. Google has sufficient crawl budget to process all the content.

Conversely, sites generating automated or poorly differentiated content experience even more selective indexing. Google is becoming increasingly demanding about quality, particularly since the Helpful Content updates and the arrival of generative AI that multiplies low-value content.

Practical impact and recommendations

How can I effectively audit my site's indexing?

Start by extracting data from the "Pages" report in Search Console. Identify the main exclusion categories: pages crawled but not indexed, pages discovered but not yet crawled, server errors, etc.
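As a rough illustration, exclusion reasons can be tallied from an exported list of URLs. This is a sketch under assumptions: the column names `url` and `reason` and the sample rows are made up for the example, and a real export may be structured differently.

```python
import csv
import io
from collections import Counter

# Hypothetical sample data; column names and rows are assumptions.
SAMPLE_EXPORT = """url,reason
https://example.com/product/1,Crawled - currently not indexed
https://example.com/product/2,Crawled - currently not indexed
https://example.com/product/3,Discovered - currently not indexed
https://example.com/old-page,Page with redirect
https://example.com/product/4,Server error (5xx)
"""


def count_exclusion_reasons(csv_text: str) -> Counter:
    """Count how many excluded URLs fall under each reason."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return Counter(row["reason"] for row in reader)


# Print the reasons from most to least frequent.
for reason, count in count_exclusion_reasons(SAMPLE_EXPORT).most_common():
    print(f"{count:>3}  {reason}")
```

Sorting by frequency makes the dominant exclusion category visible at a glance.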

Cross-reference this data with your site architecture. Classify your pages by type (categories, products, articles, informational pages) and check the indexing rate by segment. This quickly reveals problematic areas.
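One possible way to compute the indexing rate per segment is sketched below. The URL prefixes in `SEGMENT_PREFIXES`, the segment names, and the sample pages are all assumptions; adapt them to your own URL structure.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical mapping from URL path prefix to page type.
SEGMENT_PREFIXES = {
    "/category/": "categories",
    "/product/": "products",
    "/blog/": "articles",
}


def segment_of(url: str) -> str:
    """Classify a URL by the first matching path prefix."""
    path = urlparse(url).path
    for prefix, name in SEGMENT_PREFIXES.items():
        if path.startswith(prefix):
            return name
    return "other"


def indexing_rate_by_segment(pages):
    """Map each segment to its indexing rate (%) from (url, is_indexed) pairs."""
    totals = defaultdict(int)
    indexed = defaultdict(int)
    for url, is_indexed in pages:
        segment = segment_of(url)
        totals[segment] += 1
        if is_indexed:
            indexed[segment] += 1
    return {seg: 100 * indexed[seg] / totals[seg] for seg in totals}


# Made-up sample: (URL, whether Search Console reports it as indexed).
SAMPLE_PAGES = [
    ("https://example.com/product/a", True),
    ("https://example.com/product/b", False),
    ("https://example.com/product/c", False),
    ("https://example.com/product/d", True),
    ("https://example.com/blog/x", True),
    ("https://example.com/blog/y", True),
    ("https://example.com/about", True),
]

for segment, rate in indexing_rate_by_segment(SAMPLE_PAGES).items():
    print(f"{segment}: {rate:.0f}% indexed")
```

In this made-up sample, product pages stand out with a much lower rate than articles, which is the kind of pattern the segmentation is meant to surface.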

Use site: queries in Google for spot checks, but rely primarily on Search Console for comprehensive data. Look for patterns: are certain sections systematically excluded?

What concrete actions should I take to improve indexing?

Prioritize quality over quantity. Remove or consolidate pages with low added value, internal duplicate content, and automatically generated pages with no real utility.

Optimize your internal linking to concentrate authority on strategic pages. Google crawls and indexes primarily pages that are easily accessible and frequently linked.

Improve quality signals: enrich content, improve the user experience, reduce bounce rate, and increase time spent on page. In my experience, Google tends to index more of the pages that engage users.

- Extract and analyze the "Pages" report from Search Console monthly

- Identify dominant exclusion patterns (quality, technical, duplication)

- Segment the analysis by page type to locate problem areas

- Eliminate or improve low-quality content that's systematically excluded

- Strengthen internal linking to strategic non-indexed pages

- Optimize crawl budget by blocking access to unnecessary sections (URL parameters, filters, etc.)

- Monitor indexing rate evolution after each major optimization

- Prioritize indexing of pages that generate revenue or qualified traffic
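For the crawl budget item above, a draft set of robots.txt rules can be checked before deployment. This is a minimal sketch using Python's standard `urllib.robotparser`; the rules and URLs are hypothetical. Note that, as far as I know, this standard-library parser only does simple path-prefix matching and does not interpret `*` wildcards, so the example sticks to prefix rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft rules: block internal search and filter pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /filter/
"""


def blocked_urls(robots_txt: str, urls, user_agent: str = "Googlebot"):
    """Return the URLs that the given rules forbid the user agent to crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Made-up URLs: two low-value sections and one strategic product page.
CANDIDATE_URLS = [
    "https://example.com/filter/color-red",
    "https://example.com/search?q=shoes",
    "https://example.com/product/blue-shoe",
]

for url in blocked_urls(ROBOTS_TXT, CANDIDATE_URLS):
    print("blocked:", url)
```

A check like this mainly helps confirm that a strategic page is not blocked by accident. Keep in mind that robots.txt controls crawling, not indexing as such.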

What should I do if optimizations don't yield results?

If, despite your efforts, the indexing rate remains low on strategic pages, the problem may run deeper: a partial algorithmic penalty, domain authority issues, or a complex technical architecture that requires a redesign.

Partial indexing can also reveal unfavorable overall quality signals that Google applies to the entire domain. In this case, a holistic approach is necessary.
