Official statement

What you need to understand

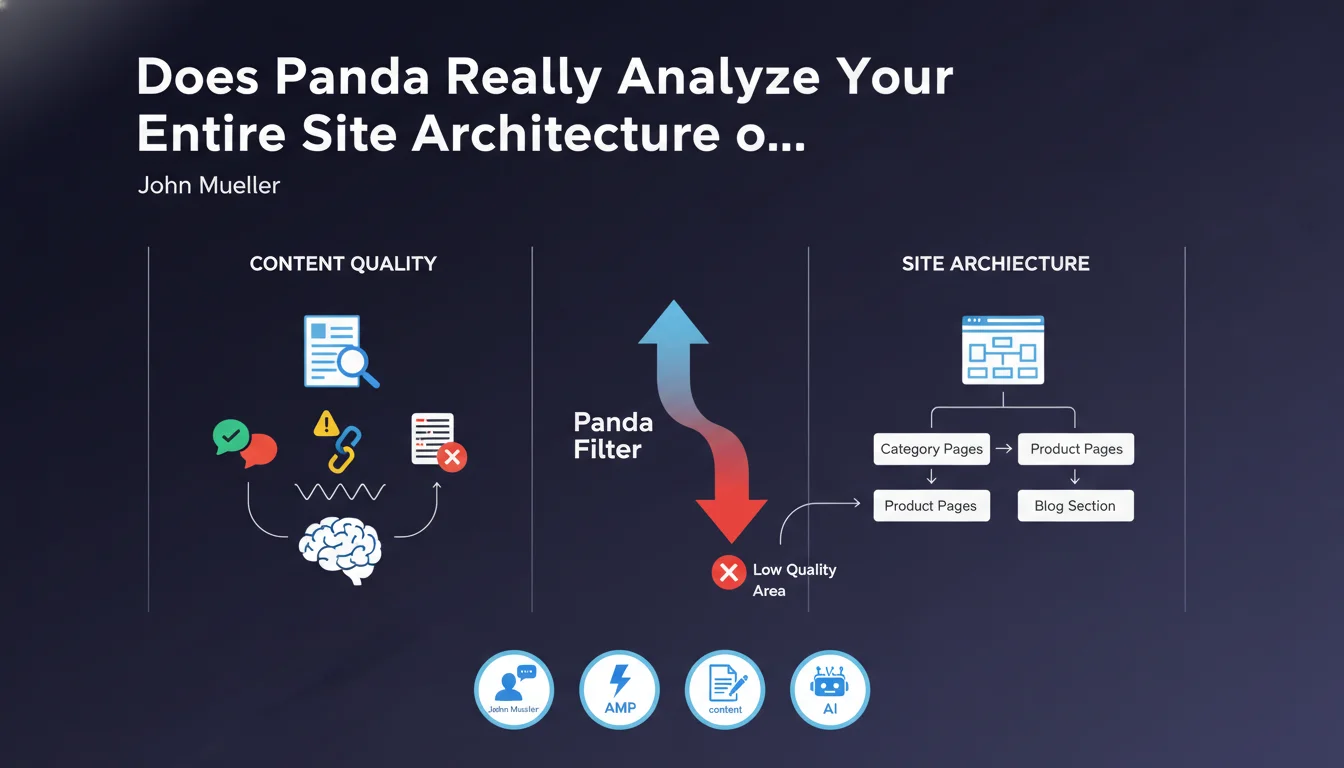

What exactly is the Panda filter and how does it work?

Panda is a Google algorithm launched in February 2011 to demote sites offering low-quality content. Contrary to common belief, it is not limited to analyzing text page by page.

According to this statement from John Mueller, Panda takes a holistic view of the site. It evaluates not only the quality of the text, but also the overall architecture, the organization of content, and the structural coherence of the entire domain.

How can a single section of the site impact the whole?

The most revealing aspect of this statement is the contamination effect. If one area of the site is of mediocre quality, it can drag down the Panda evaluation of the entire domain.

The example given, low-quality category pages, is particularly telling. These often-neglected technical pages can become an algorithmic burden for the whole site, even if the product pages or articles are excellent.

What architectural signals does Panda take into account?

Mueller remains deliberately vague, so what follows is inference rather than confirmed fact. Architecture plausibly includes navigation depth, the coherence of internal linking, the distribution of content, and the relevance of the taxonomy.

- Panda evaluates the site as a whole, not just page by page

- A low-quality section can penalize the entire domain

- Category pages are cited as a typical weak spot

- Site architecture is part of the evaluation criteria

- Quality must be consistent across the entire site

SEO Expert opinion

Is this statement consistent with field observations from SEO professionals?

Yes. The statement matches what experienced SEO practitioners have been observing for years. Numerous sites have seen their traffic drop after mass-producing automated category pages with little unique content.

The concept of a global quality score is particularly logical. Google seeks to evaluate the reliability of a domain as a whole, not just that of isolated pages. A site with 80% excellent content and 20% spam remains a suspect site.

What important nuances should be applied to this information?

Be careful not to over-interpret, however. The fact that one section impacts the whole doesn't mean that a single mediocre page will destroy your rankings. It's probably about proportions and thresholds.

Furthermore, the architecture mentioned by Mueller probably isn't limited to technical aspects (URLs, tree structure), but encompasses editorial coherence and thematic relevance. A finance site that suddenly adds a casino section will send contradictory signals.

In what contexts does this rule apply most strongly?

E-commerce sites and large-scale content sites are the most affected. Their complex architectures, with multiple navigation facets, naturally create risk zones.

Small sites with a few dozen pages are less exposed to this contamination effect. As a rule of thumb, however, any site with more than 500 indexed pages should audit whether quality is consistent across its different sections.

Practical impact and recommendations

How do I audit my site to detect Panda risk zones?

Start with a template-based analysis. Identify all types of pages on your site: product pages, categories, articles, tag pages, author pages, etc. For each template, evaluate the unique content / duplicate content ratio.
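The template-based analysis above can be sketched in a few lines of Python. This is a minimal illustration, not a reproduction of anything Google does: the URL patterns in `TEMPLATE_PATTERNS` are hypothetical, and "unique content" is approximated here as sentences that appear on only one page of the crawl.

```python
import re
from collections import defaultdict

# Hypothetical URL patterns; adapt them to your own site's structure.
TEMPLATE_PATTERNS = [
    ("product", re.compile(r"^/product/")),
    ("category", re.compile(r"^/category/")),
    ("tag", re.compile(r"^/tag/")),
    ("author", re.compile(r"^/author/")),
    ("article", re.compile(r"^/blog/")),
]


def classify_template(path):
    """Map a URL path to a template name using the patterns above."""
    for name, pattern in TEMPLATE_PATTERNS:
        if pattern.search(path):
            return name
    return "other"


def unique_ratio_by_template(pages):
    """pages: dict mapping URL path -> visible text of the page.

    Returns, per template, the share of sentences that appear on
    exactly one page of the whole crawl (a rough uniqueness proxy).
    """
    sentence_pages = defaultdict(set)  # sentence -> pages containing it
    page_sentences = {}
    for path, text in pages.items():
        sentences = {
            s.strip().lower() for s in re.split(r"[.!?]+", text) if s.strip()
        }
        page_sentences[path] = sentences
        for sentence in sentences:
            sentence_pages[sentence].add(path)

    totals = defaultdict(lambda: [0, 0])  # template -> [unique, total]
    for path, sentences in page_sentences.items():
        template = classify_template(path)
        for sentence in sentences:
            totals[template][1] += 1
            if len(sentence_pages[sentence]) == 1:
                totals[template][0] += 1
    return {t: unique / total for t, (unique, total) in totals.items() if total}
```

Templates whose ratio is far below the others are good candidates for a closer manual review; the ratio itself is only a triage signal.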

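Use Search Console to identify sections with abnormally low click-through rates or declining average positions. These signals can indicate a quality problem perceived by Google, although they also have other causes (seasonality, SERP layout changes), so treat them as leads to investigate.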
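As a rough sketch of this section-level check, the snippet below aggregates performance rows by top-level directory and flags sections whose click-through rate falls well below the site-wide figure. The row keys (`page`, `clicks`, `impressions`) and the 0.5 cutoff are assumptions chosen for illustration; map them to whatever your own Search Console export contains.

```python
from collections import defaultdict
from urllib.parse import urlparse


def section_of(url):
    """Return the first path segment of a URL, e.g. '/tag' for '/tag/red'."""
    parts = [p for p in urlparse(url).path.split("/") if p]
    return "/" + parts[0] if parts else "/"


def ctr_by_section(rows):
    """rows: iterable of dicts with 'page', 'clicks', 'impressions' keys."""
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0})
    for row in rows:
        section = section_of(row["page"])
        totals[section]["clicks"] += row["clicks"]
        totals[section]["impressions"] += row["impressions"]
    return {
        section: t["clicks"] / t["impressions"]
        for section, t in totals.items()
        if t["impressions"] > 0
    }


def flag_weak_sections(rows, ratio=0.5):
    """Return sections whose CTR is below `ratio` times the site-wide CTR.

    The 0.5 default is an arbitrary illustration, not a known threshold.
    """
    rows = list(rows)
    impressions = sum(r["impressions"] for r in rows)
    if impressions == 0:
        return []
    site_ctr = sum(r["clicks"] for r in rows) / impressions
    per_section = ctr_by_section(rows)
    return sorted(s for s, ctr in per_section.items() if ctr < ratio * site_ctr)
```

Aggregating clicks and impressions before dividing avoids averaging per-page rates, which would give tiny pages the same weight as high-traffic ones.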

Pay particular attention to automatically generated pages: search filters, facet combinations, pagination pages. These areas often inflate the number of indexed pages without adding real value.
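One way to measure this inflation, sketched under assumptions, is to group crawled URLs by base path and count how many variants come from facet or pagination parameters. The parameter names in `FACET_PARAMS` and `PAGINATION_PARAMS` are hypothetical; replace them with the ones your platform actually generates.

```python
from collections import Counter
from urllib.parse import parse_qs, urlparse

# Hypothetical parameter names; replace with those used on your site.
FACET_PARAMS = {"color", "size", "brand", "sort"}
PAGINATION_PARAMS = {"page"}


def classify_generated_url(url):
    """Return 'facet', 'pagination', or 'regular' based on query parameters."""
    params = set(parse_qs(urlparse(url).query, keep_blank_values=True))
    if params & FACET_PARAMS:
        return "facet"
    if params & PAGINATION_PARAMS:
        return "pagination"
    return "regular"


def inflation_report(urls):
    """Count URL variants per base path, split by kind."""
    report = {}
    for url in urls:
        path = urlparse(url).path
        report.setdefault(path, Counter())[classify_generated_url(url)] += 1
    return report
```

A base path with one regular URL and dozens of facet variants is the kind of section worth reviewing first.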

What concrete actions should be implemented to comply with Panda?

For category pages, systematically enrich them with unique editorial content: detailed descriptions, buying advice, comparisons. Aim for at least 300 words of original and relevant content.

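Don't hesitate to deindex low-value sections at scale. It's better to have 500 excellent indexed pages than 5,000 pages of which 4,000 are mediocre. Use noindex tags or strategic canonicals for pages that should leave the index. Keep in mind that robots.txt blocks crawling, not indexing: a URL blocked there can still appear in results, and Google cannot read a noindex tag on a page it is not allowed to crawl, so avoid combining the two on the same URL.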
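A common pitfall when deindexing is placing a noindex tag on a URL that robots.txt also blocks: the crawler never fetches the page, so it never sees the tag. The sketch below checks for that conflict using only the Python standard library. The function names and the status labels are illustrative choices, and the check covers the robots meta tag only, not the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Detects <meta name="robots" content="... noindex ..."> in a page."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        content = (attributes.get("content") or "").lower()
        if name in ("robots", "googlebot") and "noindex" in content:
            self.noindex = True


def has_noindex(html):
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.noindex


def deindex_status(robots_txt, url, html, user_agent="Googlebot"):
    """Return 'conflict', 'noindex', 'blocked', or 'indexable' for a URL."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    crawlable = rules.can_fetch(user_agent, url)
    noindex = has_noindex(html)
    if noindex and not crawlable:
        return "conflict"  # the noindex tag cannot be read by the crawler
    if noindex:
        return "noindex"
    if not crawlable:
        return "blocked"  # crawling is blocked, but the URL may still be indexed
    return "indexable"
```

Running this over the URLs of a section you intend to remove from the index shows quickly whether the directives contradict each other.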

- Conduct a complete inventory of all page templates on the site

- Evaluate the quality/volume ratio for each section

- Enrich category pages with 300+ words of unique content

- Deindex filter and facet pages without SEO value

- Delete or consolidate redundant tag pages

- Audit pagination pages and implement a coherent strategy

- Verify that each indexed section brings real user value

- Set up monitoring by section in Search Console

- Establish a minimum quality charter for each template

How do you maintain consistent quality over the long term?

Establish quality validation processes before any production release. Each new template, each new section must go through a Panda evaluation grid: content/code ratio, uniqueness, user value, content depth.
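Part of such a grid can be automated before release. The sketch below measures two of the criteria mentioned, content depth (as a word count) and the content/code ratio (visible text length divided by HTML length). The thresholds are assumptions: 300 words echoes the guideline given earlier for category pages, and the 0.1 ratio is an arbitrary placeholder. Neither is a value published by Google, and uniqueness and user value still need separate review.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def evaluate_template(html, min_words=300, min_text_ratio=0.1):
    """Return word count, text/HTML ratio, and a pass/fail flag.

    Both thresholds are illustrative defaults, not known ranking criteria.
    """
    extractor = TextExtractor()
    extractor.feed(html)
    text = " ".join(" ".join(extractor.chunks).split())  # collapse whitespace
    words = len(text.split())
    ratio = len(text) / len(html) if html else 0.0
    return {
        "words": words,
        "text_ratio": ratio,
        "passes": words >= min_words and ratio >= min_text_ratio,
    }
```

Run against one rendered sample of each new template, this gives a quick first filter before the manual part of the review.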

Plan quarterly audits to monitor how the different sections evolve. A living site changes constantly, and high-quality areas can degrade over time or after technical modifications.
