Official statement


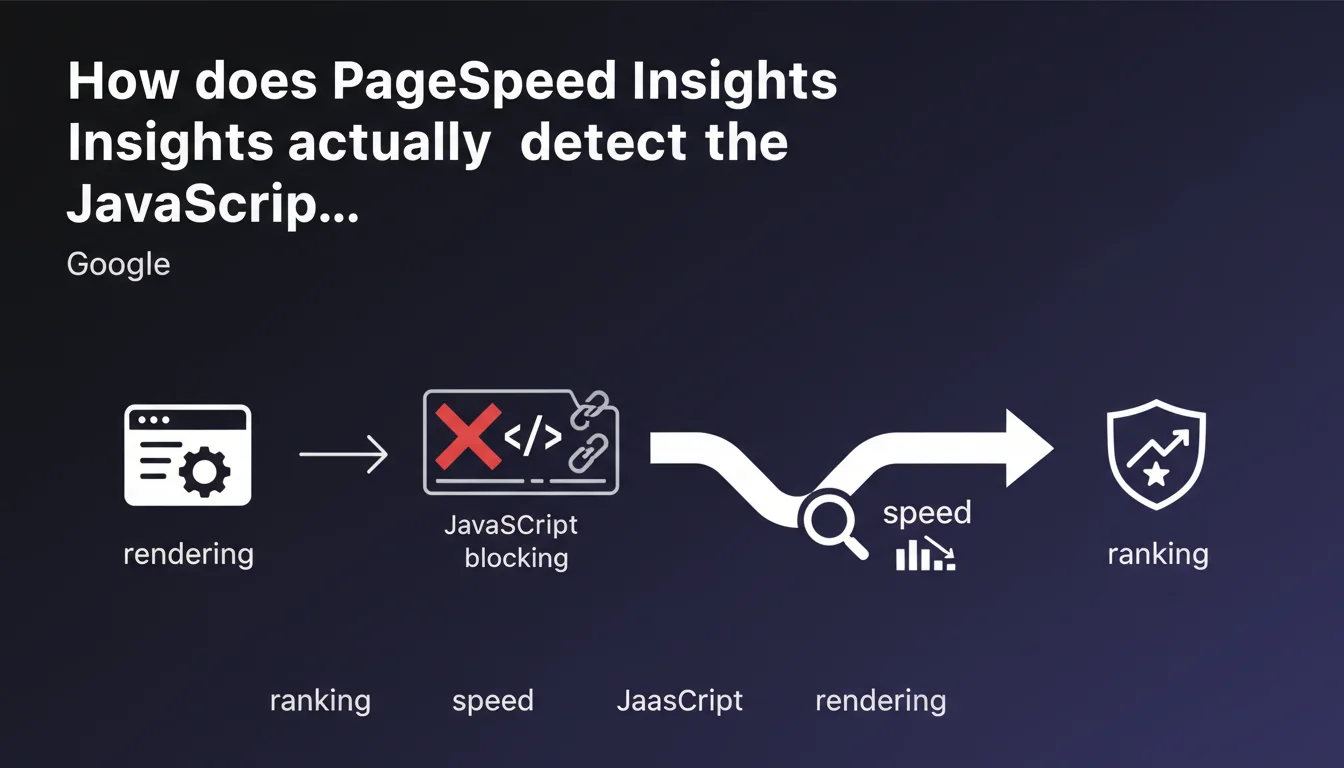

PageSpeed Insights identifies the JavaScript files that block the initial rendering of your pages, a technical factor that affects your Core Web Vitals and, through them, your SEO rankings. Google confirms that these blocking files are a measurable performance bottleneck. That is not new, but it reinforces that JavaScript optimization remains a priority for most websites.

What you need to understand

What exactly is render-blocking JavaScript?

A render-blocking script prevents the browser from displaying visible content until it has been downloaded, parsed, and executed. Concretely, the browser pauses DOM construction as soon as it encounters a <script> tag without the async or defer attribute.
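To make the rule concrete, here is a minimal sketch that scans an HTML string and lists external scripts with neither `async` nor `defer`. The function name `findParserBlockingScripts` is hypothetical, and the regex scan is an assumption made for brevity: it ignores inline scripts and would miss edge cases (such as `>` inside an attribute value) that a real HTML parser handles.

```javascript
// Illustrative sketch only: a regex scan, not a real HTML parser.
function findParserBlockingScripts(html) {
  const blocking = [];
  const openTag = /<script\b([^>]*)>/gi;
  let match;
  while ((match = openTag.exec(html)) !== null) {
    const attrs = match[1];
    const src = /(?:^|\s)src\s*=\s*["']([^"']+)["']/i.exec(attrs);
    if (!src) continue; // inline scripts are out of scope for this sketch

    // Drop quoted values so a path like "/js/async.js" is not mistaken
    // for the async attribute.
    const bare = attrs.replace(/=\s*("[^"]*"|'[^']*')/g, "");
    const isAsync = /(?:^|\s)async\b/i.test(bare);
    const isDefer = /(?:^|\s)defer\b/i.test(bare);
    // Module scripts are deferred by default.
    const isModule = /(?:^|\s)type\s*=\s*["']module["']/i.test(attrs);

    if (!isAsync && !isDefer && !isModule) blocking.push(src[1]);
  }
  return blocking;
}
```

Run against a page's HTML source, this gives a rough first inventory. PageSpeed Insights remains the reference, since it also accounts for where the script sits in the document and how it is loaded.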

This default behavior exists for historical reasons—some scripts manipulate the DOM directly during loading. But today, the vast majority of modern scripts have no reason to block initial display. The problem: too many sites continue loading their JS files synchronously, either through negligence or lack of knowledge.

Why does PageSpeed Insights focus specifically on this issue?

Because render-blocking JavaScript directly delays First Contentful Paint (FCP) and Largest Contentful Paint (LCP). LCP is one of the Core Web Vitals, which Google has used as a ranking signal since 2021; FCP is a closely related loading metric reported alongside them. A single script that delays display by 500 ms can be enough to push your LCP into the red zone.

PageSpeed Insights scans your rendering critical path and precisely identifies which files are causing problems. The tool even gives you an estimate of time saved if you defer these resources—direct, actionable data, not just a general observation.
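The per-file estimates can also be read programmatically. The sketch below assumes a JSON response from the PageSpeed Insights API in which Lighthouse exposes the audit under the ID `render-blocking-resources`, with `url` and `wastedMs` fields on each item. These names match Lighthouse versions that ship this audit and may differ in other releases, so treat them as assumptions to check against your own response. The helper name `listRenderBlockingResources` is hypothetical.

```javascript
// Sketch: extract flagged files and their estimated savings from an
// already-fetched PageSpeed Insights response object.
// Assumption: the audit ID and field names below match your Lighthouse version.
function listRenderBlockingResources(psiResponse) {
  const audit =
    psiResponse?.lighthouseResult?.audits?.["render-blocking-resources"];
  const items = audit?.details?.items ?? [];
  return items
    .map((item) => ({ url: item.url, wastedMs: item.wastedMs ?? 0 }))
    .sort((a, b) => b.wastedMs - a.wastedMs); // largest estimated saving first
}
```

Sorting by estimated savings puts the files most worth deferring at the top. If the audit is missing from the response, the function returns an empty array.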

Is this detection reliable or merely indicative?

The tool relies on Lighthouse, which simulates loading under controlled conditions (simulated 4G connection, throttled CPU). Results are therefore reproducible but don't always reflect what your actual users experience.

A script might be flagged as blocking when it loads in 50 ms over good WiFi—negligible. But on mobile with unstable 3G, that same script might add 2 seconds to FCP. The detection is technically correct, but you should cross-reference with your Chrome UX Report data to prioritize optimizations that have real impact.

- Render-blocking JavaScript = synchronous scripts that delay display of visible content

- Direct impact on FCP and LCP, the latter being a Core Web Vital

- PageSpeed Insights detects these files and estimates potential time savings

- Cross-reference with CrUX to validate real impact on your users

- JS optimization remains a priority lever often neglected

SEO Expert opinion

Is this recommendation consistent with real-world observations?

Yes, absolutely. We systematically observe that sites which defer or fragment their JavaScript gain between 0.5 and 2 seconds on LCP, depending on script weight and quantity. Audits on e-commerce and media sites regularly reveal 10 to 15 render-blocking JS files—often analytics tools, ad tags, or poorly integrated third-party libraries.

The problem: many technical teams still treat defer or async as "advanced" or "risky" attributes. As a result, sites continue loading jQuery, Bootstrap, and 8 other dependencies synchronously, like it's 2010. Google isn't saying anything new here; it's just reminding people of a best practice that too many sites still ignore.

What nuances should be added to this statement?

PageSpeed Insights detects render-blocking scripts, certainly—but it doesn't always distinguish between a critical script (that must execute before initial display) and a superfluous script blocking by mistake. Classic example: a script managing a cookie banner may legitimately block rendering if you must display that banner immediately for legal reasons.

Another point: the tool sometimes recommends inlining critical JavaScript directly in the HTML. This is effective for eliminating an HTTP request, but it bloats the initial document and complicates maintenance. Evaluate this case by case depending on your architecture.

In what cases is this detection insufficient?

PageSpeed Insights analyzes a cold load, without cache. If your JavaScript is properly cached by the browser, the real impact on repeat visits is virtually zero—but the tool will continue flagging the issue. For high-repeat-visit sites (SaaS, media with subscribers), this detection sometimes overestimates the problem's criticality.

Additionally, the tool doesn't measure the impact of long tasks—a 50 KB script executing in 800 ms and blocking the main thread is more harmful than a 200 KB script executing in 100 ms. PageSpeed Insights detects weight and network blocking, but not always CPU load. Complement with Chrome DevTools profiling to identify true bottlenecks.
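The comparison between the two scripts can be put in numbers with the convention behind Total Blocking Time: a main-thread task counts as "long" beyond 50 ms, and only the portion above that threshold counts as blocking. The sketch below applies that rule to a list of task durations. The function name `blockingTimeMs` is hypothetical, and the durations would come from a DevTools profile or a long-task observer in your own setup.

```javascript
// Sketch: blocking time in the style of Total Blocking Time.
// Only the portion of each task above 50 ms counts as blocking.
const LONG_TASK_THRESHOLD_MS = 50;

function blockingTimeMs(taskDurationsMs) {
  return taskDurationsMs.reduce(
    (total, duration) =>
      total + Math.max(0, duration - LONG_TASK_THRESHOLD_MS),
    0
  );
}

// The two scripts from the paragraph above, each as a single task:
blockingTimeMs([800]); // 750 ms of blocking (the 50 KB script)
blockingTimeMs([100]); // 50 ms of blocking (the 200 KB script)
```

By this measure the lighter script is fifteen times more costly for the main thread, which is why file size alone is a poor proxy for impact.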

Practical impact and recommendations

What concrete steps should you take to eliminate render-blocking JavaScript?

First step: run a PageSpeed Insights audit on your critical pages (homepage, product sheets, landing pages). Identify scripts flagged as blocking and verify whether their immediate execution is truly necessary. In 80% of cases, the answer is no.

Next, add the defer attribute to all scripts that don't need to manipulate the DOM during loading. If a script is independent and should execute as early as possible without holding up the download phase, use async. The difference: defer scripts run after HTML parsing completes, in document order; async scripts run as soon as each file is ready, with no order guarantee.
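The ordering difference is easiest to see in a toy model. The sketch below is a deliberate simplification, not how a browser is implemented: it assumes all downloads start at time zero, ignores execution time, and uses a hypothetical function `executionOrder` with invented script names and timings.

```javascript
// Toy model: in what order do scripts run?
// - async: runs as soon as its own download finishes
// - defer: runs after parsing is done, in document order
function executionOrder(scripts, parseDoneMs) {
  const events = [];
  let deferClock = parseDoneMs; // earliest moment the next defer script may run
  scripts.forEach((script, index) => {
    if (script.mode === "async") {
      events.push({ name: script.name, at: script.downloadMs, index });
    } else {
      // A defer script waits for parsing, for its own download,
      // and for every defer script listed before it.
      deferClock = Math.max(deferClock, script.downloadMs);
      events.push({ name: script.name, at: deferClock, index });
    }
  });
  return events
    .sort((a, b) => a.at - b.at || a.index - b.index)
    .map((event) => event.name);
}

// A large library followed by a small plugin that depends on it
// (hypothetical timings):
const asAsync = [
  { name: "library", mode: "async", downloadMs: 300 },
  { name: "plugin", mode: "async", downloadMs: 100 },
];
const asDefer = asAsync.map((script) => ({ ...script, mode: "defer" }));

executionOrder(asAsync, 200); // ["plugin", "library"]: the plugin runs first and breaks
executionOrder(asDefer, 200); // ["library", "plugin"]: document order is preserved
```

With async, the smaller file wins the race and runs before the library it depends on. With defer, document order holds regardless of download speed.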

For critical scripts that absolutely must execute before initial display, consider inlining them directly in the <head> of your HTML—but only if they're under 2-3 KB. Beyond that, the initial document weight negates the gain from avoiding the request.

What mistakes should you absolutely avoid?

Don't blindly defer all your scripts without testing. Some tools (A/B testing, personalization, cookie banners) may require synchronous execution to work properly. Test each modification in a staging environment and verify that all functionality continues working.

Another trap: loading third-party scripts (Google Analytics, Facebook Pixel, Hotjar) synchronously. These tools have no impact on initial display—put them all on async. If your tag manager loads 10 scripts synchronously, you're sabotaging your performance for nothing.

Last pitfall: using async on scripts that depend on each other. If script B uses a function from script A, and you load both asynchronously, execution order becomes unpredictable, which is a source of bugs. In that case, use defer.

How do you verify that the optimization is working?

After deployment, rerun PageSpeed Insights and verify the scripts are no longer flagged as render-blocking. But more importantly, measure real impact on your Core Web Vitals via Search Console (Core Web Vitals report) over a minimum 28-day period.

Supplement with RUM (Real User Monitoring) to track LCP and FCP across your actual users. If you gain 0.5 seconds on LCP but break critical functionality, the outcome is negative. Technical optimization is only worthwhile if it preserves user experience.
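Once RUM data is coming in, a common way to summarize it is the 75th percentile, compared against the published LCP thresholds (good up to 2.5 s, poor above 4 s). The sketch below assumes you already have an array of LCP samples in milliseconds. The function names are hypothetical, and the nearest-rank percentile used here is one simple convention that may not match how CrUX or your RUM vendor aggregates.

```javascript
// Sketch: summarize field LCP samples at the 75th percentile.
// Assumption: nearest-rank percentile; other tools may interpolate.
function percentile75(samplesMs) {
  if (samplesMs.length === 0) {
    throw new Error("need at least one sample");
  }
  const sorted = [...samplesMs].sort((a, b) => a - b);
  const rank = Math.ceil(0.75 * sorted.length); // 1-based nearest rank
  return sorted[rank - 1];
}

// Published LCP thresholds: good <= 2500 ms, poor > 4000 ms.
function rateLcp(lcpMs) {
  if (lcpMs <= 2500) return "good";
  if (lcpMs <= 4000) return "needs improvement";
  return "poor";
}
```

Comparing `rateLcp(percentile75(samples))` before and after deployment, over comparable traffic, gives a simple check on whether the change helped real users.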

- Audit critical pages with PageSpeed Insights

- Identify render-blocking scripts and verify their necessity

- Add defer or async depending on use case

- Inline critical scripts < 3 KB if relevant

- Move all third-party scripts to asynchronous mode

- Test in staging before production deployment

- Measure real impact with CrUX and Search Console

- Monitor Core Web Vitals over a minimum 28-day period

❓ Frequently Asked Questions

Should you defer every JavaScript file without exception?

What is the difference between async and defer?

PageSpeed Insights flags a render-blocking script but my LCP is already good. Should I optimize anyway?

Can you inline all critical scripts to avoid HTTP requests?

Should third-party scripts (analytics, advertising) be render-blocking?

🎥 From the same video 4

Other SEO insights extracted from this same Google Search Central video · published on 19/08/2022
