Official statement

Other statements from this video 4 ▾

- □ Faut-il déployer le markup de politique de retour au niveau organisation plutôt que produit ?

- □ Le balisage des variantes produit change-t-il vraiment la donne en SEO e-commerce ?

- □ Comment tirer parti du suivi des annonces marchands dans Google Images via Search Console ?

- □ La nouvelle documentation produits de Google va-t-elle vraiment simplifier votre vie d'e-commerçant ?

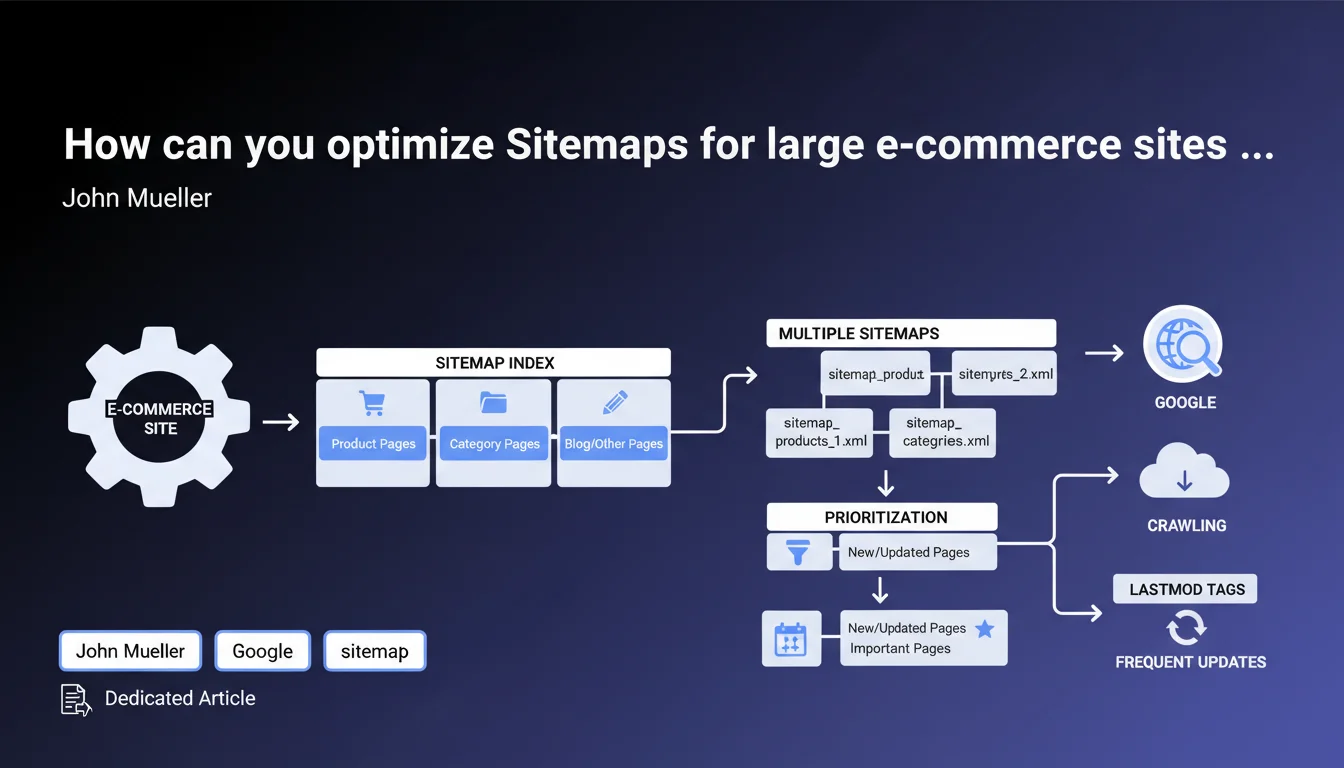

Google has published specific recommendations for structuring Sitemaps on large e-commerce sites. The focus is on strategic organization of submitted URLs and prioritizing crawl resources. These guidelines aim to improve crawl budget efficiency on platforms with thousands of product references.

What you need to understand

Why is Google specifically targeting large e-commerce sites?

Large-scale e-commerce sites present unique crawling challenges. Between seasonal product pages, URL variations from filters, and paginated pages, Googlebot can easily waste time on secondary content.

Google implicitly acknowledges that Sitemap structure directly influences how its bot prioritizes resources. A poorly designed Sitemap dilutes the signal: too many non-strategic URLs drown out the pages that truly matter.

What are the main pillars of these recommendations?

Google's advice focuses on three operational pillars: rigorous URL selection for submission, thematic or content-type segmentation, and dynamic file updates.

The goal? Transform a Sitemap from a simple exhaustive inventory into a crawl management tool. Google suggests excluding low-value URLs: combined filter pages, redundant parametric variants, and obsolete temporary content.

Does this approach differ from generic recommendations?

Yes, and that's the key insight. Unlike standard advice saying "submit all important pages," Google refines the guidance for complex architectures.

The concept of "strategic segmentation" takes on full significance here: one Sitemap for main categories, another for in-stock products, possibly a third for editorial content. This granularity helps Googlebot understand the site's information hierarchy.

- Exclude low-value URLs: combined filters, paginated pages beyond a certain threshold, parametric variants

- Segment Sitemaps: by content type, business priority, update frequency

- Update dynamically: reflect the catalog's actual state (inventory, new items, discontinued products)

- Prioritize quality over completeness: 10,000 strategic URLs are better than 100,000 that dilute the signal
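The exclusion rules in the list above can be sketched as a small URL filter. This is only an illustration: the parameter names (`color`, `size`, `sort`, `page`, and so on), the `shop.example` domain, and the pagination threshold of 3 are assumptions, not values given by Google. Keeping single-filter pages while dropping combined ones is also a choice made for this sketch.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical parameter names; adapt them to the site's own URL scheme.
FILTER_PARAMS = {"color", "size", "brand", "price"}
VARIANT_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "sort"}
MAX_PAGE = 3  # assumed pagination threshold, not a Google figure


def is_low_value(url: str) -> bool:
    """Return True if the URL should be left out of the Sitemap."""
    query = parse_qs(urlsplit(url).query, keep_blank_values=True)
    params = set(query)
    # Parametric variants: tracking, session or sort parameters.
    if params & VARIANT_PARAMS:
        return True
    # Combined filters: two or more filter parameters at once.
    if len(params & FILTER_PARAMS) >= 2:
        return True
    # Deep pagination: page number beyond the threshold.
    page = query.get("page", ["1"])[0]
    if page.isdigit() and int(page) > MAX_PAGE:
        return True
    return False


candidates = [
    "https://shop.example/shoes",
    "https://shop.example/shoes?color=red",
    "https://shop.example/shoes?color=red&size=42",
    "https://shop.example/shoes?page=7",
    "https://shop.example/shoes?sort=price_asc",
]
kept = [u for u in candidates if not is_low_value(u)]
print(kept)
```

Only the first two candidate URLs survive the filter in this example.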

SEO Expert opinion

Is this statement consistent with real-world observations?

Broadly yes, but with a significant caveat: Google provides no specific metrics to guide decisions. At what point is a site considered "large"? What ratio of Sitemap URLs to total URLs is optimal?

Field reports show that some e-commerce sites with 50,000 products are crawled very well with an exhaustive Sitemap, while others with 20,000 references suffer from crawl budget issues. [To verify]: the real impact of Sitemap segmentation likely varies with other factors such as domain authority, technical structure, and information architecture depth.

What risks does over-segmenting Sitemaps pose?

Multiplying Sitemap files without clear logic creates maintenance complexity that can backfire. Without proper automation, files quickly become outdated.

Another rarely mentioned point: an overly fragmented Sitemap Index can paradoxically slow Googlebot processing if HTTP requests multiply to fetch each fragment. The precision gain can be canceled out by increased technical latency.
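To make the fragmentation trade-off concrete, here is a rough count of how many files Googlebot would have to fetch under two layouts. The segment sizes are invented for illustration; the only fixed figure is the 50,000-URL limit per Sitemap file defined by the sitemaps.org protocol.

```python
from math import ceil

MAX_URLS_PER_FILE = 50_000  # per-file URL limit in the sitemaps.org protocol


def files_needed(segment_sizes, urls_per_file=MAX_URLS_PER_FILE):
    """Return the number of Sitemap files required for each segment."""
    if not 0 < urls_per_file <= MAX_URLS_PER_FILE:
        raise ValueError("urls_per_file must be between 1 and 50,000")
    return {
        name: ceil(count / urls_per_file)
        for name, count in segment_sizes.items()
        if count > 0
    }


# Illustrative segment sizes, not taken from any real site.
segments = {"categories": 1_200, "products": 120_000, "editorial": 800}

compact = files_needed(segments)
fragmented = files_needed(segments, urls_per_file=1_000)

# One extra fetch for the Sitemap Index itself.
compact_fetches = 1 + sum(compact.values())
fragmented_fetches = 1 + sum(fragmented.values())
print(compact_fetches, fragmented_fetches)
```

With these numbers the compact layout needs 6 fetches and the 1,000-URL layout needs 124, which is the kind of overhead the paragraph above warns about.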

Does Google remain vague about actual URL prioritization?

Yes, and it's frustrating. The <priority> element has been officially ignored for years, but Google offers no formal alternative for signaling the relative importance of URLs.

In practice, it's the last-modification date (<lastmod>) and the Sitemap structure that carry the signal. But nothing in this statement clarifies whether grouping strategic URLs in a dedicated file actually improves their crawl rate. [To verify] with controlled tests in server logs.
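As a minimal sketch of what this looks like in a file, the snippet below writes `<url>` entries with an accurate `<lastmod>` and no `<priority>`. The URLs and timestamps are placeholders, and `build_urlset` is a helper name chosen for this example.

```python
from datetime import datetime, timezone
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_urlset(pages):
    """Build a urlset from (url, timezone-aware datetime) pairs."""
    ET.register_namespace("", NS)
    urlset = ET.Element(f"{{{NS}}}urlset")
    for loc, modified in pages:
        url = ET.SubElement(urlset, f"{{{NS}}}url")
        ET.SubElement(url, f"{{{NS}}}loc").text = loc
        # W3C datetime in UTC; it should reflect the real last change.
        stamp = modified.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
        ET.SubElement(url, f"{{{NS}}}lastmod").text = stamp
    return ET.tostring(urlset, encoding="unicode")


# Placeholder pages for illustration.
pages = [
    ("https://shop.example/shoes", datetime(2024, 7, 1, 9, 30, tzinfo=timezone.utc)),
    ("https://shop.example/boots", datetime(2024, 6, 12, 14, 0, tzinfo=timezone.utc)),
]
xml_text = build_urlset(pages)
print(xml_text)
```

The value of `<lastmod>` depends on it being truthful: stamping every URL with the generation time on each run would remove the signal it is meant to carry.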

Practical impact and recommendations

What concrete steps should you take to restructure your Sitemaps?

First step: run a comprehensive audit of currently submitted URLs. Export your Sitemaps and cross-reference them with server logs and Google Search Console data. Identify URLs that consume crawl budget without generating impressions or organic traffic.
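The cross-referencing step can be sketched with plain set operations. The sample Sitemap, the crawled URLs, and the URLs with impressions below are made-up stand-ins for a real Sitemap export, a log extract, and a Search Console export.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Extract the set of <loc> values from a urlset document."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS) if loc.text}


def audit(submitted, crawled, with_impressions):
    """Cross-reference Sitemap URLs with crawl and impression data."""
    return {
        # Crawl budget spent on submitted URLs that earn no impressions.
        "crawled_without_impressions": (submitted & crawled) - with_impressions,
        # Submitted URLs Googlebot never requested.
        "never_crawled": submitted - crawled,
        # URLs Googlebot crawls although they are not in the Sitemap.
        "crawled_outside_sitemap": crawled - submitted,
    }


SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://shop.example/shoes</loc></url>
  <url><loc>https://shop.example/shoes?color=red&amp;size=42</loc></url>
  <url><loc>https://shop.example/old-promo</loc></url>
</urlset>"""

submitted = sitemap_urls(SAMPLE)
crawled = {
    "https://shop.example/shoes",
    "https://shop.example/shoes?color=red&size=42",
    "https://shop.example/search?q=boots",
}
with_impressions = {"https://shop.example/shoes"}
report = audit(submitted, crawled, with_impressions)
print(report)
```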

Next, define a clear taxonomy: main categories, actively in-stock product pages, high-value editorial content. Create a Sitemap Index with segmented files following this logic. Automate generation so inventory changes, product launches, and discontinuations are reflected in real time.
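One possible shape for the automated generation is sketched below. It assumes catalog records are dictionaries with hypothetical `type`, `url`, and `in_stock` fields, and that segment files are published as `sitemap-<segment>.xml` on a placeholder domain.

```python
from collections import defaultdict
from datetime import date
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
BASE = "https://shop.example"  # placeholder domain


def segment_catalog(items):
    """Group catalog URLs by content type, skipping out-of-stock products."""
    segments = defaultdict(list)
    for item in items:
        if item["type"] == "product" and not item.get("in_stock", True):
            continue
        segments[item["type"]].append(item["url"])
    return dict(segments)


def build_index(segment_names, generated_on):
    """Build a Sitemap Index pointing at one file per segment."""
    ET.register_namespace("", NS)
    index = ET.Element(f"{{{NS}}}sitemapindex")
    for name in sorted(segment_names):
        entry = ET.SubElement(index, f"{{{NS}}}sitemap")
        ET.SubElement(entry, f"{{{NS}}}loc").text = f"{BASE}/sitemap-{name}.xml"
        ET.SubElement(entry, f"{{{NS}}}lastmod").text = generated_on.isoformat()
    return ET.tostring(index, encoding="unicode")


# Hypothetical catalog records.
catalog = [
    {"type": "category", "url": f"{BASE}/shoes"},
    {"type": "product", "url": f"{BASE}/shoes/runner-1", "in_stock": True},
    {"type": "product", "url": f"{BASE}/shoes/runner-2", "in_stock": False},
    {"type": "editorial", "url": f"{BASE}/guides/choosing-running-shoes"},
]
segments = segment_catalog(catalog)
index_xml = build_index(segments, date(2024, 7, 8))
print(index_xml)
```

Running a job like this on each catalog change, rather than on a fixed schedule, is one way to keep the files in step with inventory.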

What mistakes should you avoid in this overhaul?

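Classic error: removing filter URLs from the Sitemap without also controlling them on the site itself, either with a noindex directive or canonical tag (to keep them out of the index) or with a robots.txt rule (to stop them from being crawled). Note that robots.txt blocks crawling rather than indexing, and a noindex on a URL blocked in robots.txt is never read. Result: Google discovers these URLs through internal links and indexes them anyway, only now without any Sitemap signal, which is worse than the original situation.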
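A quick consistency check is to test the URLs you removed from the Sitemap against your robots.txt rules. The sketch below uses Python's standard `urllib.robotparser` with an invented robots.txt. That parser does plain prefix matching, so wildcard rules would need a Google-compatible parser instead.

```python
from urllib.robotparser import RobotFileParser

# Invented robots.txt for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart
"""


def still_crawlable(urls, robots_txt, agent="Googlebot"):
    """Return the URLs that robots.txt does not block for the given agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if parser.can_fetch(agent, u)]


# URLs removed from the Sitemap during the overhaul (placeholders).
excluded_from_sitemap = [
    "https://shop.example/search?color=red&size=42",
    "https://shop.example/shoes?color=red&size=42",
]
needs_noindex_or_canonical = still_crawlable(excluded_from_sitemap, ROBOTS_TXT)
print(needs_noindex_or_canonical)
```

Any URL that comes back from this check can still be crawled, so it needs a noindex or canonical tag if it should stay out of the index.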

Another trap: creating segmented Sitemaps without coherence to internal architecture. If your navigation highlights categories that don't appear in priority Sitemaps, you're sending contradictory signals to Googlebot.

How do you measure the effectiveness of these adjustments?

Monitor three metrics in Search Console: the coverage rate of submitted URLs, the trend in average daily crawl requests, and the delay between publication and first indexing. Compare before and after over a period of at least 30 days.
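Two of these metrics reduce to simple arithmetic once the data is exported. The figures below are invented purely to show the calculation, and the helper names are chosen for this example.

```python
from datetime import date
from statistics import median


def coverage_rate(submitted, indexed):
    """Share of submitted URLs that are indexed."""
    return indexed / submitted if submitted else 0.0


def median_indexing_delay(pairs):
    """Median number of days between publication and first indexing."""
    return median((indexed - published).days for published, indexed in pairs)


# Invented before/after figures, for illustration only.
before = coverage_rate(submitted=100_000, indexed=62_000)
after = coverage_rate(submitted=40_000, indexed=31_000)

delays = [
    (date(2024, 7, 1), date(2024, 7, 3)),
    (date(2024, 7, 2), date(2024, 7, 7)),
    (date(2024, 7, 4), date(2024, 7, 13)),
]
print(f"coverage: {before:.1%} -> {after:.1%}")
print("median delay (days):", median_indexing_delay(delays))
```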

In parallel, analyze your server logs: the share of Googlebot hits that land on strategic sections should remain stable or increase. If crawl activity shifts to secondary areas, segmentation hasn't achieved its intended effect.
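A minimal sketch of that log analysis follows. It assumes access logs in the common "combined" format and groups hits by first path segment. It matches on the user-agent string only, which can be spoofed, so a production version should also verify the requesting IP (for example with a reverse DNS lookup).

```python
import re
from collections import Counter

# Matches the request, status, size, referer and user-agent of a combined log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def section_of(path):
    """Return the first path segment, e.g. '/products' for '/products/shoe-1'."""
    return "/" + path.split("?")[0].strip("/").split("/")[0]


def googlebot_share(lines):
    """Return each section's share of Googlebot hits."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[section_of(match.group("path"))] += 1
    total = sum(hits.values())
    return {section: count / total for section, count in hits.items()} if total else {}


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# Made-up log lines for illustration.
sample_log = [
    f'66.249.66.1 - - [01/Jul/2024:10:00:00 +0000] "GET /products/shoe-1 HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [01/Jul/2024:10:00:05 +0000] "GET /products/shoe-2 HTTP/1.1" 200 4980 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [01/Jul/2024:10:00:09 +0000] "GET /search?color=red HTTP/1.1" 200 2210 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [01/Jul/2024:10:00:11 +0000] "GET /products/shoe-1 HTTP/1.1" 200 5123 "-" "Mozilla/5.0 Firefox/127.0"',
]
share = googlebot_share(sample_log)
print(share)
```

Comparing this distribution before and after the Sitemap overhaul shows whether crawl activity has moved toward the strategic sections.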

- Audit current Sitemap URLs via GSC and server logs

- Identify and exclude low-value URLs: combined filters, deep pagination, pages for products that have long been out of stock

- Segment Sitemaps by content type and business priority (categories, active products, editorial)

- Automate generation to reflect the catalog's actual state in real time

- Verify consistency with robots.txt and canonical/noindex tags

- Monitor impact for a minimum of 30 days: coverage rate, daily crawl, indexing delay

- Analyze server logs to validate crawl redistribution toward strategic sections

❓ Frequently Asked Questions

Should filter pages really be excluded from Sitemaps?

How many separate Sitemaps should you create for a site with 50,000 products?

Does the <lastmod> tag really influence crawling?

Can canonicalized URLs be submitted in a Sitemap?

Should an out-of-stock product remain in the Sitemap?


Other SEO insights extracted from this same Google Search Central video · published on 07/08/2024
