Official statement

What you need to understand

What does this Google statement about manual indexing actually mean?

Google states that a well-designed site doesn't need manual intervention to get indexed. Googlebot crawls the web on its own, discovers new pages through internal links and XML sitemaps, and returns more often to sites that are updated regularly.

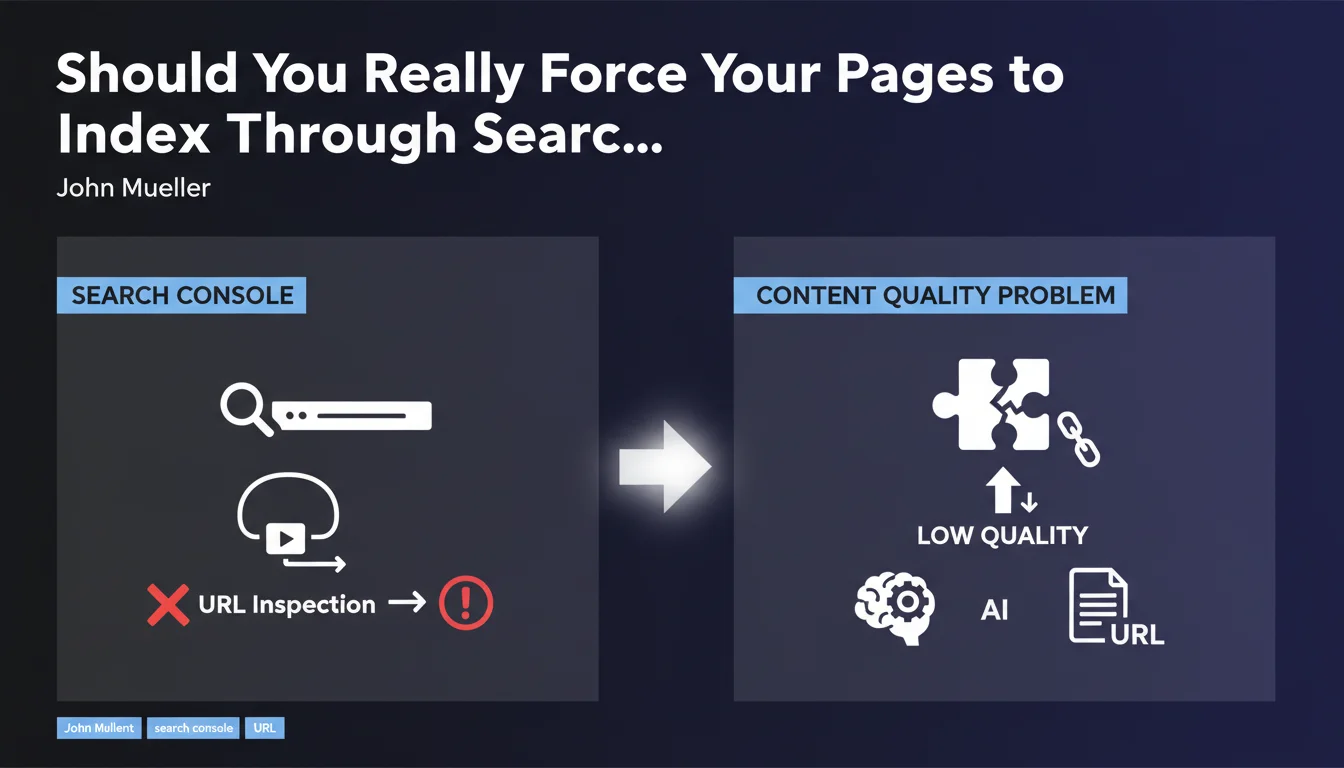

If you constantly have to use the URL Inspection tool in Search Console to request indexing, treat it as a red flag. It usually means Google does not consider your content relevant or high-quality enough to crawl and index it on its own.

Why doesn't Google crawl certain pages automatically?

Several reasons can explain this behavior. Content quality is the most common one: duplicate content, pages with little added value, or pages that are too similar to one another.

Technical issues also play a role: insufficient crawl budget (mostly a concern for large sites), a complex site architecture, errors in robots.txt, or orphan pages that no internal link points to. Google allocates its crawl resources selectively and deprioritizes pages it judges to be of little value.
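To rule out the robots.txt cause, you can test your strategic URLs against your own rules. The sketch below uses Python's standard `urllib.robotparser`; the rules and URLs are hypothetical examples. This parser applies rules in file order and does not support path wildcards or Google's longest-match precedence, so treat the result as a quick sanity check rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    # parse() accepts the file's lines directly, so nothing is fetched over the network
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    # Hypothetical rules in which the second Disallow line blocks a whole product section
    rules = "User-agent: *\nDisallow: /cart/\nDisallow: /products/\n"
    strategic_pages = [
        "https://example.com/products/blue-widget",
        "https://example.com/blog/indexing-guide",
    ]
    for url in blocked_urls(rules, strategic_pages):
        print("Blocked for Googlebot:", url)
```

In practice you would paste in your live robots.txt and the list of pages you most need indexed.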

When is manual indexing actually justified?

There are legitimate use cases for the inspection tool. For a new site or an important page that's just been published, requesting quick indexing is normal.

Similarly, after a major correction or for urgent news, requesting Google's attention is acceptable. But this should remain the exception, not the rule.

- Automatic indexing is the norm for a healthy site

- Systematic use of the inspection tool reveals structural problems

- Content quality takes precedence over technical manipulation

- Google prioritizes crawl efficiency on high-value sites

SEO Expert opinion

Does this statement align with what we observe in the field?

After 15 years of experience, I can confirm that Google's position matches what I observe in practice: sites with quality content and a clean architecture rarely have indexing problems.

However, I've noticed that many SEOs use the inspection tool as a band-aid rather than solving the root causes. This is a strategic mistake that masks structural weaknesses.

What important nuances should we add to this rule?

Reality is more complex than the statement suggests. For large-scale sites (e-commerce, media), even with excellent content, crawl budget can be limited. Some pages may take time to be discovered naturally.

New sites or recent domains also suffer from less frequent crawling. In these cases, using the inspection tool for strategic pages isn't an admission of failure, but a pragmatic tactic.

In what contexts doesn't this rule fully apply?

For news sites or time-sensitive content, indexing speed is critical. Using the tool to accelerate the process for high-value content is perfectly justified.

Complex JavaScript sites or modern web applications may also require special attention. Even with excellent content, rendering issues can slow down natural indexing.

Practical impact and recommendations

How can I diagnose if my site has a structural indexing problem?

Start by analyzing the indexation rate in Search Console. Compare the number of pages submitted via sitemap to the number of pages actually indexed. A significant gap is a red flag.
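Once you have two lists, the URLs in your sitemap and the URLs Google reports as indexed, the comparison is easy to script. In this minimal sketch the indexed set is assumed to come from your own export of the Search Console page indexing report (how you obtain it is left open), and the sample URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard <urlset> sitemaps (sitemaps.org protocol)
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> set[str]:
    """Extract every <loc> value from a <urlset> sitemap."""
    root = ET.fromstring(sitemap_xml)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text}


def indexing_gap(submitted: set[str], indexed: set[str]) -> tuple[float, set[str]]:
    """Return the share of submitted URLs that are indexed, plus the submitted URLs that are not."""
    if not submitted:
        return 0.0, set()
    missing = submitted - indexed
    return (len(submitted) - len(missing)) / len(submitted), missing


if __name__ == "__main__":
    sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <url><loc>https://example.com/</loc></url>
      <url><loc>https://example.com/guide</loc></url>
      <url><loc>https://example.com/tag/misc</loc></url>
      <url><loc>https://example.com/tag/other</loc></url>
    </urlset>"""
    # Hypothetical export of the URLs reported as indexed
    indexed = {"https://example.com/", "https://example.com/guide"}
    rate, missing = indexing_gap(sitemap_urls(sitemap), indexed)
    print(f"Indexed: {rate:.0%}")
    for url in sorted(missing):
        print("Not indexed:", url)
```

The list of missing URLs is usually more useful than the rate itself: patterns such as a whole tag or filter section being absent point directly to the pages worth auditing.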

Then check crawl frequency in the Crawl Stats report. If Google rarely visits your site despite regular updates, that suggests it sees little value in recrawling your content.
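If you have access to your server logs, they offer a complementary view of crawl frequency. A minimal sketch, assuming logs in the common Apache/Nginx combined format. It matches Googlebot by user-agent string only, which can be spoofed, so confirm suspicious traffic with the reverse DNS verification Google documents before drawing conclusions.

```python
import re
from collections import Counter
from datetime import datetime

# Combined log format: captures the day from the timestamp and the user-agent field
LOG_PATTERN = re.compile(
    r'\[(?P<day>\d{2}/\w{3}/\d{4}):[^\]]*\] "[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_per_day(lines) -> Counter:
    """Count requests whose user-agent claims to be Googlebot, grouped by calendar day."""
    hits: Counter = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if match and "Googlebot" in match.group("agent"):
            day = datetime.strptime(match.group("day"), "%d/%b/%Y").date()
            hits[day] += 1
    return hits


if __name__ == "__main__":
    # Hypothetical sample line; in practice pass an open log file instead
    sample = [
        '66.249.66.1 - - [10/Mar/2024:06:25:14 +0000] "GET /blog/post HTTP/1.1" 200 5123 "-" '
        '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    ]
    for day, count in sorted(googlebot_hits_per_day(sample).items()):
        print(day.isoformat(), count)
```

Because the function accepts any iterable of lines, `with open("access.log", encoding="utf-8") as log: googlebot_hits_per_day(log)` works without loading the whole file into memory.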

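Examine the excluded pages and the reasons given for them. "Crawled - currently not indexed" usually signals a perceived quality issue. "Discovered - currently not indexed" can mean the same thing, but it can also mean Google postponed the crawl, for example to avoid overloading your server.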
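To see where those exclusions concentrate, you can tally them by site section. This sketch assumes you have exported the affected URLs to a CSV with hypothetical `url` and `reason` columns; adapt the column names to whatever your export actually contains.

```python
import csv
import io
from collections import Counter
from urllib.parse import urlparse


def section_of(url: str) -> str:
    """Return the first path segment of a URL, e.g. '/tags' for https://example.com/tags/red."""
    segments = [part for part in urlparse(url).path.split("/") if part]
    return "/" + segments[0] if segments else "/"


def exclusions_by_section(csv_text: str) -> Counter:
    """Count (section, reason) pairs from a CSV with 'url' and 'reason' columns."""
    counts: Counter = Counter()
    for row in csv.DictReader(io.StringIO(csv_text)):
        counts[(section_of(row["url"]), row["reason"])] += 1
    return counts


if __name__ == "__main__":
    export = (
        "url,reason\n"
        "https://example.com/tags/red,Crawled - currently not indexed\n"
        "https://example.com/tags/blue,Crawled - currently not indexed\n"
        "https://example.com/products/x,Discovered - currently not indexed\n"
    )
    for (section, reason), count in exclusions_by_section(export).most_common():
        print(f"{count:>4}  {section:<12} {reason}")
```

A section dominated by "Crawled" exclusions points toward content work, while a cluster of "Discovered" exclusions is worth cross-checking against crawl stats and server capacity.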

What concrete actions should I implement to resolve these problems?

First focus on content quality. Audit your pages to identify weak, duplicate, or poorly differentiated content. Consolidate or remove low-value pages.

Optimize your internal linking architecture. Make sure no strategic page is orphaned and that important pages are reachable within three clicks of the homepage.
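Both conditions, no orphans and a three-click ceiling, can be checked with a breadth-first search from the homepage over your internal link graph. A minimal sketch, assuming you already have that graph (for example from a crawler export) as a mapping from each page to the pages it links to, plus a full list of known URLs such as your sitemap; the paths are hypothetical.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search: minimum number of clicks from home to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS order is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_linking(links: dict[str, list[str]], home: str, all_pages: list[str], max_depth: int = 3):
    """Return (orphan pages, pages deeper than max_depth), both sorted."""
    depths = click_depths(links, home)
    orphans = sorted(set(all_pages) - set(depths))
    too_deep = sorted(page for page, depth in depths.items() if depth > max_depth)
    return orphans, too_deep


if __name__ == "__main__":
    links = {"/": ["/a", "/b"], "/a": ["/c"], "/c": ["/d"], "/d": ["/e"]}
    known = ["/", "/a", "/b", "/c", "/d", "/e", "/orphan"]
    orphans, too_deep = audit_linking(links, "/", known)
    print("Orphans:", orphans)
    print("Deeper than 3 clicks:", too_deep)
```

Here `/orphan` is listed in the sitemap but never linked, and `/e` sits four clicks deep: both are candidates for new internal links from higher-level pages.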

Improve your XML sitemap by listing only canonical and quality pages. A sitemap polluted with valueless pages dilutes the signal sent to Google.
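One way to enforce that rule is to generate the sitemap from crawl data instead of maintaining it by hand. This sketch assumes a hypothetical `Page` record holding each URL's HTTP status, declared canonical, and noindex flag; it treats a page without a canonical tag as self-canonical, which is a simplification.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from typing import Optional

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


@dataclass
class Page:
    """Hypothetical crawl record for one URL."""
    url: str
    status: int                # HTTP status returned when the page was crawled
    canonical: Optional[str]   # href of rel="canonical", or None if absent
    noindex: bool              # True if the page carries a noindex directive


def is_sitemap_worthy(page: Page) -> bool:
    """Keep only indexable pages that are their own canonical."""
    self_canonical = page.canonical is None or page.canonical == page.url
    return page.status == 200 and self_canonical and not page.noindex


def build_sitemap(pages: list[Page]) -> str:
    """Serialize the qualifying pages as a <urlset> sitemap string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if is_sitemap_worthy(page):
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = page.url
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    crawl = [
        Page("https://example.com/guide", 200, "https://example.com/guide", False),
        Page("https://example.com/guide?ref=nav", 200, "https://example.com/guide", False),
        Page("https://example.com/old", 404, None, False),
        Page("https://example.com/cart", 200, None, True),
    ]
    print(build_sitemap(crawl))
```

Only the first page survives: the parameter variant defers to its canonical, the 404 has nothing to index, and the cart page asks not to be indexed.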

- Audit existing content and delete or improve weak pages

- Verify that each page brings unique and non-duplicated value

- Optimize internal linking to facilitate page discovery

- Clean up the XML sitemap to keep only priority URLs

- Regularly monitor coverage reports in Search Console

- Increase the publication frequency of quality content

- Improve loading speed and Core Web Vitals

- Fix technical errors (robots.txt, canonical tags, redirects)
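For the canonical-tag item, a quick per-page check can surface conflicting signals before Google has to resolve them. This sketch uses Python's built-in `html.parser` on HTML you have already fetched. It compares canonical URLs as exact strings, so relative hrefs would need resolving first (for example with `urllib.parse.urljoin`), and a canonical pointing elsewhere is flagged for review rather than treated as an error, since it is often intentional.

```python
from html.parser import HTMLParser


class IndexingSignals(HTMLParser):
    """Collects rel="canonical" hrefs and any robots/googlebot noindex directive."""

    def __init__(self):
        super().__init__()
        self.canonicals: list[str] = []
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names; valueless attributes arrive as None
        attributes = {name: (value or "") for name, value in attrs}
        if tag == "link" and "canonical" in attributes.get("rel", "").lower().split():
            self.canonicals.append(attributes.get("href", ""))
        if tag == "meta" and attributes.get("name", "").lower() in ("robots", "googlebot"):
            directives = [d.strip().lower() for d in attributes.get("content", "").split(",")]
            if "noindex" in directives:
                self.noindex = True


def check_page(url: str, html: str) -> list[str]:
    """Return human-readable issues worth reviewing for one page."""
    parser = IndexingSignals()
    parser.feed(html)
    parser.close()
    problems = []
    if len(parser.canonicals) > 1:
        problems.append("multiple canonical tags")
    elif parser.canonicals and parser.canonicals[0] != url:
        problems.append(f"canonical points elsewhere: {parser.canonicals[0]}")
    if parser.noindex:
        problems.append("noindex directive")
    return problems


if __name__ == "__main__":
    page = ('<head><link rel="canonical" href="https://example.com/a">'
            '<meta name="robots" content="noindex, follow"></head>')
    print(check_page("https://example.com/a?sort=price", page))
```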

What strategy should I adopt to avoid depending on manual indexing?

Establish a consistent editorial calendar with regular, quality content. Google favors sites that demonstrate sustained activity and consistent expertise.

Implement a systematic internal linking strategy so that each new page is immediately connected to the rest of the site. Use semantic siloing to strengthen thematic relevance.

Invest in the continuous improvement of your site rather than in one-off tactics. Natural indexing is the consequence of a healthy ecosystem, not technical manipulation.
