Official statement

What you need to understand

What's the difference between Google's global assessment and granular analysis?

Google uses machine learning algorithms to evaluate a site's quality as a whole, rather than analyzing each page or query individually. This global approach means that machine learning determines an overall domain reputation, not targeted penalties on specific content.

Unlike traditional algorithmic filters, which can target a single page or section, machine learning operates at the level of the entire site. It analyzes multiple signals to establish an overall trust score, which then influences rankings.

Why does Google favor a non-granular approach?

This strategy allows Google to evaluate a domain's reliability and authority by cross-referencing thousands of signals. A site recognized as generally high-quality will benefit from better overall visibility, even if some content performs less well.

Machine learning detects quality or manipulation patterns that are difficult to identify through traditional rules. This holistic view discourages overly technical optimizations in favor of genuine editorial quality.

What does the absence of major new algorithms in development mean?

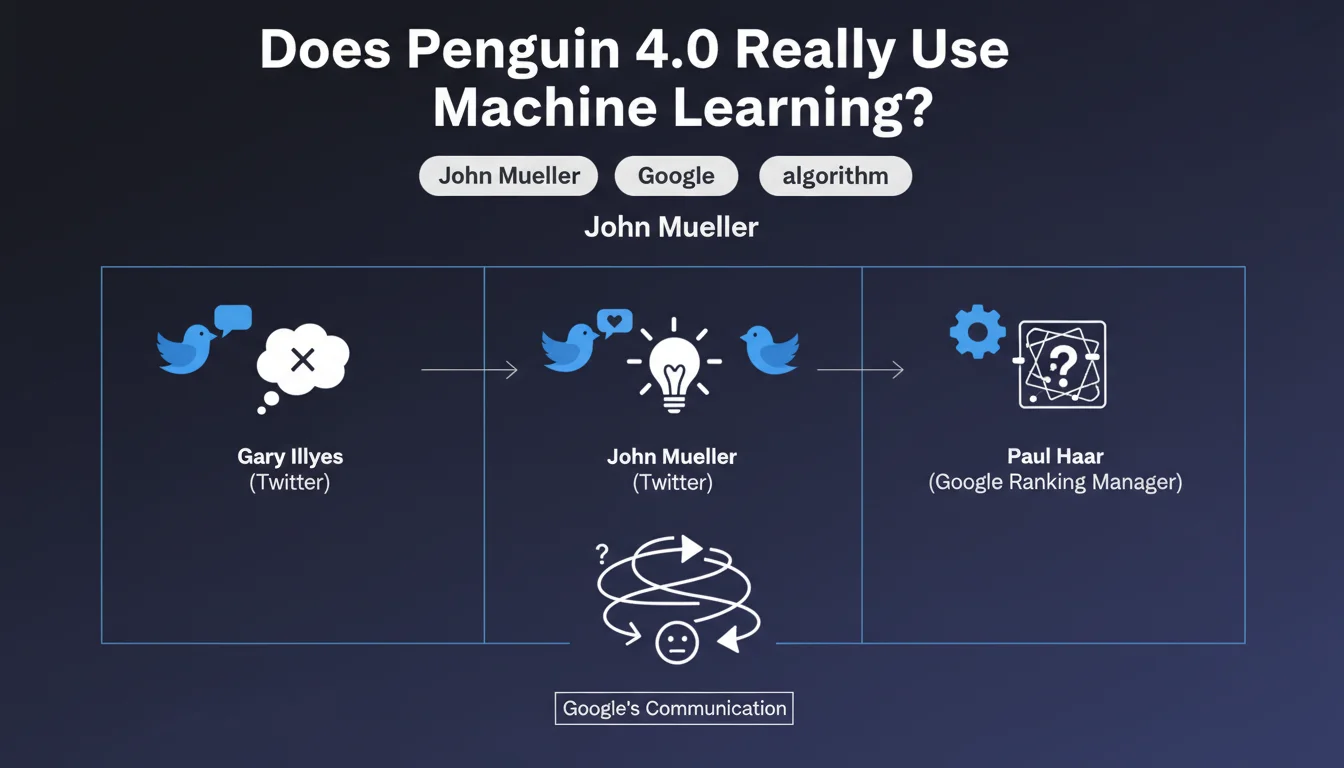

Gary Illyes' statement indicates that Google is consolidating its existing systems rather than building major new algorithms. Efforts are focused on improving and refining technologies that are already deployed.

- Machine learning assesses overall site quality, not isolated pages

- These machine learning algorithms do not apply targeted penalties to specific content

- The approach is holistic and non-granular

- Google improves existing systems rather than creating new ones

- Domain reputation becomes a determining factor

SEO Expert opinion

Does this statement align with what SEO professionals observe in practice?

Observations across many sites are consistent with this approach. We regularly see entire domains receive a global boost, rather than individual pages. Sites with established authority rank their new content more easily, even when that content is mediocre.

However, certain updates, such as Core Updates, sometimes seem to target specific categories or content types. The boundary between global assessment and sector-specific impacts remains blurred in practice.

What important nuances should be added to this statement?

While machine learning judges overall quality, other traditional algorithmic filters remain very granular. Penalties for duplicate content, spam, or link manipulation still function at the page or section level.

The claim that no major new algorithms are in development dates back several years. Since then, systems such as the Helpful Content Update (launched in 2022) have emerged, showing that Google continues to innovate, even if the pace has slowed.

When does this global approach become problematic?

For multilingual or multi-topic sites, one poor-quality section can negatively impact the entire domain. A neglected corporate blog can thus penalize well-optimized commercial pages.

New sites, or those undergoing a complete redesign, must wait for Google to reassess their overall reputation, which can take several months. This inertia becomes frustrating when local improvements do not quickly translate into gains.

Practical impact and recommendations

What should you do concretely to optimize your site for machine learning?

Prioritize a quality approach across the entire domain rather than isolated technical optimizations. Regularly audit all site sections, including low-traffic ones that could harm your overall reputation.
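One way to make a section-by-section audit concrete is to group a page inventory by top-level section and look at the share of thin pages in each. The sketch below is only an illustration: the `THIN_WORD_COUNT` threshold, the `(url, word_count)` inventory format, and the helper names are assumptions made for this example, and word count is a crude stand-in for quality, not a signal Google is known to use.

```python
from collections import defaultdict
from urllib.parse import urlsplit

# Assumption: pages below this word count are flagged as "thin".
# The value is arbitrary and should be tuned per site.
THIN_WORD_COUNT = 300


def section_of(url: str) -> str:
    """Return the first path segment of a URL, or "/" for the home page."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    return "/" + segments[0] if segments else "/"


def thin_share_by_section(pages):
    """Map each site section to the fraction of its pages flagged as thin.

    `pages` is an iterable of (url, word_count) pairs, for example
    taken from a crawl export (hypothetical format).
    """
    totals = defaultdict(int)
    thin = defaultdict(int)
    for url, word_count in pages:
        section = section_of(url)
        totals[section] += 1
        if word_count < THIN_WORD_COUNT:
            thin[section] += 1
    return {section: thin[section] / totals[section] for section in totals}


# Hypothetical inventory for illustration only.
inventory = [
    ("https://example.com/", 400),
    ("https://example.com/blog/post-a", 120),
    ("https://example.com/blog/post-b", 90),
    ("https://example.com/blog/post-c", 800),
    ("https://example.com/products/widget", 650),
]

# List sections from worst to best so neglected areas surface first.
for section, share in sorted(thin_share_by_section(inventory).items(),
                             key=lambda item: item[1], reverse=True):
    print(f"{section}: {share:.0%} thin pages")
```

Sorting from worst to best puts low-traffic sections such as a neglected blog at the top of the report, which is where the audit should start.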

Invest in editorial consistency and demonstrated expertise in your topics. Google seeks reliability signals that transcend page-by-page optimization: identified authors, cited sources, regular updates.

Eliminate or improve weak or outdated content that degrades the overall perception of the site. A site with 100 excellent pages will likely be evaluated more favorably than a site with 1,000 pages, 70% of which are mediocre.
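The arithmetic behind that comparison is simple but worth spelling out. The following sketch uses the page counts from the example above; the `weak_share` helper is hypothetical and only computes a ratio. It does not model how Google actually scores a domain.

```python
def weak_share(total_pages: int, weak_pages: int) -> float:
    """Return the fraction of a site's pages that are considered weak."""
    if total_pages <= 0:
        raise ValueError("total_pages must be positive")
    if not 0 <= weak_pages <= total_pages:
        raise ValueError("weak_pages must be between 0 and total_pages")
    return weak_pages / total_pages


# Site A: 100 pages, all excellent.
site_a = weak_share(total_pages=100, weak_pages=0)

# Site B: 1,000 pages, 70% of which are mediocre (700 pages).
site_b = weak_share(total_pages=1000, weak_pages=700)

print(f"Site A weak share: {site_a:.0%}")
print(f"Site B weak share: {site_b:.0%}")

# Site B has three times as many good pages (300 vs 100), yet most of
# what a crawler encounters on it is mediocre.
good_pages_b = 1000 - 700
print(f"Site B good pages: {good_pages_b}")
```

The point of the comparison is that the larger site has more good pages in absolute terms, yet a much worse ratio, and a site-wide assessment would plausibly be sensitive to that ratio.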

What major mistakes must you absolutely avoid?

Don't neglect any section of your site thinking it's invisible. An abandoned blog, empty product pages, or mediocre automated content will impact your entire domain.

Avoid technical over-optimization strategies at the expense of real quality. Machine learning detects artificial patterns: mass-created pages, generic content, excessive internal linking.

- Audit the quality of all pages in the domain, not just priority ones

- Remove or improve weak or outdated content

- Develop consistent signals of expertise and authority across the site

- Maintain editorial consistency and regular updates

- Invest in quality over quantity of content

- Monitor visibility changes around Core Updates as a proxy for domain reputation

- Clearly structure the site's areas of expertise

- Avoid neglected sections that degrade the overall image

How can you measure and improve your site's overall perception?

Track changes in your visibility during Core Updates that reflect overall quality reassessments. Tools like Search Console help identify traffic trends at the domain level.
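A before/after comparison around an update date can be sketched as follows. This is a minimal illustration, assuming you already have daily click totals for the whole domain (for instance from a performance export) loaded into a dictionary keyed by date. The `visibility_change` function, the 14-day window, and the sample figures are all assumptions made for this example.

```python
from datetime import date, timedelta
from statistics import mean


def visibility_change(daily_clicks, update_day, window_days=14):
    """Relative change in average daily clicks after `update_day`.

    `daily_clicks` maps datetime.date -> clicks for the whole domain.
    The update day itself is excluded from both windows.
    Returns e.g. -0.2 for a 20% drop.
    """
    window = timedelta(days=window_days)
    before = [clicks for day, clicks in daily_clicks.items()
              if update_day - window <= day < update_day]
    after = [clicks for day, clicks in daily_clicks.items()
             if update_day < day <= update_day + window]
    if not before or not after:
        raise ValueError("not enough data around the update date")
    baseline = mean(before)
    if baseline == 0:
        raise ValueError("baseline is zero; relative change is undefined")
    return (mean(after) - baseline) / baseline


# Hypothetical data: 100 clicks/day before the update, 80 clicks/day after.
update_day = date(2024, 3, 5)  # placeholder date, not a claim about a real update
daily_clicks = {
    update_day + timedelta(days=offset): (100 if offset < 0 else 80)
    for offset in range(-14, 15)
}

change = visibility_change(daily_clicks, update_day)
print(f"Change in average daily clicks: {change:+.0%}")
```

Because updates often roll out over several days, and seasonality can mimic a shift, a single window comparison like this is a starting point for investigation rather than proof of a quality reassessment.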

Analyze engagement metrics across the entire site: bounce rate, time on site, pages per session. These behavioral signals likely feed machine learning algorithms.
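For reference, the three metrics can be computed from raw session records as in the sketch below. The `Session` structure and the sample values are hypothetical, and a bounce is defined here as a single-page session, which is an assumption: analytics tools define bounce rate in different ways.

```python
from dataclasses import dataclass


@dataclass
class Session:
    pages_viewed: int
    duration_seconds: float


def engagement_summary(sessions):
    """Compute site-wide bounce rate, average time, and pages per session."""
    if not sessions:
        raise ValueError("at least one session is required")
    count = len(sessions)
    # Assumption: a bounce is a session with exactly one page view.
    bounces = sum(1 for s in sessions if s.pages_viewed == 1)
    return {
        "bounce_rate": bounces / count,
        "avg_time_seconds": sum(s.duration_seconds for s in sessions) / count,
        "pages_per_session": sum(s.pages_viewed for s in sessions) / count,
    }


# Hypothetical sessions for illustration only.
sessions = [
    Session(pages_viewed=1, duration_seconds=10),
    Session(pages_viewed=3, duration_seconds=120),
    Session(pages_viewed=4, duration_seconds=170),
    Session(pages_viewed=1, duration_seconds=20),
]

summary = engagement_summary(sessions)
print(f"Bounce rate: {summary['bounce_rate']:.0%}")
print(f"Average time: {summary['avg_time_seconds']:.0f} s")
print(f"Pages per session: {summary['pages_per_session']:.2f}")
```

Computing these figures over all sessions, rather than per landing page, matches the site-wide perspective discussed here.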
