Official statement

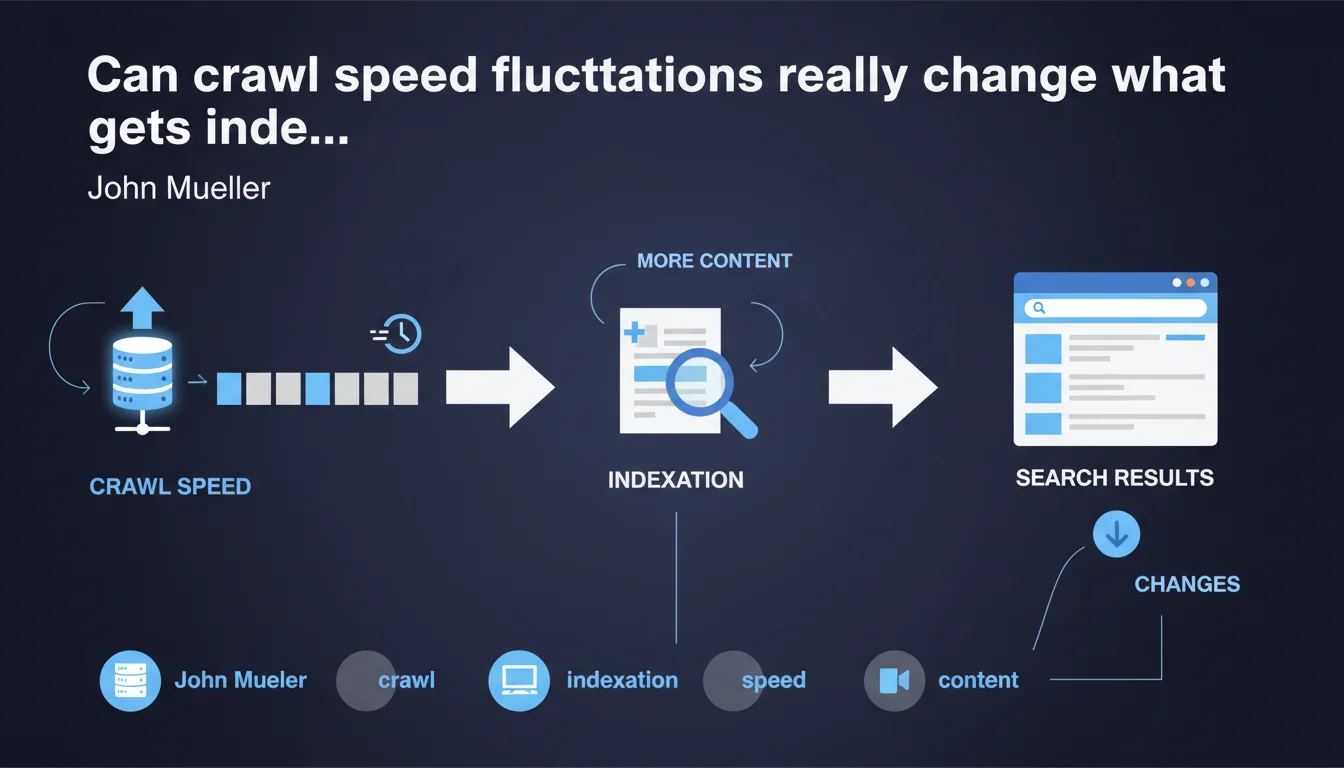

Mueller confirms that a variation in crawl speed at a Google datacenter can change which content gets indexed, and therefore what appears in search results. In practice, faster crawling can expose more pages to indexing, so content may appear in or disappear from the SERPs without any change on your end.

What you need to understand

What does this actually mean for my site's indexation?

Mueller highlights a phenomenon that's often overlooked: the speed at which Google crawls your site is not constant. It depends on many factors, including the location and availability of Google's datacenters.

When a datacenter crawls slightly faster than usual, it can discover and index content it wouldn't have had time to reach under normal conditions. Conversely, a slowdown can temporarily make pages "disappear" from the index.

Why does crawl speed vary from one datacenter to another?

Google uses a globally distributed infrastructure. Each datacenter has its own technical constraints: server load, network latency, resource allocation priorities. These variations are normal and completely outside your control.

Result: your site can be crawled differently depending on which datacenter handles it at any given time. It's not a question of your site's quality, but of Google's infrastructure.

Which pages are most exposed to these fluctuations?

Pages buried deep in your site structure, pages that receive few internal or external links, and recently added pages that have not yet built up popularity are the first to be affected. If the allocated crawl budget shrinks or crawling slows down, Googlebot may simply not reach them.

- A crawl speed variation can modify indexed content without any changes on your side

- Google datacenters have variable performance depending on their load and location

- Deep or unpopular pages are most vulnerable to fluctuations

- These variations are normal and independent of your site's technical quality

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, and it explains a lot of erratic behavior we see regularly. How many times have you seen a page disappear from the index and then reappear a few days later, without touching anything? That's exactly the mechanism Mueller is describing here.

The problem is that this statement remains extremely vague about the actual scale of these variations. Are we talking about a 5%, 20%, or 50% difference in crawl speed? There is no way to know, and that is precisely what complicates diagnosis. [To verify]: the typical magnitude of these variations and how often they occur.

Can we really talk about "changes in search results"?

Let's be honest: if a page disappears from the index because it wasn't crawled, it does disappear from the SERPs. But Mueller doesn't say these fluctuations affect the ranking of pages that remain indexed.

The distinction is important. We're talking here about presence/absence in the index, not ranking position changes for stable pages. If your traffic fluctuates without apparent reason, check indexation first before looking for ranking problems.

In what cases doesn't this explanation hold?

If strategic pages (homepage, main categories, flagship articles) regularly disappear from the index, crawl speed is not the explanation. It is a sign that your crawl budget is insufficient or poorly distributed, or that you have more serious technical issues.

Practical impact and recommendations

How do you distinguish normal fluctuation from a real indexation problem?

First step: monitor the trend, not isolated incidents. A page that disappears for 24-48 hours then returns is probably a crawl variation. A page missing for a week or more is something else.
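The rule of thumb above can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, the input is assumed to be one indexed/not-indexed observation per day that you recorded yourself, and the thresholds (2 days, 7 days) simply mirror the figures quoted in this article rather than anything Google has published.

```python
from typing import List


def longest_absence(daily_indexed: List[bool]) -> int:
    """Return the longest run of consecutive days the page was not indexed."""
    longest = current = 0
    for indexed in daily_indexed:
        # Reset the streak when the page is indexed, otherwise extend it.
        current = 0 if indexed else current + 1
        longest = max(longest, current)
    return longest


def classify_absence(daily_indexed: List[bool]) -> str:
    """Label a page's history using the article's 24-48 hour / one week heuristic."""
    days = longest_absence(daily_indexed)
    if days == 0:
        return "stable"
    if days <= 2:
        return "probable crawl variation"
    if days >= 7:
        return "investigate"
    return "keep watching"
```

Anything between three and six days falls into a grey zone that the article does not cover, which is why the sketch returns a neutral "keep watching" label there.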

Use Search Console to track pages "Discovered – currently not indexed". If this bucket suddenly grows without modifications on your end, you might be facing a crawl slowdown. But if important pages stay there permanently, look elsewhere.
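One possible way to spot a "sudden" growth in that bucket is to compare the latest count against the median of earlier readings. The sketch below assumes you have noted the "Discovered – currently not indexed" count at regular intervals, however you collect it; the function name and the 1.5x ratio are arbitrary assumptions to tune for your site, not a Google threshold.

```python
from statistics import median
from typing import List


def bucket_spike(counts: List[int], ratio: float = 1.5) -> bool:
    """True when the latest count exceeds `ratio` times the median of earlier counts."""
    if len(counts) < 2:
        return False  # not enough history to compare against
    baseline = median(counts[:-1])
    if baseline == 0:
        return counts[-1] > 0
    return counts[-1] > ratio * baseline
```

For example, `bucket_spike([100, 110, 105, 240])` flags the last reading, while `bucket_spike([100, 110, 105, 120])` does not. A flagged reading is only a prompt to look closer, not proof of a crawl slowdown.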

What can you do to minimize the impact of these variations?

You can't control Google's crawl speed, but you do control how your crawl budget is used. Optimize your internal linking so that important pages sit close to the homepage in your hierarchy. Remove or consolidate low-value content that wastes crawl budget.
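To check how shallow your important pages really are, you can compute click depth with a breadth-first search over your internal link graph. The sketch below is a minimal illustration: the `site` dictionary is a made-up example, and in practice you would build it from your own crawl export. The cut-off of three clicks is an assumption, not a rule from Google.

```python
from collections import deque
from typing import Dict, List


def click_depths(links: Dict[str, List[str]], start: str) -> Dict[str, int]:
    """Breadth-first search: minimum number of clicks from `start` to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/category", "/about"],
    "/category": ["/category/page-2"],
    "/category/page-2": ["/product-x"],
}
depths = click_depths(site, "/")
# Pages three or more clicks from the homepage (threshold is an assumption).
deep_pages = sorted(page for page, depth in depths.items() if depth >= 3)
```

Pages that never show up in `depths` are not reachable through internal links at all, which is worth checking separately.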

Also improve your server response time. If Googlebot takes 500ms to load each page instead of 100ms, it will crawl fewer pages in the same timeframe, all else being equal. That gives you direct leverage over your effective crawl rate.
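The arithmetic behind the 500ms versus 100ms comparison can be made explicit with a deliberately simplified model: a fixed time window in which pages are fetched one after another. How Googlebot actually schedules and parallelizes requests is not public, so the function below, including its hypothetical `parallel_fetches` parameter, is an assumption for illustration only.

```python
def pages_per_window(window_seconds: int, response_ms: int, parallel_fetches: int = 1) -> int:
    """Pages fetched in a fixed window, assuming each fetch takes `response_ms` milliseconds."""
    window_ms = window_seconds * 1000
    # Integer division: only fully completed fetches are counted.
    return (window_ms // response_ms) * parallel_fetches


fast = pages_per_window(60, 100)  # 100 ms per page
slow = pages_per_window(60, 500)  # 500 ms per page
```

Under this model a one-minute window covers 600 pages at 100ms and 120 pages at 500ms, a five-fold difference. Real crawl behavior will not follow the model exactly, but the direction of the effect is the same.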

When should you really worry?

If your strategic pages constantly fluctuate in the index, or if you notice a lasting decrease in the number of indexed pages without obvious technical explanation, that's when to act. Don't let these signals linger.

- Monitor indexed page count changes over several weeks, not day-to-day

- Identify "Discovered – currently not indexed" pages in Search Console and how long they've been in this status

- Optimize internal linking to reduce the depth of important pages

- Eliminate low-value content that unnecessarily consumes crawl budget

- Improve server response time to maximize the number of pages crawled per session

- Document observed fluctuations with dates and scale to distinguish noise from signal
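The first item in this checklist, watching the trend over several weeks rather than day to day, can be approximated by averaging daily indexed-page counts per week and checking whether the weekly averages keep falling. This is a rough sketch under stated assumptions: the daily counts are values you logged yourself, the function names are hypothetical, and "three consecutive falling weeks" is an arbitrary definition of a lasting decrease.

```python
from statistics import mean
from typing import List


def weekly_means(daily_counts: List[int]) -> List[float]:
    """Average the daily counts over each complete 7-day block (leftover days are ignored)."""
    full_weeks = len(daily_counts) // 7
    return [mean(daily_counts[i * 7:(i + 1) * 7]) for i in range(full_weeks)]


def lasting_decline(daily_counts: List[int], weeks: int = 3) -> bool:
    """True when the last `weeks` weekly averages are strictly decreasing."""
    recent = weekly_means(daily_counts)[-weeks:]
    if len(recent) < weeks:
        return False  # not enough history yet
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))
```

Averaging per week smooths out the daily noise described earlier, so a page count that wobbles around the same level will not trigger the check, while a steady week-over-week drop will.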

❓ Frequently Asked Questions

Can a page drop out of the index solely because of a slowdown in crawl speed?

How long can an indexing fluctuation caused by crawl speed last?

Can you force Google to crawl faster in order to stabilize indexing?

Do these variations also affect the ranking of pages that remain indexed?

How can I tell whether my site is affected by these crawl speed variations?

🎥 From the same video 6

Other SEO insights extracted from this same Google Search Central video · published on 26/05/2022
