Official statement

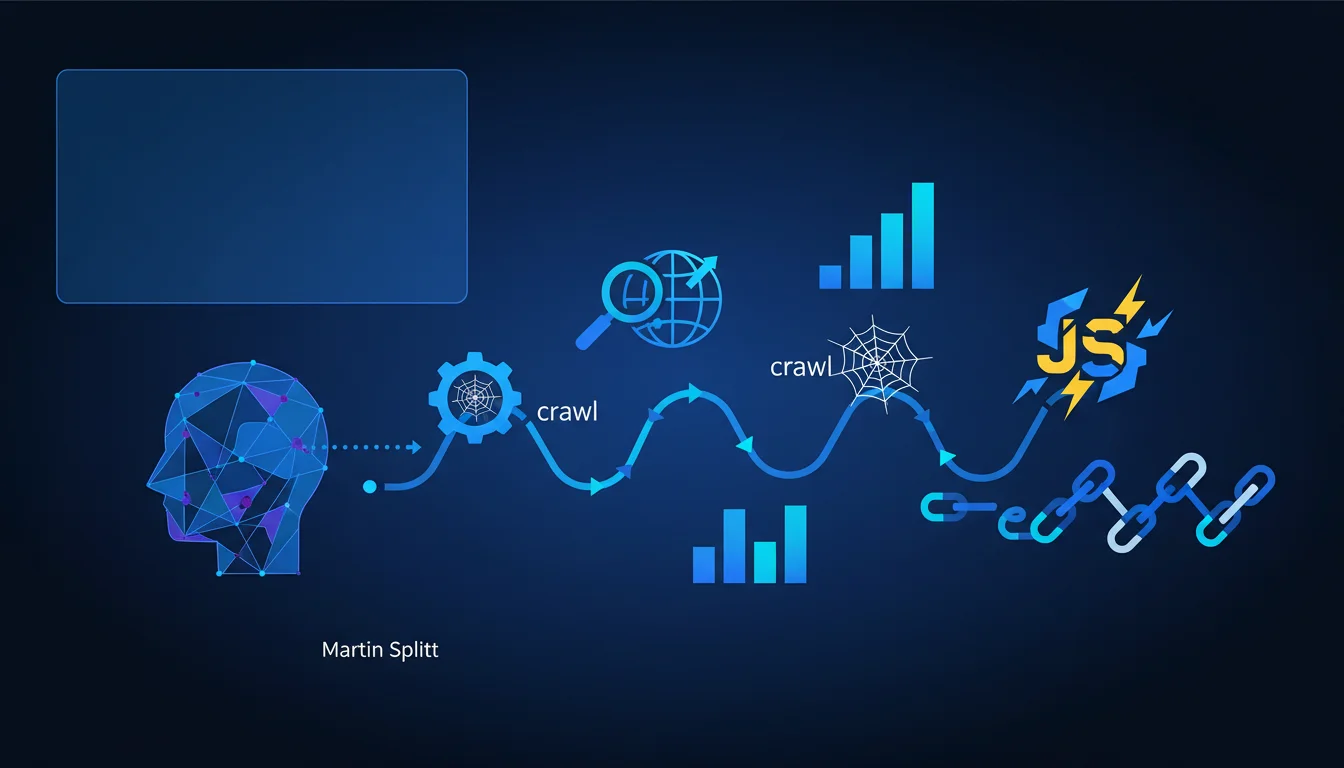

Google states that the use of JavaScript and its technical structure (bundling, splitting) are not direct ranking factors. However, these choices affect user experience and crawl efficiency, two elements that indirectly influence positioning. The nuance is crucial: a poorly implemented JS site can lose visibility even though JavaScript is not officially a "ranking factor."

What you need to understand

What does "not a direct ranking factor" really mean?

When Martin Splitt asserts that JavaScript is not a ranking factor, he is making a precise technical distinction. Google's algorithm does not have a signal called "JavaScript score" that boosts or penalizes your position directly.

The way you organize your code — whether you use code splitting, lazy loading, or a monolithic bundle — does not trigger any bonus or penalty in ranking. This is a fundamental difference from confirmed signals like Core Web Vitals or backlinks.

Why is this statement confusing?

The trap is that JavaScript heavily influences factors that are confirmed as ranking criteria. A site that loads 2 MB of poorly optimized JavaScript will delay both First Contentful Paint and Largest Contentful Paint, and LCP is measured as part of the Core Web Vitals.

If your main content is rendered client-side only and Googlebot has to wait 8 seconds to execute it, you have an issue with crawl budget and indexing. It’s not directly a "JavaScript factor" problem, but the result is the same: reduced visibility.

What is Google’s actual stance on JavaScript rendering?

Google has been crawling and indexing JavaScript for years now, but with documented limitations. The second wave of indexing — the queue where Googlebot renders JS pages — can introduce delays of several days, even weeks, for low-priority sites.

Splitt's statement does not change this technical reality. It simply clarifies that the use of JavaScript itself is not penalizing, provided the implementation is compatible with crawling and indexing. This is a huge nuance.

- JavaScript is not a ranking signal — no direct algorithmic bonus or penalty

- The user experience generated by JS impacts Core Web Vitals, which are confirmed factors

- Crawling and indexing can be slowed down or compromised by poor JS architecture

- Bundling and code splitting are neutral technical choices for ranking, but critical for perceived performance

- Google recommends SSR or hydration for sites with critical SEO concerns, although it is not mandatory

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. On paper, claiming that JavaScript is not a direct ranking factor is technically accurate. But this wording masks a more complex reality: poorly implemented full-client-side JS sites consistently find themselves disadvantaged in the SERPs.

I have observed dozens of migrations from SPAs (Single Page Applications) to Server Side Rendering that generated traffic gains of 30 to 60% in just a few weeks. If JS really were not an issue, these gains would be inexplicable. [To be confirmed]: Is Google downplaying the real impact to encourage the adoption of modern frameworks?

What nuances should be considered in this claim?

The distinction between direct factor and indirect impact is a semantic twist. For an SEO practitioner, what matters is the result in the SERPs — not Google’s internal taxonomy. A site that loses 40% of traffic because its content isn’t crawled correctly doesn’t care whether it's a "direct factor" or not.

Second nuance: Splitt refers to bundling and splitting as neutral, but he fails to mention that these choices determine the browser’s parsing and execution time. A 500 KB bundle delays the Time to Interactive and keeps the main thread busy, which degrades the interactivity metric in Core Web Vitals. The causal chain is indirect, but the effect is measurable.

In what situations does this rule not fully apply?

For news sites, e-commerce with thousands of product pages, or marketplaces, the reality is less forgiving. The crawl budget is not infinite — and if Googlebot has to render 10,000 JS pages, you will encounter indexing problems that classic HTML competitors will not face.

Sites with dynamically generated content (filters, facets, recommendations) must be particularly cautious. If this content exists only client-side and requires user interactions to appear, Googlebot will likely never see it — regardless of whether JavaScript is a "ranking factor" or not.

Practical impact and recommendations

What concrete steps should be taken to optimize a JavaScript site?

First priority: ensure that your critical content is accessible right from the initial HTML, without waiting for JavaScript execution. Use Server Side Rendering (SSR) or static pre-generation for strategic pages — landing pages, product sheets, blog articles.
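As a minimal sketch of static pre-generation, the snippet below builds a product page whose critical content is already present in the HTML string before any script runs. The `Product` fields, the `render_product_page` helper, and the `/static/app.js` path are hypothetical names chosen for illustration; a real site would more likely rely on its framework's SSR or static export.

```python
from dataclasses import dataclass
from html import escape


@dataclass
class Product:
    name: str
    description: str
    price: str


def render_product_page(product: Product) -> str:
    """Return complete HTML with the critical content inlined at build time."""
    return (
        "<!doctype html>\n"
        "<html><head>"
        f"<title>{escape(product.name)}</title>"
        "</head><body>"
        f"<h1>{escape(product.name)}</h1>"
        f"<p>{escape(product.description)}</p>"
        f"<p>{escape(product.price)}</p>"
        # JavaScript only enhances the page; it is not needed to read it.
        '<script src="/static/app.js" defer></script>'
        "</body></html>"
    )


page = render_product_page(Product("Trail Shoe", "Light & grippy.", "89 EUR"))
```

Because the heading and description are part of the initial response, a crawler can read them without executing the deferred script.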

Second action: audit your rendering time with Search Console and Lighthouse. If the First Contentful Paint exceeds 2 seconds or the Time to Interactive reaches 5 seconds, you have a performance problem that is likely to surface in your Core Web Vitals as well, and thus to affect your ranking indirectly.
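The thresholds above can be checked against a Lighthouse JSON report with a few lines of Python. This is a sketch under assumptions: it expects the report to expose `audits["first-contentful-paint"]` and `audits["interactive"]` with a `numericValue` in milliseconds, and it silently skips any audit that is absent (recent Lighthouse versions may not include Time to Interactive). The `slow_metrics` helper and the inline sample are illustrative, not real measurements.

```python
import json

# Thresholds taken from the paragraph above, in milliseconds.
THRESHOLDS_MS = {
    "first-contentful-paint": 2000,
    "interactive": 5000,
}


def slow_metrics(report: dict) -> dict[str, float]:
    """Return the audits whose numericValue is at or above its threshold."""
    audits = report.get("audits", {})
    flagged = {}
    for audit_id, limit in THRESHOLDS_MS.items():
        value = audits.get(audit_id, {}).get("numericValue")
        if value is not None and value >= limit:
            flagged[audit_id] = value
    return flagged


# Inline sample standing in for a report saved as JSON by Lighthouse.
sample_report = json.loads(
    '{"audits": {"first-contentful-paint": {"numericValue": 2600.0},'
    ' "interactive": {"numericValue": 4100.0}}}'
)
flagged = slow_metrics(sample_report)
```

In this made-up sample only First Contentful Paint is flagged, since 4.1 seconds of Time to Interactive stays under the 5-second limit.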

What common mistakes should absolutely be avoided?

Never block crawling of JavaScript and CSS files in robots.txt — a still common mistake. Google needs access to these resources to correctly render your pages. Without access, you force Googlebot to index a degraded version of your site.
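A quick way to spot this mistake is to test your script and stylesheet URLs against the robots.txt rules. The sketch below uses Python's standard `urllib.robotparser`; note that this parser uses simple prefix matching and is not guaranteed to behave exactly like Googlebot's own parser, so treat the result as a first-pass check. The `blocked_resources` helper, the sample rules, and the example.com URLs are illustrative assumptions.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(
    robots_txt: str, resource_urls: list[str], user_agent: str = "Googlebot"
) -> list[str]:
    """Return the URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(user_agent, url)]


# Sample rules reproducing the mistake described above.
sample_robots = """\
User-agent: *
Disallow: /assets/js/
"""

blocked = blocked_resources(
    sample_robots,
    [
        "https://example.com/assets/js/app.js",
        "https://example.com/assets/css/site.css",
    ],
)
```

Any URL returned by `blocked_resources` is a resource that the crawler may be unable to fetch when rendering the page.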

Avoid full client-side frameworks (React, Vue, Angular in pure SPA mode) for sites with critical SEO concerns, unless you implement SSR or hydration. Solutions like Next.js, Nuxt.js, or Gatsby exist specifically to bridge this gap.

How can you check if your JavaScript implementation is SEO-friendly?

Use the URL inspection tool in Search Console and compare the "crawled" version with the "rendered" version. If you notice major differences in visible content, it's a warning sign. The rendering should be identical or nearly identical.
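The comparison described above can be roughly automated once you have both versions as HTML strings, for example the raw server response and the rendered HTML copied from the inspection tool. The following is a minimal sketch using only the standard library; `TextExtractor`, `visible_words`, and `missing_from_raw` are hypothetical helper names, and a word-level set difference is a deliberately crude measure.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text, ignoring <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.words: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.words.extend(data.split())


def visible_words(html: str) -> set[str]:
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return {word.lower() for word in parser.words}


def missing_from_raw(raw_html: str, rendered_html: str) -> set[str]:
    """Words visible after rendering that the initial HTML does not contain."""
    return visible_words(rendered_html) - visible_words(raw_html)


raw = '<div id="root"></div><script>renderApp()</script>'
rendered = '<div id="root"><h1>Trail Shoe</h1><p>In stock</p></div>'
missing = missing_from_raw(raw, rendered)
```

A large `missing` set means most of the visible content depends on JavaScript execution, which is the warning sign mentioned above.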

Also, crawl the site with a tool like Screaming Frog twice, once with JavaScript rendering enabled and once with it disabled. If entire sections of your site disappear without JS, you have a structural problem. Check server logs to identify the pages that Googlebot actually renders. Some may remain stuck in the second wave of indexing indefinitely.
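For the log check, one rough approach is to count how often Googlebot requests pages versus the JS and CSS files needed to render them. The sketch below assumes access logs in the common "combined" format and identifies Googlebot by user-agent string only, which can be spoofed; the sample lines, IP addresses, and the `googlebot_fetches` helper are made up for illustration. Resource fetches are only a hint that rendering took place, not proof.

```python
import re
from collections import Counter

# Matches the request part of a combined-format log line, e.g. "GET /x HTTP/1.1".
REQUEST_RE = re.compile(r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+"')


def googlebot_fetches(log_lines: list[str]) -> Counter:
    """Count Googlebot requests, split into pages and JS/CSS resources."""
    counts: Counter = Counter()
    for line in log_lines:
        if "Googlebot" not in line:
            continue
        match = REQUEST_RE.search(line)
        if not match:
            continue
        path = match.group("path").split("?", 1)[0]  # drop the query string
        kind = "resource" if path.endswith((".js", ".css")) else "page"
        counts[kind] += 1
    return counts


sample_log = [
    '66.249.66.1 - - [08/Dec/2020:10:00:00 +0000] "GET /products/trail-shoe HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [08/Dec/2020:10:00:04 +0000] "GET /static/app.js?v=3 HTTP/1.1" 200 90210 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [08/Dec/2020:10:00:09 +0000] "GET /static/app.js HTTP/1.1" 200 90210 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]
counts = googlebot_fetches(sample_log)
```

Pages that Googlebot requests without ever fetching the associated scripts are candidates for a closer look in the URL inspection tool.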

- Implement SSR or pre-generation for strategic pages

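- Measure and optimize Core Web Vitals (LCP, CLS, and the interactivity metric: FID at the time of this statement, INP since March 2024), alongside lab metrics such as FCP and TBT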

- Check accessibility of JS/CSS files in robots.txt

- Compare crawled and rendered versions in Search Console

- Audit rendering time with Lighthouse and PageSpeed Insights

- Test the site with JavaScript disabled to identify critical dependencies

❓ Frequently Asked Questions

Does Google really index all content generated with JavaScript?

Is Server Side Rendering mandatory to rank with a JavaScript site?

Are frameworks like React or Vue penalizing for SEO?

How can I tell whether my JavaScript site is correctly crawled by Google?

Does code splitting improve search rankings?

🎥 From the same video

Other SEO insights extracted from this same Google Search Central video · duration 33 min · published on 08/12/2020
