Official statement

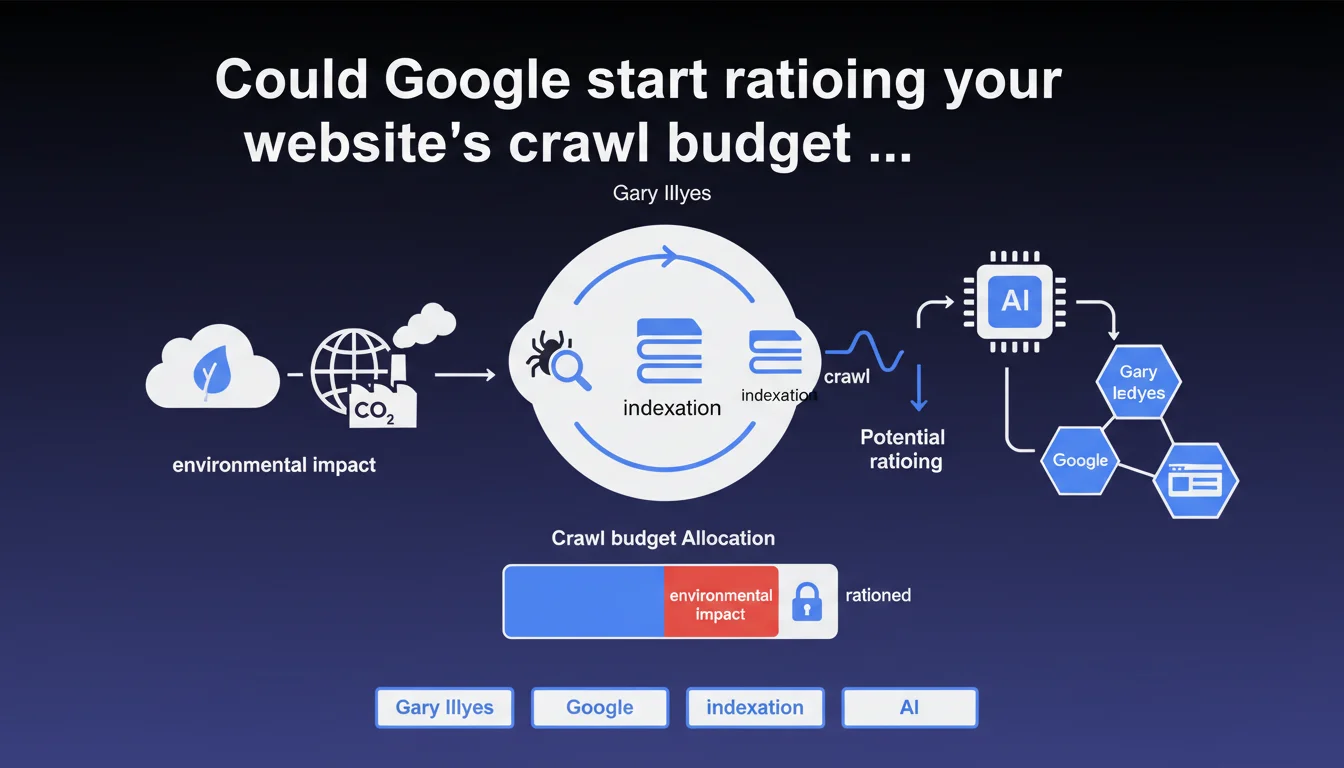

Gary Illyes says that Google is starting to factor environmental sustainability into its crawl and indexation processes. In practical terms, your crawl budget could become more constrained in the future, making technical SEO optimization even more critical for getting your strategic pages indexed.

What you need to understand

Why is Google suddenly talking about the environmental impact of crawling?

Crawling and indexation consume massive amounts of resources. Googlebot visits billions of pages daily, requiring servers, energy, and bandwidth. In a context where tech giants face mounting pressure about their carbon footprint, Google is starting to optimize its infrastructure through the lens of sustainability.

Illyes' statement is not inconsequential. It suggests that Google might revise its crawl priorities based on energy efficiency rather than content relevance alone. For poorly optimized sites, this could translate into fewer Googlebot visits.

What does this actually change about crawl budget?

The crawl budget, the implicit allocation of resources Google grants to each site, could become even more restrictive. If Google prioritizes sustainability, technically inefficient sites risk being crawled less: those with slow response times, chained redirects, or pointless pages that waste resources.

To put it plainly, a site that forces Googlebot to crawl 10,000 pages when 8,000 are duplicate content or parameter-based URLs with zero value becomes an environmental problem in Google's eyes. Crawl optimization is no longer just an SEO performance question — it's also an energy efficiency matter.

Is this announcement just PR spin or a genuine policy shift?

Hard to say with certainty. Google has always been vague about the exact crawl budget criteria. This statement could serve to justify future restrictions while giving Google a responsible image.

However, several signals suggest that Google has been reducing crawl on certain sites in recent months. SEOs report unexplained drops in crawl frequency, even on well-optimized sites. This new "green" direction could be the official justification for an evolution already underway.

- Google is integrating sustainability into its crawl and indexation criteria

- The crawl budget could become more restrictive for inefficient sites

- Sites with duplicate content, unnecessary redirects, or slow response times are on the front lines

- This announcement could justify future restrictions on crawl volume allocation

- Technical optimization is becoming an environmental imperative according to Google's logic

SEO Expert opinion

Does this statement align with what we're actually seeing in the field?

Yes and no. Multiple clients have indeed noticed crawl reductions in recent months with no major changes on their end, so from what we observe, Google is crawling less. But attributing this solely to environmental considerations? [Needs verification]

It's more likely that Google is optimizing its infrastructure costs — with ecology serving as a publicly acceptable narrative. Reducing crawl = fewer servers = lower expenses. The environment is a compelling argument, but the true motivation is probably financial and operational.

Which sites are at the highest risk of being impacted?

Large e-commerce sites with millions of dynamically generated URLs are on the front line. If your product catalog creates 500,000 unblocked faceted URLs, Google will have to make choices. Sites with poorly designed architecture will suffer most.

Media sites with poorly optimized archives, UGC platforms with massively indexed low-quality content, multilingual sites with duplicate content across languages: all these profiles consume a lot of crawl for minimal value. Google could decide to drastically limit how much of them it crawls.

Should we take this announcement literally?

Let's be honest: Google rarely communicates transparently about its algorithms. This statement is vague enough to commit to nothing concrete. Illyes says "Google is thinking about" — not "Google will modify."

However, ignoring this signal would be a mistake. Even if the environmental argument is storytelling, the direction is crystal clear: Google wants to crawl less and smarter. Whether the motivation is ecological, financial, or technical matters less. The result for SEOs is the same: optimize your crawl or face the consequences.

Practical impact and recommendations

What should you do concretely to adapt?

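First priority: audit your crawl. Analyze your server logs to identify pages being crawled unnecessarily. Is Googlebot visiting thousands of pagination URLs, filters, and session variants? If so, block them via robots.txt. Keep in mind that a noindex tag keeps a page out of the index but does not save crawl budget on its own, because Googlebot still has to fetch the page to see the tag.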
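As a rough illustration of that log audit, the Python sketch below counts Googlebot requests in a combined-format access log and separates clean URLs from URLs carrying a query string. The log format, the sample lines, and the `googlebot_hits` helper are assumptions made for this example. A real audit should also confirm Googlebot by reverse DNS, since the user-agent string can be spoofed.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Extracts the request path and user agent from a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"


def googlebot_hits(lines):
    """Count Googlebot requests, split into clean and parameterized URLs."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue  # unparseable line or another client
        has_query = bool(urlsplit(match.group("path")).query)
        counts["parameterized" if has_query else "clean"] += 1
    return counts


# Hypothetical sample lines (documentation IP range, made-up paths).
sample_log = [
    f'192.0.2.10 - - [26/Jul/2022:10:00:00 +0000] "GET /shoes?color=red&sessionid=abc HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [26/Jul/2022:10:00:02 +0000] "GET /shoes?page=57 HTTP/1.1" 200 4980 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [26/Jul/2022:10:00:04 +0000] "GET /shoes HTTP/1.1" 200 6100 "-" "{GOOGLEBOT_UA}"',
    '198.51.100.7 - - [26/Jul/2022:10:00:05 +0000] "GET /shoes?color=blue HTTP/1.1" 200 5100 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

hits = googlebot_hits(sample_log)
print(hits["parameterized"], "parameterized vs", hits["clean"], "clean Googlebot hits")
```

A high share of parameterized hits is only a starting point: some query strings, such as legitimate pagination, may still deserve crawling.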

Next, focus on server response speed. A site that responds slowly consumes more resources on Google's side. Optimize your TTFB, enable compression, use a CDN if needed. The faster your site responds, the more pages Google can crawl with the same budget.

What critical mistakes must you avoid?

Don't let Google crawl pages with no SEO value. Internal search results pages, infinite facets, URLs with tracking parameters — all of this wastes crawl budget for nothing.
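Before deploying robots.txt rules for such pages, it can help to test them against a few representative URLs. The sketch below uses Python's standard `urllib.robotparser`. The rules and the example.com URLs are hypothetical. Note that this parser only does simple prefix matching, so it does not reproduce Google's handling of `*` and `$` wildcards; only prefix rules are used here.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules blocking internal search and cart pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url, agent="Googlebot"):
    """Return True if the parsed rules allow `agent` to fetch `url`."""
    return parser.can_fetch(agent, url)


for url in (
    "https://www.example.com/products/blue-sneakers",
    "https://www.example.com/search?q=sneakers",
    "https://www.example.com/cart",
):
    print(url, "->", "crawlable" if is_crawlable(url) else "blocked")
```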

Also avoid chained redirects. Each redirect consumes an additional request. If Google has to go through 3 redirects to reach the final page, it makes 4 requests for a single URL, and 3 of those 4 are wasted.
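To make the cost of a chain concrete, here is a minimal sketch that walks a redirect map and counts the requests involved, treating each redirect response as one request plus one final page fetch. The `redirect_chain` helper and the paths are hypothetical; in practice the map would come from a crawler export or your server configuration.

```python
def redirect_chain(start, redirects, max_hops=10):
    """Follow a {source: target} redirect map; return every URL requested."""
    chain = [start]
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        if target in chain:  # redirect loop: stop instead of spinning
            break
        chain.append(target)
    return chain


# Hypothetical chain of three redirects before the final page.
redirects = {"/old": "/old-2", "/old-2": "/old-3", "/old-3": "/final"}

chain = redirect_chain("/old", redirects)
hops = len(chain) - 1            # requests that only returned a redirect
wasted_share = hops / len(chain)  # share of requests that fetched no content

print(chain)         # ['/old', '/old-2', '/old-3', '/final']
print(hops)          # 3
print(wasted_share)  # 0.75
```

Pointing `/old` straight at `/final` brings the chain down to one redirect and two requests.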

How can I verify that my site is optimized for efficient crawling?

Use Google Search Console to monitor crawl statistics. If you see a drop in pages crawled per day without changes on your end, that's a warning signal.

Compare the number of URLs crawled against the number of truly useful URLs on your site. If Google crawls 100,000 pages when you only have 10,000 strategic ones, you have a structural efficiency problem.
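This comparison is easy to script once you have two lists: the URLs Googlebot actually requested (from logs) and the URLs you consider strategic (for example, from your sitemap). The sketch below is one possible way to compute it; the `crawl_efficiency` helper and the sample URLs are assumptions for illustration.

```python
def crawl_efficiency(crawled_urls, strategic_urls):
    """Compare crawled URLs against the URLs that actually matter."""
    crawled = set(crawled_urls)
    strategic = set(strategic_urls)
    useful = crawled & strategic
    return {
        # Share of crawled URLs that are strategic (0.0 if nothing was crawled).
        "useful_share": len(useful) / len(crawled) if crawled else 0.0,
        # Crawled but not strategic: candidates for blocking or cleanup.
        "wasted": sorted(crawled - strategic),
        # Strategic but never crawled: candidates for better internal linking.
        "never_crawled": sorted(strategic - crawled),
    }


# Hypothetical inputs.
crawled = ["/", "/products/a", "/products/a?sort=price", "/search?q=a"]
strategic = ["/", "/products/a", "/products/b"]

report = crawl_efficiency(crawled, strategic)
print(report["useful_share"])   # 0.5
print(report["wasted"])         # ['/products/a?sort=price', '/search?q=a']
print(report["never_crawled"])  # ['/products/b']
```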

- Analyze server logs to identify unnecessarily crawled URLs

- Block pages with no SEO value (facets, excessive pagination, internal search) via robots.txt, or keep them out of the index with noindex

- Optimize TTFB and server response speed

- Remove chained redirects

- Monitor crawl statistics in Search Console

- Prioritize strategic page indexation via targeted XML sitemaps

- Regularly clean up obsolete or duplicate content
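For the targeted XML sitemap item in the checklist above, a minimal sketch using Python's standard library could look like this. The URL list and the `build_sitemap` helper are hypothetical; only the `urlset`, `url`, and `loc` elements of the sitemap protocol are used.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap XML string listing only the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


# Hypothetical list of strategic pages; faceted and search URLs are left out.
strategic_pages = [
    "https://www.example.com/",
    "https://www.example.com/products/blue-sneakers",
]

sitemap_xml = build_sitemap(strategic_pages)
print(sitemap_xml)
```

Keeping the sitemap limited to strategic URLs makes it a cleaner signal of what you want crawled, though it does not by itself stop Google from crawling other URLs.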

❓ Frequently Asked Questions

Will Google really reduce crawling of my site for environmental reasons?

Which types of sites are most at risk?

How can I tell whether my crawl budget has decreased?

Should I block low-quality pages to save crawl budget?

Does crawl optimization have a direct impact on rankings?

Source: Google Search Central video, published on 26/07/2022
