Official statement

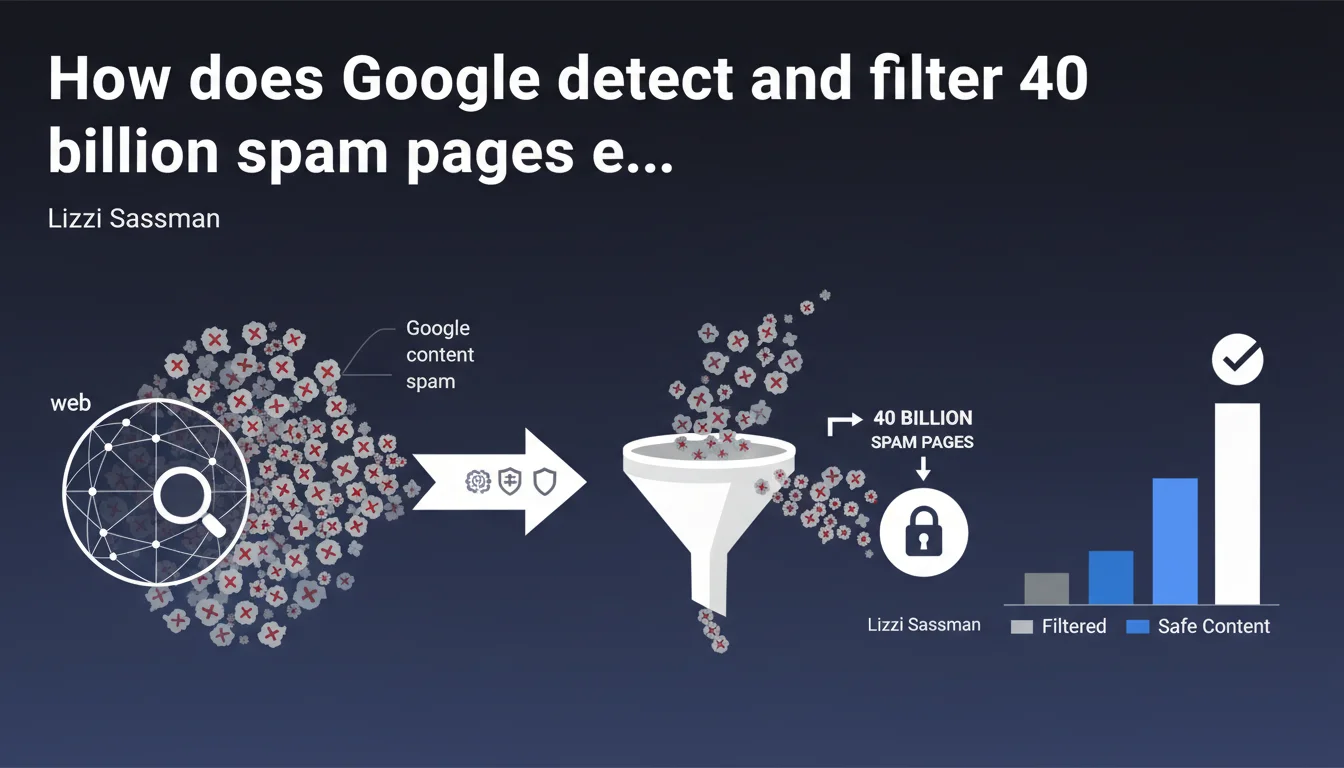

Google detects and filters 40 billion spam pages daily—a figure that illustrates both the massive scale of web spam and the sophistication of the search engine's anti-spam systems. For SEO practitioners, this means that any manipulative technique exposes you to real demotion risk—and quality remains the only sustainable defense.

What you need to understand

What does this 40 billion spam pages per day figure really reveal?

This colossal volume points to two realities that pull in opposite directions. On one hand, web spam remains a thriving industry that produces massive volumes of low-quality content. On the other, Google has built infrastructure capable of processing this scale and filtering spam before it ever affects search results.

Let's be honest: this figure is also a marketing message. Google wants to reassure advertisers and users about its ability to maintain index quality. But it raises a question—if 40 billion spam pages are detected every day, how many slip through the cracks?

What exactly does Google consider "spam"?

Google deliberately keeps this definition vague. Spam can include auto-generated content, link farms, cloaking, deceptive redirects, massive keyword stuffing, doorway pages, and content scraping. More ambiguously, it can also cover "low-value" content that involves no obvious technical manipulation.

This broad definition creates problems. Can an e-commerce site with thousands of similar product pages be considered spam? A blog that republishes syndicated content? The line between aggressive optimization and spam remains murky, and Google never provides precise thresholds.

- 40 billion spam pages detected daily—a volume that illustrates the scale of the problem but also the power of Google's algorithms

- The spam definition remains deliberately broad and encompasses both technical manipulation and "low-value" content

- Detection systems work upstream: the majority of spam never reaches the visible index in search results

- No public threshold for what tips a site from "acceptable" to "spam"—everything is opaque

Does this 40 billion figure include only newly discovered pages?

Probably not. Google speaks of "discovered" pages, which can include pages already known but re-evaluated after modification, pages crawled regularly to verify they haven't become spam, and, obviously, new URLs detected via crawl or sitemaps.

This figure therefore aggregates multiple realities: obvious spam filtered instantly, formerly legitimate content that became spam, and new manipulation attempts. It's not 40 billion new spam sites appearing each day—but 40 billion daily evaluations that conclude "spam."

SEO Expert opinion

Does this statement align with what we observe in practice?

Yes and no. In practice, crude spam attempts fail quickly: low-grade PBNs (private blog networks), auto-generated content farms, and poorly built doorway sites drop out of the index fast. Google's systems are clearly effective against obvious spam.

But, and here's where it gets sticky, sophisticated spam keeps working for a while. Sites with well-packaged AI content, discreet private link networks, or advanced cloaking strategies can stay active for months before detection. The 40 billion figure captures crude spam, not necessarily intelligent spam.

What nuances should we add to this official narrative?

[Requires verification] Google doesn't specify how many false positives are included in these 40 billion. How many legitimate pages are temporarily flagged as spam then rehabilitated? How many e-commerce sites with product variations are wrongly penalized?

Another blind spot: this figure says nothing about detection delay. A spam page that stays active for 3 months before being filtered has had time to generate traffic, backlinks, and revenue. Google may count this page in its 40 billion, but by then it has already done its job.

When does this rule not apply?

Large players clearly benefit from different tolerance. Authority sites with millions of barely differentiated pages (Amazon, eBay, Booking) are never treated as spam, while small sites with 10,000 similar product pages might be.

Similarly, institutional sites, established media, and major UGC platforms (Reddit, Quora) largely escape this anti-spam logic, despite obvious volumes of low-quality content. For Google, spam is also a matter of reputation and implicit trust.

Practical impact and recommendations

What concrete steps should you take to avoid being classified as spam?

The sensible answer: produce content that delivers real added value, avoid obvious manipulative techniques, follow the guidelines. But concretely, this remains vague—and that's exactly the problem.

A few pragmatic rules emerge from field observation. Avoid auto-generated pages published without human review (unless they provide genuine utility, which is possible). Limit doorway pages created solely to rank for specific keywords. Diversify your traffic sources so that you don't depend entirely on Google, which reduces existential risk if you get demoted.

- Regularly audit automatically generated content: if product pages, categories, or landing pages are too similar, consider consolidating or enriching them

- Monitor spam signals in Search Console: manual actions, unexplained exclusions in the indexing report, sudden indexation drops

- Avoid detectable private link networks: IP fingerprints, linking patterns, over-optimized anchor text

- Prioritize editorial depth over volume: better 100 solid pages than 10,000 thin pages

- Test actual added value: if a page can be replaced by another without information loss, it's probably unnecessary

- Document editorial decisions: if penalized, be able to justify why a particular content structure exists
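One way to make the similarity audit and the "replaceable page" test from the list above concrete is to compare pages by word-shingle overlap. The sketch below is illustrative only: the function names, the sample URLs, and the 0.7 threshold are assumptions chosen for the example, not values Google publishes or uses.

```python
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams (shingles) in the text."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(
    pages: dict[str, str], threshold: float = 0.7
) -> list[tuple[str, str, float]]:
    """List URL pairs whose similarity meets the (arbitrary) threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    pairs = []
    for (u1, s1), (u2, s2) in combinations(sets.items(), 2):
        score = jaccard(s1, s2)
        if score >= threshold:
            pairs.append((u1, u2, round(score, 2)))
    # Most similar pairs first: these are the consolidation candidates.
    return sorted(pairs, key=lambda p: p[2], reverse=True)


# Hypothetical page texts, for illustration only.
pages = {
    "/shoes/red": "Lightweight running shoe with breathable mesh upper available in red",
    "/shoes/blue": "Lightweight running shoe with breathable mesh upper available in blue",
    "/guide/sizing": "How to measure your foot at home and pick the right running shoe size",
}

print(near_duplicates(pages))  # [('/shoes/red', '/shoes/blue', 0.78)]
```

Pairs that score high are candidates for consolidation or enrichment. A high score does not mean Google treats the pages as spam, only that one could likely replace the other without much information loss.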

What mistakes should you absolutely avoid?

Don't assume a technique works just because it hasn't been penalized yet. The lag between manipulation and penalty can be long—several months, sometimes a year. During that time, the site generates traffic, creating false confidence.

Another trap: copying big players' strategies. What works for Amazon (millions of quasi-identical product pages) won't work for a niche e-commerce site. Google applies different standards based on trust level, even if it officially claims otherwise.

How can you verify your site isn't considered spam?

Search Console remains your first indicator. Check the "Coverage" report (since renamed "Page indexing") for large exclusions, monitor manual actions, and analyze sudden fluctuations in indexed pages. A sudden drop of 30% or more could signal an algorithmic spam filter.
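The 30% rule of thumb can be checked mechanically once you have a series of indexed-page counts. This minimal sketch assumes you record the counts yourself, for example by noting the figure shown in Search Console each week. The data, the function name, and the default threshold are hypothetical.

```python
def flag_index_drops(
    counts: list[tuple[str, int]], threshold: float = 0.30
) -> list[tuple[str, float]]:
    """Return (date, relative_drop) for each observation that fell by at
    least `threshold` compared with the previous observation."""
    alerts = []
    for (_, prev), (date, curr) in zip(counts, counts[1:]):
        if prev > 0:
            drop = (prev - curr) / prev
            if drop >= threshold:
                alerts.append((date, round(drop, 2)))
    return alerts


# Hypothetical weekly indexed-page counts, recorded by hand.
weekly_counts = [
    ("2022-07-04", 12000),
    ("2022-07-11", 11800),
    ("2022-07-18", 7900),
    ("2022-07-25", 7850),
]

print(flag_index_drops(weekly_counts))  # [('2022-07-18', 0.33)]
```

A flagged week is only a prompt to investigate. Migrations, noindex changes, or canonical cleanups can produce the same pattern without any spam filter being involved.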

Next, run a site:yourdomain.com query in Google. If important pages don't appear, or if the result order seems incoherent, treat that as a warning signal. Compare with Bing: if your site performs well on Bing but collapses on Google, a spam filter is likely.

❓ Frequently Asked Questions

Do the 40 billion spam pages include already indexed pages, or only newly discovered ones?

Can a site be partially classified as spam, or is it all or nothing?

How long does it take Google to detect a new spam page?

Is AI-generated content automatically considered spam?

If my competitor uses spam and ranks, should I do the same?

Other SEO insights extracted from this same Google Search Central video · published on 26/07/2022
