Official statement

Other statements from this video 6 ▾

- □ How does Google actually discover your pages before ranking them?

- □ Is your sitemap really just about URL discovery, or does it do more than Google claims?

- □ Why doesn't an indexed page necessarily appear in Google search results?

- □ Why can an indexed page remain invisible in search results even though Google has crawled it?

- □ Why isn't your indexed content ranking in search results?

- □ Is Google really removing your pages from the index if nobody clicks on them?

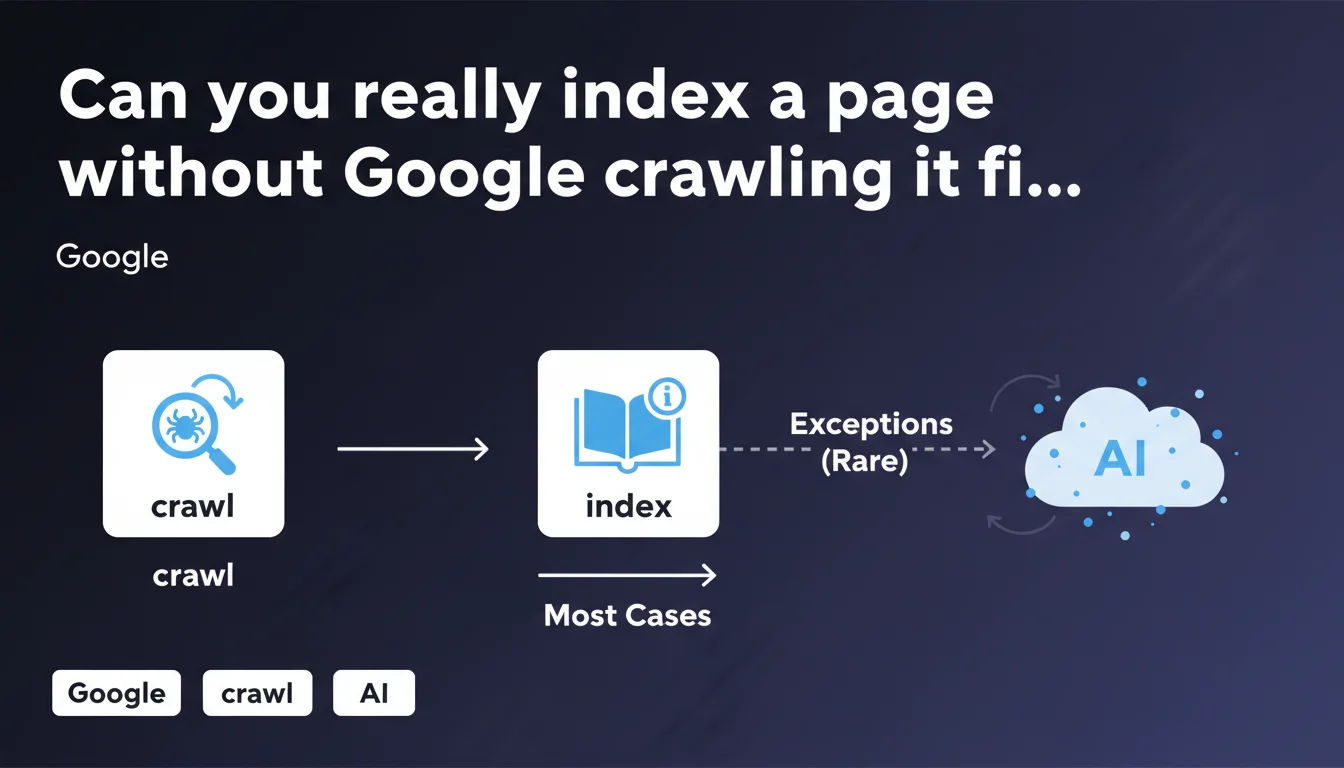

Google claims it must generally crawl a page before indexing it, but acknowledges some exceptions exist. In the majority of cases, crawling precedes indexing — meaning if Googlebot cannot access your content, it won't be indexed. The exceptions remain unclear and Google doesn't detail the contexts where they apply.

What you need to understand

What is the exact relationship between crawling and indexing?

Google establishes here a clear hierarchy: crawling precedes indexing in the majority of cases. Concretely, Googlebot must access the page's content, analyze its HTML, and interpret resources (CSS, JS, images) to understand what it contains.

Without this exploration phase, the search engine has no data to index. This is the foundation of how Google works: no crawl, no visibility in search results.

What are these famous exceptions Google mentions?

Google remains deliberately vague on this point. We know it can index URLs discovered via external backlinks without having visited the page — the URL then appears in the index with a generic snippet.

Another case: pages cited in XML sitemaps can be temporarily indexed before complete crawling. But these situations remain marginal and often temporary — Google generally ends up crawling to obtain complete data.

Why this statement now?

This assertion reminds of a fundamental principle that many sites neglect: optimizing crawl budget and technical accessibility. Too many projects focus on content while forgetting that if Googlebot cannot access it efficiently, everything else is useless.

Google reaffirms that crawling remains the primary bottleneck of indexation. If your important pages aren't crawled regularly, they cannot be properly indexed or updated in search results.

- Crawling precedes indexing in the absolute majority of cases

- Exceptions exist but remain marginal and poorly documented

- Without access to content, Google cannot index properly

- Crawl budget becomes critical on large websites

- Technical issues blocking crawling directly impact visibility

SEO Expert opinion

Does this statement really bring anything new to the table?

Let's be honest: no. Every SEO professional has known for years that crawling precedes indexing. What Google is doing here is reaffirming a basic principle, likely in response to confusion observed among webmasters.

The only interesting point remains this mention of exceptions — but again, nothing concrete. Google provides no precise criteria to identify these particular cases or their actual frequency. [To verify]: what proportion of the time do these exceptions actually occur? Google shares no data.

Are these exceptions exploitable in SEO?

In practical field experience, counting on these exceptions is a risky bet. I've observed cases where Google temporarily indexes a URL discovered via external links, but without complete crawling, the snippet remains generic and rankings are poor.

These partial indexations often disappear during index updates. In other words: even if the exception occurs, it guarantees neither quality nor longevity. No serious professional should build an SEO strategy betting on these marginal cases.

What is the real tactical priority here?

What Google doesn't explicitly say but implies: facilitate crawling. Sites that neglect their technical architecture, server response speed, crawl budget on large site structures — they mechanically lose visibility.

The real message behind this statement: stop focusing solely on content and backlinks. If your technical infrastructure slows down Googlebot, everything else becomes secondary. And that's where many sites struggle.

Practical impact and recommendations

What should you check first on your website?

First step: audit crawl accessibility. Verify that Googlebot can reach your strategic pages without obstacles (robots.txt, chained redirects, 5xx server errors, timeouts). Use server logs to identify which pages Google actually crawls vs those it ignores.

Second point: optimize your internal linking. Orphaned pages or those located more than 4 clicks from the homepage are rarely crawled. A flat and logical architecture makes Googlebot's job easier and accelerates your content's indexation.

Which errors systematically block crawling?

Server response times that are too long (>500ms) drastically slow down crawling. Google allocates a time budget per site — if your server is slow, fewer pages will be explored per session.

Another classic error: poorly managed URL parameters that create infinite duplicate content. Googlebot wastes its budget on unnecessary variations instead of crawling your strategic pages. Use Search Console to identify these traps.

How to prioritize pages for crawling?

The XML sitemap remains your best prioritization tool. Include only your strategic pages (those that drive business), exclude supporting content. Update the <lastmod> tag only during substantial modifications — not with every user session.

Use structured data and internal linking to signal the relative importance of your pages. Google crawls more frequently URLs that many quality internal links point to.

- Check server logs to identify real crawl issues

- Correct 4xx/5xx errors that block access to strategic content

- Optimize server response times (target: <200ms)

- Clean up robots.txt and remove unnecessary blocks

- Eliminate redirect chains (max 1 redirect per URL)

- Restructure internal linking to reduce click depth

- Update XML sitemap including only priority pages

- Monitor Search Console to detect crawl anomalies

Indexation depends directly on Google's ability to crawl your content efficiently. Focus on technical infrastructure: accessibility, server performance, logical architecture. Without these foundations, even the best content will remain invisible.

These optimizations often involve complex technical aspects — server infrastructure, crawl budget management, large-scale log analysis. If your internal team lacks expertise in these areas, engaging a specialized SEO agency can save you months by avoiding costly mistakes and correctly prioritizing projects based on their real impact.

❓ Frequently Asked Questions

Google peut-il indexer une page sans jamais la crawler ?

Combien de temps faut-il à Google pour crawler une nouvelle page ?

Le sitemap XML force-t-il Google à crawler mes pages ?

Pourquoi certaines pages indexées n'apparaissent pas dans mes logs serveur ?

Faut-il bloquer les pages peu importantes pour économiser le crawl budget ?

🎥 From the same video 6

Other SEO insights extracted from this same Google Search Central video · published on 19/03/2025

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.