Official statement

What you need to understand

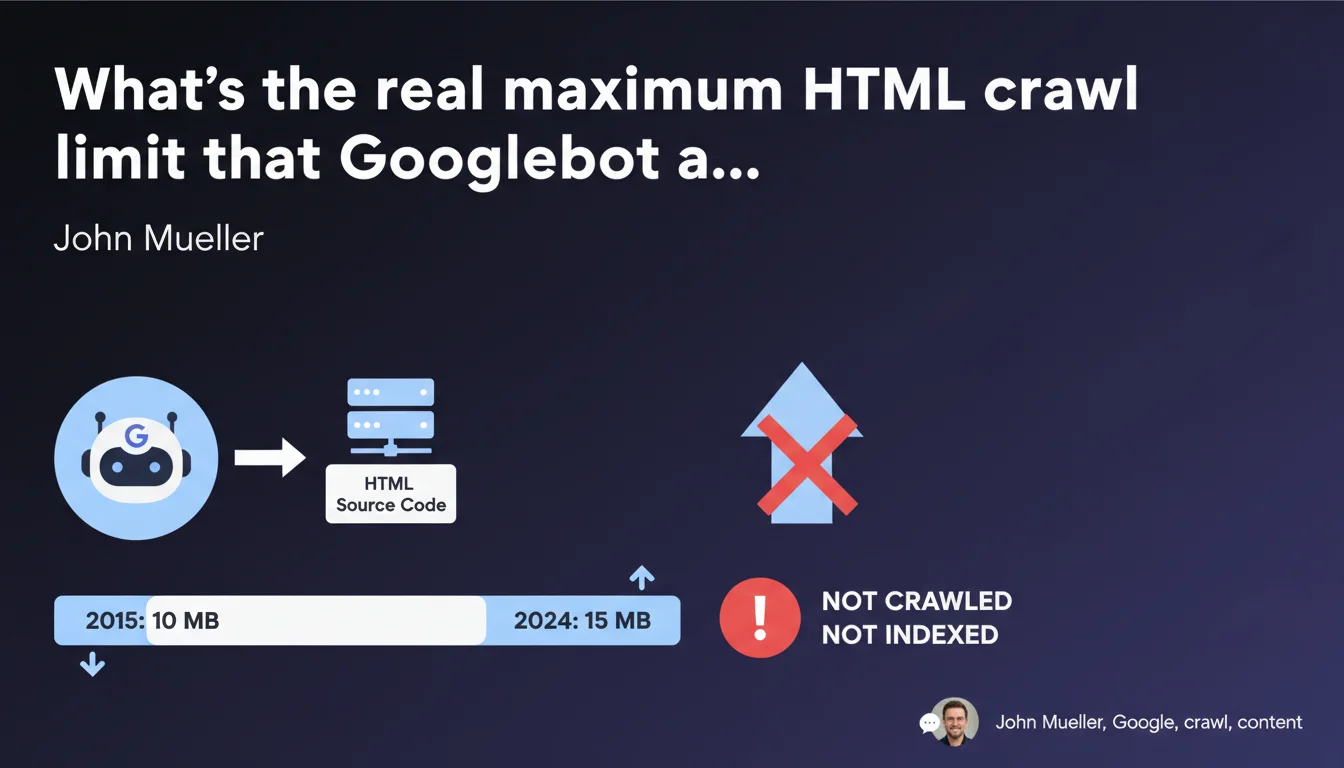

Google has officially raised its HTML crawl limit from 10 MB to 15 MB per page, a 50% increase in the amount of content Googlebot will process for a single file.

Concretely, any HTML file or other text-based file that exceeds this limit will not be crawled or indexed in full. Googlebot stops at 15 MB and ignores everything after that point.
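
As a rough illustration of that cut-off, the sketch below splits a page's raw bytes at the limit and checks whether a given snippet (a footer link, a JSON-LD block) falls entirely inside the part that is kept. It is not Googlebot's actual logic: the function names are hypothetical, and reading "15 MB" as 15 × 1024 × 1024 bytes is an assumption.

```python
# Assumption: "15 MB" is read here as 15 MiB; the exact byte count is not given above.
CRAWL_LIMIT_BYTES = 15 * 1024 * 1024


def split_at_crawl_limit(html_bytes: bytes, limit: int = CRAWL_LIMIT_BYTES) -> tuple[bytes, bytes]:
    """Return (bytes inside the limit, bytes beyond the limit)."""
    return html_bytes[:limit], html_bytes[limit:]


def is_marker_within_limit(html_bytes: bytes, marker: bytes, limit: int = CRAWL_LIMIT_BYTES) -> bool:
    """True if the first occurrence of `marker` ends at or before the limit."""
    index = html_bytes.find(marker)
    return index != -1 and index + len(marker) <= limit


# Tiny demonstration with an artificially small limit of 50 bytes.
page = b"<p>top</p>" + b"x" * 100 + b"<p>bottom</p>"
kept, dropped = split_at_crawl_limit(page, limit=50)
print(len(kept), len(dropped))
print(is_marker_within_limit(page, b"<p>bottom</p>", limit=50))
```

Content placed near the end of a very large page is the most exposed, so a check like this is best run against whatever you most need indexed.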

This limitation primarily concerns pages with particularly large HTML, which remains rare in common web practice. However, certain types of sites may be affected:

- Pages with long dynamically generated content (complete product catalogs, massive listings)

- Sites using heavy JavaScript frameworks that inflate the DOM

- Pages containing excessive structured data or bulky JSON-LD

- Sites with significant inline code (embedded CSS, JavaScript)

- Archive or listing pages without appropriate pagination
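
To see which of these factors actually weighs on a given page, a small script can break the HTML down by inline content type. The sketch below uses Python's standard `html.parser` module; the class and function names are hypothetical, and it only counts the contents of `<style>` and `<script>` elements, not `style="..."` attributes.

```python
from html.parser import HTMLParser


class InlineWeightParser(HTMLParser):
    """Sum the bytes found inside <style>, inline <script> and JSON-LD blocks."""

    def __init__(self) -> None:
        super().__init__()
        self.totals = {"inline_style": 0, "inline_script": 0, "json_ld": 0}
        self._bucket = None  # bucket currently being filled, if any

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "style":
            self._bucket = "inline_style"
        elif tag == "script":
            if "src" in attributes:
                self._bucket = None  # external file, not part of the HTML weight
            elif (attributes.get("type") or "").lower() == "application/ld+json":
                self._bucket = "json_ld"
            else:
                self._bucket = "inline_script"

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._bucket = None

    def handle_data(self, data):
        # May be called several times per element, so accumulate.
        if self._bucket is not None:
            self.totals[self._bucket] += len(data.encode("utf-8"))


def inline_weight(html: str) -> dict[str, int]:
    """Return byte counts per inline category plus the total HTML size."""
    parser = InlineWeightParser()
    parser.feed(html)
    parser.close()
    totals = dict(parser.totals)
    totals["total_html"] = len(html.encode("utf-8"))
    return totals
```

Comparing each category with `total_html` shows quickly whether inline code or structured data is the main contributor, or whether the weight comes from the markup itself.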

This change shows that Google is adapting to the growing complexity of modern websites while keeping a reasonable cap in place to conserve its crawl resources.

SEO Expert opinion

This update is consistent with the evolution of the web and the general increase in page size in recent years. Modern frameworks and complex web applications do indeed generate more code than before.

Nevertheless, the real impact should be kept in perspective: very few sites reach this limit. A typical HTML page weighs between 30 KB and 200 KB, so the limit is roughly 75 to 500 times the size of an ordinary page. Reaching 15 MB really requires a problematic structure or questionable technical choices.

In my experience, the rare pages that exceed this limit usually point to structural problems: missing pagination, duplicated inline content, poor technical architecture. Fixing those issues generally delivers far more than merely staying under the limit.

Practical impact and recommendations

- Audit the HTML weight of your main pages, particularly listings and catalogs (use Chrome DevTools' Network tab)

- Implement robust pagination for all long content (articles, products, archives) instead of loading everything on a single page

- Externalize CSS and JavaScript: avoid massive inline code that unnecessarily inflates the HTML

- Optimize structured data: keep to the essential schema.org markup and avoid duplicating it

- Lazy-load or defer dynamic content rather than including everything in the initial DOM

- Monitor JavaScript frameworks: some generate a bloated DOM that needs to be kept in check

- Check "View Page Source" regularly to verify that your HTML stays a reasonable size (ideally under 1 MB)

- Configure alerts in your monitoring to detect pages exceeding 5 MB (preventive threshold)
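
The audit and alerting steps above can be sketched with the standard library alone. The thresholds come from the recommendations (1 MB ideal, 5 MB preventive alert, 15 MB limit); the function names, the status labels, the user agent string and the reading of 1 MB as 1024 × 1024 bytes are assumptions made for illustration.

```python
import urllib.request
from typing import Callable, Iterable

MB = 1024 * 1024  # assumption: 1 MB is treated as 1 MiB
IDEAL_MAX = 1 * MB         # "ideally under 1 MB"
ALERT_THRESHOLD = 5 * MB   # preventive alert threshold
CRAWL_LIMIT = 15 * MB      # crawl limit discussed in this article


def classify_html_size(size_bytes: int) -> str:
    """Map an HTML size in bytes to a status label (labels are hypothetical)."""
    if size_bytes > CRAWL_LIMIT:
        return "over_limit"
    if size_bytes > ALERT_THRESHOLD:
        return "alert"
    if size_bytes > IDEAL_MAX:
        return "watch"
    return "ok"


def fetch_html_size(url: str, timeout: float = 10.0) -> int:
    """Download a page and return the size of its body in bytes.

    urllib does not ask for compressed responses by default, so this is
    normally the uncompressed size of the HTML.
    """
    request = urllib.request.Request(url, headers={"User-Agent": "html-weight-audit/0.1"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return len(response.read())


def audit(urls: Iterable[str], fetch: Callable[[str], int] = fetch_html_size) -> dict[str, tuple[int, str]]:
    """Return {url: (size_in_bytes, status)} for each URL."""
    report = {}
    for url in urls:
        size = fetch(url)
        report[url] = (size, classify_html_size(size))
    return report
```

Calling `audit()` with a list of your main listing and catalog URLs returns each page's size and status; anything labeled `alert` or `over_limit` is a candidate for pagination or for moving inline code into external files. The `fetch` parameter exists so the size lookup can be swapped out, for example to read sizes from an existing crawl export instead of fetching live pages.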

These technical optimizations often reach deep into the site's architecture and require solid expertise in web development and technical SEO. Analyzing HTML weight, redesigning pagination, and optimizing server-side rendering are complex projects that draw on multiple skills.

For large-scale sites or e-commerce platforms with extensive catalogs, working with a specialized SEO agency provides an in-depth technical audit and personalized support. These experts can pinpoint the sources of HTML bloat and propose solutions adapted to your technical stack, while avoiding pitfalls that could harm your indexing.
