Official statement


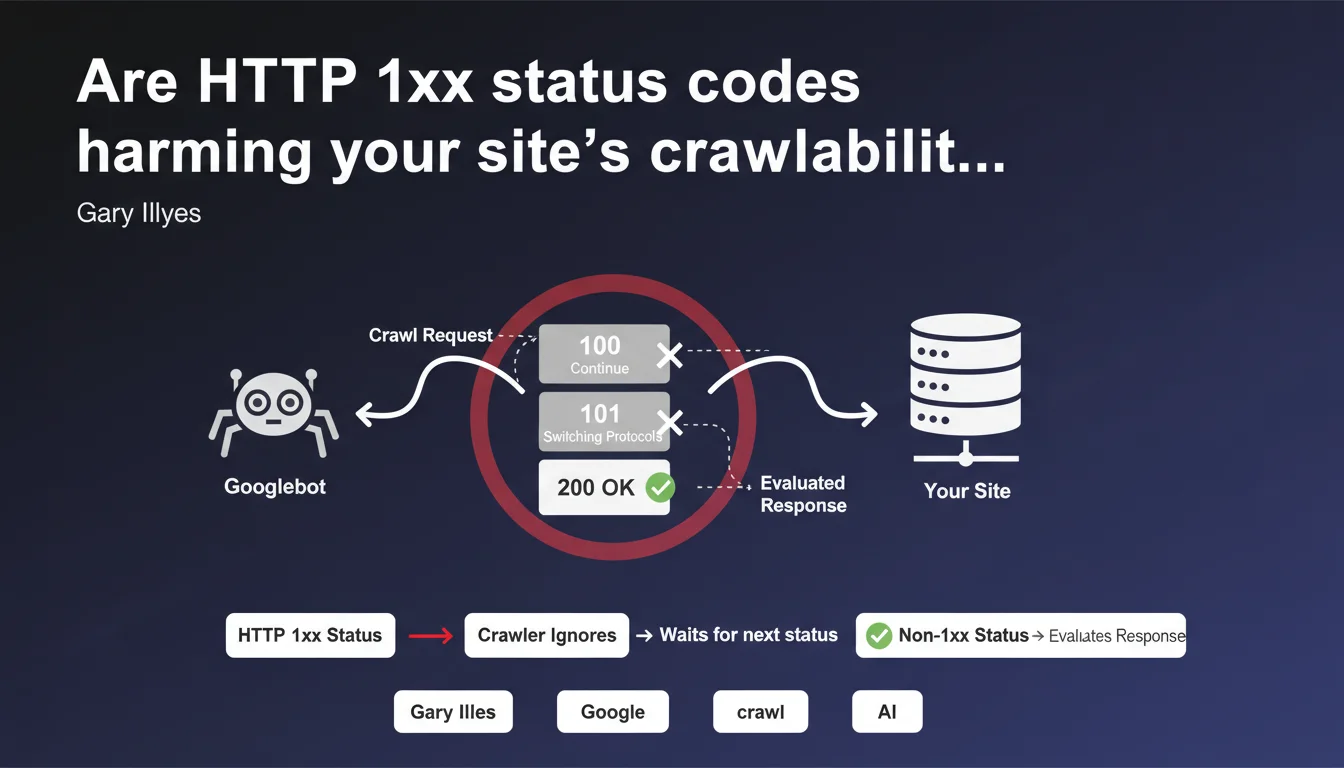

Google completely ignores HTTP 1xx status codes (100 Continue, 101 Switching Protocols, etc.). The crawler simply passes through these intermediate responses without processing them and waits for the next non-1xx status code to evaluate the request. In short: these codes have no impact on SEO, neither positive nor negative.

What you need to understand

What exactly are HTTP 1xx status codes and why do they exist?

HTTP 1xx status codes are informational (interim) responses: temporary messages the server sends before the final response. The best known is 100 Continue. When a client announces a large request with the header Expect: 100-continue, the server can answer "OK, go ahead and send your request body" before it receives and processes the complete request.

101 Switching Protocols, on the other hand, is used to switch from one protocol to another, for example when an HTTP connection is upgraded to WebSocket during the opening handshake. A 1xx code is never the final answer to a request: it only signals that the exchange is still in progress or, in the case of 101, that the connection is about to change protocol.

How does Googlebot react when encountering a 1xx code?

Gary Illyes's statement is crystal clear: Googlebot completely ignores these codes. It doesn't process them, doesn't record them, doesn't interpret them. It simply waits for the final status code (200, 301, 404, etc.) to decide what comes next.

Technically, the crawler passes through these intermediate responses as if it were passing through a transparent curtain. No evaluation, no indexing decision, nothing.
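To make the "wait for the next non-1xx code" behavior concrete, here is a minimal sketch of how a generic HTTP/1.1 client can skip interim responses. It is not Googlebot's actual code, which is not public. The function name final_status and the sample bytes are made up for illustration, and the sketch relies on the fact that 1xx responses carry no body, so the next status line starts right after the blank line.

```python
def final_status(raw: bytes) -> int:
    """Return the first non-1xx status code found in a raw HTTP/1.1 response stream."""
    rest = raw
    while rest:
        # Each response head ends with an empty line (CRLF CRLF).
        head, _, rest = rest.partition(b"\r\n\r\n")
        status_line = head.split(b"\r\n", 1)[0].decode("ascii")
        # A status line looks like: HTTP/1.1 100 Continue
        code = int(status_line.split(" ", 2)[1])
        if code >= 200:
            return code  # final response: this is the only code that gets evaluated
        # 1xx: interim response with no body, so keep reading
    raise ValueError("stream ended without a final status code")


# Hypothetical wire data: a 100 Continue followed by the real answer.
raw = (
    b"HTTP/1.1 100 Continue\r\n\r\n"
    b"HTTP/1.1 200 OK\r\nContent-Length: 2\r\n\r\nok"
)
print(final_status(raw))  # 200
```

The 100 Continue never reaches the caller: only the 200 is returned, which mirrors the idea that the interim code plays no part in any later decision.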

What are the concrete implications for SEO?

If your server sends 1xx codes before responding with a 200, this has no SEO impact. Googlebot ignores them and moves directly to the next code.

- No penalty if your server sends a 100 Continue before a 200 OK

- No crawl slowdown — response time matters, but not the presence of a 1xx code

- No possible optimization through these codes: they are invisible to the search engine

- Only the final status code (200, 301, 404…) matters for indexing and ranking

SEO expert opinion

Does this statement change field practices?

No — and that's precisely what's interesting. Very few sites actively use 1xx codes in their server configurations. Most SEO professionals have never had to worry about them, and this statement confirms that they're right to ignore them.

What deserves attention is that this clarification closes the door on any optimization or manipulation attempt via these codes. There's no point in building an exotic server architecture in the hope of an SEO boost: Google doesn't care.

What gray areas remain in this announcement?

Gary Illyes remains vague on one point: what happens if the server sends a 1xx code and then crashes? Does Googlebot wait indefinitely for the final code, or is there a timeout that triggers a crawl error?

[To verify] The exact behavior when no final code follows a 1xx is not documented. In practice, a standard timeout probably triggers — but Google doesn't explicitly state this.
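As an illustration of the timeout scenario, the sketch below shows how an ordinary HTTP client would behave when a server sends 100 Continue and then goes silent. It says nothing about Googlebot's real timeout policy, which remains undocumented. The function names read_final_status and outcome_for, the 0.2 second timeout, and the use of a local socket pair in place of a real server are all assumptions made for the demonstration.

```python
import socket


def read_final_status(sock: socket.socket, timeout_s: float) -> int:
    """Read from sock until a non-1xx status arrives.

    Raises socket.timeout if the peer stays silent for timeout_s seconds.
    """
    sock.settimeout(timeout_s)  # applies to each recv() call
    buf = b""
    while True:
        while b"\r\n\r\n" not in buf:
            chunk = sock.recv(4096)
            if not chunk:
                raise ConnectionError("closed before a final status code")
            buf += chunk
        head, _, buf = buf.partition(b"\r\n\r\n")
        code = int(head.split(b" ", 2)[1])  # second token of the status line
        if code >= 200:
            return code
        # 1xx: interim response, keep waiting for the final one


def outcome_for(server_bytes: bytes, timeout_s: float = 0.2) -> str:
    """Simulate a server that sends server_bytes and then stays silent."""
    client, server = socket.socketpair()
    try:
        server.sendall(server_bytes)
        return str(read_final_status(client, timeout_s))
    except socket.timeout:
        return "timeout"
    finally:
        client.close()
        server.close()


print(outcome_for(b"HTTP/1.1 100 Continue\r\n\r\n"))
print(outcome_for(b"HTTP/1.1 100 Continue\r\n\r\nHTTP/1.1 200 OK\r\n\r\n"))
```

In this sketch the first call ends in a timeout because no final code ever follows the 1xx, while the second returns the 200. From the client's point of view, a 1xx followed by silence is indistinguishable from any other stalled request.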

A technical detail... or a useful reminder?

This statement seems minor, and for the vast majority of sites it is. But it serves as a reminder of a fundamental SEO rule: only final HTTP status codes (2xx, 3xx, 4xx, 5xx) influence crawler behavior.

Anything related to the internal mechanics of the HTTP protocol (negotiations, intermediate confirmations) is irrelevant to SEO. Google only cares about the final result of the request.

Practical impact and recommendations

Should you modify your server configuration?

No. Unless you're using 1xx codes for specific technical reasons (complex APIs, WebSockets, large requests with 100 Continue), you don't need to change anything.

If your server already sends these codes, they have no negative impact on crawling. If you're not using them, there's no point in adding them.

How to verify that Google is crawling your pages correctly?

Focus on final status codes — the ones that actually matter for indexing. Ignore 1xx codes in your logs: they tell you nothing about Googlebot's behavior.

- Analyze server logs to identify status codes returned to crawlers (200, 301, 404…)

- Use Search Console to detect crawl errors (timeout, 5xx, 4xx)

- Verify that your 301/302 redirects are working correctly — they're what guide the crawler

- If 1xx codes appear in your logs, don't waste time fixing them: Google ignores them

- Focus your efforts on response speed (TTFB) and server stability — not on intermediate codes
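The log analysis step in the checklist above can be sketched as a short script. This is a minimal example, assuming access logs in the common "combined" format. The sample lines, the regular expression, and the function name googlebot_status_counts are illustrative, and matching on the user-agent string alone does not prove that a hit really came from Google, since that string can be spoofed.

```python
import re
from collections import Counter

# Combined log format: ... "GET /path HTTP/1.1" 200 1234 "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_status_counts(lines):
    """Count final status codes by class (2xx, 3xx, ...) for Googlebot hits."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            counts[match.group("status")[0] + "xx"] += 1
    return counts


# Made-up sample lines for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE = [
    f'66.249.66.1 - - [15/May/2025:10:00:00 +0000] "GET / HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [15/May/2025:10:00:02 +0000] "GET /old HTTP/1.1" 301 0 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [15/May/2025:10:00:05 +0000] "GET /missing HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [15/May/2025:10:00:07 +0000] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

for status_class, hits in sorted(googlebot_status_counts(SAMPLE).items()):
    print(status_class, hits)
```

Grouping by class keeps the report focused on what matters for indexing: a growing share of 4xx or 5xx responses served to the crawler is a signal worth investigating.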

What long-term strategy should you adopt?

Simplify your HTTP architecture as much as possible. The fewer steps between the request and final response, the better — for both performance and maintainability.

If you manage a complex site with custom server configurations, ensure that final status codes are consistent and predictable. That's what Google analyzes, period.

This statement confirms that HTTP 1xx codes are invisible to Googlebot. No changes needed to your SEO practices: focus on final codes (2xx, 3xx, 4xx, 5xx), optimize response speed, and ensure a stable server configuration.

If you manage complex technical infrastructure with advanced HTTP architectures, these optimizations may require in-depth auditing and careful adjustments. Working with a specialized SEO agency lets you benefit from personalized support to audit your logs, identify critical crawl errors, and stabilize your status codes across your entire architecture.

❓ Frequently Asked Questions

Can a 100 Continue code slow down Google's crawl?

Should you disable 1xx codes to improve SEO?

Do 1xx codes appear in Search Console reports?

Can a server that sends a 101 Switching Protocols be penalized?

🎥 From the same video 4

Other SEO insights extracted from this same Google Search Central video · published on 15/05/2025
